When I first heard about Mira Network, I honestly thought I already knew how the story would go. Another AI project. Another token. Another promise to “solve hallucinations.” I’ve seen that pattern enough times to be skeptical by default.

But the more I looked into it, the more I felt something different. Mira isn’t really trying to compete with the biggest AI labs like OpenAI or Google DeepMind. They’re not trying to train the largest model or chase the next benchmark score. They’re questioning something more uncomfortable.

What if AI isn’t lacking intelligence anymore? What if it’s lacking trust?

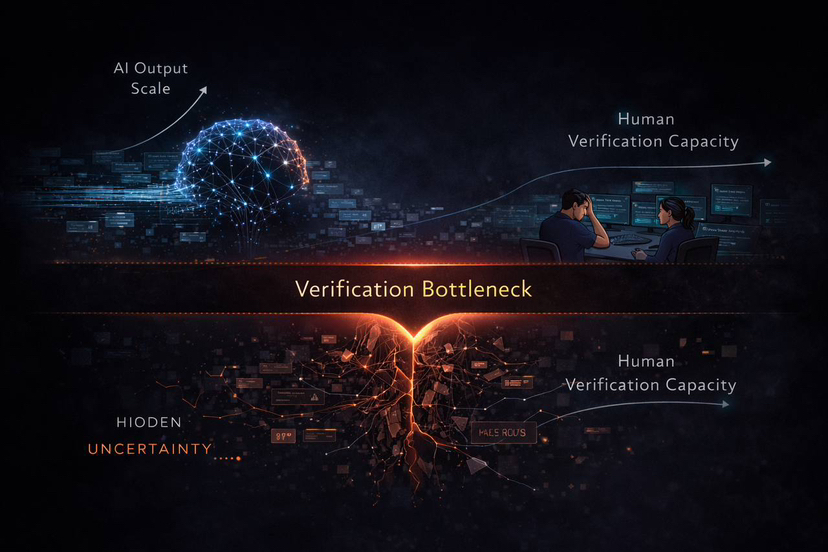

We’re living in a strange moment. AI systems can write essays, generate code, analyze markets, and explain complex topics in seconds. They sound confident. They sound structured. They sound right. And sometimes they are. But sometimes they’re not — and that’s where things get complicated.

The early versions of AI made obvious mistakes. You could catch them easily. Now the mistakes are subtle. A made-up citation. A slightly distorted explanation. A confident answer built on a weak assumption. The output looks professional even when the foundation isn’t solid. Once it gets this hard to tell the difference between truth and a very convincing error, the problem isn’t intelligence. It’s verification.

That’s where Mira enters the picture.

Instead of asking how to build a perfect model, they’re asking how to build a system where AI outputs are checked in a structured, scalable way. Not by a single authority. Not by random users in comment sections. But by a network designed specifically for verification.

The core idea is surprisingly simple. When an AI produces an output, that output can be broken into individual claims or reasoning steps. Those claims are sent to a decentralized network of verification nodes, which evaluate whether each one holds up. Nodes stake capital to participate. If their verdicts are accurate and line up with the network’s consensus, they earn rewards. If they verify poorly, they lose stake.
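To make that loop concrete for myself, I wrote a toy version of it. This is only a sketch under my own assumptions, not Mira's actual protocol: the stake-weighted majority vote, the `reward_rate` and `slash_rate` values, and the names `Node` and `settle_claim` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    stake: float


def settle_claim(nodes, votes, reward_rate=0.05, slash_rate=0.10):
    """Settle one claim by stake-weighted majority vote (an assumed rule).

    votes maps node name -> True (claim holds) or False (claim fails).
    Nodes that match the consensus gain stake; the rest are slashed.
    """
    weight_true = sum(n.stake for n in nodes if votes[n.name])
    weight_false = sum(n.stake for n in nodes if not votes[n.name])
    consensus = weight_true > weight_false  # a tie rejects the claim

    for n in nodes:
        if votes[n.name] == consensus:
            n.stake += n.stake * reward_rate  # hypothetical reward
        else:
            n.stake -= n.stake * slash_rate   # hypothetical penalty
    return consensus


nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
votes = {"a": True, "b": True, "c": False}
verdict = settle_claim(nodes, votes)
```

In this run, nodes `a` and `b` agree with the majority and grow their stake, while `c` loses part of its stake. Notice that the sketch rewards agreement with consensus, not agreement with ground truth. The network can only measure the former, which is exactly why the design depends on consensus usually being right.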

It sounds like a typical crypto mechanism at first. But when you think about it, it’s doing something deeper. It’s introducing economic accountability into AI reasoning.

Proof-of-work blockchains like Bitcoin use computation to secure a ledger. Miners burn energy solving puzzles. Mira flips that logic. Instead of solving arbitrary cryptographic puzzles, nodes evaluate information. Reasoning itself is the form of work.

That design choice matters. It means the network’s “security” is tied to how well it can evaluate truth, not how much energy it can burn.

What really stayed with me is this: AI systems don’t experience consequences. A model can produce an incorrect answer and move on instantly. There’s no cost embedded in the output itself. Humans, on the other hand, operate under accountability. Scientists face peer review. Investors risk capital. Analysts are judged on their track records.

Mira is trying to bring a version of that accountability into machine reasoning.

Of course, it’s not perfect. One concern that kept coming back to me is bias. If multiple AI models are trained on similar data, they may share the same blind spots. Consensus doesn’t automatically equal truth. Agreement can sometimes mean coordinated error. The team seems aware of this and emphasizes diversity among verification models, but true independence is hard to guarantee.
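A bit of arithmetic shows why independence matters so much here. The sketch below assumes a simplified model that I made up for illustration: every verifier is correct with the same probability `p`, the network takes a simple majority, and `n` is odd. The function name `majority_accuracy` is hypothetical.

```python
from math import comb


def majority_accuracy(n: int, p: float) -> float:
    """Chance that a strict majority of n independent verifiers is correct,
    when each verifier is correct with probability p (n should be odd)."""
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


p = 0.8
independent = majority_accuracy(5, p)  # five verifiers that err independently
correlated = p                         # five verifiers that always err together
print(f"independent: {independent:.5f}, fully correlated: {correlated:.5f}")
```

Under these assumptions, five independent verifiers at 80% accuracy reach about 94% as a group. Five verifiers that share every blind spot behave like a single one and stay at 80%. Real networks sit somewhere in between, which is why model diversity matters more than node count.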

There’s also the practical question of speed. If verification slows down responses too much, developers may choose convenience over certainty. And then there’s the philosophical limit: not every real-world decision can be reduced to a simple true-or-false claim. Legal arguments, medical advice, financial strategies — they all involve nuance, context, and interpretation.

So no, Mira isn’t a magic solution. It doesn’t eliminate uncertainty. It doesn’t remove the need for human judgment entirely. But it shifts the conversation in a way that feels important.

Instead of asking, “How do we make AI smarter?” it asks, “How do we make AI accountable?”

That’s a different direction.

When I zoom out, Mira feels less like a product and more like a position. It’s a bet against the idea that one dominant AI model should define truth for everyone. Instead, it supports a world where intelligence is distributed and constantly reviewed by other intelligent systems.

That actually mirrors how human knowledge works. Truth doesn’t come from a single mind. It emerges from debate, disagreement, and repeated checking. Mira is trying to mechanize that process at machine scale.

If it is widely adopted, we’re not just looking at another crypto protocol. We’re looking at an early version of a distributed reasoning layer for the internet. A layer that sits quietly underneath applications, verifying outputs while most users never even notice.

And that’s what makes it interesting to me. The most powerful infrastructure is often invisible.

After spending time studying it, I don’t walk away thinking Mira is flawless. I see technical risks. I see adoption challenges. I see open questions about bias and latency.

But I also see a project asking the right question at the right time.

We’re seeing intelligence become abundant. Models are getting stronger, faster, more capable every month. But trust? Trust still feels expensive.

If Mira succeeds, it won’t be because it built the smartest model in the world. It will be because it recognized that in a world overflowing with answers, the rarest resource isn’t information.

It’s confidence in which answers we can actually rely on.

And maybe that’s the real frontier of AI.