Last month I watched a friend rely completely on an AI agent to manage a small crypto portfolio. Not just research. The agent was set up to rebalance, rotate between sectors, even draft posts explaining its own decisions. It felt efficient. Clean. Almost impressive. But when one of the summaries confidently cited a metric that didn’t exist, the mood shifted. The trade itself was fine. The explanation wasn’t. And that small crack made everything else feel less solid.

That’s the quiet tension inside autonomous AI apps. They don’t just suggest anymore. They act. They publish. They execute. Once you remove the human checkpoint, you’re not dealing with a chatbot. You’re dealing with a system that can move money, shape narratives, or influence decisions at scale. The intelligence might be probabilistic — meaning it works on likelihood, not certainty — but the consequences are very real.

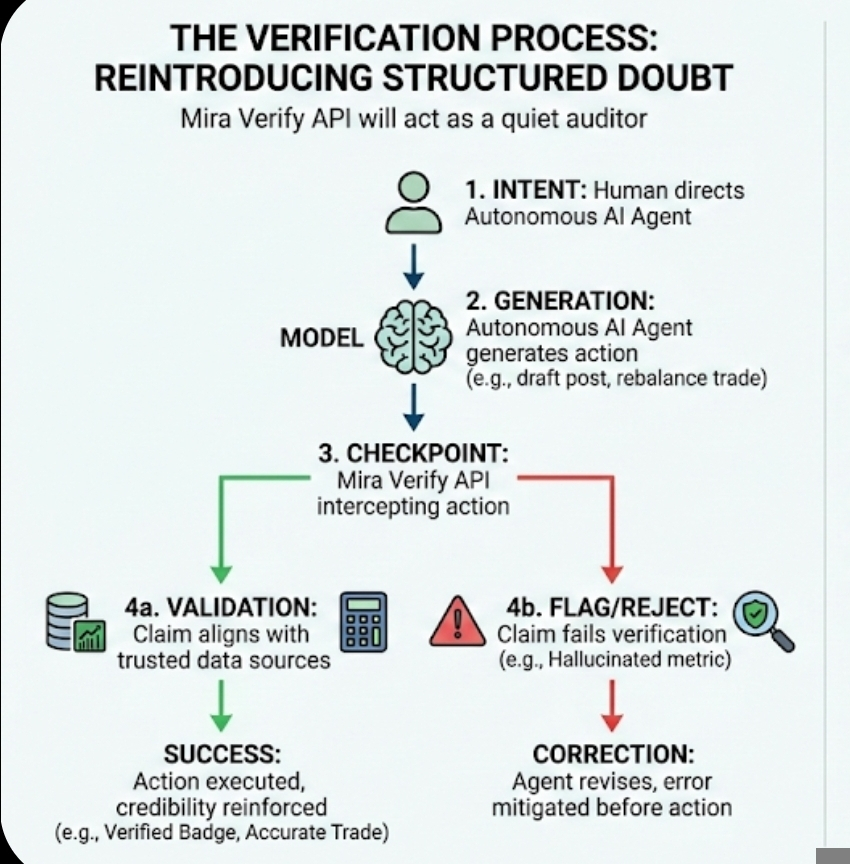

The Mira Verify API enters that uncomfortable space between output and action. An API, in simple terms, is just a connection point that lets different systems talk to each other. Mira’s role is not to generate answers. It listens after the answer is generated. It checks the claim before the claim travels further. Think of it less like a teacher grading an essay and more like a quiet auditor standing behind the machine.

What makes this interesting to me is not the technical architecture. It’s the psychological shift. Most AI systems are designed to sound confident. They don’t hesitate. They don’t say, “I’m 62 percent sure.” They speak in full sentences. A verification layer changes that tone indirectly. It forces the system to earn certainty instead of projecting it.

The process itself is straightforward on paper. An autonomous app generates a statement. That statement is compared against trusted data sources, predefined rules, or distributed validators. If the claim aligns, it moves forward. If it doesn’t, it gets flagged or rejected. Nothing revolutionary there. But the simplicity hides something deeper. The system assumes error is normal.
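
To make that flow concrete, here is a minimal sketch in Python. It is not Mira's actual interface, which I haven't seen documented in detail. The `Claim` type, the `TRUSTED` table, the metric name, and the 5 percent tolerance are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    PASS = "pass"
    FLAG = "flag"
    REJECT = "reject"


@dataclass(frozen=True)
class Claim:
    metric: str   # name of the quantity the agent is citing
    value: float  # value the agent asserted for it


# Hypothetical reference data; a real system would query live trusted sources.
TRUSTED = {"btc_24h_change_pct": 2.0}


def verify(claim: Claim, tolerance: float = 0.05) -> Verdict:
    """Compare a claim against reference data before it moves forward."""
    reference = TRUSTED.get(claim.metric)
    if reference is None:
        # The cited metric is unknown to every trusted source: reject it.
        return Verdict.REJECT
    # Relative error, guarded against division by a zero reference value.
    error = abs(claim.value - reference) / max(abs(reference), 1e-9)
    return Verdict.PASS if error <= tolerance else Verdict.FLAG
```

A claim that cites a metric nobody tracks, like the one in my friend's summary, would come back as `Verdict.REJECT` here. A known metric with a value that drifts too far from the reference would come back as `Verdict.FLAG` instead of being published.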

That assumption matters.

For years, the dominant narrative around AI has been improvement through scale. Bigger models, larger datasets, more parameters. Parameters are the internal weights a model uses to make predictions. The higher the count, the more complex the pattern recognition. But complexity does not equal accountability. You can scale prediction, but you cannot scale trust the same way.

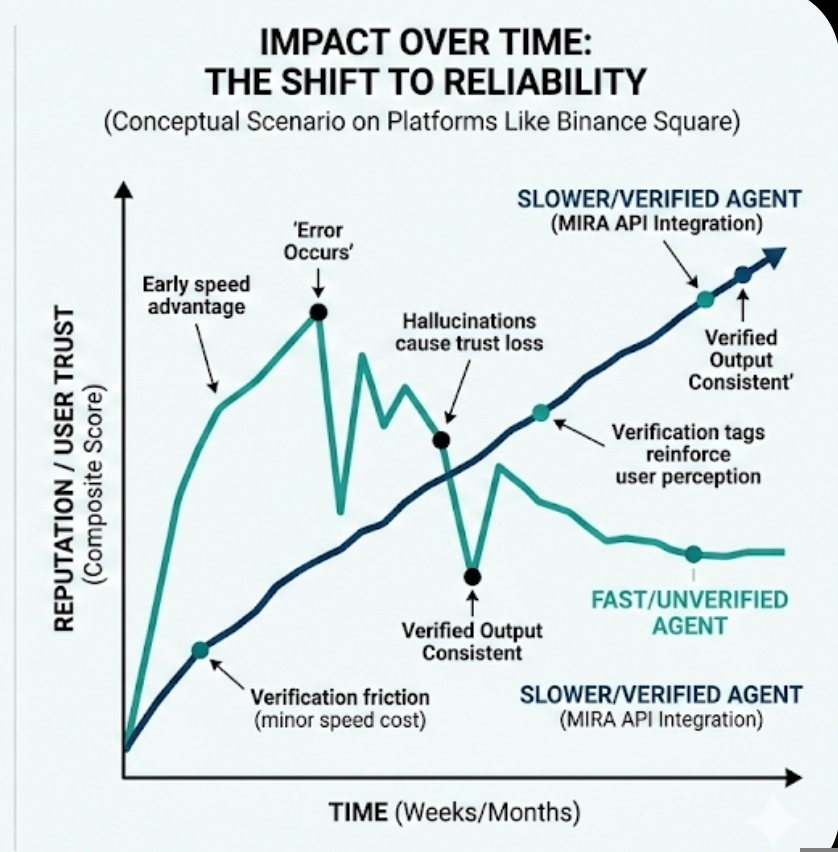

On platforms like Binance Square, credibility already works in layers. Visibility metrics, ranking systems, engagement dashboards — they all quietly reward certain behaviors. A post that receives strong interaction gets pushed further. An analyst with consistent calls gains followers. Over time, reputation becomes a currency of its own. If verified AI outputs start carrying visible reliability indicators, that changes the game. Not loudly. Gradually.

Imagine two AI-driven accounts posting market breakdowns. One is fast and dramatic. The other is slightly slower because its claims pass through a verification check first. At first, speed wins. It always does. But if traders start noticing that the slower account is consistently accurate, and the verification tag reinforces that perception, the attention might shift. On Binance Square, where influence compounds through exposure, small credibility advantages can snowball.

There’s another angle that doesn’t get discussed enough. Developers behave differently when they know their outputs will be checked. If you’re building an autonomous trading bot that routes through a verification API, you won’t allow it to make sweeping macro predictions without data anchors. You’ll narrow the scope. You’ll define clearer rules. Verification doesn’t just filter results; it shapes how systems are designed from the start.

Still, there are trade-offs. Verification introduces friction. Autonomous systems are loved for their speed. Adding an external check, even if it takes milliseconds, changes timing. In high-frequency trading environments, milliseconds are not abstract. They are competitive edges. So developers will need to decide where verification is non-negotiable and where risk tolerance allows more autonomy.
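
One way to picture that decision is a small policy function that says which actions must wait for a check. This is only a sketch. The action names, the `ALWAYS_VERIFY` set, and the 10,000 USD threshold are hypothetical values I picked for illustration, not anything a real platform prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str            # e.g. "publish_post", "rebalance", "quote_update"
    notional_usd: float  # capital at risk; 0 for non-trading actions


# Hypothetical policy values, chosen only for illustration.
ALWAYS_VERIFY = {"publish_post", "rebalance"}
NOTIONAL_LIMIT_USD = 10_000.0


def must_verify(action: Action) -> bool:
    """Return True when the action should block on a verification check."""
    if action.kind in ALWAYS_VERIFY:
        # Public statements and portfolio moves are treated as non-negotiable.
        return True
    # Latency-sensitive actions skip the check only while the stake is small.
    return action.notional_usd >= NOTIONAL_LIMIT_USD
```

Under these assumptions a small, latency-sensitive quote update goes straight through, while a post or a large order waits for the second look.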

And verification is only as good as its references. If the underlying dataset is outdated or biased, the system may confidently confirm something that is technically consistent but practically misleading. That’s uncomfortable. We like the idea of a clean “verified” badge. Reality is messier. Data sources disagree. Markets move. Regulations evolve. A verification layer has to remain transparent about what it checks against and why.
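
A rough sketch of what that transparency could look like: instead of a bare badge, the check reports which source it used and how old that source is. The `Reference` type, the source name, and the age limit below are assumptions for illustration only.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Reference:
    source: str      # hypothetical name of the dataset that was consulted
    value: float     # reference value the claim was compared against
    as_of: datetime  # when the reference value was last updated


def is_stale(ref: Reference, now: datetime, max_age: timedelta) -> bool:
    """A reference older than max_age should not back a clean badge."""
    return now - ref.as_of > max_age


def describe(ref: Reference, now: datetime, max_age: timedelta) -> str:
    """Say what the claim was checked against and how fresh that data is."""
    status = "stale" if is_stale(ref, now, max_age) else "fresh"
    return f"checked against {ref.source} as of {ref.as_of.isoformat()} ({status})"
```

The point of the design is that a reader sees the provenance and the age of the data next to the verdict, so a technically consistent but outdated confirmation is at least visible as such.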

I also wonder about concentration risk. If too many autonomous apps rely on a single verification infrastructure, that layer becomes critical infrastructure. What happens if it fails? Or worse, if it becomes opaque? Trust can centralize quietly. And once centralized, it’s hard to question.
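
One possible hedge against that concentration, sketched below, is to ask several independent verifiers and require a quorum. This is my own illustration, not a description of how Mira works. The `Verifier` signature and the `min_votes` default are hypothetical.

```python
from typing import Callable, Iterable, Optional

# A verifier returns True or False, or None when it is unavailable.
Verifier = Callable[[str], Optional[bool]]


def quorum_verdict(claim: str, verifiers: Iterable[Verifier], min_votes: int = 2) -> str:
    """Combine independent verifiers so no single one decides alone."""
    votes = [v for v in (verify(claim) for verify in verifiers) if v is not None]
    if len(votes) < min_votes:
        # Too few verifiers answered: refuse to label the claim either way.
        return "unverified"
    approvals = sum(votes)
    # A strict majority of the responding verifiers must approve.
    return "pass" if approvals * 2 > len(votes) else "reject"
```

If one verifier goes down, the others still answer. If too many go down, the claim is marked "unverified" rather than silently passed.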

But here’s what I keep coming back to. Humans rarely trust a single source without hesitation. We cross-check headlines. We ask colleagues. We double-read contracts before signing. We’ve built social systems around layered confirmation because experience taught us that confidence alone is not proof. Autonomous AI systems skipped that social habit. They jumped straight to action.

Mira Verify API feels like an attempt to reintroduce that missing habit — structured doubt. Not dramatic skepticism. Just a pause. A quiet second look before execution.

For autonomous financial apps, especially those interacting with real capital or public platforms like Binance Square, that pause could matter more than incremental model improvements. Bigger models will continue to emerge. Faster responses will always attract attention. But over time, markets tend to reward reliability. Not instantly. Not emotionally. Just steadily.

I don’t see verification as a cure for AI’s weaknesses. It won’t eliminate hallucinations completely. It won’t make autonomous systems morally aware or strategically wise. What it does is reduce blind spots. It creates a layer where claims must align with reality before they influence it.

And maybe that’s the more mature stage of AI adoption. Not chasing perfection. Not pretending intelligence equals truth. Just building systems that accept uncertainty and design around it. Quietly. Without spectacle.

Mira Verify API: The Missing Layer for Autonomous AI Apps