For years, we measured AI by how convincing it sounded. If the answer was smooth, structured, and confident, we assumed it was correct. Fluency became our shortcut for accuracy. Most of the time, that shortcut felt safe. But the moment AI stepped into high-stakes environments, we realized something uncomfortable: sounding right and being right are not the same thing.

AI systems are designed to generate plausible responses, not to verify truth. When they’re wrong, they don’t hesitate or warn you. They deliver errors with the same confidence as facts. That isn’t a flaw in personality; it’s a limitation in design. And when decisions affect finance, law, medicine, or infrastructure, that limitation becomes a real risk.

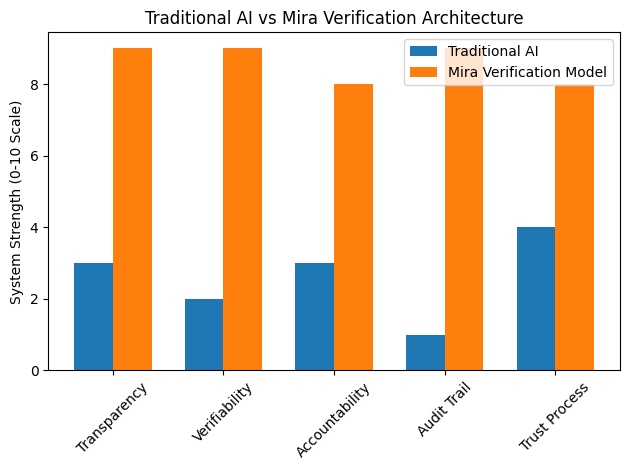

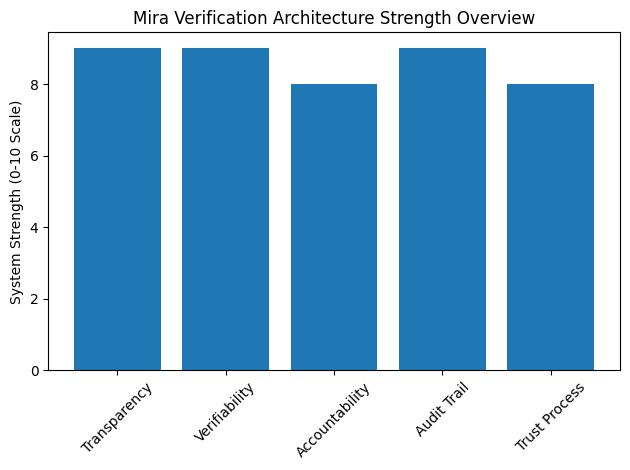

Mira approaches this differently. Instead of treating AI output as a final answer, it treats it as a hypothesis. Each response is broken into individual claims that can be independently checked. A network of verifiers evaluates those claims, and a claim is accepted by consensus among them rather than on the word of any single authority.
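
To make the idea concrete, here is a minimal Python sketch of the general pattern: split a response into claims, let several independent verifiers vote on each one, and accept a claim only if enough of them agree. This is an illustration, not Mira's actual protocol. The names `split_into_claims`, `verify_response`, and `ClaimResult`, the sentence-based splitting, and the two-thirds threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical interface: a verifier is any function that judges one claim.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: List[bool]   # one vote per verifier, kept as an audit trail
    accepted: bool      # True if the share of "yes" votes met the threshold


def split_into_claims(response: str) -> List[str]:
    # Naive stand-in for claim extraction: treat each sentence as one claim.
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_response(
    response: str,
    verifiers: List[Verifier],
    threshold: float = 2 / 3,  # assumed consensus threshold, not a documented value
) -> List[ClaimResult]:
    results = []
    for claim in split_into_claims(response):
        votes = [verify(claim) for verify in verifiers]
        accepted = sum(votes) / len(votes) >= threshold
        results.append(ClaimResult(claim, votes, accepted))
    return results


# Toy verifiers: two check against a small fact set, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def fact_checker(claim: str) -> bool:
    return claim in KNOWN_FACTS


def always_agrees(claim: str) -> bool:
    return True


if __name__ == "__main__":
    answer = "Paris is the capital of France. The Moon is made of cheese."
    for r in verify_response(answer, [fact_checker, fact_checker, always_agrees]):
        print(r.accepted, r.votes, r.claim)
```

In this toy run, the first claim gets three "yes" votes and is accepted, while the second gets only one of three and is rejected. The stored `votes` list is what lets a reader see how each claim was tested rather than taking the verdict on faith.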

The result isn’t just an answer. It’s an answer with proof: a transparent trail showing which claims were checked and how the verifiers judged them. That shift from blind trust to procedural trust is what makes accountability possible.

@Mira - Trust Layer of AI #Mira $MIRA