When I first understood how Mira works, one idea immediately stood out to me:

Mira doesn’t treat AI inference as trustworthy unless it’s backed by stake.

That may sound simple, but it actually changes how AI networks behave at a fundamental level.

Most AI systems today run on blind execution.

A model produces an output, and users just assume it’s correct or at least honest.

There’s no cost for being careless, wrong, or even intentionally misleading.

Mira questions that assumption.

It asks something deeper:

Why should anyone trust an AI output if the provider has nothing at risk?

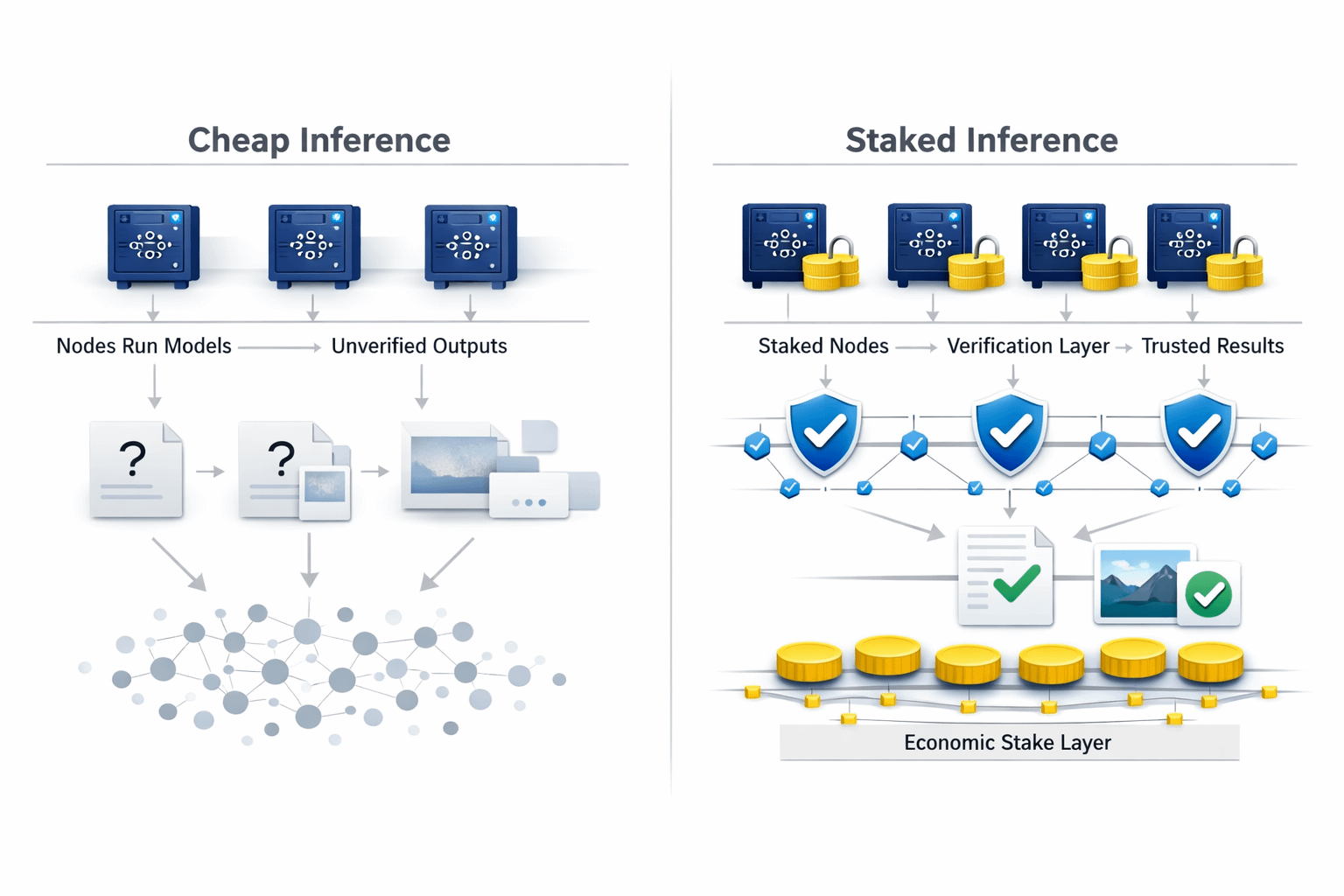

What I’ve noticed across a lot of AI infrastructure and decentralized compute networks is that inference is usually treated like a simple commodity. Nodes run models, produce outputs, and get paid, and that’s pretty much where the story ends. There’s rarely any real accountability tied to how honest or high-quality that computation actually is. If a node cuts corners, swaps in a weaker model, or just returns low-effort results, the system often can’t tell. And even when it can, the economic impact on that node is usually minimal. That’s the part that feels fundamentally different in Mira’s design to me: here, computation isn’t just performed; the provider has value at risk behind it.

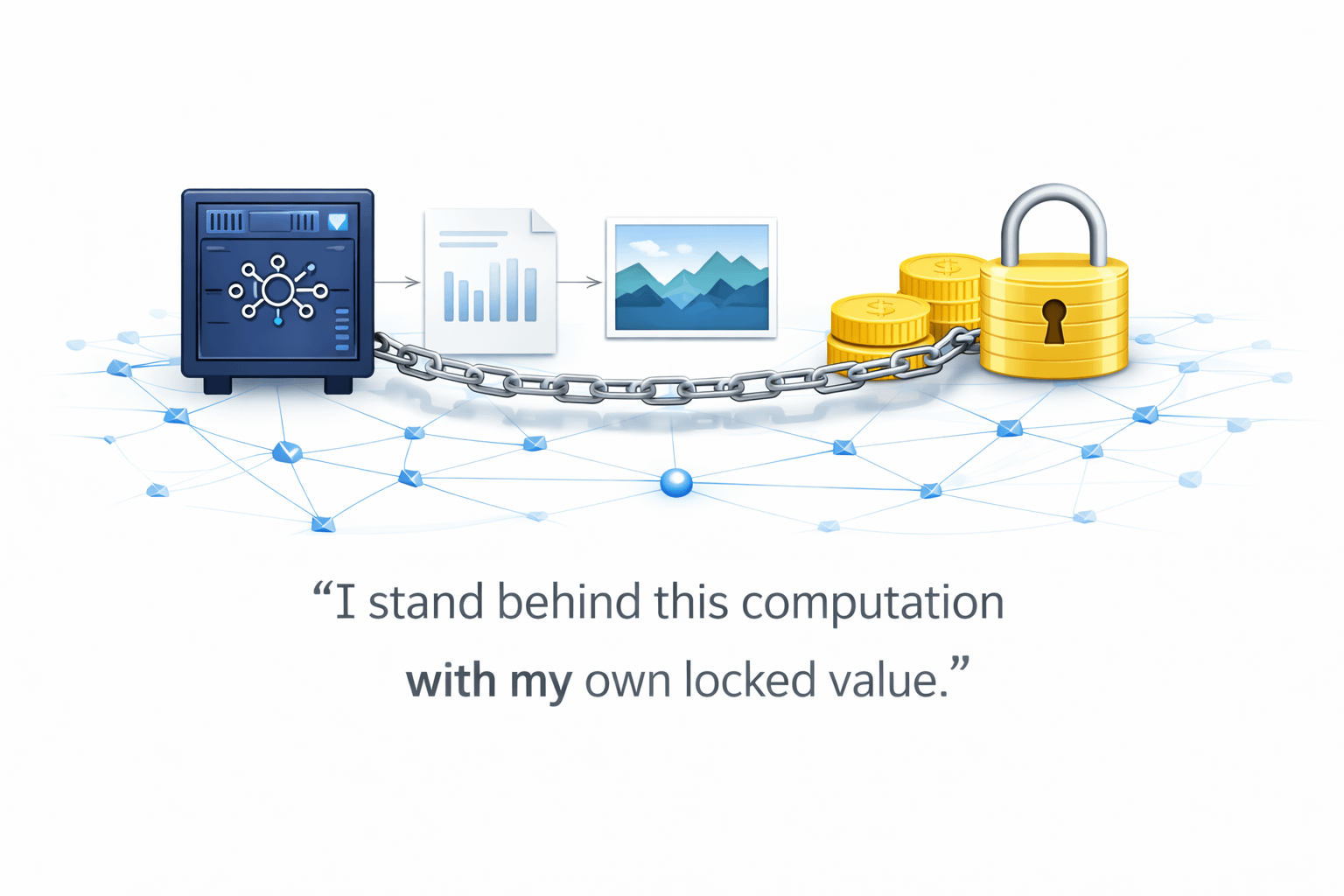

What really defines Mira’s approach for me is this simple shift: if you compute, you commit. In Mira, inference providers are required to stake value behind the computations they perform. So when a node runs a model and returns an output, it’s not just sending back a result; it’s effectively putting its own locked value behind the correctness of that computation. That alone changes behavior immediately. Inference stops being free execution and becomes economically exposed execution. If a node delivers dishonest, low-quality, or manipulative results, there’s something real at risk on its side, and that risk is exactly what pushes the system toward honest computation by design rather than by assumption.
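To make "if you compute, you commit" concrete, here is a minimal sketch of how a staked provider might be settled after a job. Everything in it is an assumption for illustration: the `Provider` class, the `settle` function, the fee, and the slash fraction are hypothetical names and numbers, not Mira's actual data model or parameters.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    """Hypothetical inference node with locked stake (illustrative only)."""
    name: str
    stake: float          # value locked behind the node's outputs
    earned: float = 0.0   # fees collected for accepted outputs


def settle(provider: Provider, output_ok: bool, fee: float, slash_fraction: float) -> float:
    """Pay the fee for an accepted output, or slash part of the stake for a rejected one.

    Returns the change in the provider's total value (stake + earned).
    """
    if output_ok:
        provider.earned += fee
        return fee
    penalty = provider.stake * slash_fraction
    provider.stake -= penalty
    return -penalty


node = Provider(name="node-a", stake=1000.0)

# An accepted output earns a small fee.
honest_delta = settle(node, output_ok=True, fee=2.0, slash_fraction=0.25)

# A rejected output costs a quarter of the locked stake (assumed rate).
cheat_delta = settle(node, output_ok=False, fee=2.0, slash_fraction=0.25)

print(honest_delta, cheat_delta, node.stake)
```

The point of the sketch is the asymmetry: with these assumed numbers, one rejected output wipes out the fees from many honest ones, which is what "economically exposed execution" means in practice.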

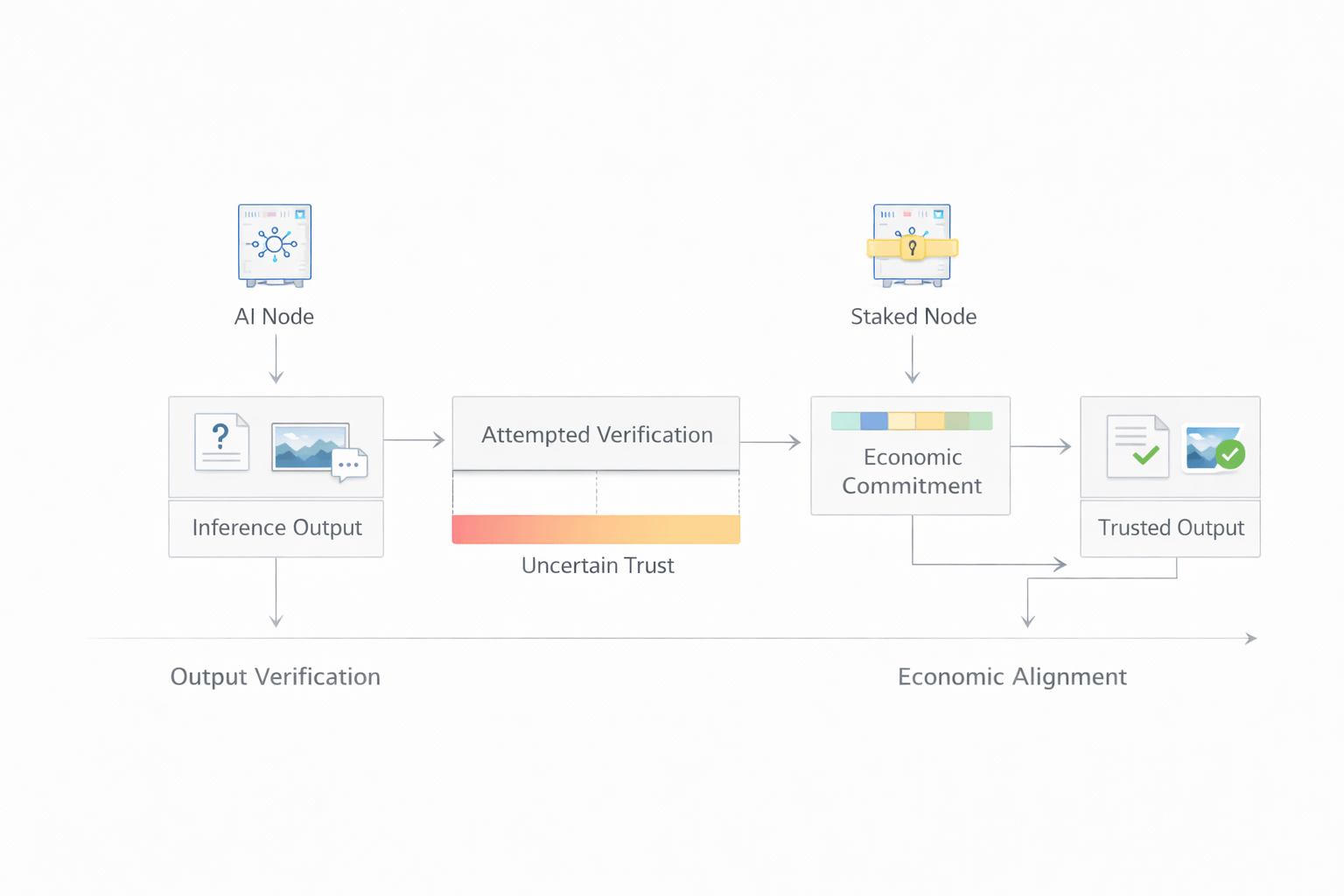

What makes this aspect of Mira more important than it first appears is that staking behind inference isn’t just another crypto-economic trick. It addresses something deeper in AI systems: verifiability of intent. We usually focus on verifying outputs, but in practice that’s expensive, imperfect, and sometimes impossible, especially with complex models. What Mira does instead is shift the problem. Rather than trying to perfectly check every result, it economically aligns the provider with correctness from the start. In other words, the node has value at risk tied to the honesty of its computation. That’s powerful, because when actors carry real economic exposure, behavior adjusts naturally. You don’t need constant monitoring or heavy enforcement; the incentives themselves begin to enforce honesty.
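The claim that incentives can stand in for constant monitoring can be checked with a small expected-value calculation. This is a sketch under a simple model I am assuming, not something the Mira design specifies: a dishonest output is caught with probability `p_detect`, a caught node loses `slash_fraction` of its stake, and an uncaught node pockets `gain`. All names and numbers are hypothetical.

```python
def expected_cheating_payoff(gain: float, stake: float,
                             slash_fraction: float, p_detect: float) -> float:
    """Expected profit from one dishonest response under the assumed slashing model."""
    return (1 - p_detect) * gain - p_detect * stake * slash_fraction


def min_stake_to_deter(gain: float, slash_fraction: float, p_detect: float) -> float:
    """Stake at which the expected payoff from cheating is exactly zero."""
    return (1 - p_detect) * gain / (p_detect * slash_fraction)


# Assumed numbers: only 1 in 4 outputs is ever checked, yet cheating still loses money.
payoff = expected_cheating_payoff(gain=5.0, stake=1000.0, slash_fraction=0.5, p_detect=0.25)
threshold = min_stake_to_deter(gain=5.0, slash_fraction=0.5, p_detect=0.25)

print(payoff)     # negative means cheating is irrational in expectation
print(threshold)  # any stake above this makes cheating unprofitable
```

Under these assumptions the network does not need to verify every result. It only needs the product of detection probability and slashed stake to exceed what a node could gain by cheating.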

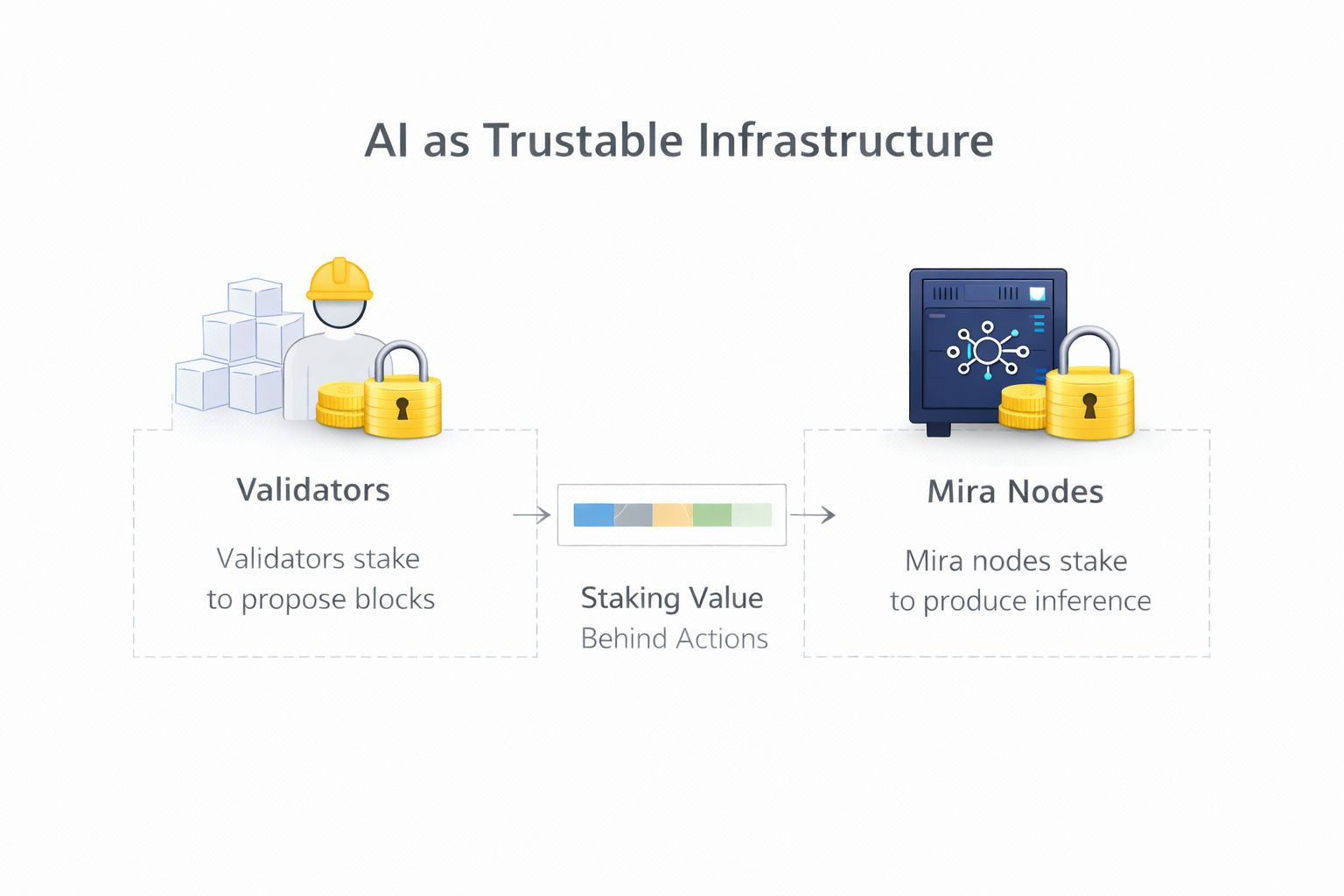

From my perspective, the real bottleneck in decentralized AI isn’t compute power or model capability. It’s trust. If users can’t rely on the outputs they receive, the network quickly turns into noise rather than infrastructure. And when inference providers face no meaningful consequences for low-quality or dishonest computation, quality naturally drifts over time. Misaligned incentives eventually make manipulation the rational choice. What I find compelling in Mira’s design is that its staked inference model directly tackles this trust gap. It reframes AI execution in a way that feels very similar to blockchain validation: just as validators stake value to propose and secure blocks, Mira nodes stake value to produce inference. In both cases, actors are committing real economic weight behind their actions. That symmetry between validation and inference is what makes the model feel structurally sound to me.

To me, Mira’s approach reframes AI execution in a simple but powerful way:

Computation shouldn’t just be performed.

It should be backed.

When inference carries stake, honesty stops being optional.

It becomes economically enforced.

And that’s exactly what decentralized AI needs if it wants to move from experimental networks to dependable infrastructure.