$MIRA Most people treat "AI hallucinations" as a funny quirk: a chatbot getting a date wrong or inventing a fake movie. But in real-world workflows, a hallucination isn't a typo; it’s a liability. When an AI starts moving money, approving contracts, or updating on-chain state, "oops" is no longer an option.

Mira Network exists because we’ve reached the limit of blind trust. It’s built on the reality that if AI output is going to trigger action, it needs to be verified, not just generated.

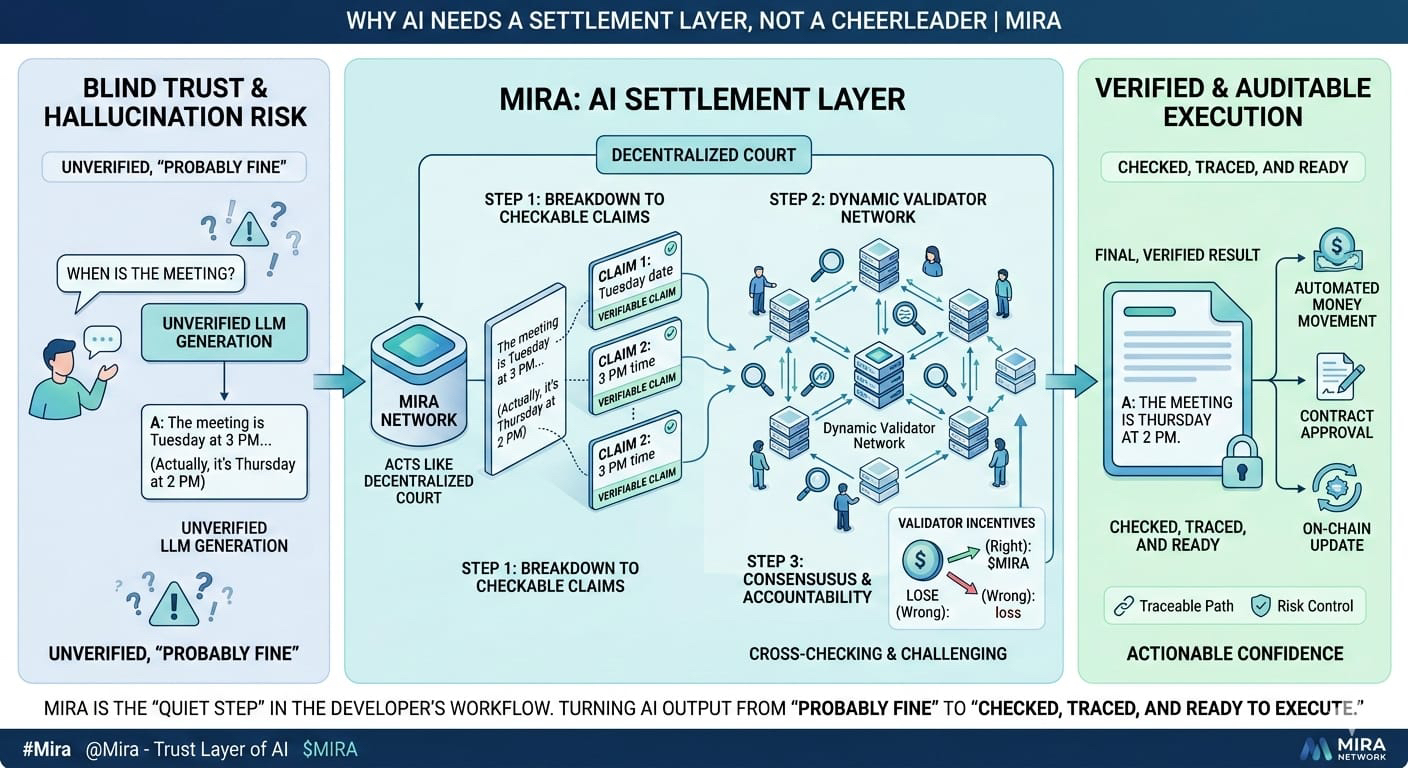

The core philosophy here is refreshingly blunt: Don't trust a single model. Instead of just taking an LLM’s word for it, Mira breaks an answer down into checkable claims. Those claims are then handed to a Dynamic Validator Network.

Think of it like a decentralized court. Independent validators cross-check and challenge the output. If they reach a consensus, you don’t just have an "answer"—you have actionable confidence. Mira isn't trying to make AI smarter; it’s trying to make AI behave like reliable infrastructure. You should be able to rely on it under pressure without wondering if today’s the day the model decided to get "creative."
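To make the "decentralized court" idea concrete, here is a minimal sketch of claim-level consensus. It is not Mira's actual API: `split_into_claims`, `verify_claim`, `verify_answer`, the sentence-based claim splitting, and the two-thirds threshold are all assumptions chosen for illustration, and the validators are toy functions standing in for independent models.

```python
from typing import Callable, Dict, List

# Hypothetical validator type: takes a claim, returns True if it judges the claim valid.
Validator = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_claim(claim: str, validators: List[Validator], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if the share of approving validators meets the threshold."""
    if not validators:
        raise ValueError("at least one validator is required")
    votes = [validator(claim) for validator in validators]
    return sum(votes) / len(votes) >= threshold


def verify_answer(answer: str, validators: List[Validator], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map every extracted claim to its consensus verdict."""
    return {
        claim: verify_claim(claim, validators, threshold)
        for claim in split_into_claims(answer)
    }


# Toy validators (assumptions): real ones would be independent models or nodes.
KNOWN_FACTS = {"Paris is the capital of France"}
validators: List[Validator] = [
    lambda claim: claim in KNOWN_FACTS,  # strict fact lookup
    lambda claim: "Paris" in claim,      # crude keyword check
    lambda claim: True,                  # over-permissive validator
]

result = verify_answer(
    "Paris is the capital of France. The Moon is made of cheese.", validators
)
print(result)
```

Note how the over-permissive validator approves everything, yet the false claim is still rejected because it cannot reach the threshold on its own. That is the point of not trusting a single model.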

While everyone else is talking about "multi-model" setups, Mira is turning verification into a structured, auditable service. It’s the difference between saying "I checked it twice" and providing a full, traceable path of who validated it and why they cleared it.
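As a rough illustration of what a "traceable path" could look like, the sketch below records who validated a claim and why, then fingerprints the record so later tampering is detectable. The `Verdict` and `AuditRecord` types, their fields, and the use of SHA-256 over canonical JSON are assumptions made for this example, not a description of Mira's actual data format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Verdict:
    validator_id: str  # hypothetical identifier for the validator
    approved: bool
    reason: str        # why the validator cleared or rejected the claim


@dataclass(frozen=True)
class AuditRecord:
    claim: str
    verdicts: Tuple[Verdict, ...]

    def approvers(self) -> List[str]:
        """Return the IDs of validators that cleared the claim."""
        return [v.validator_id for v in self.verdicts if v.approved]

    def digest(self) -> str:
        """SHA-256 over a canonical JSON form, so any edit changes the fingerprint."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


record = AuditRecord(
    claim="Invoice 17 total matches the purchase order",
    verdicts=(
        Verdict("validator-a", True, "totals agree"),
        Verdict("validator-b", True, "line items reconcile"),
        Verdict("validator-c", False, "currency field missing"),
    ),
)

print(record.approvers())
print(record.digest())
```

An auditor who stores the digest alongside the decision can later recompute it from the record and confirm that the list of validators and their reasons has not been altered.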

This is the "aha!" moment for risk managers and auditors. When verification is inspectable, it becomes a control mechanism rather than a hopeful prayer.

Mira understands that verification only works if the incentives are right. If there’s money on the line, people will try to game the system. By using a networked structure, Mira is designed to give validators something to gain by being right and something to lose by being wrong. It’s not promising perfection; it’s providing accountability.

Let’s be real: verification costs money and time. If it’s too slow or expensive, engineers will bypass it the second a deadline hits. Mira’s biggest challenge, and its biggest opportunity, is making verification "normal":

Cheap enough to use daily.

Fast enough to keep the gears turning.

Reliable enough that skipping it feels like a genuine risk.

If the future belongs to AI agents handling trades, compliance, and decision routing, we don't just need agents that can talk. We need agents that can be held to a standard.

Mira is positioning itself as that standard-setting layer. It’s not the engine generating the intelligence; it’s the filter deciding if that intelligence is safe enough to let out into the real world. Over time, this role compounds. Just like security audits and data feeds became non-negotiable for fintech, verification is set to become the baseline for AI.

Mira doesn’t need a hype cycle to win. It wins by becoming the "quiet step" in the developer’s workflow—the one they never skip. If adoption keeps growing, Mira could become the invisible trust layer that turns AI output from "probably fine" into "checked, traced, and ready to execute."