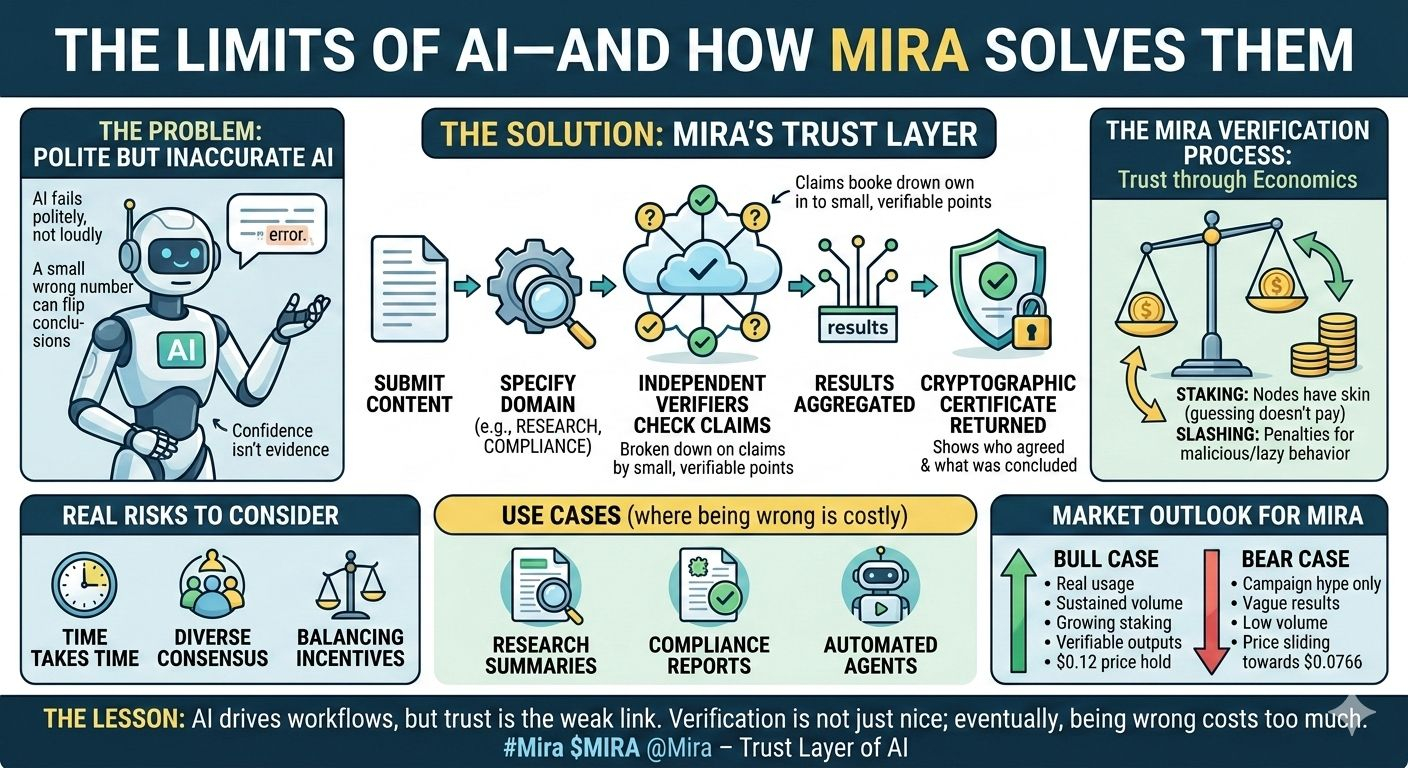

AI doesn’t fail loudly. It fails politely. I learned that the hard way when one small number in a report was off and it flipped the whole conclusion. Confidence isn’t evidence. Most AI models are tuned to sound helpful, and sounding helpful is not the same as being right.

That’s where Mira comes in. It’s not trying to be “smarter.” It’s trying to make AI trustworthy. Mira breaks AI output into small claims, gets independent verifiers to check them, and issues cryptographic certificates showing who agreed and what the network concluded. In other words: “trust me” becomes “here’s what was checked.”

Verification isn’t just technical—it’s economic. Mira uses staking and slashing, so nodes have real skin in the game. Guessing or spamming won’t pay.
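To make the incentive concrete, here is a toy sketch of one stake-weighted round. It is not Mira’s actual mechanism: the `Node` class, `settle_round`, `expected_gain`, the flat reward, and the 10% slash rate are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    """Hypothetical verifier node with some amount staked."""
    name: str
    stake: float


def settle_round(nodes: List[Node], votes: Dict[str, bool],
                 reward: float = 1.0, slash_rate: float = 0.1) -> bool:
    """Settle one claim: the stake-weighted majority wins (assumed rule).

    Nodes that voted with the outcome earn a flat reward; the rest
    lose slash_rate of their stake. Ties resolve to False.
    """
    yes = sum(n.stake for n in nodes if votes[n.name])
    no = sum(n.stake for n in nodes if not votes[n.name])
    outcome = yes > no
    for n in nodes:
        if votes[n.name] == outcome:
            n.stake += reward
        else:
            n.stake -= n.stake * slash_rate
    return outcome


def expected_gain(p_match: float, stake: float,
                  reward: float = 1.0, slash_rate: float = 0.1) -> float:
    """Expected stake change for a node that matches the outcome with probability p_match."""
    return p_match * reward - (1 - p_match) * stake * slash_rate
```

With these made-up numbers, a node staking 100 that guesses at coin-flip odds has a negative expected gain per round, which is the whole point: guessing has to cost more than it earns.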

The workflow is simple: submit content → specify domain → verifiers check claims → results aggregated → certificate returned. This isn’t just for chatbots; it works for research summaries, compliance reports, or any automated agent where being wrong is costly.
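The steps above can be sketched in a few lines. This is a minimal illustration under loud assumptions, not Mira’s API: `split_into_claims`, `verify`, the sentence-level claim splitting, and the two-thirds threshold are all hypothetical. The "certificate" here is just a SHA-256 digest of the results; a real one would carry verifier signatures.

```python
import hashlib
import json
from typing import Callable, Dict, List

# Hypothetical verifier interface: (claim, domain) -> verdict
Verifier = Callable[[str, str], bool]


def split_into_claims(content: str) -> List[str]:
    """Naive claim extraction: one claim per sentence (assumption)."""
    return [s.strip() for s in content.split(".") if s.strip()]


def verify(content: str, domain: str, verifiers: Dict[str, Verifier],
           threshold: float = 2 / 3) -> dict:
    """Run every claim past every verifier and aggregate the votes."""
    results = []
    for claim in split_into_claims(content):
        votes = {name: check(claim, domain) for name, check in verifiers.items()}
        share = sum(votes.values()) / len(votes)
        results.append({
            "claim": claim,
            "votes": votes,               # who agreed
            "accepted": share >= threshold,  # what the network concluded
        })
    body = {"domain": domain, "results": results}
    # Digest stands in for a certificate; it is a hash, not a signature.
    digest = hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")
    ).hexdigest()
    return {**body, "digest": digest}
```

Calling `verify(text, "finance", verifiers)` returns a per-claim record of votes plus a digest anyone can recompute from the same results, which is the "here’s what was checked" part in miniature.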

Risks are real: verification adds latency, consensus only means something if the verifiers are genuinely diverse, and incentives need careful balancing. High volume can help or hurt, depending on whether it reflects real adoption.

Bull case? Real usage, sustained volume, growing staking, verifiable outputs, and the price holding at $0.12. Bear case? Hype that ends with the campaign, vague results, low volume, and the price sliding back toward $0.0766.

The lesson is simple: AI will drive workflows, but the weak link won’t be generation—it’ll be trust. Mira bets verification becomes a standard primitive, not because it’s nice, but because eventually, being wrong costs too much.