I have learned to distrust moments when the industry appears unified in excitement. When consensus forms quickly around AI agents managing capital, interpreting governance, and executing strategy autonomously, I slow down. The louder the agreement, the more likely something structural is being ignored.

The current framing around decentralized AI is familiar. Market size projections. Cycle comparisons. Liquidity attaching itself to the dominant theme. In these environments, valuation often precedes verification. Exposure becomes the objective. Risk design becomes secondary.

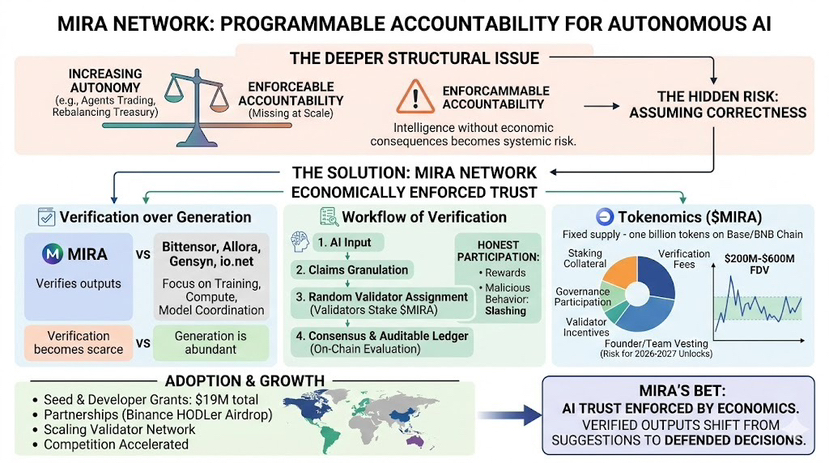

The underlying question is simpler and less discussed: when an autonomous agent makes a wrong decision on chain, who absorbs the cost?

If the answer is “no one in particular,” then the system runs on reputation alone: an error costs credibility, not capital.

If the answer is “validators who staked capital and can be slashed,” then we are discussing infrastructure.

Mira Network is built around that distinction. Its thesis is not that AI will become smarter. It is that trust in AI must be enforced economically. Outputs are decomposed into verifiable claims. Claims are distributed to independent validators. Consensus determines validity. Capital is at risk for dishonesty.
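To make that mechanism concrete, here is a minimal sketch of how a single claim might be settled. It is not Mira's actual protocol. The stake-weighted vote, the two-thirds threshold, the 10% slash rate, and the names `Validator` and `settle_claim` are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float


def settle_claim(votes, validators, threshold=2 / 3, slash_rate=0.1):
    """Settle one claim by stake-weighted vote (hypothetical rules).

    votes:      dict of validator name -> bool (True = "claim is valid")
    validators: dict of validator name -> Validator
    Returns (verdict, slashed), where verdict is True, False, or None
    when neither side reaches the threshold, and slashed maps each
    penalized validator name to the amount of stake removed.
    """
    total = sum(validators[name].stake for name in votes)
    if total <= 0:
        raise ValueError("no stake behind the submitted votes")

    yes = sum(validators[name].stake for name, vote in votes.items() if vote)
    if yes / total >= threshold:
        verdict = True
    elif (total - yes) / total >= threshold:
        verdict = False
    else:
        return None, {}  # no consensus, so nobody is penalized

    # Assumed penalty rule: validators who voted against the settled
    # verdict lose a fixed fraction of their stake.
    slashed = {}
    for name, vote in votes.items():
        if vote != verdict:
            penalty = validators[name].stake * slash_rate
            validators[name].stake -= penalty
            slashed[name] = penalty
    return verdict, slashed


validators = {
    "a": Validator("a", 100.0),
    "b": Validator("b", 100.0),
    "c": Validator("c", 50.0),
}
verdict, slashed = settle_claim({"a": True, "b": True, "c": False}, validators)
```

Note what the sketch penalizes: disagreement with the majority, not incorrectness. If the majority is wrong, the honest dissenter is the one who pays, which is why independence of validators matters as much as the size of the stake.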

In theory, this creates a market for correctness.

In practice, it introduces coordination complexity. Are validators independently assessing claims, or rationally following perceived majority behavior? Does staking create genuine accountability, or simply concentrated influence? Incentive design only matters if it produces observable enforcement.

This is where story separates from structure.

Speculative capital can accumulate a token because it represents “AI infrastructure.” That reveals little about durability. Productive participation looks different. I look for validator retention during periods of compressed rewards. I look for actual slashing events that demonstrate consequence, not just architecture. I look for third-party integrations that accept added cost or latency because verification reduces measurable risk.

Adjacent networks like Bittensor and io.net focus on intelligence production and compute coordination. Verification occupies a narrower layer. Narrow does not mean weak. It means the burden of proof is behavioral.

What would validate this thesis?

Sustained validator participation without aggressive inflation.

Stake, and the influence it carries, spread across many validators rather than concentrated in a few.

Clear economic penalties applied to incorrect validation.

Integrations driven by risk management, not incentives.

What would invalidate it?

High churn once emissions decline.

Governance capture through capital concentration.

Usage spikes tied primarily to liquidity programs.

An absence of visible enforcement despite errors.
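The concentration criteria in both lists can be turned into numbers. The sketch below computes two common measures from a list of validator stakes: a Herfindahl-style index and the smallest number of validators whose combined stake crosses a control threshold. The function names and the two-thirds threshold are assumptions for illustration, not parameters taken from any specific network.

```python
def herfindahl(stakes):
    """Sum of squared stake shares.

    Equals 1/n when n validators hold equal stake and approaches 1.0
    as a single validator dominates.
    """
    total = sum(stakes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    return sum((s / total) ** 2 for s in stakes)


def min_validators_to_control(stakes, threshold=2 / 3):
    """Smallest number of validators, largest first, whose combined
    share of stake reaches the (assumed) control threshold."""
    total = sum(stakes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    running = 0.0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running / total >= threshold:
            return count
    return len(stakes)


stakes = [50.0, 30.0, 10.0, 10.0]
concentration = herfindahl(stakes)
control_set = min_validators_to_control(stakes)
```

Tracked over time, a rising index or a shrinking control set would be an early sign of the capture scenario, well before it shows up in governance outcomes.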

If AI agents are going to control capital flows, someone must internalize the cost of error. Economics can enforce that. But enforcement must be exercised, not merely designed.

The question is not whether AI becomes autonomous.

It is whether autonomy becomes economically accountable.

Infrastructure does not prove itself during expansion. It proves itself when incentives tighten and participation persists. Price can reflect attention. Durability reflects coordination.

That is the real evaluation.
