I keep coming back to one practical problem.

Most token systems still behave as if the network were a spreadsheet. Set an emissions curve. Announce the schedule. Wait for “growth.”

That model may work well enough for simpler crypto systems, where activity is mostly digital and easy to count. I’m not sure it works for robotics, because robotics has an ugly cold-start problem.

Early on, real usage is thin. Real revenue is uneven. Quality is inconsistent. Some robots are useful, some are demos, and some are just noise with better marketing. If a network pays out tokens on a fixed timetable during that phase, it risks rewarding presence instead of performance. In plain English: the people best at farming the system can get paid before the system proves it creates value.

That is why Fabric Foundation’s token design caught my attention. Not because it promises a magical solution, but because the whitepaper explicitly treats fixed emissions as a weakness in a robotic service network. It argues that traditional fixed-emission models fail to adapt to network conditions, which can cause too much dilution in low-utilization periods and too little incentive during growth. Fabric’s answer is an Adaptive Emission Engine that adjusts emissions using utilization and quality signals, not just time.

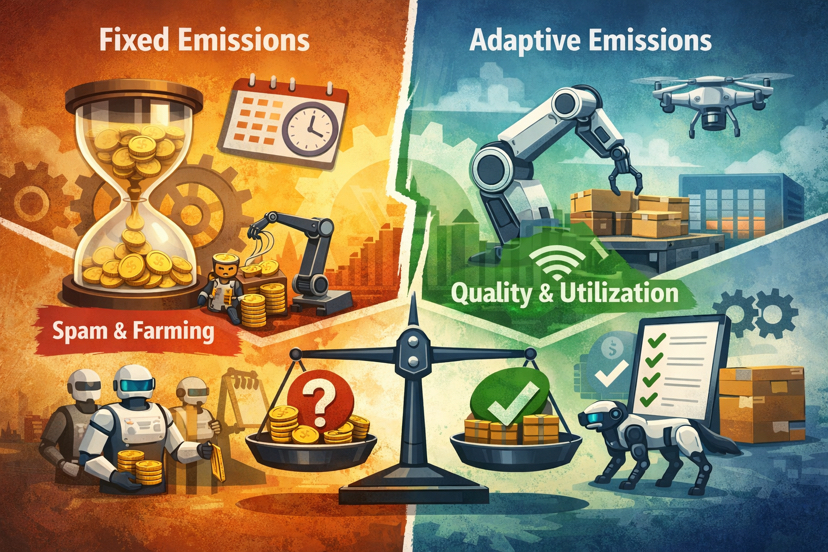

In robotics, emissions should follow verified work quality and network demand, not the calendar.

Why? Because robots create cost before they create trust. Hardware has maintenance costs. Operators need incentives. Validators need to verify tasks. Users need reliability before they return. If tokens are distributed on a blind schedule, the network may attract the wrong supply first: actors optimizing for extraction rather than service quality.

Here is the cleaner way to frame the difference:

Fixed emissions reward waiting. If tokens unlock or distribute on a schedule no matter what, participants can optimize around time rather than useful output. That is efficient for farmers, not for customers.

Fixed emissions invite spam during weak demand. When real robot usage is low, a preset reward stream can still flood the system with payout-seeking activity, causing dilution without matching economic value. Fabric explicitly flags this low-utilization dilution risk.

Fixed emissions misread growth. If demand suddenly rises, a rigid schedule may underpay the operators actually delivering capacity, which slows useful expansion right when the network needs supply most.

Adaptive emissions respond to utilization. Fabric defines network utilization as protocol revenue divided by aggregate robot capacity, then updates emissions based on whether utilization is above or below target. That means issuance is tied to how much the network is actually being used, not just how long it has existed.

Adaptive emissions also respond to quality. Fabric’s controller includes a quality score aggregated from validator attestations and user feedback. More importantly, the paper says quality below threshold can reduce emissions even if utilization is high. That matters: bad service should not be rewarded just because traffic is busy.

Adaptive design is still not enough unless work is hard to fake.
Fabric pairs the emission controller with an evolutionary reward layer that blends activity and revenue during bootstrap, then shifts weight toward revenue as the network matures. The paper presents this as part of the cold-start solution, but it also acknowledges that more research is needed on “non-gameable” measurements and on fake-revenue risks such as self-dealing. That honesty is important.
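To make the blend concrete, here is a minimal Python sketch of a reward split that moves from activity share to revenue share. It is not Fabric’s formula: the names (`revenue_weight`, `reward_split`), the linear ramp, and the `revenue_milestone` parameter are all hypothetical. The one deliberate choice is that maturity is measured in cumulative revenue rather than elapsed epochs, so the shift follows usage instead of the calendar. Nothing here addresses self-dealing; wash revenue would still count as revenue.

```python
from typing import Dict


def revenue_weight(cumulative_revenue: float, revenue_milestone: float) -> float:
    """Hypothetical ramp: 0.0 at launch, 1.0 once cumulative revenue hits the milestone."""
    if revenue_milestone <= 0:
        return 1.0
    return min(1.0, max(0.0, cumulative_revenue / revenue_milestone))


def _shares(values: Dict[str, float]) -> Dict[str, float]:
    """Normalize per-operator values into shares that sum to 1 (or all zeros)."""
    total = sum(values.values())
    if total <= 0:
        return {k: 0.0 for k in values}
    return {k: v / total for k, v in values.items()}


def reward_split(pool: float, activity: Dict[str, float], revenue: Dict[str, float],
                 cumulative_revenue: float, revenue_milestone: float) -> Dict[str, float]:
    """Blend activity share and revenue share, weighted by network maturity."""
    w = revenue_weight(cumulative_revenue, revenue_milestone)
    act, rev = _shares(activity), _shares(revenue)
    operators = set(activity) | set(revenue)
    # If an epoch has no revenue at all, the revenue-weighted part of the pool
    # is left unallocated in this sketch rather than redistributed.
    return {op: pool * ((1.0 - w) * act.get(op, 0.0) + w * rev.get(op, 0.0))
            for op in operators}


if __name__ == "__main__":
    activity = {"farmer": 80.0, "operator": 20.0}  # e.g. completed task counts
    revenue = {"farmer": 0.0, "operator": 10.0}    # fees paid by customers
    print(reward_split(100.0, activity, revenue, 0.0, 1000.0))
    print(reward_split(100.0, activity, revenue, 500.0, 1000.0))
```

At launch, the high-activity operator with no paying customers takes 80% of the pool. Halfway to the milestone, its share falls to 40%, while the operator that customers actually pay rises to 60%.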

That last point is where I think the real debate starts.

Token designers love adaptive language because it sounds smarter than linear vesting. But “adaptive” can still become theater if the signals are weak. In a robotic network, measuring real work is hard. Measuring good work is harder. A robot can complete many tasks badly. A network can show activity without proving durable utility. Fabric at least tries to formalize the controller: emissions move with utilization and quality, and the per-epoch adjustment is bounded by a circuit breaker to avoid violent swings. That is better than hand-wavy tokenomics.
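Here is a minimal sketch of a controller with that shape, again in Python and again hypothetical. Utilization follows the definition Fabric gives (protocol revenue divided by aggregate robot capacity), but every constant in `ControllerParams` is a placeholder, not a published value, and the update rule is a simple proportional step rather than the whitepaper’s exact formula. The sign of the utilization term is an assumption borrowed from the warehouse scenario in this post: emissions rise when utilization is below target and taper as it climbs past it. Flipping the sign of `gain` would invert that. A below-threshold quality score forces a cut regardless of what the utilization term says, and the circuit breaker clamps each epoch’s change.

```python
from dataclasses import dataclass


@dataclass
class ControllerParams:
    # Hypothetical placeholder values, not constants from the Fabric whitepaper.
    target_utilization: float = 0.6  # desired revenue / capacity ratio
    gain: float = 0.5                # sensitivity to the utilization gap
    quality_threshold: float = 0.7   # minimum acceptable quality score in [0, 1]
    quality_penalty: float = 0.2     # fractional cut when quality is below threshold
    max_step: float = 0.05           # circuit breaker: max fractional change per epoch


def utilization(protocol_revenue: float, aggregate_capacity: float) -> float:
    """Protocol revenue divided by aggregate robot capacity (0.0 if no capacity)."""
    if aggregate_capacity <= 0:
        return 0.0
    return protocol_revenue / aggregate_capacity


def next_emission(current: float, u: float, quality: float,
                  p: ControllerParams) -> float:
    """Return next epoch's emission given utilization u and a quality score."""
    # Utilization term (assumed sign): positive when u is below target,
    # negative when u is above it.
    change = p.gain * (p.target_utilization - u)

    # Quality gate: drop any utilization-driven increase and apply a penalty,
    # so poor quality cuts emissions no matter what utilization says.
    if quality < p.quality_threshold:
        change = min(change, 0.0) - p.quality_penalty

    # Circuit breaker: bound the per-epoch adjustment to [-max_step, +max_step].
    change = max(-p.max_step, min(p.max_step, change))
    return current * (1.0 + change)


if __name__ == "__main__":
    p = ControllerParams()
    u = utilization(protocol_revenue=30.0, aggregate_capacity=100.0)
    print(round(next_emission(1000.0, u, quality=0.9, p=p), 2))    # 1050.0
    print(round(next_emission(1000.0, 0.9, quality=0.5, p=p), 2))  # 950.0
```

In the first call, a utilization of 0.3 asks for a 15% increase, but the circuit breaker caps it at 5%. In the second, busy traffic cannot rescue a failing quality score, so emissions are cut by the maximum step.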

Still, I would not oversell it.

Quality scores can be gamed. User feedback can be noisy. Validators can miss edge cases. Revenue can sometimes be manufactured. Even Fabric’s whitepaper notes that revenue alone is not enough and that future work should focus on broader non-gameable measures such as verified work, compliance, efficiency, power use, and human feedback.

A small real-world scenario makes the problem clearer.

Imagine a robotics network trying to bootstrap warehouse inspection bots across three cities. In month one, actual customer demand is low. Only a few warehouses use the service regularly. Under a fixed-emissions model, token rewards still flow on schedule. So what grows first? Probably not trusted coverage. More likely: low-quality operators, synthetic task loops, and participants optimizing for payout volume.

Now change the design.

Suppose emissions rise only when utilization is genuinely below target and quality stays acceptable, then taper as fee revenue grows. Suddenly the incentive changes. Operators are pushed to deliver service that people keep paying for. Low-quality expansion becomes less attractive. This does not remove gaming. But it makes the network’s monetary policy more aligned with operational reality.

That is why I think this topic matters beyond Fabric.

Crypto keeps importing token models from software networks into systems that touch the physical world. But robotics is not just another app vertical. It has downtime, fraud surfaces, maintenance drag, and trust bottlenecks. If emissions are not linked to real work and service quality, the network can scale rewards faster than it scales usefulness.

Fabric’s strongest idea here is not that emissions are dynamic. It is that cold start in robotics should be solved with signal-driven incentives, not calendar-driven generosity. The whitepaper’s combination of an adaptive controller, structural demand sinks, and a reward layer that shifts from activity to revenue is a serious attempt to encode that principle.

What I’m still watching is the measurement layer. Who defines quality in practice? How resistant are the signals to collusion? And when the network is young, how do you distinguish genuine early activity from beautifully packaged farming?

If Fabric wants adaptive emissions to be more than a nice formula, what exact signals should the network trust most: revenue, verified task completion, user feedback, or something else?