The more autonomous AI becomes, the less comfortable I am with blind trust.

We’ve moved far beyond chatbots answering questions. Today, AI agents execute trades, manage liquidity, approve transactions, optimize logistics, and even trigger automated governance actions. These systems are no longer assistive; they are operational. And once an AI moves from suggesting to acting, accountability stops being philosophical and becomes infrastructural.

That’s where I started paying attention to Mira Network.

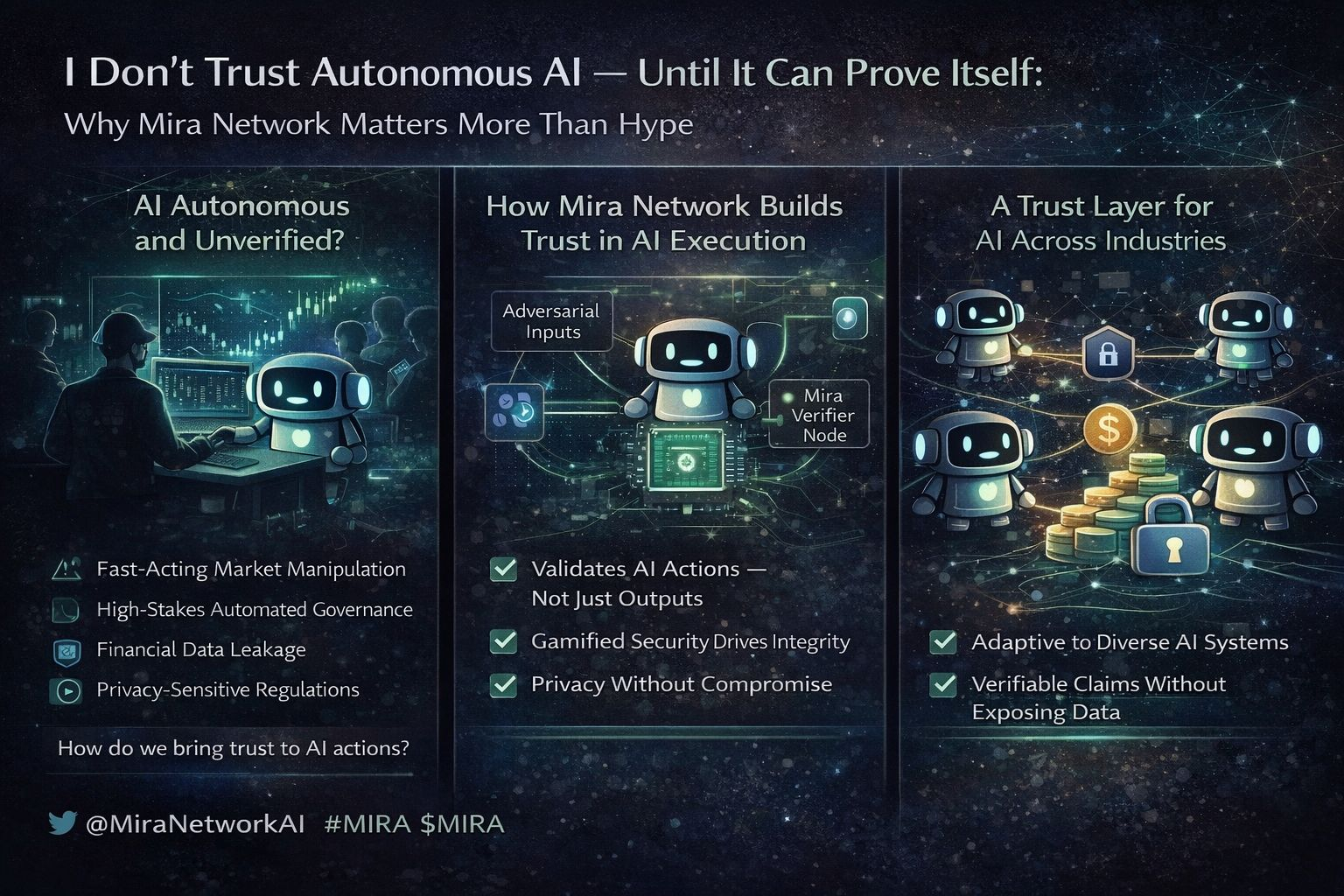

What stood out to me isn’t that Mira is building another AI tool. It’s building verification for AI actions, not just outputs. That difference is subtle but massive. Verifying a text response is one thing. Verifying that an autonomous agent executed a financial trade correctly, under defined constraints, without manipulation or hallucination? That’s a different category of risk.

For example, imagine an AI trading agent managing on-chain liquidity. It executes a series of automated swaps based on market signals. If it misinterprets data or is manipulated by adversarial inputs, losses happen instantly. In traditional systems, you rely on internal logs and company oversight. In a decentralized system, you need cryptographic verification that the action followed predefined logic. Mira introduces that accountability layer.
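To make "the action followed predefined logic" concrete, here is a minimal sketch of what a pre-execution check could look like. It is not Mira's actual API or protocol, which this post does not describe. Every name here (`SwapAction`, `Policy`, `check_action`, `audit_digest`) is hypothetical, and the SHA-256 digest only makes the decision record tamper-evident. It is not a proof system.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class SwapAction:
    """A swap the agent wants to execute (hypothetical shape)."""
    token_in: str
    token_out: str
    amount_in: float
    slippage_bps: int


@dataclass(frozen=True)
class Policy:
    """Predefined constraints the agent must stay within (hypothetical)."""
    allowed_tokens: frozenset
    max_amount_in: float
    max_slippage_bps: int


def check_action(action: SwapAction, policy: Policy) -> list:
    """Return a list of human-readable violations; empty means compliant."""
    violations = []
    for token in (action.token_in, action.token_out):
        if token not in policy.allowed_tokens:
            violations.append(f"token not allowed: {token}")
    if action.amount_in > policy.max_amount_in:
        violations.append("amount exceeds limit")
    if action.slippage_bps > policy.max_slippage_bps:
        violations.append("slippage exceeds limit")
    return violations


def audit_digest(action: SwapAction, violations: list) -> str:
    """Hash the action and the check result so the record is tamper-evident."""
    record = {"action": asdict(action), "violations": violations}
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


policy = Policy(frozenset({"ETH", "USDC"}), max_amount_in=10.0, max_slippage_bps=50)
good = SwapAction("ETH", "USDC", amount_in=2.5, slippage_bps=30)
bad = SwapAction("ETH", "DOGE", amount_in=2.5, slippage_bps=200)

good_violations = check_action(good, policy)
bad_violations = check_action(bad, policy)
```

In this sketch the agent would only be allowed to execute when `check_action` returns an empty list, and the digest could be published so that anyone holding the same record can confirm it was not altered afterwards.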

Another issue Mira addresses, and I think this is underrated, is verification spam. Open incentive systems often attract low-effort validators who chase rewards without contributing meaningful oversight. If verification becomes gamed, the system weakens. Mira’s architecture focuses on structured validation metrics rather than blind participation, reducing noise in favor of measurable integrity.

Privacy is where things get more interesting. Many AI systems process sensitive financial or business data. Verification cannot mean exposure. Mira’s model enables validation of claims without revealing the underlying data, conceptually similar to zero-knowledge systems. You prove correctness without leaking the content. That’s critical if AI agents are operating in finance, healthcare, or governance.

What I respect most is neutrality. Mira doesn’t favor one AI model over another. It verifies claims, not brands. That matters because AI ecosystems are fragmenting quickly. A verification layer that is model-agnostic becomes reusable infrastructure across industries.
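One way to picture "claims, not brands" is a check that never looks at which model produced a claim. The sketch below is an assumption for illustration only: the quorum rule, the `Claim` type, and the toy verifiers are all hypothetical and are not taken from Mira's design.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Claim:
    statement: str
    source_model: str  # recorded for bookkeeping, never used in verification


# A verifier is any function that judges a statement (hypothetical interface).
Verifier = Callable[[str], bool]


def verify_claim(
    claim: Claim,
    verifiers: Sequence[Verifier],
    quorum_num: int = 2,
    quorum_den: int = 3,
) -> bool:
    """Accept the claim if at least quorum_num/quorum_den of verifiers approve."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for verify in verifiers if verify(claim.statement))
    # Integer comparison avoids floating-point rounding at the threshold.
    return approvals * quorum_den >= quorum_num * len(verifiers)


# Toy verifiers standing in for independent checkers.
def mentions_policy_number(statement: str) -> bool:
    return "policy #" in statement


def is_short_enough(statement: str) -> bool:
    return len(statement) < 200


def always_reject(statement: str) -> bool:
    return False


verifiers = [mentions_policy_number, is_short_enough, always_reject]
claim_a = Claim("Payout approved for policy #1042", source_model="model-a")
claim_b = Claim("Payout approved for policy #1042", source_model="model-b")

result_a = verify_claim(claim_a, verifiers)
result_b = verify_claim(claim_b, verifiers)
```

Because only `claim.statement` reaches the verifiers, the same statement gets the same verdict regardless of `source_model`, which is the property the paragraph above describes.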

As another example, imagine a decentralized insurance protocol relying on AI to assess claims. Instead of trusting a single model provider, the claim’s evaluation is verified through Mira’s framework. The protocol doesn’t care which AI generated the decision; it cares whether the decision met verification standards.

As misinformation tactics evolve, static defense mechanisms fail. Continuous verification becomes adaptive defense. Clear metrics define what counts as valid. That consistency is what transforms AI from powerful to reliable.

I don’t see Mira as just another AI token narrative. I see it as the beginning of a trust layer for autonomous systems.

Because in a world where AI acts independently, trust cannot be implied.

It has to be proven.

What’s your view: is verified AI execution the missing layer for serious adoption, or are we still underestimating the risks of autonomous agents?

Let’s discuss.