A few weeks ago I was scrolling through Binance Square late at night, half reading, half just watching how fast opinions move. One post says a token is undervalued. Another says the same project is deeply flawed. Some of those posts are clearly written by AI. You can feel it in the tone — confident, polished, slightly too clean. And I caught myself thinking: we are slowly outsourcing not just writing, but judgment.

That feeling has been growing for a while. AI is no longer just a tool you open in a separate tab. It’s inside trading dashboards, research summaries, moderation systems, even comment filters. It ranks content. It suggests what to read next. It drafts analysis. And most of the time, we accept its output without asking where the authority comes from. It sounds reasonable, so we move on.

The idea of AI as a public good doesn’t start from theory. It starts from that quiet dependency. When something influences how markets move or how information spreads, it stops being a private convenience. It becomes infrastructure. Roads are infrastructure. Electricity grids are infrastructure. You don’t need to love them. You just need them to work, and to work fairly.

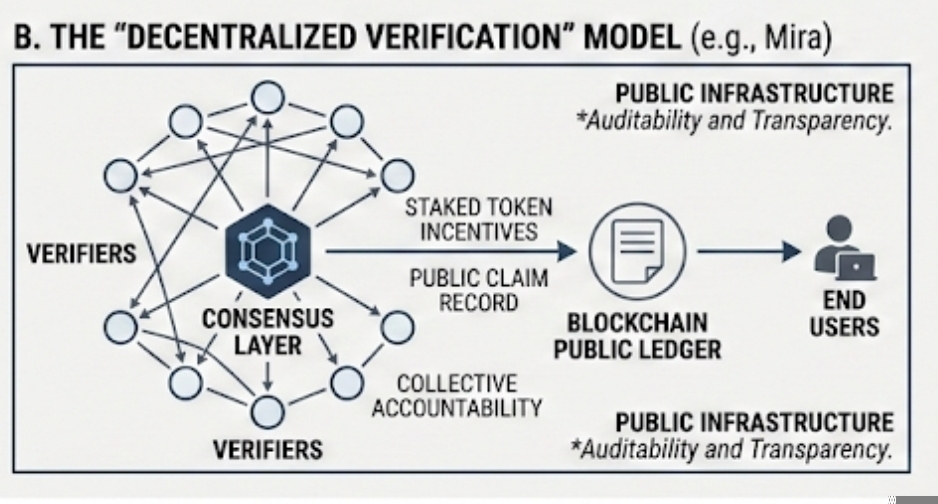

That’s why I find Mira Network interesting — not because it promises smarter models, but because it focuses on verification. Verification sounds boring. It’s not flashy. But it’s the difference between an answer and a checked answer. In Mira’s system, when an AI produces a claim, independent participants review it. If enough of them agree, the result is written on-chain. On-chain simply means stored on a blockchain — a public ledger that cannot be quietly edited later.
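
To make "checked answer" concrete, here is a minimal sketch of the flow described above: verifiers vote on a claim, and it is appended to a tamper-evident log only if enough of them agree. This is a toy model, not Mira's actual protocol. The `Ledger` class, the `verify_claim` function, and the two-thirds quorum are all assumptions made for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Toy append-only log: each entry commits to the hash of the previous one."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        payload = json.dumps(record, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def is_intact(self) -> bool:
        """Recompute every hash; any quiet edit to a past record breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def verify_claim(claim: str, votes: dict, ledger: Ledger, quorum: float = 2 / 3) -> bool:
    """Record the claim only if the share of approving verifiers reaches the quorum."""
    if not votes:
        return False
    approvals = sum(1 for approved in votes.values() if approved)
    accepted = approvals / len(votes) >= quorum  # quorum value is an assumption
    if accepted:
        ledger.append({"claim": claim, "votes": votes})
    return accepted
```

The hash chain is what stands in for "cannot be quietly edited later": change an old record and `is_intact()` starts returning `False`. A real blockchain adds replication and consensus among many nodes on top of that, which this sketch leaves out.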

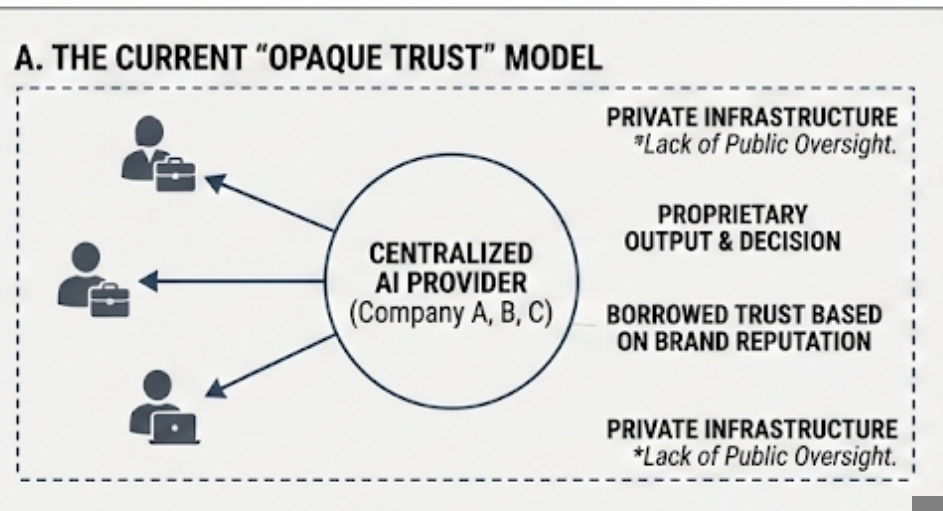

What stands out to me is not the technology itself, but the shift in responsibility. In most AI systems today, trust is borrowed from the company that built the model. If you believe in the brand, you accept the output. That’s a fragile structure. Companies pivot. Leadership changes. Incentives shift. A decentralized verification layer spreads that responsibility outward. No single actor owns the final word.

Still, decentralization is not magic. I’ve seen enough crypto projects to know that distributing power doesn’t automatically produce wisdom. Mira relies on economic incentives. Verifiers stake tokens — digital assets that hold value inside the network — and they earn rewards if their assessments align with consensus. If they try to manipulate outcomes, they risk losing stake. It’s a system built on incentives, not on moral appeals.
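
The incentive loop can be sketched in a few lines. Again, this is a toy model under loud assumptions: the `settle_round` function, the flat reward, the 10% slash rate, and the stake-weighted majority rule are hypothetical and not taken from Mira's documentation.

```python
def settle_round(stakes: dict, votes: dict, reward: float = 1.0, slash_rate: float = 0.1) -> dict:
    """Return updated stakes after one verification round.

    Consensus is a stake-weighted majority (an assumption). Verifiers who voted
    with consensus earn a flat reward; the rest lose a fraction of their stake.
    """
    weight_yes = sum(stakes[v] for v, approved in votes.items() if approved)
    weight_no = sum(stakes[v] for v, approved in votes.items() if not approved)
    outcome = weight_yes >= weight_no  # ties resolve to "approved" in this toy version

    updated = dict(stakes)
    for verifier, approved in votes.items():
        if approved == outcome:
            updated[verifier] += reward
        else:
            updated[verifier] -= stakes[verifier] * slash_rate
    return updated


stakes = {"alice": 100.0, "bob": 50.0, "carol": 30.0}
votes = {"alice": True, "bob": True, "carol": False}
print(settle_round(stakes, votes))
```

Notice what the code does not know: whether the minority was actually right. In this sketch carol loses stake for disagreeing, full stop. The settlement rule measures alignment with consensus, not alignment with truth.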

That design is practical, maybe even realistic. People respond to incentives. But incentives also shape behavior in unexpected ways. If rewards are tied to agreement, will participants hesitate to challenge dominant views? If controversial claims are harder to verify, will they be ignored? Consensus can drift into comfort. That’s not a flaw unique to Mira. It’s human nature, just encoded in token logic.

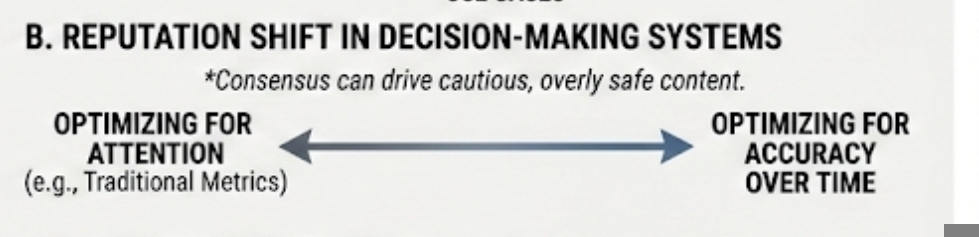

On Binance Square, where credibility already affects visibility, this becomes more layered. Posts rise or fall based on engagement metrics — likes, comments, shares. Add a verification score to that mix, and behavior will change. Traders and analysts may think twice before posting bold AI-generated predictions if those predictions can be publicly evaluated and recorded. Reputation would not only be about attention. It would be about accuracy over time.

I actually think that part is underrated. Public dashboards influence behavior more than rules do. When you can see your credibility score, or when others can, you start optimizing for it. That can lead to better standards. It can also lead to cautious, overly safe content. There’s always a trade-off.

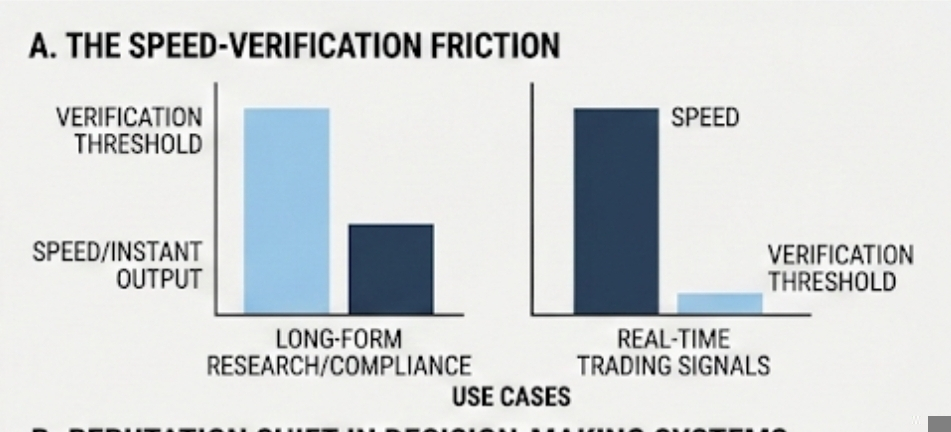

Another thing people don’t talk about much is speed. AI feels powerful because it’s instant. Ask a question, get an answer in seconds. Add verification, and you introduce delay. Even if the delay is small, it changes how the output is used. For long-form research or compliance checks, that delay is acceptable. For real-time trading signals, maybe not. So the idea of AI as a public good might apply unevenly. Some use cases can afford friction. Others resist it.

I also keep coming back to this: calling AI a public good implies shared governance. That’s uncomfortable. Public goods require messy coordination. Disagreements. Voting mechanisms. Economic penalties. It’s easier to let a company handle it behind closed doors. But that convenience comes at the cost of opacity. You don’t see how decisions are made. You just see the polished result.

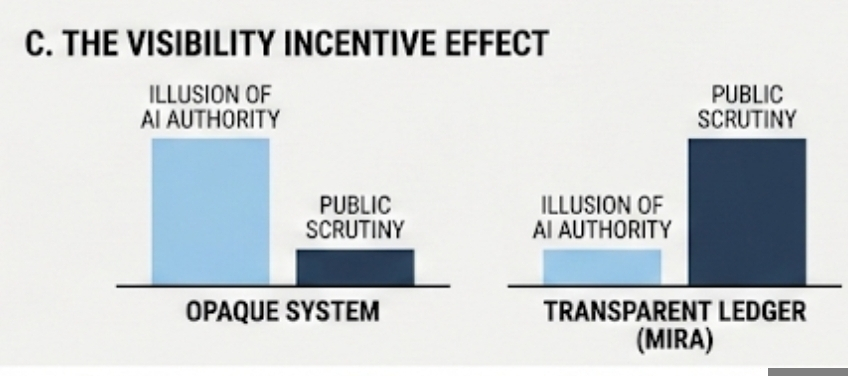

Mira’s approach forces part of that process into the open. Verification outcomes are recorded publicly. Patterns can be audited. If certain participants consistently align with inaccurate claims, the data will show it. Transparency doesn’t guarantee fairness, but it makes patterns visible. And visibility changes incentives. It’s harder to quietly manipulate something when the ledger is open.
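
Here is roughly what "the data will show it" could look like in practice. This is a sketch that assumes each public record carries the individual votes plus a `later_accurate` flag set once the claim's accuracy is known. Both that record layout and the `approval_error_rates` function are hypothetical.

```python
from collections import defaultdict


def approval_error_rates(records: list) -> dict:
    """For each verifier, the share of their approvals that went to claims
    later marked inaccurate. Verifiers who never approved anything are omitted."""
    approvals = defaultdict(int)
    bad_approvals = defaultdict(int)
    for record in records:
        for verifier, approved in record["votes"].items():
            if approved:
                approvals[verifier] += 1
                if not record["later_accurate"]:
                    bad_approvals[verifier] += 1
    return {v: bad_approvals[v] / approvals[v] for v in approvals}


records = [
    {"votes": {"alice": True, "bob": True}, "later_accurate": False},
    {"votes": {"alice": True, "bob": False}, "later_accurate": True},
    {"votes": {"alice": True, "bob": True}, "later_accurate": False},
]
print(approval_error_rates(records))
```

Anyone with read access to the records can run this kind of audit, which is the whole point. The hard part is the `later_accurate` column: someone still has to decide, after the fact, what counted as accurate.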

At the same time, I’m cautious about framing this as a clean solution. Truth is rarely binary. Economic forecasts can be “correct” under one set of assumptions and wrong under another. Technical audits depend on what risks you prioritize. When verification becomes formalized, nuance can get squeezed into simplified outcomes. That’s a risk worth acknowledging.

What feels different here is the mindset shift. Instead of treating AI output as a final answer, the system treats it as a claim. Claims can be checked. They can be disputed. They can be recorded alongside the process that validated them. That small reframing matters. It reduces the illusion of authority that polished AI language often creates.

I don’t think AI will become a public good overnight. It’s still heavily shaped by private capital and competitive pressure. But the direction is worth watching. As more economic and social decisions are influenced by machine outputs, centralized trust starts to look brittle. Distributing verification might not make AI perfect. It might simply make it accountable in a way that feels closer to how we handle other shared systems.

And maybe that’s enough for now. Not a utopian promise of unbiased intelligence. Just a slow shift toward treating AI outputs as something we collectively examine rather than passively absorb. When that shift happens — even partially — AI stops being a distant authority and starts feeling like infrastructure we all have a stake in.

The Rise of AI as a Public Good: Mira’s Decentralized Approach
