Let’s be real for a second.

AI looks brilliant right now. It writes essays, builds apps, drafts legal contracts, spits out trading strategies, helps doctors read scans. It feels smart. Sometimes it actually is. But here’s the thing nobody likes to sit with: it still makes stuff up. Confidently. Smoothly. Without blinking.

And that’s not a tiny bug. That’s structural.

Modern AI doesn’t “know” things. It estimates probabilities. Large language models train on mountains of text and learn to predict the most likely next token, which is roughly the next word or word fragment. That’s it. It’s pattern prediction at absurd scale. When it works, it feels magical. When it doesn’t, you get hallucinations: fake citations, incorrect facts, made-up numbers delivered with full confidence.

I’ve seen this before. Every hype cycle ignores the reliability issue at first. Then reality hits.

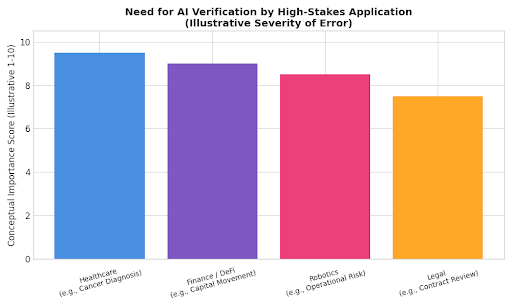

When AI drafts a tweet and gets something wrong, fine. Embarrassing maybe. But when AI helps diagnose cancer, moves capital in automated markets, or controls a robotic system? Different story. Errors don’t stay theoretical. They turn expensive. Or dangerous.

That’s the problem Mira Network is trying to tackle.

Instead of pretending one giant model will magically become perfect, Mira takes a different angle. It doesn’t trust a single AI output. It breaks that output into smaller claims. Then it pushes those claims across a network of independent AI models that check each piece. After that, it runs the results through blockchain-based consensus. The final verification gets recorded on a public ledger.

In plain English? Don’t just generate answers. Prove them.

And honestly, that’s where it gets interesting.

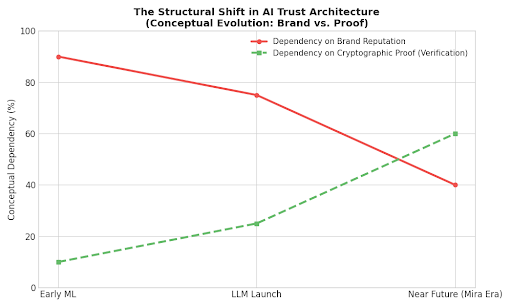

AI didn’t start this way. Early systems followed strict rules. If X, then Y. Simple. Predictable. Limited. They couldn’t hallucinate because they couldn’t improvise. Then machine learning showed up. Models started learning from data instead of fixed rules. Accuracy jumped. Transparency dropped. Black boxes everywhere.

Fast forward to large language models. They generate entire essays in seconds. But they still operate on probability. They don’t check truth. They predict likelihood. That’s a huge difference, and people don’t talk about it enough.

Researchers at major AI labs openly admit that hallucinations happen. They measure the rates. They try to reduce them. Reinforcement learning helps. Fine-tuning helps. Alignment work helps. But nobody has eliminated the core issue, because it sits inside the way these models are built and trained. These systems predict what sounds right, not what’s provably correct.

So Mira doesn’t try to “fix” a single model. It layers verification on top.

That reminds me of aviation. Planes don’t rely on one sensor. They stack redundancy. Finance works the same way. Clearinghouses reconcile transactions across multiple ledgers. Distributed databases use consensus to stay consistent. High-reliability systems don’t chase perfection. They build cross-checks.

Mira applies that logic to AI.

Here’s how it works technically. When an AI generates complex output, Mira decomposes it into discrete claims. Each claim gets sent to independent AI validators across the network. Those validators assess whether the claim holds up. The system aggregates their responses. Then blockchain consensus finalizes and records the verification result. Validators stake economic value, which means they risk losing capital if they consistently validate incorrect claims. Accurate validators earn rewards.
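
To make that flow concrete, here’s a minimal Python sketch of the first three steps: decompose, validate, aggregate. Everything in it is my own illustration, not Mira’s actual code or API. The `decompose` splitter, the stub validators, the `FACTS` set and the two-thirds threshold are all assumptions, and the on-chain consensus and staking steps are left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, List

# A validator is any callable that maps a claim to a yes/no verdict.
# In a real network these would be independent AI models; here they are stubs.
Validator = Callable[[str], bool]


@dataclass
class VerifiedClaim:
    claim: str
    votes_for: int
    votes_against: int
    accepted: bool


def decompose(output: str) -> List[str]:
    """Naive claim splitter (assumption): one sentence equals one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify(output: str, validators: List[Validator],
           threshold: float = 2 / 3) -> List[VerifiedClaim]:
    """Send every claim to every validator and accept it on a supermajority."""
    if not validators:
        raise ValueError("need at least one validator")
    results = []
    for claim in decompose(output):
        votes = [v(claim) for v in validators]
        yes = sum(votes)
        accepted = yes / len(votes) >= threshold
        results.append(VerifiedClaim(claim, yes, len(votes) - yes, accepted))
    return results


# Hypothetical stand-ins for independent models.
FACTS = {"Paris is the capital of France"}


def strict(claim: str) -> bool:
    return claim in FACTS


def lenient(claim: str) -> bool:
    return True  # approves everything: a deliberately bad validator


report = verify(
    "Paris is the capital of France. The moon is made of cheese",
    [strict, strict, lenient],
)
for r in report:
    print(r.claim, "->", "accepted" if r.accepted else "rejected")
```

The first claim passes three to zero. The second gets only the lenient validator’s vote and is rejected, which is the whole point of not trusting any single checker.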

That economic pressure matters. People behave differently when money sits on the line.
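
Here’s a rough sketch of what one round of that pressure could look like. The `settle` function, the flat reward and the 25% slash rate are numbers I made up for illustration; the source doesn’t specify Mira’s real parameters. Note one simplification: the sketch pays validators for agreeing with the final outcome, which is only a proxy for being correct.

```python
from typing import Dict


def settle(stakes: Dict[str, float], votes: Dict[str, bool], outcome: bool,
           reward: float = 1.0, slash_rate: float = 0.25) -> Dict[str, float]:
    """Return updated stakes after one verification round.

    Validators that voted with the final outcome earn a flat reward.
    The rest lose slash_rate of their stake. Both values are hypothetical.
    """
    new_stakes = {}
    for name, stake in stakes.items():
        if votes[name] == outcome:
            new_stakes[name] = stake + reward
        else:
            new_stakes[name] = stake * (1 - slash_rate)
    return new_stakes


stakes = {"a": 100.0, "b": 100.0, "c": 100.0}
votes = {"a": True, "b": True, "c": False}
print(settle(stakes, votes, outcome=True))
# {'a': 101.0, 'b': 101.0, 'c': 75.0}
```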

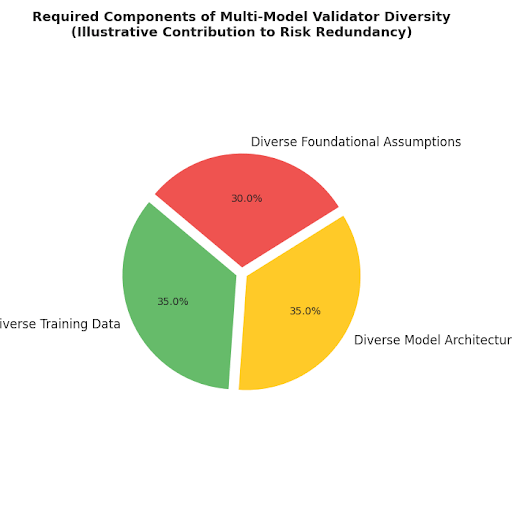

Now, does blockchain magically guarantee truth? No. And anyone who says that doesn’t understand blockchains. Consensus guarantees agreement, not correctness. If the validator pool shares the same blind spots, you can still get correlated errors. This is where things get tricky. Diversity among validators becomes critical. Different training data. Different architectures. Different assumptions.

Otherwise, you just get a crowd confidently wrong together.
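
A little arithmetic shows why. Assume an idealized setup: every claim is true or false, each validator is right 80% of the time, and a simple majority decides. The 80% figure and the `majority_correct` helper are my assumptions for illustration, not measured numbers.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """Probability that a majority of n independent validators is right,
    when each one is right with probability p (use an odd n to avoid ties)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k)
               for k in range(n // 2 + 1, n + 1))


# Five validators whose errors are independent of each other.
independent = majority_correct(5, 0.8)

# Five copies of the same model always agree, so they act like one validator.
correlated = majority_correct(1, 0.8)

print(round(independent, 4), round(correlated, 4))
# 0.9421 0.8
```

Five truly independent checkers lift accuracy from 80% to about 94%. Five clones of one model lift it to exactly nothing. Real validators sit somewhere in between, which is why diversity is doing the heavy lifting here, not the headcount.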

But let’s talk about why this matters beyond theory.

Think about autonomous trading agents operating in decentralized finance. These agents analyze data, execute strategies, move capital automatically. If one hallucinated assumption slips through — say, incorrect macro data — the system could deploy capital based on fiction. That’s not hypothetical. Automated systems amplify small mistakes.

Or healthcare. AI already assists radiologists. Doctors don’t just need “probably correct.” They need traceability. They need audit trails. Regulators demand accountability. A decentralized verification log anchored on-chain gives institutions something they can inspect. That changes the compliance conversation.

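Legal tech? Same issue. AI tools review contracts and analyze case law. If a model fabricates a precedent, the consequences are serious, and it has happened: in 2023 a US federal court sanctioned lawyers who filed a brief citing cases that ChatGPT had invented. Multi-model validation reduces the risk of relying on a single model’s say-so.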

And robotics. As general-purpose robotic agents evolve, decision pipelines must become reliable. A hallucinated object classification in a warehouse robot isn’t funny. It’s operational risk.

Here’s what I like about Mira’s approach: it accepts that AI won’t be perfect. Instead of betting everything on model improvement, it designs an economic and technical system around verification. Redundancy plus incentives plus transparency.

It’s pragmatic.

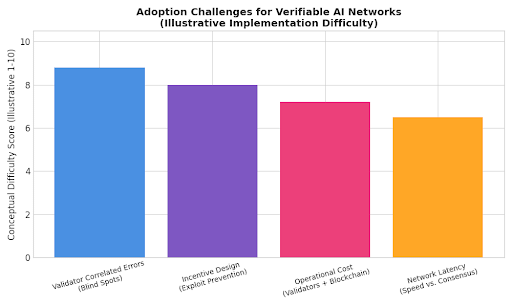

But I’ll be honest — this won’t be easy.

Latency becomes a problem. Consensus takes time. Some environments require near-instant responses. If verification slows execution too much, adoption suffers. Cost also matters. Running multiple AI validators plus blockchain infrastructure isn’t cheap. The economics must make sense.

And incentive design? That’s a battlefield. Crypto has shown us over and over that poorly designed reward systems get exploited. If validators find ways to game scoring metrics, reliability collapses.

So yes, risks exist.

Still, zoom out.

AI agents are starting to operate in real economic environments. Zero-knowledge proofs for machine learning execution are gaining research attention. Governments are drafting AI regulations focused on accountability and transparency. Enterprises want explainability. Nobody wants black-box systems making high-stakes decisions without audit trails.

Trust is shifting from “we promise it works” to “here’s the proof.”

That shift feels structural. Not hype-driven.

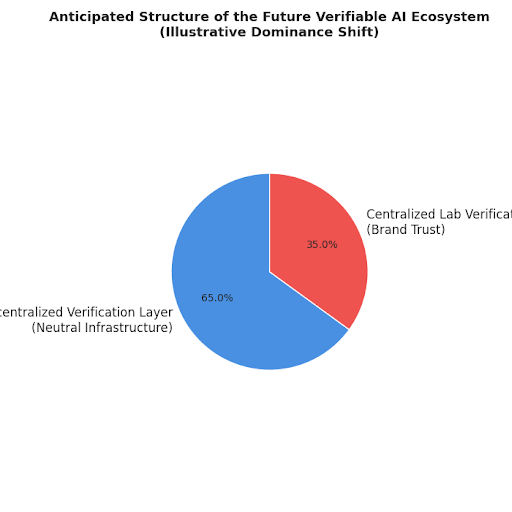

There are two futures I see. In one, centralized AI labs control both generation and verification. They tell you their systems work, and you trust their brand. In the other, decentralized verification layers emerge as neutral infrastructure. AI models plug in. Validators compete. Proof becomes public.

Mira leans into that second world.

Will it win? I don’t know. Execution matters. Validator diversity matters. Scalability matters. Integration partnerships matter. But the core idea — that AI outputs need verifiable consensus before autonomous deployment — makes sense.

AI is moving from assistant to actor. That’s a big jump. When systems start acting independently (trading, diagnosing, allocating), raw intelligence isn’t enough. You need verification.

And here’s my bias: I don’t trust single points of failure. I’ve watched too many centralized systems break under pressure. Layered redundancy works better. Always has.

So whether Mira becomes dominant infrastructure or just an early iteration, the direction feels inevitable. The future of AI won’t just belong to whoever generates the smartest text. It’ll belong to whoever can prove the output holds up.

Fluency impresses people. Proof builds systems.

And systems are what run the world.
