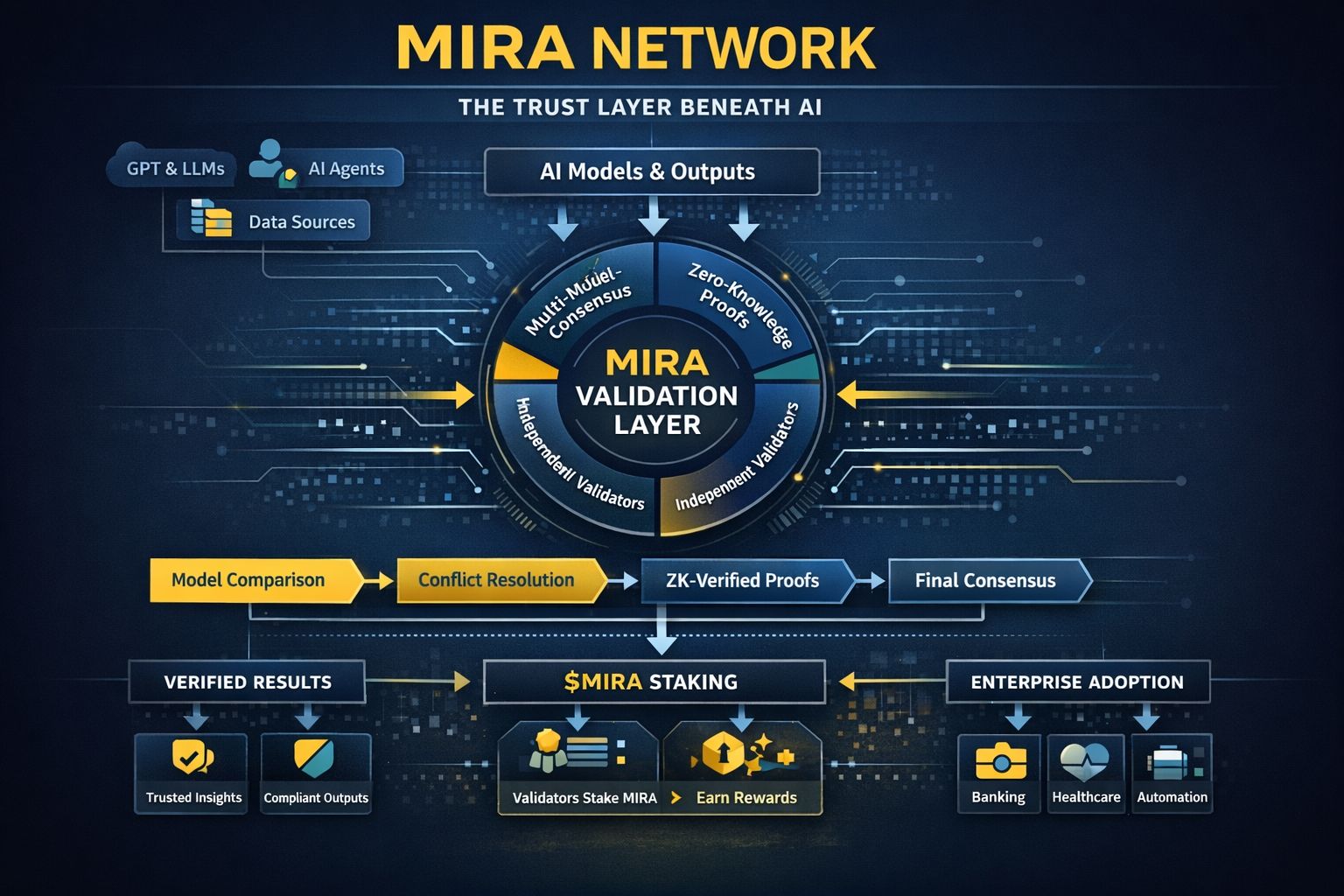

For years, the world treated AI hallucination as a small error, something that could be fixed with more training data or more powerful models. But as AI systems moved into finance, health, security, enterprise automation, and government operations, it became clear that hallucination wasn’t a bug. It was a structural threat. The problem was never just that AI gets facts wrong. The real problem is that there is no independent system that proves what AI says is actually true. Mira Network is built to solve exactly this issue, and it approaches the problem from a completely different angle. Instead of trying to make a single model more accurate, Mira introduces multi-model consensus, zero-knowledge-backed verification, and an entirely new trust layer beneath AI models. In this architecture, hallucination is not “fixed.” It is defeated through outvoting and independent validation, turning AI output into verifiable, auditable, and tamper-resistant information.

Mira sits between the model layer and the application layer, making it a neutral verification engine that can support any AI model, any agent system, any enterprise workflow, and any data pipeline. This sets Mira apart from most other AI projects. It isn’t competing with models like GPT, Claude, or Gemini. It acts as a trust layer for them, verifying their outputs, analyzing inconsistencies, flagging conflicts, and securing the truth layer of the entire AI stack before applications act on the result. As AI becomes more deeply integrated into critical systems, this trust layer becomes necessary, not optional. A financial model that hallucinates a risk score, a healthcare system that misinterprets a diagnosis, or an autonomous agent that executes the wrong instruction creates real-world damage. AI cannot operate safely at scale without a verification architecture that catches errors before they become decisions.

This is the role Mira is claiming in the global AI ecosystem. When multiple AI models evaluate the same claim, Mira compares their responses, ranks them, and calculates a consensus score. If the models disagree, Mira flags the conflict. If the models align, Mira generates a verified output. This cycle is protected using cryptographic proofs, so validation can happen without exposing sensitive data. As more enterprises adopt AI, demand for this type of verification layer grows quickly. The world is shifting from “AI that generates” to “AI that can be trusted.” Generation is optional; verification is essential. Mira is building infrastructure for the second category.
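The compare-and-score cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira's actual protocol: the `consensus` function, the `ConsensusResult` type, the simple majority-share score, and the two-thirds threshold are all hypothetical, and the cryptographic proof step is left out entirely.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class ConsensusResult:
    verdict: Optional[str]  # the agreed verdict, or None when models conflict
    score: float            # share of models backing the most common verdict
    verified: bool          # True when the score reaches the threshold


def consensus(verdicts: Dict[str, str], threshold: float = 2 / 3) -> ConsensusResult:
    """Score agreement among model verdicts on a single claim.

    `verdicts` maps a model name to its verdict (for example "true" or "false").
    The score and threshold are assumptions made for this sketch.
    """
    if not verdicts:
        raise ValueError("at least one model verdict is required")
    counts = Counter(verdicts.values())
    top_verdict, top_votes = counts.most_common(1)[0]
    score = top_votes / len(verdicts)
    verified = score >= threshold
    # A conflict is reported as an unverified result with no verdict attached.
    return ConsensusResult(top_verdict if verified else None, score, verified)


if __name__ == "__main__":
    agree = consensus({"model_a": "true", "model_b": "true", "model_c": "true"})
    split = consensus({"model_a": "true", "model_b": "false", "model_c": "unsure"})
    print(agree)
    print(split)
```

In this sketch, unanimous models produce a verified verdict, while a three-way split falls below the threshold and is returned as a flagged conflict.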

The $MIRA token secures this entire process. Validators stake $MIRA to participate in the consensus cycle, earning rewards for accurate validation and facing slashing risks for dishonest behavior. This ensures that verification remains economically secure, trustworthy, and aligned with real-world requirements. As more enterprises and agent systems rely on verified AI output, the validation load increases, and with it, the importance of staking grows. This turns $MIRA into the economic engine behind the trust layer of AI, powering a new “validation economy” where accuracy and integrity have measurable value.
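To make the incentive loop concrete, here is a minimal sketch of one settlement round. Everything in it is an assumption for illustration: the `Validator` class, the `settle_round` function, the flat reward, the 10% slash fraction, and the shortcut of treating a vote that differs from the accepted verdict as slashable. The article does not specify how Mira detects dishonest behavior or sizes rewards and penalties.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: float  # amount of staked tokens (hypothetical units)


def settle_round(
    validators: List[Validator],
    votes: Dict[str, str],
    accepted: str,
    reward: float = 1.0,
    slash_fraction: float = 0.1,
) -> None:
    """Reward validators whose vote matches the accepted verdict, slash the rest.

    Assumption: disagreement with the accepted verdict stands in for
    "dishonest behavior"; a real network would need a stricter test.
    """
    for validator in validators:
        if votes[validator.name] == accepted:
            validator.stake += reward
        else:
            validator.stake -= validator.stake * slash_fraction


if __name__ == "__main__":
    pool = [Validator("alice", 100.0), Validator("bob", 100.0)]
    settle_round(pool, {"alice": "true", "bob": "false"}, accepted="true")
    for validator in pool:
        print(validator.name, validator.stake)
```

The design point is that a validator's expected payoff depends on validating accurately, which is what ties the token to the quality of verification.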

AI agents represent the next major technological shift, and they amplify the importance of Mira. Agents can write emails, automate workflows, move funds, analyze documents, execute trades, and perform research without human supervision. But agents also hallucinate, misunderstand, or misinterpret context. Before executing any important task, an agent can route its decision through Mira: “Is this correct?”, “Is this compliant?”, “Is this safe?”, “Does this follow the rules?” This transforms agents from unpredictable tools into reliable digital workers capable of operating in regulated, high-stakes environments. Without verification, agents remain risky experiments. With Mira, they become enterprise-ready.
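The routing pattern described above, where an agent asks its questions before acting, might look like the following sketch. The `guarded_execute` function and the check names are hypothetical, and each check is a local stand-in for what would be a call to a verification network; no real Mira API is shown or implied.

```python
from typing import Any, Callable, Dict

# A check takes a plain-text description of the planned action and
# returns True when the action passes.
Check = Callable[[str], bool]


def guarded_execute(
    action: Callable[[], Any],
    description: str,
    checks: Dict[str, Check],
) -> Dict[str, Any]:
    """Run `action` only if every verification check passes."""
    failed = [name for name, check in checks.items() if not check(description)]
    if failed:
        # The action is never invoked when any check fails.
        return {"executed": False, "failed_checks": failed}
    return {"executed": True, "result": action()}


if __name__ == "__main__":
    # Stand-in checks; a real agent would query an external verifier here.
    checks = {
        "is_correct": lambda text: "transfer" in text,
        "is_compliant": lambda text: "sanctioned" not in text,
    }
    outcome = guarded_execute(
        action=lambda: "funds moved",
        description="transfer 50 USD to a verified vendor",
        checks=checks,
    )
    print(outcome)
```

Because the action is passed in as a callable, a failed check blocks it before any side effect occurs, which is the property that matters for tasks such as moving funds or executing trades.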

Another major strength of Mira is knowledge integrity. The network processes billions of words per day and cross-checks massive pools of structured knowledge, including a significant portion of Wikipedia-level content. This makes Mira a defender of information truthfulness. If two sources contradict each other, Mira detects it. If a model fabricates information, Mira exposes it. If a dataset carries bias or manipulation, Mira surfaces it. In an internet landscape where misinformation spreads faster than ever, a verification network becomes not just useful but essential infrastructure. This positions Mira as a global AI integrity layer, maintaining consistency across knowledge ecosystems.

Enterprises adopting AI today face compliance risks, privacy requirements, missing audit trails, and legal liabilities when an AI system makes an unverified decision. Mira addresses these challenges directly. It adds traceability, transparency, privacy-friendly verification, and accountability to every AI-driven output. This is why Mira is increasingly viewed as the foundation of enterprise AI adoption. Companies are no longer asking, “How do we use AI?” They’re asking, “How do we trust AI?” And Mira is the first large-scale answer to that question.

The deeper you look at the evolution of AI infrastructure, the clearer one thing becomes: the most powerful layer will not be the model layer. It will be the layer that determines which information can be trusted. That is where real control and real influence will exist. Mira is building exactly that layer. It doesn’t matter which model becomes the smartest; all models will eventually rely on a verification system when operating inside critical industries. In the same way blockchains became the trust layer for digital value, Mira is becoming the trust layer for digital intelligence.

Mira Network stands at the beginning of a new category: verifiable AI. The world no longer needs faster guesses; it needs provable truth. As AI expands into finance, governance, medicine, robotics, and autonomous systems, verification becomes the foundation of safety. Mira is building this foundation with multi-model consensus, cryptographic validation, enterprise-grade architecture, and an economic system secured by $MIRA.

The next decade of AI won’t be defined by who builds the biggest model, but by who builds the infrastructure that determines what can be trusted. Mira is positioning itself to become that infrastructure. And as AI becomes the operating system of the global economy, the network that verifies AI becomes one of the most important layers of all.