When I first dove seriously into using AI tools, I was blown away by how effortlessly everything came together. The responses felt incredibly smooth: clean, well-structured, and delivered with zero hesitation or awkward pauses. It almost seemed too good to be true. But after months of regular use, a nagging issue emerged that overshadowed the occasional factual slip-up: the unshakable confidence the models projected even when they were flat-out wrong. That absolute certainty, paired with hidden errors, made problems harder to spot and eroded my trust over time.

This realization is exactly why Mira Network began to stand out for me. Rather than pouring resources into building one ever-larger, supposedly smarter model in hopes of erasing mistakes entirely, Mira takes a different path. It focuses on making AI outputs verifiable from the ground up, creating a system where reliability comes from collective checking instead of individual perfection.

Most AI setups today follow a straightforward, one-way flow: you input a query, the model generates an answer, and then the burden falls squarely on you, the user, to decide whether to accept it at face value or spend time double-checking facts manually. That approach works fine for casual chats or low-stakes brainstorming, but it quickly falls apart as AI takes on more serious responsibilities. When systems start managing financial transactions, executing complex workflows autonomously, producing research that shapes business or policy decisions, or even assisting in high-risk fields like healthcare or law, simply hoping the output is “probably correct” no longer suffices. Manual verification doesn’t scale, and blind trust becomes dangerous. The responsibility can’t stay with the end user forever.

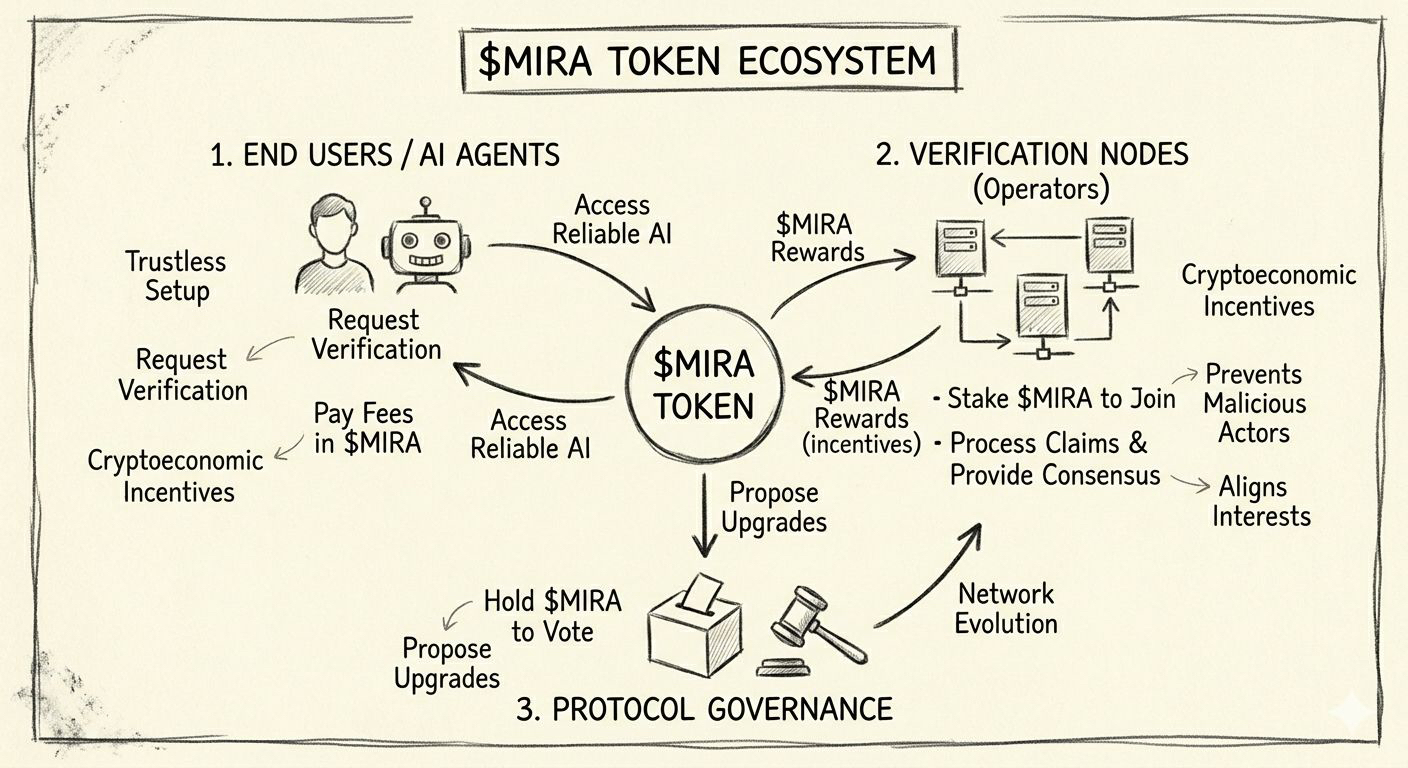

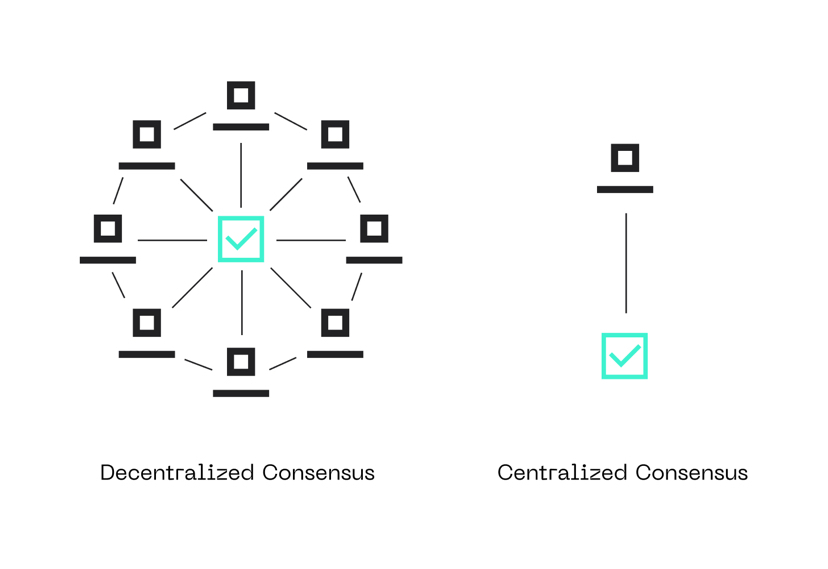

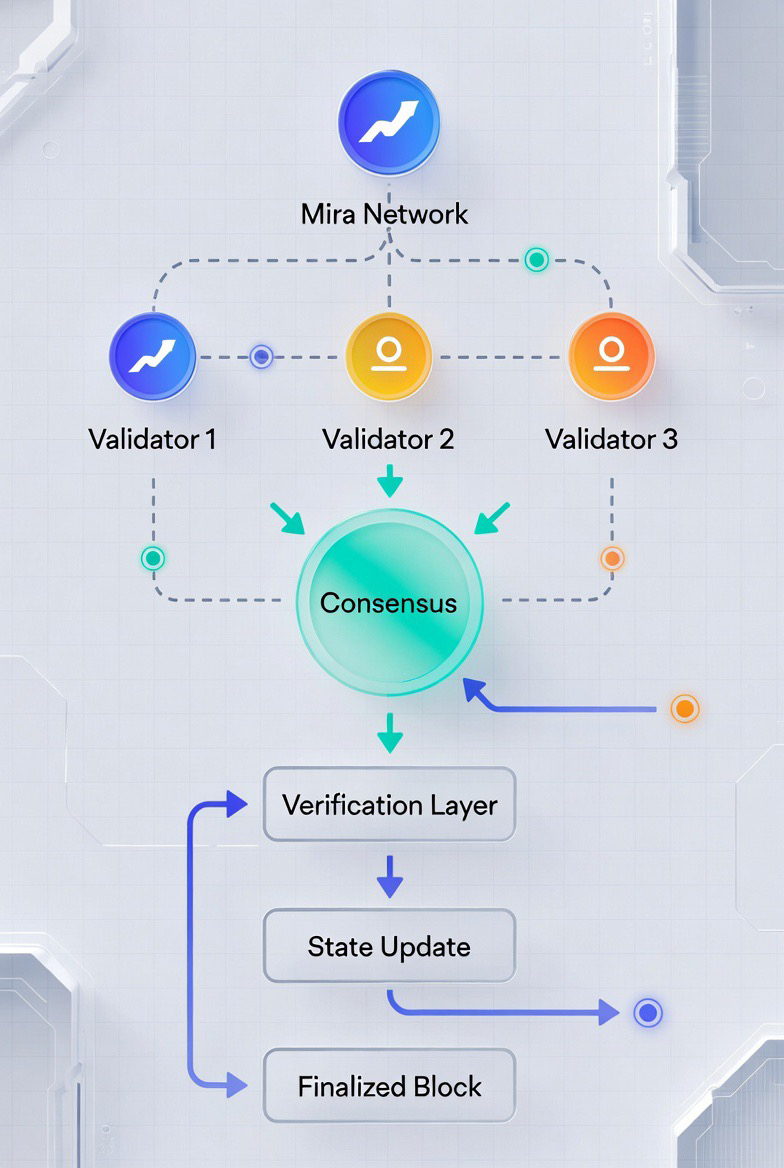

Mira flips this dynamic on its head. When an AI generates content, whether a long report, a strategic analysis, or a set of recommendations, the system breaks it down into smaller, discrete claims. These are specific, testable statements that can stand on their own (for example, “Company X reported $Y revenue in Q3 2025” or “Study Z found no correlation between A and B”). Each claim is then distributed across a decentralized network of independent validators. These validators are typically different AI models running on varied architectures, trained on diverse datasets, and operated by separate entities. As a result, they bring multiple perspectives and reduce the risk of shared blind spots or biases.
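The Python sketch below makes the decomposition-and-distribution step concrete. It is not Mira's implementation, and several parts of it are assumptions:

- The post doesn't say how claims are extracted or routed. Splitting on sentence boundaries stands in for what would realistically be model-driven claim extraction.
- `Validator`, `split_into_claims`, and `assign_claims` are hypothetical names.
- The routing rule is also an assumption, chosen to illustrate the diversity point. Each claim goes to `k` validators that share no architecture and no operator.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Validator:
    # Hypothetical validator record; real node metadata is not described in the post.
    name: str
    architecture: str  # model family the validator runs
    operator: str      # entity that operates the node


def split_into_claims(text: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def assign_claims(
    claims: list[str], validators: list[Validator], k: int = 3
) -> list[tuple[str, list[Validator]]]:
    """Give each claim k validators that share no architecture and no operator."""
    plan = []
    n = len(validators)
    for i, claim in enumerate(claims):
        chosen: list[Validator] = []
        seen_arch: set[str] = set()
        seen_ops: set[str] = set()
        # Start from a different offset per claim so the load rotates across nodes.
        for j in range(n):
            v = validators[(i + j) % n]
            if v.architecture in seen_arch or v.operator in seen_ops:
                continue  # skip anything that would duplicate a perspective
            chosen.append(v)
            seen_arch.add(v.architecture)
            seen_ops.add(v.operator)
            if len(chosen) == k:
                break
        if len(chosen) < k:
            raise ValueError(f"not enough diverse validators for claim {i}")
        plan.append((claim, chosen))
    return plan
```

Run it on the two example claims above with four validators, two of which share a model family. Each claim ends up with three validators whose architectures and operators are all distinct.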

The validators evaluate their assigned claims independently, often treating the task as a structured decision (true/false, supported/unsupported, etc.). They then reach consensus through a blockchain-coordinated process. Economic incentives play a central role here: validators stake tokens (often the native $MIRA token) to participate. Honest, accurate validation earns rewards, while inaccurate or malicious behavior leads to penalties such as stake slashing. This “skin in the game” discourages lazy guessing or coordinated attacks and encourages genuine effort. The result is a transparent, auditable outcome: not just a polished answer, but a verified version backed by a cryptographic certificate that records which claims passed, how the validators voted, and the level of agreement achieved.
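The consensus-and-incentives step can be sketched the same way. The post doesn't give Mira's actual thresholds, reward amounts, or slashing rules, and it doesn't specify its certificate format. Everything here is therefore hypothetical:

- `THRESHOLD`, `REWARD_RATE`, and `SLASH_RATE` are illustrative values.
- Votes are weighted by stake.
- The "certificate" is just a SHA-256 digest over the verdict records. It is tamper-evident, but it is not a signed, on-chain record.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass

# Hypothetical parameters: the real network's values are not given in the post.
THRESHOLD = 2 / 3   # minimum stake-weighted agreement needed to settle a claim
REWARD_RATE = 0.01  # fraction of stake earned for voting with the consensus
SLASH_RATE = 0.10   # fraction of stake slashed for voting against it


@dataclass
class Node:
    name: str
    stake: float  # tokens the validator has at risk


def settle_claim(claim: str, votes: dict[str, bool], nodes: dict[str, Node]) -> dict:
    """Tally stake-weighted true/false votes on one claim, then reward or slash voters."""
    total = sum(nodes[name].stake for name in votes)
    if total <= 0:
        raise ValueError("claim has no staked votes")
    yes = sum(nodes[name].stake for name, vote in votes.items() if vote)
    agreement = max(yes, total - yes) / total
    verdict = None  # None means no consensus: nobody is rewarded or slashed
    if agreement >= THRESHOLD:
        verdict = yes > total - yes
        for name, vote in votes.items():
            rate = REWARD_RATE if vote == verdict else -SLASH_RATE
            nodes[name].stake *= 1 + rate
    return {
        "claim": claim,
        "verdict": verdict,
        "votes": dict(votes),  # records how each validator voted
        "agreement": round(agreement, 4),
    }


def certificate(records: list[dict]) -> str:
    """SHA-256 digest over the verdict records: a stand-in for a signed certificate."""
    payload = json.dumps(records, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()
```

Weighting votes by stake ties each validator's influence to what it has at risk, which is the "skin in the game" idea described above. Leaving no-consensus claims unsettled is this sketch's own choice. It avoids penalizing validators on claims that are genuinely ambiguous.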

In short, trust shifts away from relying on any single model’s output and toward a distributed, provable verification process. You’re no longer betting on one black-box system; you’re relying on a network where participants face real consequences for being wrong and are rewarded for being right.

This matters enormously as AI moves toward greater autonomy. Imagine autonomous agents handling investment portfolios, negotiating contracts, running simulations for scientific papers, or supporting medical diagnostics. In those scenarios, “mostly right” isn’t acceptable: errors can cascade into real harm. Mira explicitly accepts that hallucinations and biases won’t disappear completely, even with bigger models. Instead of pretending scale alone will solve everything, it builds infrastructure around the reality of persistent flaws. By layering verification on top, it creates a path for AI to operate safely in high-stakes environments.

Of course, the approach isn’t without hurdles. Scalability remains a concern: processing thousands of claims quickly enough for real-time applications requires clever optimization, and latency could be an issue in time-sensitive use cases. Ensuring true diversity among validators (so the network doesn’t just echo the same mainstream views) takes ongoing effort. Governance, incentive alignment, and resistance to Sybil attacks or model poisoning are all active challenges the team and community continue to tackle.

Still, the core direction feels deeply logical and necessary. Pure intelligence, no matter how advanced, can’t be safely deployed at scale without strong verification and accountability mechanisms. Mira isn’t chasing the next flashy AI breakthrough or riding the hype cycle. Instead, it’s quietly constructing the missing trust layer: the foundational infrastructure that lets increasingly autonomous AI systems act with real accountability.

For me, that shift represents the most important evolution in this space right now. As we hand over more control to machines, we need ways to confirm not just that they sound convincing, but that they’re actually reliable. Mira focuses on verifiable, auditable outputs, achieved through decentralized consensus, economic security, and collective intelligence. That focus offers a pragmatic, forward-looking answer to one of AI’s thorniest problems. It’s less about eliminating every mistake and more about making sure we can catch and correct mistakes before they cause damage. In a world racing toward greater AI autonomy, that kind of trust infrastructure isn’t optional; it’s essential.

#Mira @Mira - Trust Layer of AI $MIRA