@Mira - Trust Layer of AI

In the rapidly evolving world of AI, there's a common assumption: the model’s output is likely correct, and any mistakes can be fixed later. For everyday applications like drafting content, generating search suggestions, or creating customer support scripts, this mindset works fine. Errors are minor inconveniences, easily corrected by humans. But when AI is making decisions with real-world consequences, this assumption becomes risky.

The Stakes Are Higher in Autonomous Systems

Consider DeFi strategies executing on-chain, research agents synthesizing complex literature, or DAOs relying on AI-generated insights for governance proposals. In these high-stakes environments, “probably right” isn’t sufficient. Errors can result in financial losses, flawed research conclusions, or misguided governance decisions. The challenge isn’t that AI is inherently unreliable; it’s that measuring reliability in context is difficult. A model’s output typically arrives without a confidence signal that a third party can check, leaving stakeholders without assurance when outcomes matter most.

Closing the Verification Gap

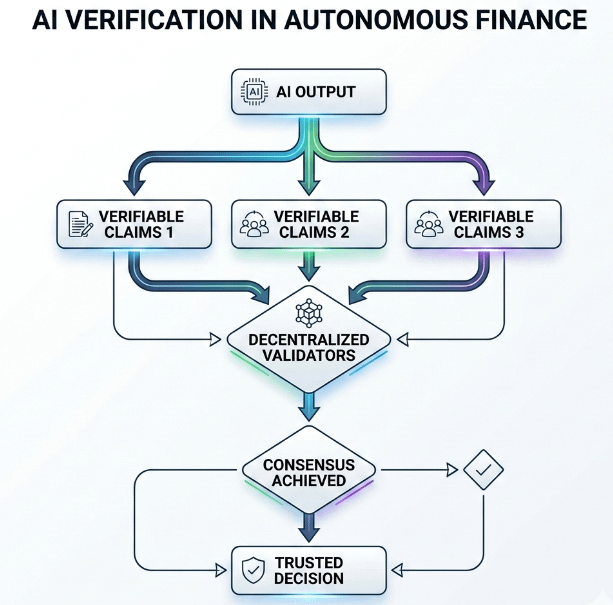

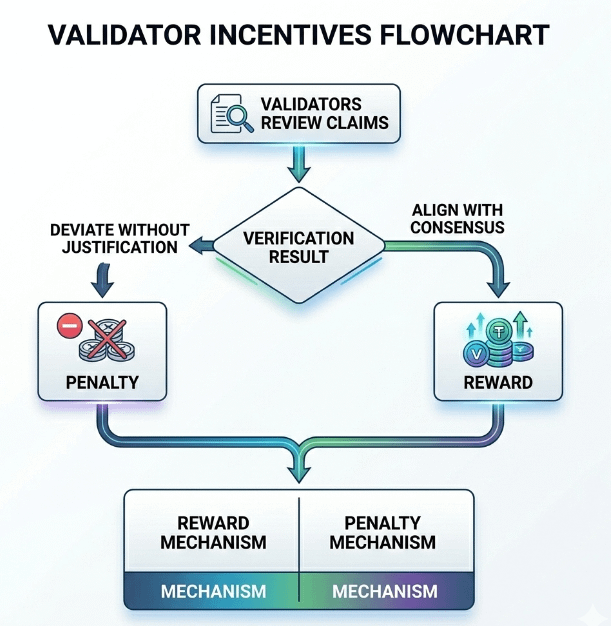

What’s needed is an external mechanism to verify AI outputs before they are used in critical decisions. Decentralized verification networks offer a promising solution. These networks break AI outputs into verifiable claims, which are independently reviewed by validators. Validators who align with consensus are rewarded, while those who deviate without justification face consequences. This incentivizes thoughtful and accurate validation, creating a trust layer that goes beyond the model itself.
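The claim-and-consensus flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira's actual protocol: the `Vote` type, the two-thirds quorum, and the flat reward and penalty amounts are all hypothetical, and the sketch ignores the "justification" a dissenting validator might offer.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    validator: str  # hypothetical validator identifier
    claim_id: int   # index of one claim extracted from the AI output
    verdict: bool   # True = the validator judged the claim correct


def tally(votes, quorum_num=2, quorum_den=3):
    """Return {claim_id: verdict}, or None where no verdict reaches the quorum.

    The 2/3 quorum is an assumption made for this sketch.
    """
    by_claim = {}
    for v in votes:
        by_claim.setdefault(v.claim_id, []).append(v.verdict)

    results = {}
    for claim_id, verdicts in by_claim.items():
        verdict, count = Counter(verdicts).most_common(1)[0]
        # Integer comparison avoids floating-point edge cases at the threshold.
        reached = count * quorum_den >= quorum_num * len(verdicts)
        results[claim_id] = verdict if reached else None
    return results


def settle(votes, results, reward=1, penalty=1):
    """Credit validators who matched consensus and debit those who did not.

    Claims without consensus leave balances unchanged.
    """
    balances = {}
    for v in votes:
        balances.setdefault(v.validator, 0)
        consensus = results[v.claim_id]
        if consensus is None:
            continue
        balances[v.validator] += reward if v.verdict == consensus else -penalty
    return balances


example_votes = [
    Vote("alice", 0, True),
    Vote("bob", 0, True),
    Vote("carol", 0, False),
]
example_results = tally(example_votes)
print(example_results, settle(example_votes, example_results))
```

A real network would also need to decide who may vote, how claims are extracted from an output, and how disputes are handled; none of that is modeled here.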

Transparency and Accountability Through Web3

The design of decentralized verification is particularly well-suited for Web3 applications. Blockchain-anchored records provide a transparent audit trail, showing who reviewed outputs, when, and what conclusions were drawn. This kind of accountability is essential for industries where trust, compliance, and governance are critical. With verifiable records, organizations can confidently rely on AI outputs, knowing they have passed rigorous scrutiny before execution.
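To make the audit-trail idea concrete, here is a small sketch of a tamper-evident review log in Python. It is not a blockchain and not Mira's record format: each entry simply stores the SHA-256 hash of the previous entry, which is the basic property that on-chain anchoring builds on. The field names (`reviewer`, `claim`, `verdict`, `timestamp`) and the helper functions are assumptions chosen for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(body: dict) -> str:
    """Hash a record deterministically by serializing it with sorted keys."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_review(log, reviewer, claim, verdict, timestamp):
    """Append who reviewed what, when, and with which conclusion."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {
        "reviewer": reviewer,
        "claim": claim,
        "verdict": verdict,
        "timestamp": timestamp,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": record_hash(body)})
    return log


def verify_log(log) -> bool:
    """Recompute every hash and check that each entry links to the one before."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or record_hash(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Because every hash depends on the previous entry, editing an earlier review after the fact makes `verify_log` fail unless every later entry is recomputed as well. Publishing the latest hash to a public chain is what would make such a rewrite detectable by outsiders.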

Why the Bottleneck Is Trust, Not Capability

AI models today are powerful enough to add value across many domains, from research to finance. The bottleneck is not capability—it’s trust. Without verification infrastructure, even the most advanced AI outputs cannot be relied upon for high-stakes decisions. Mira is building this missing accountability layer, creating a system where AI outputs are not only intelligent but defensible.

The Future of Reliable AI

The AI infrastructure stack is still developing. Compute power and model sophistication are well-established, but the accountability layer remains underdeveloped. Mira aims to fill this gap, ensuring that autonomous AI can safely power critical workflows. The ultimate question is whether markets and organizations will recognize the importance of verification proactively—or only after a high-profile failure highlights the risks of trusting AI blindly.

Building Trust in Autonomous Finance: How Mira Bridges the AI Verification Gap
