There is an idea behind most Artificial Intelligence deployments: the Artificial Intelligence model is probably correct, and if it is not, someone will catch the mistake later.

In situations where the stakes are low, this idea works. When Artificial Intelligence is used to create content, rank search results, or write customer support scripts, mistakes are annoying but not disastrous. A human reviews the output, fixes what is wrong, and moves on.

This idea becomes dangerous when Artificial Intelligence is directly embedded into systems that take action.

For example, autonomous DeFi strategies make trades on the blockchain. Research agents summarize large amounts of information that guide funding or policy decisions. Decentralized Autonomous Organizations rely on Artificial Intelligence-generated analysis to decide on governance proposals worth millions of dollars. In these situations, being "probably right" is not good enough.

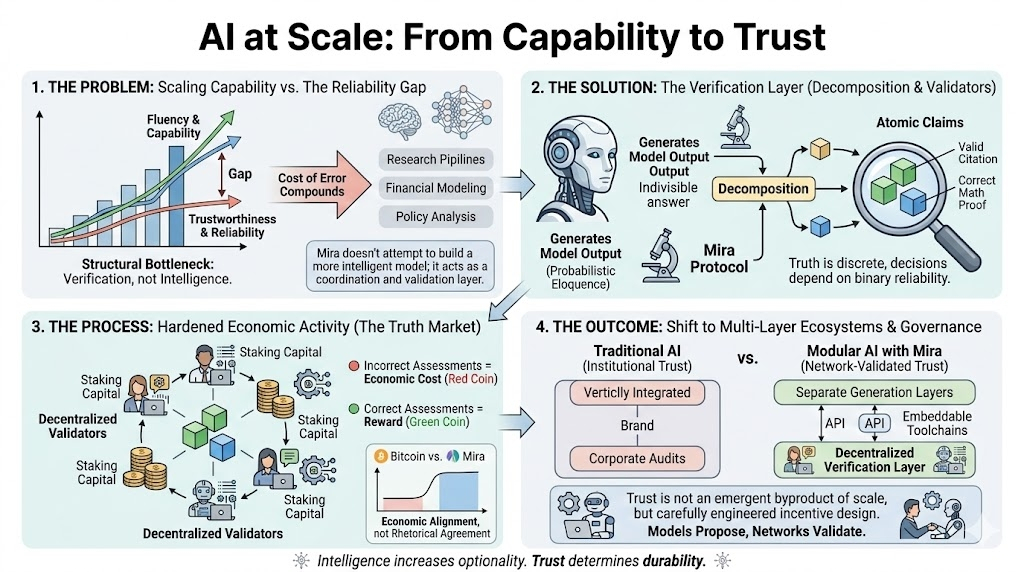

This is what we call the verification gap. It is getting wider as Artificial Intelligence capabilities improve faster than our ability to hold Artificial Intelligence accountable.

The main problem is not that Artificial Intelligence models are inherently unreliable. It is that it is hard to measure how reliable they are in any given situation. When a language model produces an output, there is no built-in signal that tells you how confident the system should be or whether the reasoning behind the output is sound.

For low-stakes use, this is acceptable. For critical systems that touch finance, governance, or autonomous agents, it is a major weakness.

What is missing is a way to review Artificial Intelligence outputs before they are trusted, acted on, or embedded into workflows.

Decentralized verification networks try to solve this problem by changing how Artificial Intelligence outputs are treated. Instead of accepting a single response as authoritative, outputs are broken down into claims that can be verified. These claims are reviewed by validators. Validators who agree with the consensus are rewarded. Those who disagree without a reason face penalties.
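The flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira Network's actual protocol: the names `Vote` and `settle_claim`, the two-thirds threshold, and the reward and penalty amounts are all hypothetical. "Disagreeing without a reason" is modeled here as a dissenting vote with an empty justification.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# All names and numbers below are hypothetical, chosen only for illustration.
REWARD = 1.0        # assumed payout for voting with the consensus
PENALTY = 2.0       # assumed stake lost for unjustified dissent
THRESHOLD = 2 / 3   # assumed share of votes needed to call a consensus


@dataclass(frozen=True)
class Vote:
    validator: str
    verdict: bool            # True means the claim was judged valid
    justification: str = ""  # dissenters may explain their reasoning


def settle_claim(votes: list[Vote]) -> tuple[Optional[bool], dict[str, float]]:
    """Return (consensus verdict or None, payout per validator)."""
    if not votes:
        return None, {}
    tally = Counter(v.verdict for v in votes)
    verdict, count = tally.most_common(1)[0]
    if count / len(votes) < THRESHOLD:
        # No consensus reached: nobody is rewarded or penalized.
        return None, {v.validator: 0.0 for v in votes}
    payouts: dict[str, float] = {}
    for v in votes:
        if v.verdict == verdict:
            payouts[v.validator] = REWARD
        elif v.justification.strip():
            payouts[v.validator] = 0.0      # reasoned dissent is not punished
        else:
            payouts[v.validator] = -PENALTY
    return verdict, payouts


votes = [
    Vote("alice", True),
    Vote("bob", True),
    Vote("carol", True),
    Vote("dave", True),
    Vote("erin", True),
    Vote("frank", False, "the source cited in the claim is outdated"),
    Vote("grace", False),
]
verdict, payouts = settle_claim(votes)
```

In this toy run, five of seven validators agree, so a consensus is reached; `frank` dissents with a reason and is left untouched, while `grace` dissents without one and is penalized. A real network would also need to weigh stake, handle collusion, and judge whether a stated reason is any good, none of which is modeled here.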

The way the incentives are designed matters. Validators are not rewarded for being fast or for simply agreeing with others. They are rewarded for validating the outputs accurately. Over time, the system encourages participants to actually review the outputs rather than just rubber-stamping them.

This model is particularly appealing for Web3 applications because it provides a record of who reviewed an output, when the review happened, and how the validators assessed it. This record is important in high-stakes governance, financial automation, and regulated environments where accountability is crucial.
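One way to picture such a record is a small append-only log in which each entry stores who reviewed a claim, when, and with what verdict. The sketch below is an assumption for illustration only: the `ReviewRecord` fields and the hash-chaining scheme are hypothetical and are not taken from Mira Network's design. Chaining each entry to the digest of the previous one is a common way to make later edits detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ReviewRecord:
    # Hypothetical fields: who reviewed, when, and how the claim was assessed.
    claim: str
    validator: str
    reviewed_at: str   # ISO 8601 timestamp supplied by the caller
    verdict: bool
    prev_hash: str     # digest of the previous record, "" for the first one

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_record(log: list, claim: str, validator: str,
                  reviewed_at: str, verdict: bool) -> ReviewRecord:
    """Append a record that commits to the digest of the one before it."""
    prev = log[-1].digest() if log else ""
    record = ReviewRecord(claim, validator, reviewed_at, verdict, prev)
    log.append(record)
    return record


def verify_log(log: list) -> bool:
    """Check that every record still points at its predecessor's digest."""
    prev = ""
    for record in log:
        if record.prev_hash != prev:
            return False
        prev = record.digest()
    return True


log: list = []
append_record(log, "Pool X fee is 0.3%", "alice", "2025-01-01T12:00:00Z", True)
append_record(log, "Pool X fee is 0.3%", "bob", "2025-01-01T12:05:00Z", True)
intact = verify_log(log)

# Rewriting an earlier verdict breaks the chain for every later record.
tampered = [replace(log[0], verdict=False), log[1]]
detected = not verify_log(tampered)
```

Note that a chain like this only protects earlier entries. The digest of the newest record would still have to be published somewhere outside the log, for example on chain, for a change to the last entry to be noticed.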

The real obstacle to Artificial Intelligence adoption is not the capability of the models. The models are already powerful enough to create value in many domains. The obstacle is the lack of trust infrastructure: whether Artificial Intelligence outputs can be defended, audited, and relied upon when something goes wrong.

Verification layers do not make Artificial Intelligence perfect. They make Artificial Intelligence defensible. They allow Artificial Intelligence outputs to withstand scrutiny instead of collapsing under it.

The Artificial Intelligence infrastructure stack is still incomplete. We have the compute layers and the model layers. The accountability layer is still underdeveloped.

Mira Network is positioning itself to fill this gap by treating verification as a part of the infrastructure rather than an afterthought.

Infrastructure projects tend to be invisible until they fail, or until they become indispensable to our workflows.

The question is not whether Artificial Intelligence verification matters. It is whether the market recognizes its importance before a major failure forces us to pay attention.

The timing of that recognition may ultimately decide which trust layers become the foundation and which remain experimental. Artificial Intelligence is a component of this, and we need to get it right.

@Mira - Trust Layer of AI #Mira $MIRA #mira