Artificial intelligence has advanced quickly in recent years, but reliability remains a persistent and uncomfortable problem. Modern AI systems can generate persuasive explanations, analyze large datasets, and automate complex tasks. At the same time, they are known to produce incorrect statements with confidence, invent information that does not exist, and reflect biases embedded in their training data. The first two failures are usually grouped under the term “hallucination,” but the issue runs deeper than isolated mistakes. It raises the question of whether AI systems can be trusted in situations where accuracy matters.

The project known as Mira Network approaches this problem from an unusual angle. Rather than attempting to build a single model that never makes errors, the protocol treats verification itself as a decentralized process. Its goal is to transform AI outputs into statements that can be examined, checked, and validated across a distributed network of independent models and participants. In this framework, reliability does not depend on trusting one system. It emerges from collective verification.

The concept reflects a broader shift in how developers think about artificial intelligence infrastructure. Instead of focusing entirely on generating answers, some projects now concentrate on showing that those answers hold up under independent checking. Mira Network sits firmly in this emerging category. Its architecture attempts to merge AI reasoning with cryptographic verification, using blockchain coordination to record and validate the process.

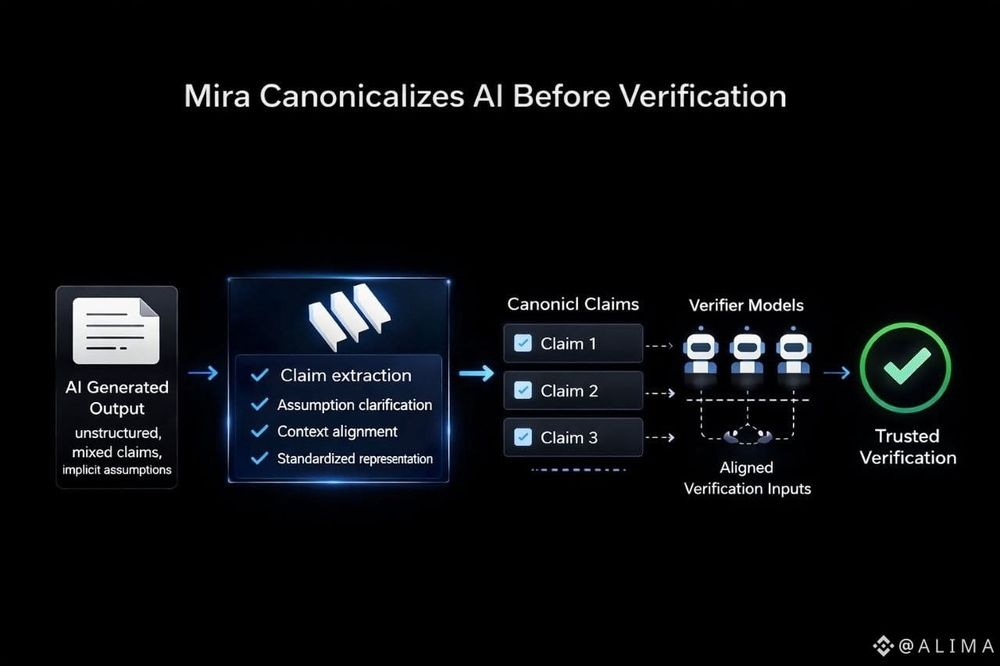

At the center of this approach is the idea that complex AI outputs can be broken down into smaller claims. When a model produces a paragraph of analysis or a structured response, that output can be decomposed into individual statements that can be evaluated independently. Some claims may involve factual references, others logical relationships, and others procedural steps in reasoning. By separating the content into discrete elements, verification becomes possible in a way that traditional AI systems do not attempt.
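To make the idea of decomposition concrete, here is a minimal Python sketch. It is not Mira's actual method: the `Claim` type and the `decompose` function are hypothetical names, and splitting on sentence boundaries is only a stand-in for whatever decomposition the protocol really performs.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int  # position of the claim within the original output
    text: str      # the statement to be checked on its own


def decompose(output: str) -> list[Claim]:
    """Naively split an output into one claim per sentence.

    Sentence splitting is an assumption made for illustration. A real
    decomposer would also need to resolve pronouns and separate
    compound statements.
    """
    # Split on whitespace that follows '.', '!' or '?'.
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


claims = decompose(
    "Water boils at 100 C at sea level. Therefore the pot will boil. Stir it twice."
)
for claim in claims:
    print(claim.claim_id, claim.text)
```

Each resulting `Claim` is small enough to be judged on its own, which is the property the rest of the verification process relies on.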

Within Mira’s framework, each claim can be examined by multiple AI models or verification agents across the network. These participants attempt to confirm whether a statement is consistent with available data, reasoning rules, or other models’ outputs. The process resembles peer review more than conventional machine learning inference. Rather than a single model deciding the answer, many independent checks converge on a result.
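The sketch below illustrates the "many independent checks converge on a result" idea in Python. Everything in it is assumed for illustration: the `verify_claim` function, the 0.6 acceptance threshold, and the three toy verifiers are hypothetical and do not come from Mira's documentation.

```python
from typing import Callable

# A verifier is any callable that maps a claim to True (supports) or False (rejects).
Verifier = Callable[[str], bool]


def verify_claim(claim: str, verifiers: list[Verifier], threshold: float = 0.6) -> dict:
    """Collect one vote per verifier and accept the claim if enough agree."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [verifier(claim) for verifier in verifiers]
    support = sum(votes) / len(votes)  # share of verifiers that support the claim
    return {"claim": claim, "support": support, "accepted": support >= threshold}


# Toy verifiers standing in for independent models.
KNOWN_FACTS = {"Paris is the capital of France."}


def strict(claim: str) -> bool:
    return claim in KNOWN_FACTS


def lenient(claim: str) -> bool:
    return "Paris" in claim


def contrarian(claim: str) -> bool:
    return False


panel = [strict, lenient, contrarian]
result = verify_claim("Paris is the capital of France.", panel)
print(result["accepted"], round(result["support"], 2))
```

Note that one dissenting verifier does not block acceptance here. The threshold, not unanimity, decides the outcome.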

Blockchain infrastructure plays a critical role in coordinating this activity. Verification steps, model responses, and consensus results are recorded on a shared ledger. This creates a transparent record of how a particular output was evaluated. Instead of presenting an answer without context, the system produces an audit trail that shows how verification occurred.
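A full blockchain is beyond a short example, but the core property of an audit trail, that earlier records cannot be changed unnoticed, can be sketched with a simple hash chain. This is an illustrative simplification in Python, not Mira's ledger format. The record fields and function names are assumptions.

```python
import hashlib
import json


def entry_hash(body: dict) -> str:
    """Hash a record deterministically by serialising it with sorted keys."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(log: list[dict], claim: str, verifier: str, verdict: bool) -> None:
    """Append a verification step that commits to the previous entry's hash."""
    prev = log[-1]["hash"] if log else "0" * 64
    body = {"claim": claim, "verifier": verifier, "verdict": verdict, "prev": prev}
    log.append({**body, "hash": entry_hash(body)})


def is_intact(log: list[dict]) -> bool:
    """Recompute every hash and link; any edited entry breaks the chain."""
    prev = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log: list[dict] = []
append_record(log, "Claim A", "model-1", True)
append_record(log, "Claim A", "model-2", True)
print(is_intact(log))      # chain verifies before tampering
log[0]["verdict"] = False  # tamper with an earlier record
print(is_intact(log))      # stored hash no longer matches the edited record
```

Because each entry includes the hash of the one before it, rewriting any past verdict invalidates every check from that point on.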

This design reflects a philosophical difference in how reliability is defined. Traditional AI research often focuses on improving model accuracy through better training data and architecture improvements. Mira Network acknowledges those efforts but places emphasis elsewhere. The protocol treats correctness as something that can be verified after generation rather than guaranteed beforehand.

The implications of this shift are subtle but important. In conventional AI applications, users often receive answers that appear authoritative but cannot easily be checked. Even when models provide references, those citations may themselves be fabricated or misinterpreted. Verification is left to the user, who may not have the time or expertise to investigate.

Mira attempts to shift that responsibility from individuals to the network itself. Verification becomes part of the infrastructure surrounding AI rather than an afterthought. If a model produces a claim, that claim enters a verification pipeline where multiple agents examine it. The result is not merely an answer but a consensus about whether the statement can be considered valid.

The token associated with the network, MIRA, functions within this system as a coordination mechanism. Participants who contribute verification work interact with the protocol through token-based incentives and governance structures. Rather than acting as a simple payment unit, the token represents participation in the verification ecosystem.

In practice, this means the token becomes part of the mechanism that motivates independent models and operators to check each other’s outputs. Verification is computational work, and the network must coordinate who performs it and how consensus is reached. The token provides the structure through which that coordination occurs.

This model introduces an interesting parallel between decentralized computing and the scientific process. In scientific research, claims are rarely accepted simply because one author states them. Evidence must be reviewed, experiments replicated, and conclusions debated before consensus forms. Mira’s architecture borrows elements of this pattern and attempts to translate them into a machine-readable environment.

The result is an AI verification layer that resembles distributed peer review. Individual models generate claims, while others attempt to confirm or challenge them. The blockchain ledger acts as the record of this interaction, preserving the reasoning steps involved in validating the information.

One of the most notable design decisions in Mira Network is its reliance on multiple AI models rather than a single system. Diversity of models becomes a feature rather than a complication. Different models may interpret data differently, apply reasoning in distinct ways, or rely on different training corpora. When several models independently confirm a statement, the likelihood of correctness increases, provided their errors are not strongly correlated. Models that share the same blind spots add far less assurance than their number suggests.
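The effect of independent confirmation can be quantified under a simplifying assumption: every verifier is right with the same probability `p`, and their errors are fully independent. Real models that share training data will not meet that assumption, so the Python sketch below shows a best case rather than a prediction. The function name `majority_correct` is hypothetical.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """Probability that more than half of n independent verifiers are right,
    when each one is right with probability p (binomial model)."""
    needed = n // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(needed, n + 1))


for n in (1, 3, 5, 9):
    print(n, round(majority_correct(n, 0.8), 4))
```

With `p` above 0.5 the majority becomes more reliable as `n` grows. With `p` below 0.5 the same formula shows the opposite, which is why the quality of individual verifiers still matters.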

This concept mirrors ideas from ensemble learning, where combining multiple models often produces more reliable results than relying on one. Mira extends that principle beyond prediction accuracy and into the domain of verification. Instead of simply averaging outputs, the network evaluates claims across a distributed set of evaluators.

Another aspect of the protocol involves claim decomposition. Complex reasoning is often difficult to verify in a single step. A long explanation may contain several assumptions and intermediate conclusions. By dividing outputs into atomic claims, the system allows each step to be checked independently.

This process also helps reveal where uncertainty exists. If most claims within an output are verified but one remains contested, the system can highlight the specific point of disagreement. That transparency contrasts with conventional AI systems that present conclusions without showing where reasoning might be fragile.
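A small Python sketch shows how per-claim voting can surface the exact point of disagreement. The status labels, the `classify` function, and the 0.75 and 0.25 cut-offs are assumptions chosen for illustration, and the ballots are made-up example data.

```python
def classify(votes: list[bool], accept_at: float = 0.75, reject_at: float = 0.25) -> str:
    """Label a claim by the share of verifiers that supported it."""
    support = sum(votes) / len(votes)
    if support >= accept_at:
        return "verified"
    if support <= reject_at:
        return "rejected"
    return "contested"


# Votes per claim from five hypothetical verifiers.
ballots = {
    "The dataset has 10,000 rows.": [True, True, True, True, True],
    "Column B is normally distributed.": [True, False, True, False, False],
    "The mean of column A is 4.2.": [True, True, True, True, False],
}

report = {claim: classify(votes) for claim, votes in ballots.items()}
contested = [claim for claim, status in report.items() if status == "contested"]
print(contested)
```

Only the claim about the distribution is flagged, so a reader knows which part of the output deserves a closer look while the rest stands as verified.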

From a technical perspective, this layered verification architecture raises interesting questions about scalability and efficiency. Verification requires computational resources and coordination among participants. The network must determine how many models should examine a claim, how consensus thresholds are defined, and how conflicting results are resolved.

Mira’s architecture addresses these issues through structured verification rounds and cryptographic commitments that ensure participants cannot alter their evaluations after seeing others’ responses. These mechanisms help maintain fairness and prevent manipulation within the verification process.
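One common way to build such commitments is a commit-reveal scheme, sketched below in Python. The source does not specify which construction Mira uses, so treat this as a generic illustration: the function names, the 16-byte salt, and the choice of SHA-256 are all assumptions.

```python
import hashlib
import hmac
import secrets


def commit(verdict: bool, salt: bytes) -> str:
    """Commitment = SHA-256 over a random salt followed by one verdict byte."""
    return hashlib.sha256(salt + (b"\x01" if verdict else b"\x00")).hexdigest()


def reveal_is_valid(commitment: str, verdict: bool, salt: bytes) -> bool:
    """Check that a revealed verdict and salt match the earlier commitment."""
    return hmac.compare_digest(commitment, commit(verdict, salt))


# Phase 1: the verifier publishes only the commitment, which hides the verdict.
salt = secrets.token_bytes(16)
published = commit(True, salt)

# Phase 2: once all commitments are in, the verifier reveals verdict and salt.
print(reveal_is_valid(published, True, salt))   # honest reveal matches
print(reveal_is_valid(published, False, salt))  # a changed verdict does not
```

The random salt matters: with only two possible verdicts, an unsalted hash could be reversed by simply hashing both options and comparing.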

The presence of a public ledger adds another dimension to the system. Because verification records are stored on-chain, they become auditable artifacts. Anyone examining a verified output can inspect the chain of checks that led to the consensus result. This transparency attempts to address a longstanding criticism of AI systems, which are often described as opaque or difficult to interpret.

Interpretability has traditionally been approached through model introspection techniques such as attention visualization or gradient analysis. Mira Network approaches interpretability differently. Rather than explaining how a model reached its conclusion internally, the protocol shows how multiple agents externally evaluated the claims within that conclusion.

This distinction may appear subtle, but it reflects a different philosophy about trust. Instead of requiring users to understand the inner workings of a model, the system provides evidence that independent verification occurred. Trust arises from process rather than insight into internal parameters.

Another notable feature of the network is its attempt to remain model-agnostic. The protocol does not require participants to use a specific AI architecture or training method. Any model capable of evaluating claims can theoretically contribute to the verification process. This flexibility allows the network to incorporate a wide variety of AI systems over time.
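In software terms, model-agnosticism usually means the network depends on an interface rather than an implementation. The Python sketch below uses a structural `Protocol` to express that. The interface name `ClaimVerifier`, its `evaluate` method, and both toy verifiers are hypothetical and not part of any published Mira API.

```python
from typing import Protocol, runtime_checkable


@runtime_checkable
class ClaimVerifier(Protocol):
    """Anything with an evaluate(claim) -> bool method can take part."""

    def evaluate(self, claim: str) -> bool: ...


class KeywordVerifier:
    """Toy rule-based verifier with no model behind it."""

    def __init__(self, required: str) -> None:
        self.required = required

    def evaluate(self, claim: str) -> bool:
        return self.required.lower() in claim.lower()


class LengthVerifier:
    """Toy verifier with a completely different internal strategy."""

    def evaluate(self, claim: str) -> bool:
        return len(claim.split()) >= 3


def run_all(claim: str, verifiers: list[ClaimVerifier]) -> list[bool]:
    """Ask every verifier for a verdict without caring how it is produced."""
    return [v.evaluate(claim) for v in verifiers]


verifiers = [KeywordVerifier("ledger"), LengthVerifier()]
print(all(isinstance(v, ClaimVerifier) for v in verifiers))
print(run_all("The ledger records each vote.", verifiers))
```

Neither toy class inherits from `ClaimVerifier`. They qualify simply by exposing the right method, which is the kind of loose coupling a model-agnostic network would need.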

The design also recognizes that no model is perfectly reliable. By treating each participant as a potential verifier rather than an unquestioned authority, the network distributes responsibility across many agents. Errors from one system can be caught by others, reducing the impact of individual mistakes.

In many ways, Mira Network represents an attempt to build infrastructure around AI rather than replacing existing models. Large language models, specialized reasoning engines, and domain-specific AI tools can all generate outputs that enter the verification pipeline. The protocol acts as a layer that examines those outputs rather than producing them directly.

This layered approach reflects the growing complexity of the AI ecosystem. As models become more capable, the consequences of their errors also become more significant. Systems used in fields such as research, policy analysis, or technical design may require stronger guarantees of correctness than traditional AI interfaces provide.

Mira’s response is to treat verification as a shared responsibility across a decentralized network. Instead of relying on a centralized authority to certify AI outputs, the protocol attempts to coordinate verification through distributed consensus mechanisms.

The architecture does not claim that AI errors can be eliminated entirely. Rather, it proposes that errors can be systematically identified and filtered through collaborative verification. The distinction is important. Reliability emerges from repeated checking rather than perfect generation.

Viewed through a broader lens, Mira Network illustrates how blockchain infrastructure continues to intersect with artificial intelligence in unexpected ways. Early discussions of blockchain often focused on financial applications or digital ownership. In this case, the ledger functions as a coordination layer for evaluating machine-generated knowledge.

This combination of technologies highlights a shared theme between decentralized systems and AI governance debates. Both fields grapple with questions about trust, accountability, and transparency in complex digital environments. Mira attempts to address these questions by embedding verification directly into the architecture of AI outputs.

Whether this approach becomes widely adopted remains an open question, but the conceptual shift is notable. Instead of assuming that intelligent systems must eventually become perfectly reliable, the protocol acknowledges uncertainty and builds mechanisms to manage it.

In practical terms, this perspective reframes how artificial intelligence might operate within collaborative digital networks. Outputs are no longer isolated responses generated by a single model. They become structured claims that pass through layers of scrutiny before being accepted as verified information.

For observers of AI infrastructure, Mira Network provides an example of how verification can be treated as a first-class component of intelligent systems. The protocol suggests that reliability may not come solely from building better models, but also from designing systems that continuously examine and challenge their outputs.

Within that framework, the role of the MIRA token becomes intertwined with the network’s verification logic. It supports the coordination of participants who perform the work of checking claims and maintaining consensus. The token does not define the network’s purpose, but it helps sustain the processes that allow verification to occur.

Ultimately, the significance of Mira Network lies less in a single technical innovation and more in the perspective it represents. Artificial intelligence systems have become powerful generators of information, but generation alone does not guarantee reliability. By focusing on decentralized verification, Mira attempts to transform how trust is established in machine-generated knowledge.

The protocol’s architecture invites a broader reflection on the future of AI systems. If intelligent models continue to expand their role in decision-making environments, mechanisms for verifying their outputs may become just as important as the models themselves. Mira Network positions verification as the foundation upon which trustworthy AI interactions might be built.
