There is a strange moment many of us have experienced. An AI gives an answer that sounds perfect. Calm. Confident. Complete. And yet something inside us hesitates. We pause, reread it, and quietly wonder: is this actually true? That hesitation is not fear of technology. It is the instinct to protect ourselves from being misled. Today’s AI speaks with certainty, but certainty without truth is dangerous. The more powerful AI becomes, the more painful its mistakes feel, because they no longer stay on screens. They touch real lives.

AI was never built to understand the world the way humans do. It predicts. It guesses. It fills gaps based on patterns. Most of the time, that is enough. But in moments that matter (healthcare decisions, financial choices, legal interpretations, autonomous systems), “mostly right” is not safe. One hallucinated fact can cause irreversible harm. One biased output can quietly change a person’s future. And humans cannot realistically review every AI decision forever. The world is moving too fast for trust to rest on manual checks.

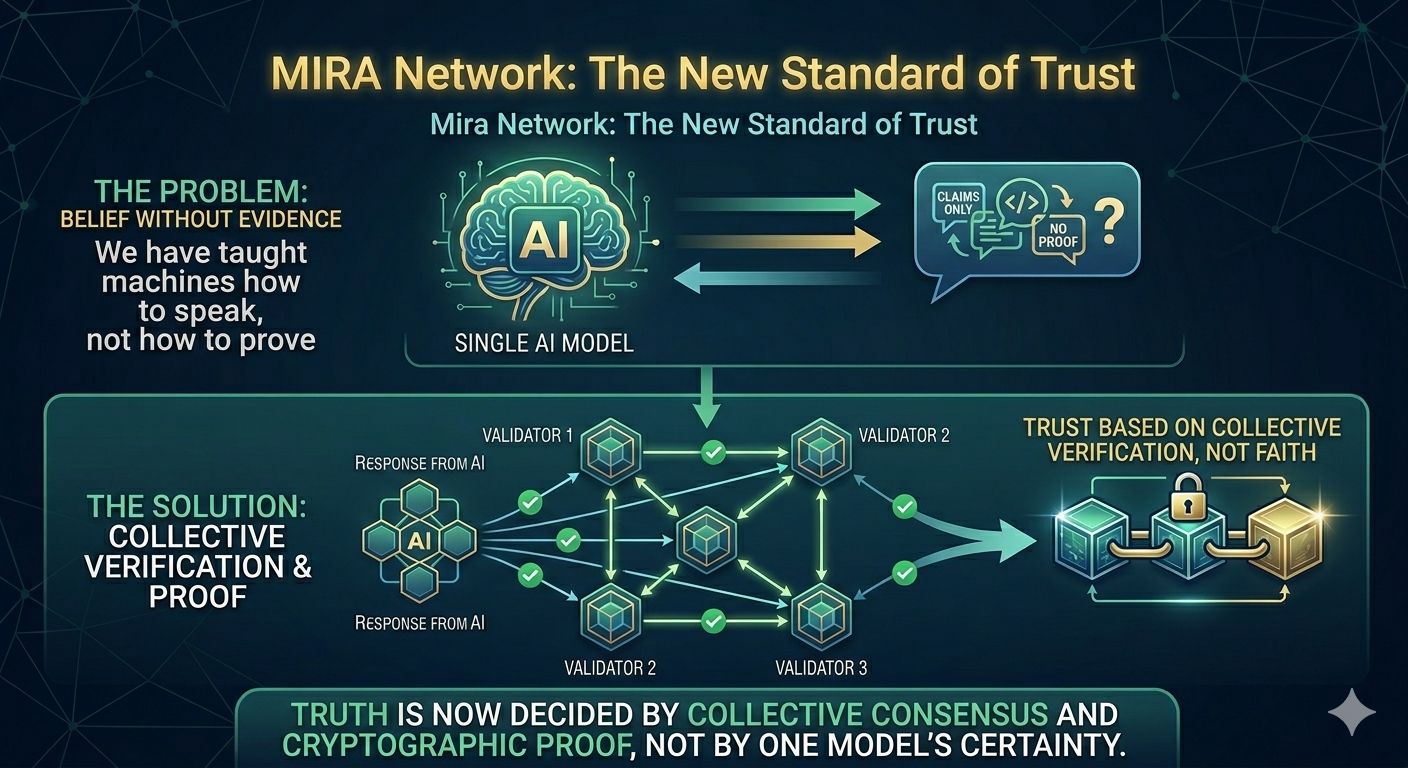

This is where the real problem lives. Not in intelligence, but in belief. We have taught machines how to speak, but not how to prove. We assumed better models would solve the issue, but bigger brains do not guarantee honest answers. What was missing was a way for AI to say, “Here is why you can trust me,” and back it up with evidence rather than confidence.

Mira Network was born from that gap. Instead of asking users to trust a single AI voice, it asks multiple independent systems to check each other. Every response is broken down into individual claims, and those claims are verified across a decentralized network. Truth is no longer decided by one model’s certainty but by collective agreement. When enough independent validators reach the same conclusion, that result is sealed with a cryptographic proof. It cannot be quietly altered. It cannot be faked. It can be checked, traced, and trusted.
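To make the idea concrete, here is a toy sketch in Python. It is not Mira's implementation. The post does not describe how claims are extracted, how validators are chosen, or what the proof looks like, so every name here is a hypothetical stand-in. That includes `split_into_claims`, `certify`, `check_certificate`, and the two-thirds `THRESHOLD`.

The sketch works in four steps:

- A response is split into one claim per sentence.
- Each claim is put to a panel of validators.
- A claim passes only with a supermajority.
- The verdicts are sealed with a SHA-256 digest that anyone can recompute.

```python
import hashlib
import json
from typing import Callable, Dict, List

# A validator is modelled as any function that judges a single claim.
# (Hypothetical interface; in a real network these would be independent AI models.)
Validator = Callable[[str], bool]

THRESHOLD = 2 / 3  # hypothetical supermajority needed to accept a claim


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator]) -> bool:
    """Accept a claim only if at least THRESHOLD of the validators support it."""
    votes = [judge(claim) for judge in validators]
    return sum(votes) / len(votes) >= THRESHOLD


def digest(verdicts: Dict[str, bool]) -> str:
    """SHA-256 over a canonical (key-sorted) JSON encoding of the verdicts."""
    payload = json.dumps(verdicts, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def certify(response: str, validators: List[Validator]) -> Dict:
    """Verify every claim in a response and seal the verdicts with a digest."""
    verdicts = {c: verify_claim(c, validators) for c in split_into_claims(response)}
    return {"verdicts": verdicts, "digest": digest(verdicts)}


def check_certificate(cert: Dict) -> bool:
    """Recompute the digest; editing any verdict breaks the match."""
    return digest(cert["verdicts"]) == cert["digest"]


# Toy panel: two validators share a small fact base, a third rejects everything.
FACTS = {"Paris is the capital of France"}


def knows_facts(claim: str) -> bool:
    return claim in FACTS


def rejects_all(claim: str) -> bool:
    return False


validators: List[Validator] = [knows_facts, knows_facts, rejects_all]

cert = certify("Paris is the capital of France. The Moon is made of cheese.", validators)
print(cert["verdicts"])
# {'Paris is the capital of France': True, 'The Moon is made of cheese': False}
print(check_certificate(cert))  # True

cert["verdicts"]["The Moon is made of cheese"] = True  # quiet tampering
print(check_certificate(cert))  # False
```

Flip any verdict after the fact and the digest no longer matches. One caveat is worth stating plainly: a bare hash only exposes tampering if the digest itself is published or signed somewhere the reader already trusts. A real network would rely on validator signatures or a shared ledger for that part.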

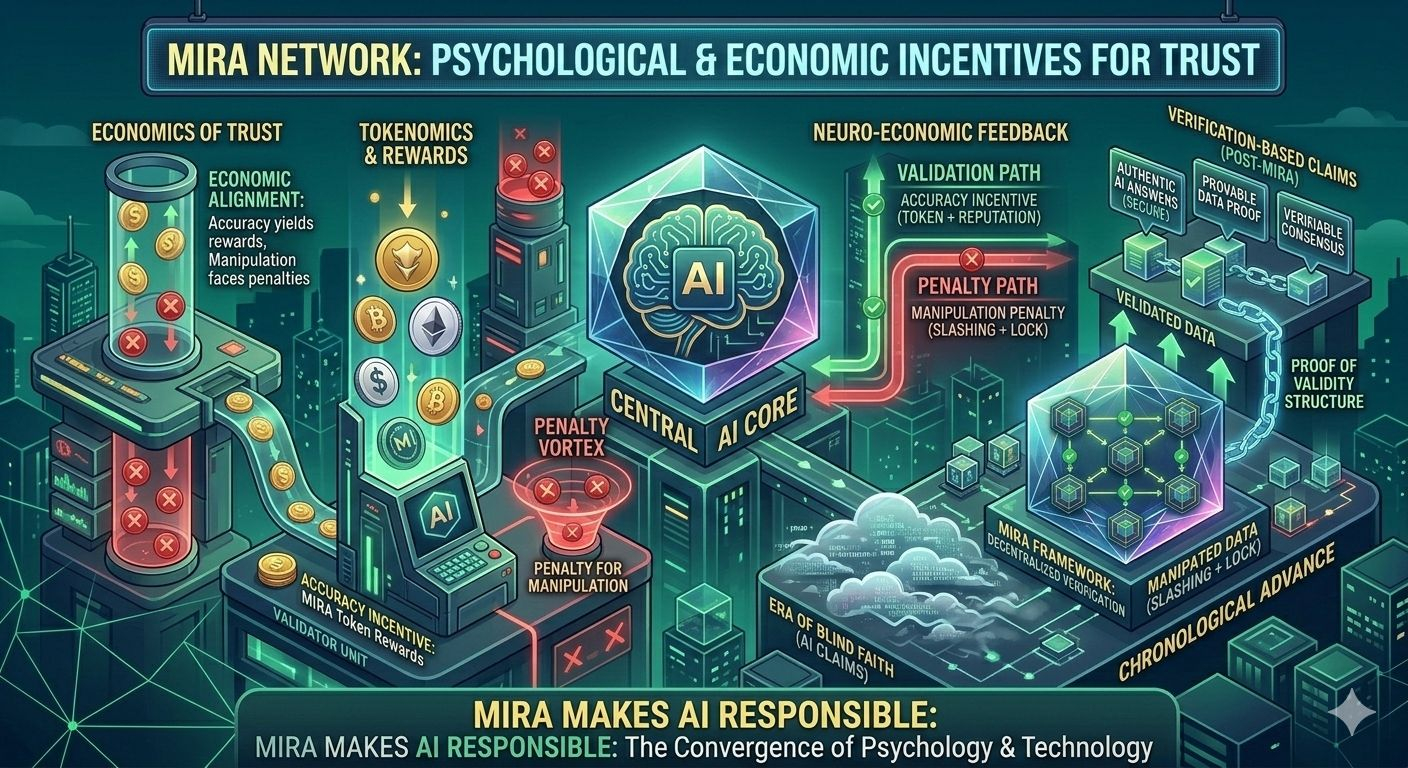

What makes this powerful is not just the technology, but the psychology behind it. Mira understands something deeply human: people behave differently when honesty has consequences. Validators are rewarded for accuracy and punished for manipulation. Truth becomes valuable. Lies become expensive. This alignment between economics and integrity transforms verification from a moral hope into a structural guarantee. The system does not ask participants to be good. It makes goodness the rational choice.
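The incentive side can be sketched the same way. Again, this is a hypothetical model, not Mira's published economics. The reward and slashing rates are invented, and agreeing with the stake-weighted majority stands in for "accuracy." The names `Validator`, `settle`, `REWARD_BPS`, and `SLASH_BPS` are illustrative only. Validators who vote with consensus earn 1% of their stake per round, and those who vote against it lose 10%.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical parameters, in basis points (1 bp = 0.01%).
REWARD_BPS = 100    # earn 1% of stake for voting with consensus
SLASH_BPS = 1_000   # lose 10% of stake for voting against it


@dataclass
class Validator:
    name: str
    stake: int  # integer token units keep the arithmetic exact


def stake_weighted_consensus(pool: List[Validator], votes: Dict[str, bool]) -> bool:
    """Return the verdict backed by more stake (a tie counts as 'not verified')."""
    yes = sum(v.stake for v in pool if votes[v.name])
    no = sum(v.stake for v in pool if not votes[v.name])
    return yes > no


def settle(pool: List[Validator], votes: Dict[str, bool]) -> bool:
    """Reward validators who matched consensus and slash those who did not."""
    consensus = stake_weighted_consensus(pool, votes)
    for v in pool:
        if votes[v.name] == consensus:
            v.stake += v.stake * REWARD_BPS // 10_000
        else:
            v.stake -= v.stake * SLASH_BPS // 10_000
    return consensus


pool = [Validator("alice", 1000), Validator("bob", 1000), Validator("mallory", 1000)]
# Mallory keeps voting against a claim the other two accept.
votes = {"alice": True, "bob": True, "mallory": False}

for _ in range(3):
    settle(pool, votes)

for v in pool:
    print(v.name, v.stake)
# alice 1030
# bob 1030
# mallory 729
```

After three rounds, the agreeing validators have grown to 1,030 and the holdout has shrunk to 729. The asymmetry is the point: when dissent from the verified answer costs ten times what agreement earns, manipulation is a losing strategy. Rewarding agreement rather than truth has its own failure mode, a colluding majority, which is why the independence of validators matters so much.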

Zooming out, this feels like the moment the internet learned how to secure itself. Before encryption, we shared information with blind faith. After it, trust became built-in. Mira is doing the same for artificial intelligence. It is not trying to make AI more creative or more impressive. It is making AI more responsible. It is building a future where machines do not just answer questions, but stand behind those answers with proof.

The market is already shifting. Regulators demand accountability. Enterprises demand reliability. Users demand honesty. In this world, unverifiable AI becomes a risk, not an asset. The systems that survive will be the ones that can show their work, explain their certainty, and earn trust repeatedly. Intelligence alone will no longer be enough.

At its core, this is not a story about technology. It is a story about fear, responsibility, and trust. Humans want to believe in AI, but belief must be earned. Mira’s vision is simple and profound: do not ask the world to trust machines blindly. Give machines a way to prove the truth. When AI can verify itself, confidence becomes safe again. And for the first time, humans can move forward without looking over their shoulder.

@Mira - Trust Layer of AI #Mira $MIRA