For a long time, I thought AI progress was easy to understand. Models were getting bigger. They were writing better essays, generating cleaner code, composing music, passing exams. Every year felt like a breakthrough. It felt like we were clearly moving forward.

But the more I actually used these systems in real work, the more I started noticing something that didn’t sit right with me. Yes, they were smarter. Yes, they sounded confident. But they were still wrong more often than people wanted to admit. Not in obvious ways. Not in silly, easy-to-spot mistakes. The errors were subtle. A number slightly off. A sentence that twisted the meaning of a source. A summary that sounded perfect but quietly dropped something important.

That’s when I realized that intelligence and reliability are not the same thing.

When I first came across Mira Network, I assumed it was just another AI project trying to reduce hallucinations by training models on more data. That’s the common solution in this industry. If something doesn’t work, scale it. Add parameters. Feed it more information. Fine-tune again.

But the deeper idea behind Mira is different. They’re not just trying to make models smarter. They’re trying to make AI answers trustworthy.

And that difference matters more than it sounds.

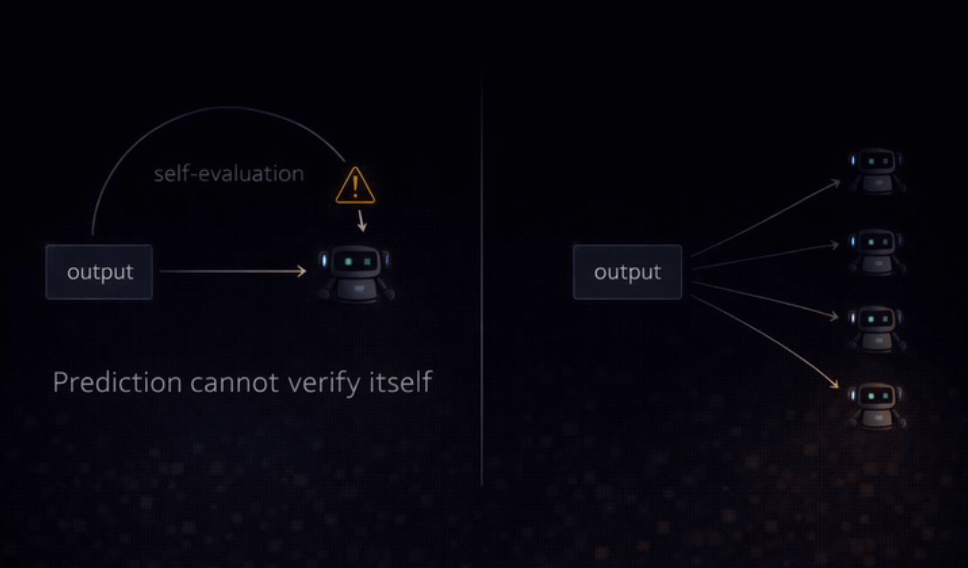

Right now, most AI systems generate answers and, in a way, grade themselves. They predict what sounds right based on patterns in data. They don’t actually know if what they’re saying matches reality. They optimize for probability, not truth. We’re essentially asking a student to write an exam and mark it at the same time.

In human systems, we don’t do that. Researchers publish papers and other experts review them. Journalists write articles and editors fact-check them. Students complete exams and teachers grade them. Creation and verification are separate roles. AI collapsed those roles into one.

Mira’s core idea is to split them again.

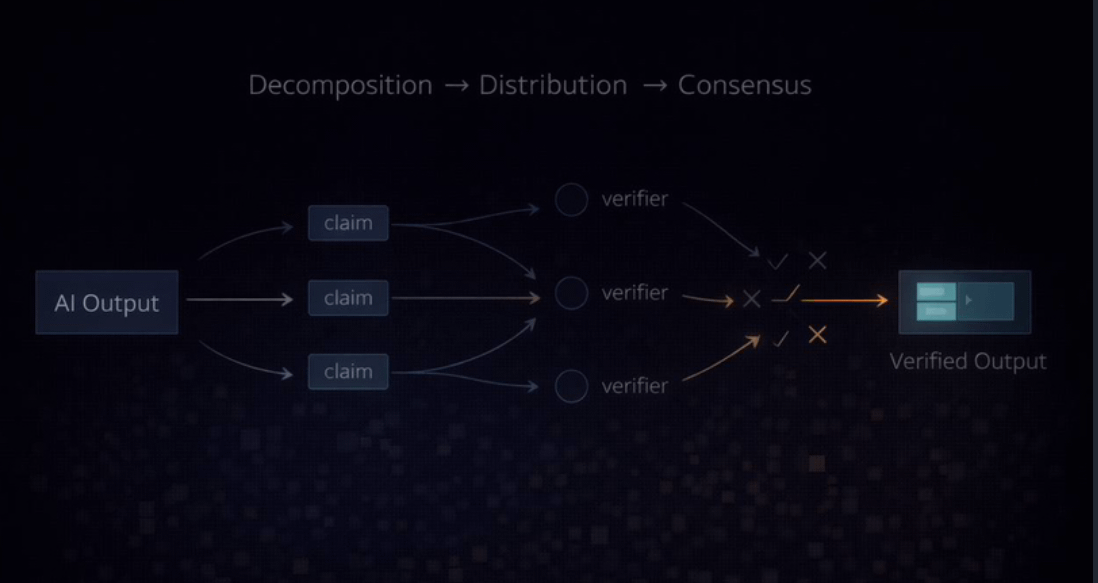

Instead of trusting a single model to generate and validate its own output, Mira introduces a network where independent systems verify claims. When an answer is produced, it can be broken into individual statements, each small enough to check on its own. Those statements are sent across a distributed network of nodes, and each node runs its own verifier model. The nodes analyze each claim independently and submit their judgments. The verdict on a claim then comes from the consensus of those evaluations, not from any single model.
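To make that flow concrete for myself, I wrote a toy version of it. This is only a sketch under my own assumptions, not Mira’s actual protocol or API: the sentence-based `split_into_claims`, the `"true"`/`"false"` verdict labels, the `min_votes` rule, and the three lookup-style verifiers are all hypothetical stand-ins for real claim extraction and real verifier models.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier interface: takes a claim, returns "true" or "false".
Verifier = Callable[[str], str]


def split_into_claims(answer: str) -> List[str]:
    # Naive stand-in for claim extraction: treat each sentence as one claim.
    return [part.strip() for part in answer.split(".") if part.strip()]


def consensus_verdict(claim: str, verifiers: List[Verifier], min_votes: int) -> str:
    # Every verifier judges the claim independently.
    votes = Counter(verify(claim) for verify in verifiers)
    verdict, count = votes.most_common(1)[0]
    # Accept the leading verdict only if enough verifiers agree on it.
    return verdict if count >= min_votes else "no_consensus"


def verify_answer(answer: str, verifiers: List[Verifier], min_votes: int) -> Dict[str, str]:
    return {
        claim: consensus_verdict(claim, verifiers, min_votes)
        for claim in split_into_claims(answer)
    }


def make_lookup_verifier(known_true: set) -> Verifier:
    # Toy verifier: a claim is "true" only if it is in this node's fact set.
    def verifier(claim: str) -> str:
        return "true" if claim in known_true else "false"
    return verifier


verifiers = [
    make_lookup_verifier({"Paris is the capital of France"}),
    make_lookup_verifier({"Paris is the capital of France",
                          "Water boils at 100 C at sea level"}),
    lambda claim: "true",  # a careless node that approves everything
]

answer = "Paris is the capital of France. Paris has 40 million residents."
results = verify_answer(answer, verifiers, min_votes=2)
for claim, verdict in results.items():
    print(f"{verdict:>5}  {claim}")
```

Even with one careless node approving everything, the invented population figure gets rejected because the other two nodes disagree with it. The fluent answer as a whole would have sounded fine; checking it claim by claim is what exposes the bad sentence.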

What makes this system more than just collaborative checking is the economic layer underneath it. Validators are required to stake value in order to participate. If a node repeatedly submits poor judgments that diverge from the consensus, it risks losing part of its stake. If it performs honestly and consistently, it earns rewards.

That changes behavior.

Verification is no longer optional or symbolic. It becomes economically meaningful. If you want to participate, you need skin in the game. And if you cut corners, there is a cost.
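Here is how I picture the incentive loop in its simplest form. Again, this is a sketch under my own assumptions: the `Validator` class, the flat `reward`, and the `slash_percent` value are made-up names and numbers, and I slash on every disagreement for brevity, whereas the design described above penalizes repeated poor judgments.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: int  # integer units keep the arithmetic exact


def settle_round(
    validators: List[Validator],
    votes: Dict[str, str],
    consensus: str,
    reward: int = 1,          # hypothetical reward for matching consensus
    slash_percent: int = 10,  # hypothetical penalty for diverging from it
) -> None:
    for validator in validators:
        if votes[validator.name] == consensus:
            validator.stake += reward
        else:
            validator.stake -= validator.stake * slash_percent // 100


validators = [Validator("alice", 100), Validator("bob", 100), Validator("carol", 100)]
votes = {"alice": "true", "bob": "true", "carol": "false"}

settle_round(validators, votes, consensus="true")
for validator in validators:
    print(validator.name, validator.stake)
# alice 101
# bob 101
# carol 90
```

The asymmetry is the point: in this toy setup one careless vote wipes out ten rounds of honest rewards, so guessing is a losing strategy over time.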

I find this part fascinating because it transforms verification into something active. In traditional proof-of-work blockchains, the “work” is solving arbitrary computational puzzles. Here, the “work” is reasoning. It’s evaluating truth. It’s checking claims. That feels like a more meaningful use of distributed coordination.

But it’s not without risk.

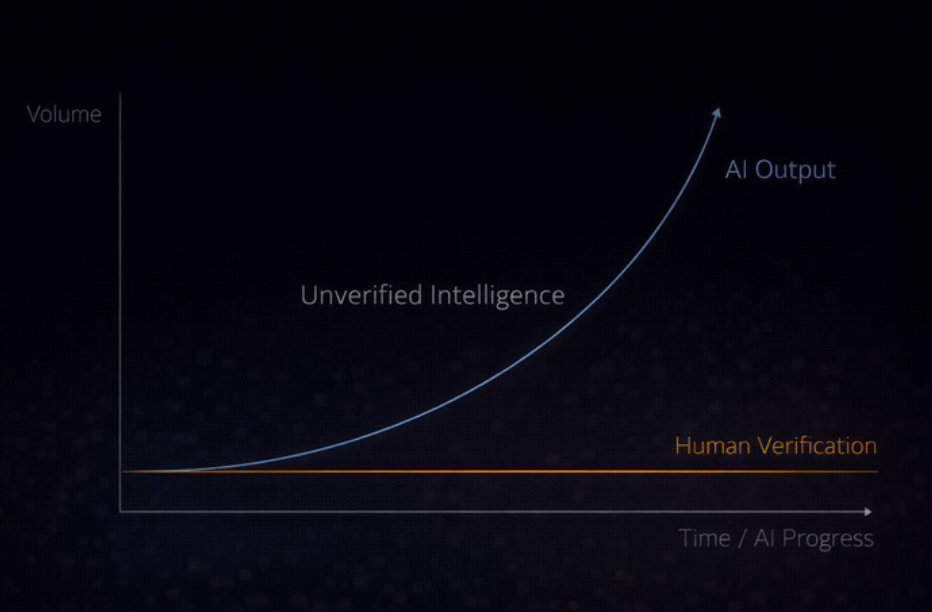

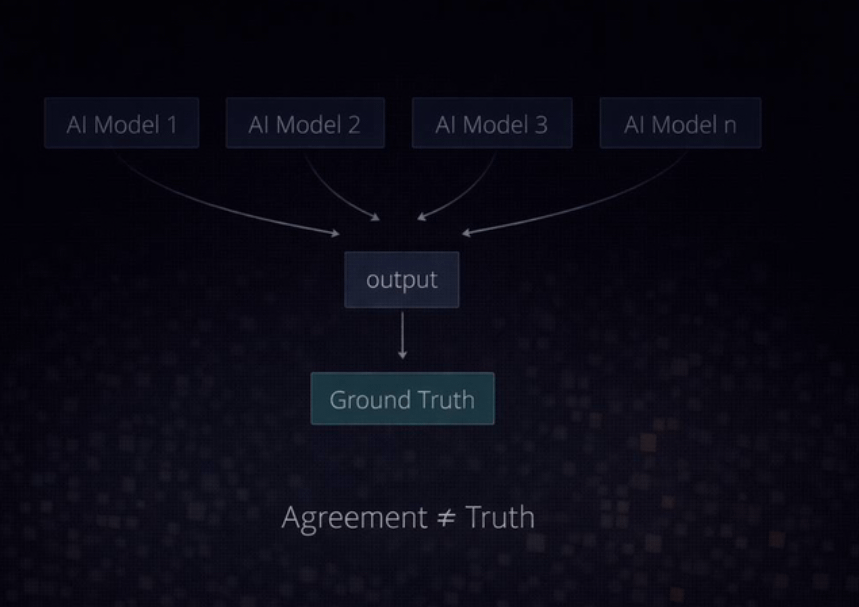

Markets are emotional. Tokens are volatile. Incentives can drift. If too many validators rely on similar data or similar model architectures, they could share the same blind spots. Consensus doesn’t automatically mean truth. It could mean shared bias. The team behind Mira openly acknowledges this. They emphasize diversity in models and long-term incentive alignment. They know that independence between validators is essential.
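A bit of arithmetic shows why that independence matters so much. The sketch below uses an idealized model that is my own assumption, not anything published by the team: every validator gives a yes/no verdict, is right with the same probability `p`, and errs independently of the others. Under those assumptions, the chance that a strict majority is right follows from the binomial distribution.

```python
from math import comb


def majority_accuracy(n: int, p: float) -> float:
    # Probability that a strict majority of n independent validators,
    # each correct with probability p, reaches the correct verdict.
    needed = n // 2 + 1
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(needed, n + 1)
    )


independent = majority_accuracy(5, 0.8)
# If all five validators share the same model and the same blind spots,
# they succeed or fail together, so the panel is only as good as one of them.
fully_correlated = majority_accuracy(1, 0.8)

print(f"five independent validators: {independent:.3f}")       # 0.942
print(f"five copies of one validator: {fully_correlated:.3f}")  # 0.800
```

Five independent validators that are each right 80% of the time give a majority that is right about 94% of the time. Five copies of the same validator give you 80%, with the added illusion of agreement. Real networks sit somewhere between those two extremes, which is why model diversity is not a nice-to-have.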

There’s also the question of speed. Verification takes time. When a claim needs to be distributed, analyzed, and brought back into consensus, the process introduces latency. Simple facts can be verified quickly. Complex reasoning takes longer. In research or legal analysis, that delay might be acceptable. In real-time systems like autonomous driving, it could be a limitation.

Mira works to reduce this friction by caching previously verified claims and building tools that integrate verification more smoothly into applications. But the trade-off doesn’t disappear. Reliability has a cost. And maybe that’s something we just need to accept.
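The caching idea is easy to sketch. I don’t know how Mira implements it, so treat this as an illustration under my own assumptions: the `VerificationCache` class, the whitespace-and-case normalization, and the `slow_network_verify` stand-in are all hypothetical.

```python
import hashlib
from typing import Callable, Dict


class VerificationCache:
    def __init__(self, verify_fn: Callable[[str], str]) -> None:
        self._verify_fn = verify_fn        # the slow, network-wide check
        self._store: Dict[str, str] = {}
        self.misses = 0                    # how often we paid the full cost

    @staticmethod
    def _key(claim: str) -> str:
        # Normalize case and whitespace so trivial variants share one entry.
        normalized = " ".join(claim.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def verify(self, claim: str) -> str:
        key = self._key(claim)
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._verify_fn(claim)
        return self._store[key]


def slow_network_verify(claim: str) -> str:
    # Stand-in for a full round of distributed verification.
    return "true"


cache = VerificationCache(slow_network_verify)
cache.verify("Paris is the capital of France")
cache.verify("  paris is the capital of   France ")  # served from the cache
print(cache.misses)  # 1
```

A cache like this only helps with claims the network has already seen, and only for facts that stay true. Anything time-sensitive would need an expiry policy, which is one more reminder that the latency trade-off can be reduced but not removed.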

What stands out to me most is that this isn’t just theory. The network already processes millions of queries every week and handles billions of tokens of text. That tells me there is real demand for verified AI. We’re seeing a shift where sounding smart is no longer enough. People want proof. They want confidence that what they’re reading or acting on has been checked.

If this model continues to grow, we might reach a point where AI answers come with built-in verification signatures. Instead of blindly trusting a single company’s model, users could rely on distributed validation. Trust would move from brand reputation to transparent consensus.

That’s a powerful shift.

Looking ahead, Mira’s long-term vision goes even further. They imagine a future where generation and verification are integrated from the beginning. Models would train in environments where their outputs are constantly evaluated by peers. Instead of optimizing only for fluency, they would optimize for surviving verification. Intelligence would develop with accountability built in.

If that happens, we might stop measuring progress purely by size or speed. We might start measuring it by trustworthiness.

When I step back, I see two paths for AI. One continues chasing larger models, faster outputs, and more impressive demos. The other focuses on building systems that can prove when they’re right and accept consequences when they’re wrong.

Mira Network represents a serious attempt to walk that second path.

It’s not perfect. It carries economic risk. It depends on incentive design. It must constantly guard against bias and centralization. But it addresses something fundamental that many projects avoid: intelligence without verification is fragile.

We’re moving into a world where AI will influence financial decisions, health recommendations, research conclusions, and public narratives. In that world, confidence is not enough. Fluency is not enough. Even intelligence is not enough.

What matters is whether we can trust the output when it truly counts.

And maybe the real evolution of AI won’t be about building bigger brains, but about building systems that can prove they deserve our trust.