@Mira - Trust Layer of AI

The first time I read about Mira Network, I didn’t feel impressed. I felt cautious. After watching multiple waves of excitement around both crypto and artificial intelligence, I’ve developed a habit of slowing down whenever something claims to “fix” a big problem. Over time, you learn that most systems don’t fail because they lack ambition. They fail because they misunderstand what actually needs repairing. Mira didn’t feel like it was chasing ambition. It felt like it was quietly acknowledging a flaw that many people prefer not to talk about.

Artificial intelligence today is remarkably articulate. It answers questions with confidence, explains complex topics smoothly, and rarely hesitates. But anyone who has used it seriously knows that confidence does not equal accuracy. The mistakes are subtle. Sometimes they are small factual errors. Sometimes they are assumptions presented as truth. And because the language sounds so certain, the human reader often relaxes. That relaxation is where the real risk lives. In low-stakes situations, a mistake is inconvenient. In higher-stakes environments (financial analysis, research, automated decisions), it becomes something else entirely.

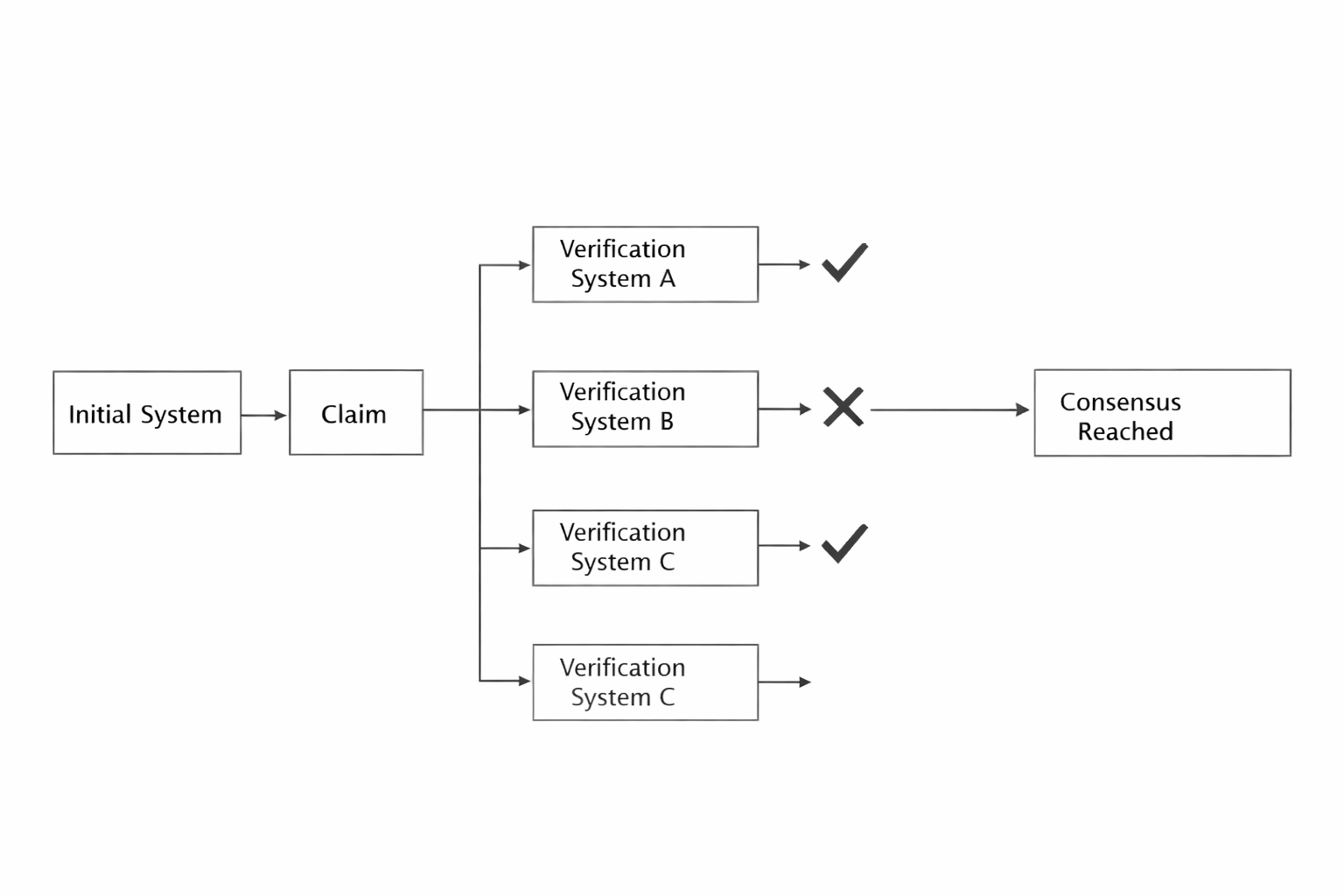

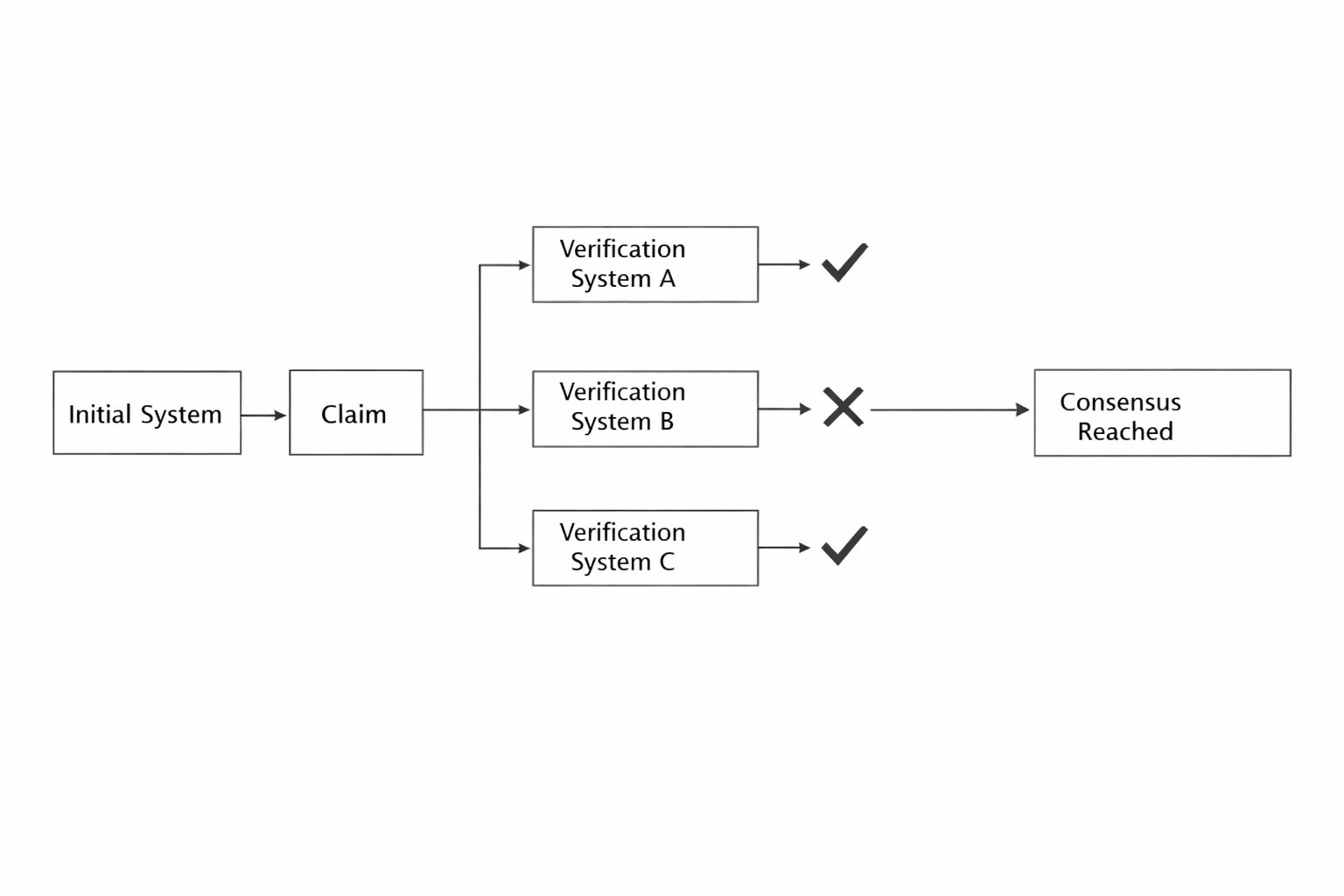

What struck me about Mira is that it doesn’t try to make AI smarter. It accepts that intelligence, at least in its current form, will remain imperfect. Instead of pushing for bigger models or louder claims, it focuses on a quieter idea: verification. The concept is simple in spirit. If one system makes a claim, others should examine it. If agreement forms through an open process, that agreement carries more weight than a single voice speaking alone. It feels less like a race for brilliance and more like an attempt to build a habit of double-checking.
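To make the idea of "others should examine it" concrete, here is a minimal sketch of agreement-based checking. It is not Mira's actual protocol, which this post does not describe in detail. The names `verify_claim` and `make_verifier`, the two-thirds threshold, and the toy "known facts" verifiers are all assumptions made up for illustration.

```python
from typing import Callable, Iterable, Sequence

# Hypothetical type: a verifier is any function that examines a claim
# and returns True if it agrees with it, False otherwise.
Verifier = Callable[[str], bool]


def make_verifier(known_facts: Iterable[str]) -> Verifier:
    """Build a toy verifier that agrees only with claims it already knows."""
    facts = frozenset(known_facts)
    return lambda claim: claim in facts


def verify_claim(
    claim: str,
    verifiers: Sequence[Verifier],
    threshold: float = 2 / 3,  # assumed cutoff, chosen only for illustration
) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers agree."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) >= threshold


# Three independent toy verifiers with partly overlapping knowledge.
verifiers = [
    make_verifier({"water boils at 100 C at sea level"}),
    make_verifier({"water boils at 100 C at sea level", "the moon orbits the earth"}),
    make_verifier({"the moon orbits the earth"}),
]

accepted = verify_claim("water boils at 100 C at sea level", verifiers)
rejected = verify_claim("the earth is flat", verifiers)
```

In this sketch a claim passes when two of the three verifiers agree and fails when none do. The threshold is the design knob: raising it demands broader agreement at the cost of rejecting more claims that only some verifiers can confirm.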

There is something very human about that instinct. In real life, we rarely rely on one perspective when something important is at stake. We ask for second opinions. We compare notes. We look for disagreement before we feel comfortable. Mira tries to bring that social behavior into a digital structure. Instead of trusting a centralized authority to decide what is correct, it spreads responsibility across participants who have incentives to be careful. The design is not glamorous, but it reflects lived experience: trust is built through process, not assertion.

Of course, this approach introduces friction. Verification takes time. It adds cost. It makes things slower. In a technology culture that celebrates instant results, choosing slowness can seem counterproductive. But maturity often involves recognizing that speed is not always the highest value. Financial audits are slow. Scientific review is slow. Legal appeals are slow. They are slow because they protect against irreversible mistakes. Mira appears willing to accept that trade-off, even if it means sacrificing immediate excitement.

I don’t see this as a perfect solution. Consensus does not automatically produce truth. Groups can share blind spots. Incentives can be misunderstood or manipulated. And adoption will depend on whether people truly feel the pain of unreliable AI strongly enough to pay for additional assurance. These are real uncertainties. Systems like this are shaped as much by human behavior as by code.

Yet there is something grounded about the direction. Mira does not position itself as revolutionary. It feels more like an adjustment, a recognition that as machines grow more influential, their outputs cannot simply be taken at face value. In a world increasingly comfortable with automated answers, building structures that pause, check, and verify may become less optional over time.

I am not certain where Mira Network will stand in a few years. Many thoughtful ideas remain peripheral because the world moves unpredictably. But I do sense that the conversation it represents is maturing. Instead of asking how intelligent our systems can become, it quietly asks how accountable they should be. And after watching cycles of overconfidence correct themselves again and again, that question feels both timely and necessary.