I’ve learned that trust gaps are rarely closed by ambition alone. They close when incentives hold under stress. In crypto infrastructure, durability shows up when rewards normalize and participation holds anyway. That is where I tend to focus: not on narratives about revolutionizing AI, but on whether validators remain engaged when the marginal upside compresses.

The recent architectural refinements to @Mira - Trust Layer of AI are subtle but worth examining. Updates to its claim routing logic and validator sequencing improved how outputs are decomposed and distributed for review. SDK adjustments reduced integration friction for developers embedding verification into workflows. None of this was framed as a breakthrough. That restraint is appropriate. Structural improvements in verification networks are usually incremental, not theatrical.

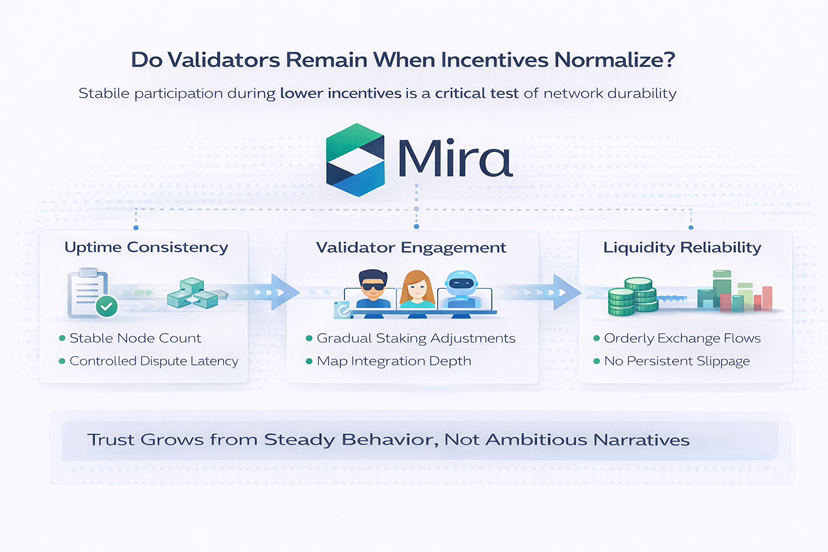

What matters is how participants responded. Following reward normalization phases, validator participation has not contracted abruptly. Active node counts have remained within a relatively stable band. Staking balances adjusted gradually rather than collapsing in synchronized withdrawals. Dispute latency, as far as available on-chain data shows, has stayed contained rather than expanding when throughput fluctuates. These are not dramatic signals. They are steady ones.
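For readers who want to make "stable band" concrete, here is a minimal sketch of how I would check it. Everything in it is an assumption for illustration: the function names, the thresholds (90% retention, 15% maximum drawdown), and the sample counts are hypothetical and are not drawn from Mira's data or tooling.

```python
from statistics import mean


def retention_ratio(before, after):
    """Mean active-validator count after normalization, divided by the mean before."""
    return mean(after) / mean(before)


def max_drawdown(series):
    """Largest decline from a running peak, as a fraction of that peak."""
    peak = series[0]
    worst = 0.0
    for value in series:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


def is_stable(before, after, min_retention=0.9, max_dd=0.15):
    """True if participation held up: high average retention and no sharp drop.

    Both thresholds are illustrative assumptions, not protocol parameters.
    """
    return (
        retention_ratio(before, after) >= min_retention
        and max_drawdown(after) <= max_dd
    )


# Hypothetical weekly active-validator counts around a reward normalization.
before = [100, 102, 101, 99]
gradual = [98, 97, 99, 96]    # drifts slightly lower, no abrupt exit
collapse = [98, 80, 60, 50]   # synchronized withdrawal

print(is_stable(before, gradual))
print(is_stable(before, collapse))
```

The same two measures apply to staking balances: a gradual adjustment keeps the drawdown small even when the average drifts lower, while a synchronized withdrawal fails both checks.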

Liquidity behavior offers additional context. Exchange flows did not spike disproportionately after visibility cycles, which reduces the probability that short-term speculation is dominating turnover. Depth has fluctuated with broader market conditions, but without disorderly gaps or persistent slippage expansion.

If #Mira's mission is to close the AI trust gap through decentralized verification, the real test is economic. Validators stake capital against correctness. That exposure imposes discipline. Incentives reveal network quality because they impose a cost on error and an opportunity cost on participation. If validators persist when emissions taper and narrative momentum fades, verification may be economically rational rather than subsidy-driven. If they exit quickly, the trust layer is thinner than advertised.
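The economic test can be written down as a rough inequality: a validator stays only if what it earns, less the expected penalty for errors, still beats what the same capital would earn elsewhere. The sketch below is a simplified model under my own assumptions. The parameter names, the split between emissions and fees, the slashing-style penalty, and every number are hypothetical, and none of it describes Mira's actual reward or penalty mechanics.

```python
def net_yield(emission_rate, fee_rate, error_prob, slash_fraction, outside_rate):
    """Annualized net yield per unit of stake, relative to an outside option.

    emission_rate  -- yield from token emissions (the subsidy)
    fee_rate       -- yield from verification fees (organic demand)
    error_prob     -- assumed probability of being penalized for a wrong verdict
    slash_fraction -- assumed fraction of stake lost when penalized
    outside_rate   -- yield the same capital could earn elsewhere
    """
    gross = emission_rate + fee_rate
    expected_penalty = error_prob * slash_fraction
    return gross - expected_penalty - outside_rate


def stays(**params):
    """A rational validator keeps staking only if the net yield is non-negative."""
    return net_yield(**params) >= 0


base = dict(error_prob=0.02, slash_fraction=0.5, outside_rate=0.04)

# Hypothetical scenarios: emissions taper from 8% to 1%.
subsidized = stays(emission_rate=0.08, fee_rate=0.03, **base)
tapered_thin_fees = stays(emission_rate=0.01, fee_rate=0.03, **base)
tapered_real_fees = stays(emission_rate=0.01, fee_rate=0.06, **base)

print(subsidized, tapered_thin_fees, tapered_real_fees)
```

In this toy model, the validator that remains after the taper is the one whose fee income covers both the expected penalty and the outside option. That is what "economically rational rather than subsidy-driven" would look like in practice.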

Predictable liquidity supports execution reliability for integrators embedding verification into compliance or research pipelines. The question is whether usage becomes routine. Infrastructure matures when verification calls are integrated by default rather than triggered by attention cycles.

I remain cautious. AI verification introduces semantic complexity that is harder to standardize than simple ledger consensus. Mispricing at the claim validation layer could surface as throughput scales. Integration depth may lag ambition. And decentralized consensus does not automatically eliminate coordination risk. The system must demonstrate resilience across multiple compression cycles, not just one.

Still, I view $MIRA less as a speculative instrument and more as a coordination experiment. As networks mature, they often grow quieter. Tools that work recede into background infrastructure. The spectacle fades; the function remains. The absence of volatility spikes or validator exodus during normalization is not proof of success, but it is a prerequisite for credibility.

The broader question lingers: can decentralized incentives meaningfully enforce truth claims at scale, or will verification remain partially institutional? That answer will not emerge from press releases. It will emerge from retention curves, staking depth, dispute frequency, and developer integration patterns over time.

Trust is not declared. It is observed in behavior under constraint. If Mira continues to show disciplined coordination when incentives compress again, its mission may be structurally plausible. If not, the trust gap will remain. I am less interested in whether the system sounds convincing than whether it remains intact when the rewards narrow. That is the only test that compounds.