As an operator, I do not trust "high confidence" labels by default. I trust a runbook with hard stop conditions.

A concrete anchor: in production systems, a single unverified claim can trigger a chain of downstream actions. Markets can debate narratives, but product teams need a different metric: the expected loss if that unresolved claim is acted on and turns out to be wrong.
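To make "expected loss" concrete, here is a minimal sketch, assuming each claim can be given a rough probability of being wrong and a rough cost if it is acted on while wrong. The `Claim` type, the claim names, and all the numbers are hypothetical illustrations, not anything Mira defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Hypothetical record for one unverified claim."""
    name: str
    p_wrong: float        # assumed probability that the claim is false
    cost_if_wrong: float  # assumed loss if we act on it and it is false


def expected_loss(claims):
    """Sum of probability-weighted losses across all unresolved claims."""
    return sum(c.p_wrong * c.cost_if_wrong for c in claims)


# Illustrative values only.
claims = [
    Claim("refund_eligible", p_wrong=0.05, cost_if_wrong=2000.0),
    Claim("address_valid", p_wrong=0.25, cost_if_wrong=40.0),
]
print(f"expected loss: {expected_loss(claims):.2f}")
```

The point of the sketch is the ranking it produces: a claim that is rarely wrong can still dominate the total if acting on it is expensive.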

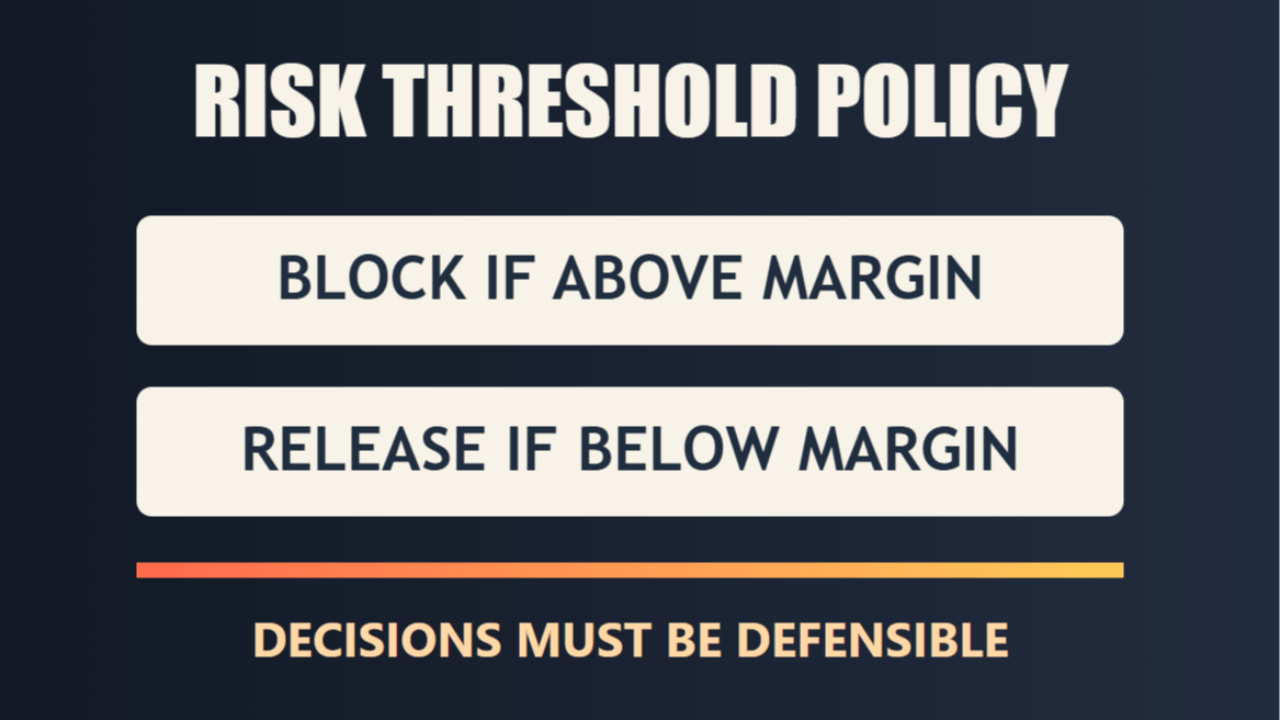

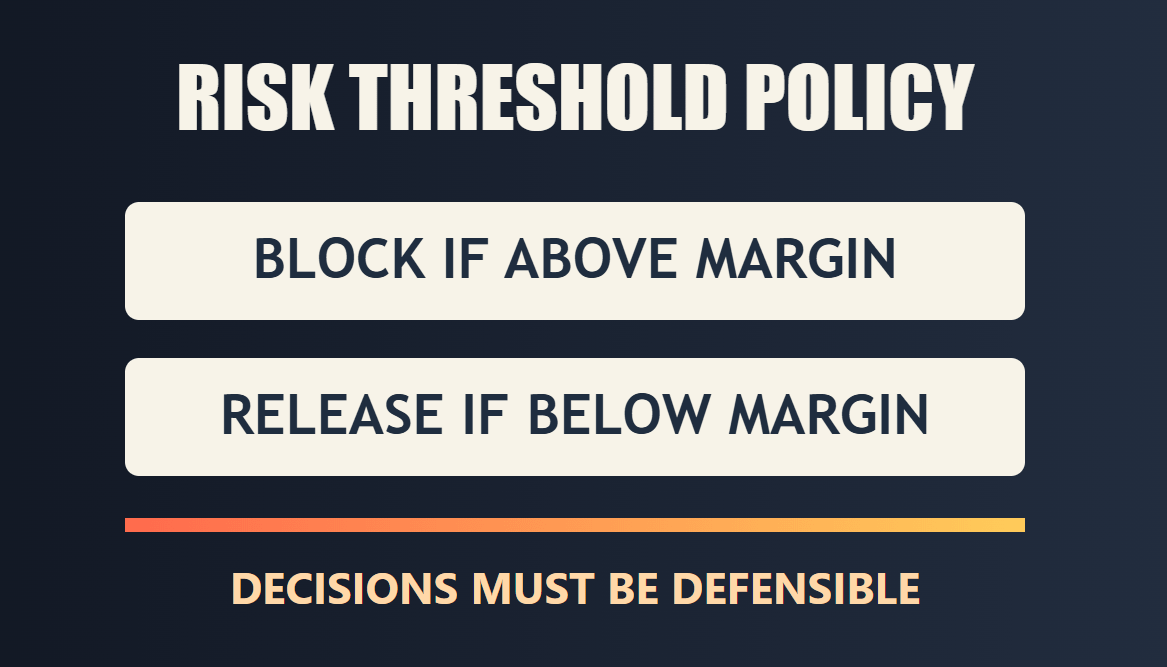

My approach to production is simple:

- Define an explicit risk threshold before deployment.
- Keep execution blocked while the unresolved probability stays above that threshold.
- Release actions only after independent verification has pushed the unresolved risk below it.
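The three rules above can be sketched as a small gate object. This is an illustration under stated assumptions, not Mira's actual mechanism: `ExecutionGate`, `record_check`, and `catch_rate` are hypothetical names, and the update rule (each confirming verifier multiplies the unresolved probability by `1 - catch_rate`) is a simplification that treats verifiers as independent.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionGate:
    """Hypothetical gate: blocks execution until unresolved risk is low enough."""
    risk_threshold: float   # fixed before deployment
    unresolved: float = 1.0  # assumed probability the claim is still wrong
    seen_verifiers: set = field(default_factory=set)

    def record_check(self, verifier_id: str, confirmed: bool, catch_rate: float) -> None:
        """Fold one verifier's result into the unresolved probability."""
        if not 0.0 <= catch_rate <= 1.0:
            raise ValueError("catch_rate must be between 0 and 1")
        if verifier_id in self.seen_verifiers:
            return  # a repeat from the same verifier is not independent evidence
        self.seen_verifiers.add(verifier_id)
        if confirmed:
            # Assumption: a verifier that would catch a bad claim with
            # probability catch_rate leaves (1 - catch_rate) of the risk.
            self.unresolved *= 1.0 - catch_rate
        else:
            # Hard stop: a rejection puts the claim back to fully unresolved.
            self.unresolved = 1.0

    def may_execute(self) -> bool:
        """Release only when unresolved risk is at or below the threshold."""
        return self.unresolved <= self.risk_threshold


gate = ExecutionGate(risk_threshold=0.05)
gate.record_check("verifier-a", confirmed=True, catch_rate=0.8)
gate.record_check("verifier-b", confirmed=True, catch_rate=0.8)
print(gate.may_execute())
```

Ignoring repeat results from the same verifier is deliberate: the gate should only open on independent evidence, not on one source agreeing with itself.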

That is why Mira interests me. It pushes teams toward accountable operations instead of confidence theater. The value is not "perfect AI." The value is a repeatable gate that makes bad decisions harder to ship.

I am not claiming the risk is zero. Verification adds latency and operational cost. But unmanaged speed is usually the more expensive choice when real money, legal liability, or customer trust is on the line.

So the decision is simple: are you optimizing for demo speed, or building a system that can justify its decisions in an audit?

@Mira - Trust Layer of AI $MIRA #Mira