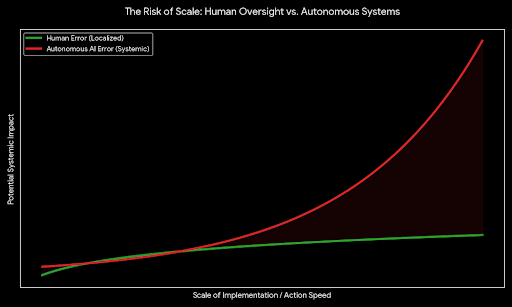

We are entering a moment where AI is no longer just assisting humans; it is beginning to act on our behalf. Autonomous systems are approving loans, flagging medical risks, optimizing energy grids, and shaping public information flows. The shift from suggestion to decision is subtle, but the consequences are enormous. When machines act in the real world, trust cannot be assumed. It must be earned and proven.

The real danger is not that AI makes mistakes. Humans do too. The danger is scale. An unverified trading agent can trigger financial instability in seconds. A hallucinated medical recommendation can quietly misguide treatment. An infrastructure optimization error can ripple across entire regions. When human oversight is limited, blind trust becomes systemic risk.

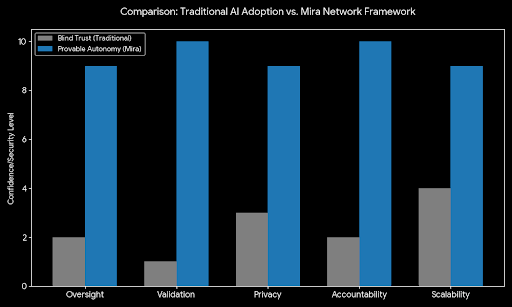

This is where decentralized verification becomes essential. Instead of simply accepting an AI’s output, the system verifies the action itself. Did the trade actually execute as claimed? Was the medical analysis derived from valid inputs? Was the infrastructure decision based on authenticated data? By anchoring claims to cryptographic proof and distributed validation, actions become auditable realities, not just convincing responses.
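To make the idea concrete, here is a minimal sketch of checking a claimed action against an independently observed record and a quorum of validator attestations. Everything in it is an assumption for illustration: the function names (`digest`, `attest`, `verify_claim`), the record fields, and the use of shared-key HMAC tags as stand-ins for signatures. A real open network would more likely use public-key signatures so that validators never share secrets with the verifier.

```python
import hashlib
import hmac
import json


def digest(payload: dict) -> str:
    """SHA-256 digest of a payload, serialized canonically (sorted keys)."""
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(encoded).hexdigest()


def attest(validator_key: bytes, claim_digest: str) -> str:
    """Hypothetical attestation: an HMAC tag over the claim digest."""
    return hmac.new(validator_key, claim_digest.encode(), hashlib.sha256).hexdigest()


def verify_claim(claim: dict, observed_record: dict, attestations: dict,
                 validator_keys: dict, quorum: int) -> bool:
    """Accept a claim only if it matches the observed record and
    at least `quorum` known validators produced a valid attestation."""
    claim_digest = digest(claim)
    # Step 1: the claimed action must match what was actually observed.
    if claim_digest != digest(observed_record):
        return False
    # Step 2: count attestations that verify under a known validator key.
    valid = 0
    for validator_id, tag in attestations.items():
        key = validator_keys.get(validator_id)
        if key is not None and hmac.compare_digest(tag, attest(key, claim_digest)):
            valid += 1
    return valid >= quorum
```

The point of the sketch is the shape of the check: the agent's statement alone is never enough, because acceptance depends on an independent record and on several validators agreeing about the same digest.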

Open networks introduce another challenge: incentives. If anyone can verify, some will try to game the system. A strong protocol makes verification costly to fake and rewarding to perform honestly. Stake-backed attestations, challenge mechanisms, and reputation systems ensure that validation is meaningful, not spammed. Accountability becomes embedded in the economics of the network.
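The incentive logic can be sketched in a few lines. This is a toy model under stated assumptions, not any particular protocol: the `Validator` and `Attestation` types, the 5% reward rate, and the rule that a slashed bond goes to the challenger are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Validator:
    name: str
    stake: float
    reputation: int = 0


@dataclass
class Attestation:
    validator: Validator
    claim_id: str
    verdict: bool   # what the validator asserts about the claim
    bond: float     # stake locked behind that assertion


def submit(validator: Validator, claim_id: str, verdict: bool, bond: float) -> Attestation:
    """Lock part of the validator's stake behind an attestation."""
    if bond <= 0 or bond > validator.stake:
        raise ValueError("bond must be positive and covered by stake")
    validator.stake -= bond
    return Attestation(validator, claim_id, verdict, bond)


def settle(att: Attestation, truth: bool, reward_rate: float = 0.05,
           challenger: Optional[Validator] = None) -> None:
    """Resolve an attestation once the true outcome is established."""
    if att.verdict == truth:
        # Honest work: return the bond plus a reward, and raise reputation.
        att.validator.stake += att.bond * (1 + reward_rate)
        att.validator.reputation += 1
    else:
        # Faulty attestation: the bond is slashed and reputation drops.
        att.validator.reputation -= 1
        if challenger is not None:
            challenger.stake += att.bond  # successful challenge earns the bond
```

Even in this simplified form, spamming low-effort attestations is no longer free: each one ties up stake that is lost if the attestation is successfully challenged.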

At the same time, privacy cannot be sacrificed for transparency. Financial records, patient histories, and civic data are too sensitive to expose. A privacy-first approach allows validation without revealing raw information. Through selective disclosure and cryptographic techniques such as zero-knowledge proofs, systems can confirm that a claim is true while keeping personal data protected. Trust grows without compromising confidentiality.
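One simple way to picture selective disclosure is with salted hash commitments: the data owner publishes a commitment to every field, then later reveals only the fields a verifier needs. The sketch below assumes that scheme; the function names and the sample record are hypothetical. It is deliberately much simpler than a zero-knowledge proof, since the disclosed field itself is revealed in full, but undisclosed fields stay hidden behind their salts.

```python
import hashlib
import hmac
import secrets


def commit_field(name: str, value: str, salt: bytes) -> str:
    """Commitment to one field: SHA-256 over salt, field name, and value."""
    return hashlib.sha256(salt + name.encode() + b"\x00" + value.encode()).hexdigest()


def commit_record(record: dict) -> tuple:
    """Return (public commitments, private salts) for every field in a record."""
    salts = {name: secrets.token_bytes(16) for name in record}
    commitments = {name: commit_field(name, value, salts[name])
                   for name, value in record.items()}
    return commitments, salts


def verify_disclosure(commitments: dict, name: str, value: str, salt: bytes) -> bool:
    """Check one revealed field against the published commitments."""
    expected = commitments.get(name)
    if expected is None:
        return False
    return hmac.compare_digest(expected, commit_field(name, value, salt))
```

A patient could, for example, publish commitments for an entire record and later disclose only the `age` field and its salt; a verifier can confirm that value was part of the committed record without ever seeing the diagnosis.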

Neutrality is equally important. In a fragmented AI ecosystem, no single provider should define truth. A model-agnostic protocol verifies claims regardless of which system generated them. This prevents lock-in, encourages interoperability, and creates a shared accountability layer across platforms. The focus shifts from who built the model to whether its actions can be proven.

Verification must also be continuous. One-time audits are not enough for systems that learn and evolve. Ongoing validation produces clear trust metrics that stakeholders can monitor in real time. When anomalies appear, they are detected early, before small issues scale into irreversible damage. Trust becomes dynamic, measurable, and responsive.
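As a rough illustration of what a live trust metric could look like, the sketch below keeps an exponentially weighted moving average of verification outcomes and raises an alert when the score drops below a threshold. The `TrustMonitor` class, the smoothing factor, and the alert threshold are all assumptions chosen for the example, not values taken from any real system.

```python
class TrustMonitor:
    """Tracks a running trust score from a stream of verification results."""

    def __init__(self, alpha: float = 0.2, alert_below: float = 0.8,
                 initial: float = 1.0) -> None:
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha              # weight given to the newest outcome
        self.alert_below = alert_below  # scores under this trigger an alert
        self.score = initial

    def record(self, passed: bool) -> bool:
        """Fold one verification outcome into the score.

        Returns True when the updated score is below the alert threshold.
        """
        outcome = 1.0 if passed else 0.0
        self.score = (1 - self.alpha) * self.score + self.alpha * outcome
        return self.score < self.alert_below
```

Because recent outcomes weigh more than old ones, a run of failed verifications pulls the score down quickly, while a long clean history does not mask a sudden change in behavior.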

As misinformation tactics and adversarial attacks grow more sophisticated, defenses must evolve alongside them. A decentralized verification layer adapts, incorporating new safeguards and community-driven threat intelligence. It does not assume perfection. It assumes constant vigilance.

The future of autonomy is not about choosing between innovation and safety. It is about building systems where innovation is accountable by design. With verified actions, aligned incentives, preserved privacy, and continuous oversight, AI can move from something we cautiously tolerate to something we confidently rely on. The shift is profound: from blind trust in machines to provable, responsible, and reliable autonomy.