A few weeks ago I noticed something small while scrolling through Binance Square. Two posts were making bold market predictions using AI models. One had charts, clean formatting, confident numbers. The other was rougher, less polished, but oddly more convincing because the author showed how the model had been tested over time. Same topic. Same coin. Very different feeling. And I realized the difference wasn’t the prediction. It was the proof behind it.

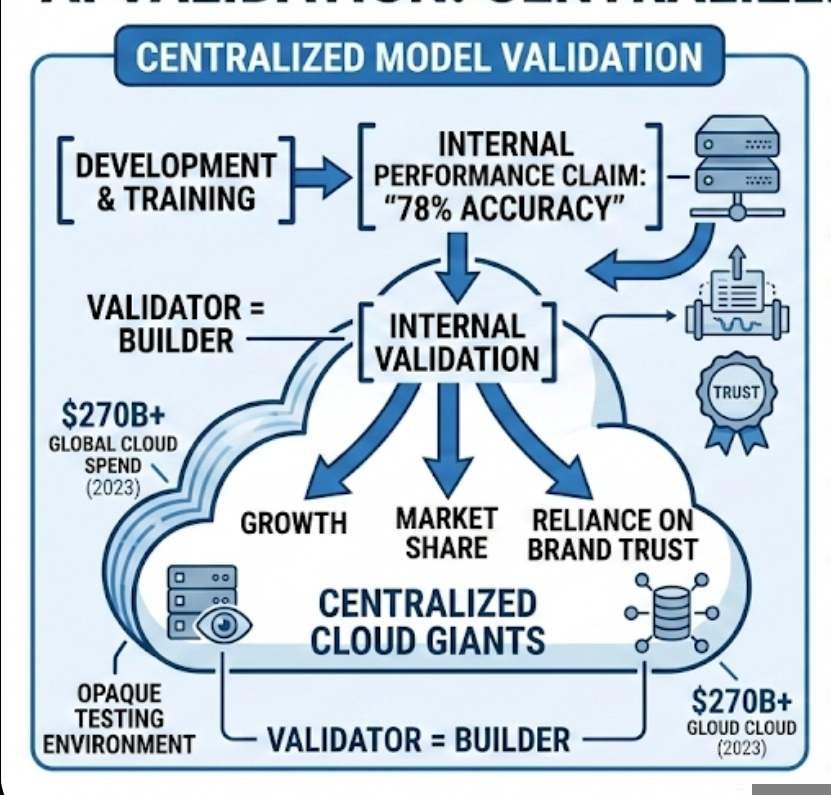

We’ve gotten used to AI outputs the way we got used to cloud storage. It’s just there. Invisible but assumed reliable. Most large AI systems today run on centralized cloud infrastructure. That means a single company controls the servers, the data pipelines, and the testing environment. When they publish performance metrics—say a model achieves 78% accuracy on a benchmark—we don’t really see the full testing setup. We see the result. We trust the brand. That trust has been earned over years, but it’s still a form of soft power.

Here’s where things start to feel slightly uncomfortable. The same company that builds the model usually validates it. Validation sounds technical, but it simply means checking whether the model actually performs as claimed. If an AI says it can detect fraud, someone needs to test it on real fraud cases. If it claims to predict short-term price direction with 60% accuracy over a 90-day period, that number should come from a clear dataset and timeframe. Otherwise it’s marketing dressed up as math.
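To see why the dataset and timeframe matter so much, here is a rough sketch of how much uncertainty hides inside a "60% over 90 days" claim. It assumes one independent directional call per day (my assumption, not something any vendor has stated) and uses the standard Wilson score interval. The function name and the 54-out-of-90 figure are purely illustrative.

```python
import math


def wilson_interval(hits: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for an observed hit rate."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = hits / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    margin = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - margin, center + margin


# Hypothetical claim: 54 correct calls out of 90 daily predictions (60%).
low, high = wilson_interval(54, 90)
print(f"claimed 60%, plausible range {low:.1%} to {high:.1%}")
```

Under these assumptions the plausible range runs from roughly 50% to roughly 70%, so a 90-day sample on its own can barely separate the claim from a coin flip. That is exactly why the test setup needs to be visible.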

Centralized cloud giants have scale on their side. Global cloud spending passed roughly $270 billion in 2023. That number reflects what businesses paid for computing services—servers, storage, network capacity. The scale allows fast deployment and massive training runs. But scale also concentrates control. When validation is internal, incentives align in one direction: growth, market share, stronger model performance claims. It doesn’t mean manipulation. It means there is no structural separation between builder and auditor.

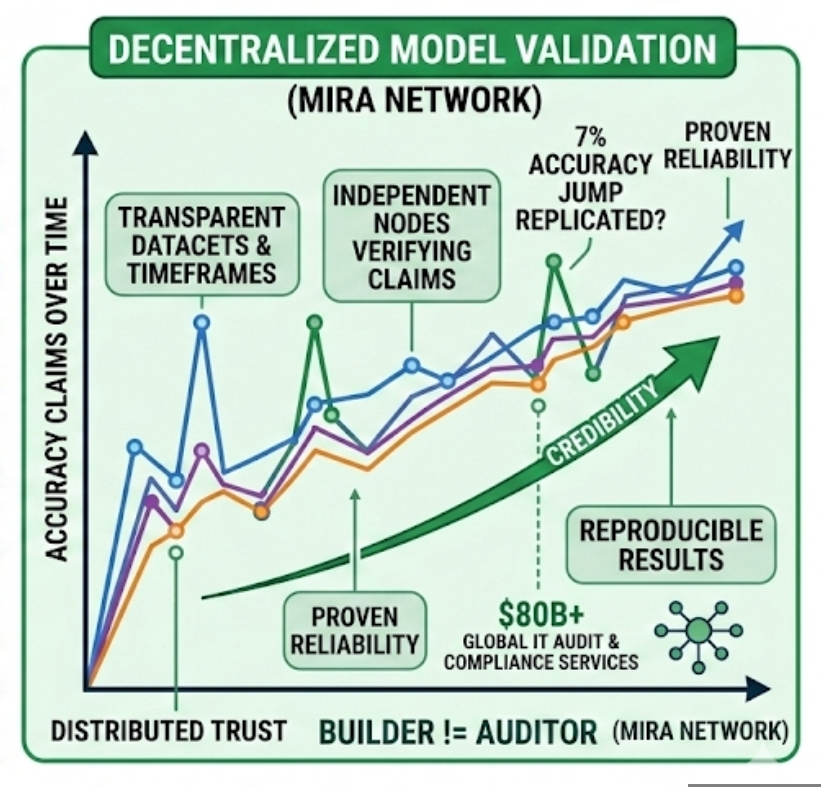

Mira Network approaches this from a different angle. Instead of assuming validation should live inside the same walls as model development, it distributes that process. Decentralized AI validation, in simple terms, means independent nodes on a network test and verify model claims. These nodes aren’t owned by the same central entity that profits from the model’s success. They operate under shared rules and economic incentives.

I’ll be honest—when I first heard about decentralized validation, it sounded like blockchain logic pasted onto AI. Validate everything, distribute trust, remove the middleman. But the more I thought about it, the more I realized AI is actually a better candidate for decentralization than simple financial transactions. Blockchain validates whether a transaction happened. AI validation is more subtle. It checks whether a claim about performance holds up across conditions.

If a model says it improved prediction accuracy from 55% to 62% over six months of market data, that 7-percentage-point jump (roughly a 13% relative improvement) matters. In trading terms, even a few percentage points can change profitability entirely. But that improvement only means something if the dataset, time window, and testing method are transparent and reproducible. A decentralized network can replicate those tests across multiple nodes. If results converge, credibility strengthens. If they don’t, the claim weakens quickly.
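Here is a minimal sketch of what "results converge" could mean in practice. To be clear, this is not Mira Network’s actual protocol. The function name, the tolerance value, and the node accuracy figures are all hypothetical, chosen only to illustrate the idea of comparing independent re-runs against a claim.

```python
from statistics import median


def check_claim(claimed: float, node_results: list[float], tolerance: float = 0.02) -> dict:
    """Compare a claimed accuracy with accuracies re-measured by independent nodes.

    Hypothetical rule: nodes "agree" if their results sit within `tolerance`
    of each other, and the claim is supported only if the nodes agree and
    their median lands within `tolerance` of the claimed figure.
    """
    if not node_results:
        raise ValueError("need at least one node result")
    mid = median(node_results)
    spread = max(node_results) - min(node_results)
    nodes_agree = spread <= tolerance
    return {
        "median": mid,
        "spread": spread,
        "nodes_agree": nodes_agree,
        "claim_supported": nodes_agree and abs(mid - claimed) <= tolerance,
    }


# Illustrative numbers only: four nodes re-running the same test for a 62% claim.
converging = check_claim(0.62, [0.615, 0.62, 0.625, 0.62])
diverging = check_claim(0.62, [0.62, 0.55, 0.58, 0.63])
print(converging["claim_supported"], diverging["claim_supported"])
```

In the first case the nodes land close together and near the claimed figure, so the claim holds up. In the second the spread is too wide, and the claim weakens no matter how confident the original post looked.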

And here’s something I find interesting from a behavioral angle. On platforms like Binance Square, visibility often depends on engagement metrics—likes, reposts, comments. Those are social validation signals, not technical ones. A post that “looks” intelligent can outperform one that’s quietly accurate. If decentralized validation becomes embedded into AI tools, the social layer and the proof layer could start to diverge. That shift alone might change how credibility forms online.

Of course, decentralization is not magic. Validators can collude. Incentives can misalign. If token rewards favor speed over depth, validators might rush reviews. Governance becomes a real issue. Who defines the benchmark datasets? Who updates them? If the benchmark is outdated, models might optimize for tests that no longer reflect real-world conditions. We’ve seen similar effects in education systems where students optimize for exams rather than understanding. AI systems can do the same.

There’s also the performance question. Centralized cloud environments are optimized for speed and coordination. A decentralized network, by definition, involves multiple independent participants. That can introduce latency. For applications like high-frequency trading—where trades happen in milliseconds—real-time decentralized validation may not be practical. But periodic audits, model scoring, and performance certification? That seems more realistic.

What makes this potentially disruptive to centralized cloud giants isn’t that it replaces their infrastructure. It doesn’t. Training large models still requires massive computing resources. But if validation becomes external, their grip on trust weakens slightly. And trust is not a small asset. Companies spend billions annually on compliance, auditing, and certification. Global IT audit and compliance services exceed $80 billion per year. That number exists because verification matters.

I keep circling back to one thought: in the next phase of AI, proving reliability may become more valuable than raw capability. Training costs are gradually decreasing as hardware improves and open-source frameworks mature. But independently verified performance? That might end up being the part that’s hard to find. And scarcity creates leverage.

Still, disruption rarely arrives in dramatic fashion. Cloud giants are adaptable. They can integrate decentralized components. They can partner with networks like Mira. They can open parts of their evaluation systems to external validators. The relationship might become hybrid rather than adversarial.

What feels different this time is the social context. AI outputs are shaping financial decisions, public discourse, even reputation systems. On Binance Square and similar platforms, traders already react quickly to AI-driven insights. If those insights begin to carry independently verified performance records—timestamped, reproducible, economically staked—it changes the psychological equation. Popularity becomes secondary to provability.

Maybe that’s the real shift. Not decentralized infrastructure replacing centralized clouds, but decentralized credibility sitting beside them. A second layer. Quieter. Less flashy. Harder to fake. And over time, the systems that can show their work instead of just presenting results may slowly earn the deeper kind of trust—the kind that doesn’t depend on brand power alone.


Mira Network and the Structural Shift in AI Validation: Rethinking Cloud Dominance

