There was a moment recently when I caught myself trusting an AI output a little too quickly.

It wasn’t a big decision. Just research. Numbers. A structured explanation that sounded clean and confident. But when I double-checked it, parts of it were subtly wrong. Not absurd. Not obviously fabricated. Just… slightly off.

That’s when it hit me. The problem with modern AI isn’t that it’s dumb. It’s that it’s confidently probabilistic.

And that’s the gap Mira Network is trying to close.

Mira doesn’t compete in the intelligence race. It doesn’t promise a smarter model or better prompts. It focuses on something more foundational — what happens after AI generates an answer but before we treat it as truth.

Most systems today operate on a single-source trust model. One model outputs a result. You either believe it or manually verify it. That structure works when humans are reviewing everything. It breaks when AI starts operating independently.

Mira’s approach is different.

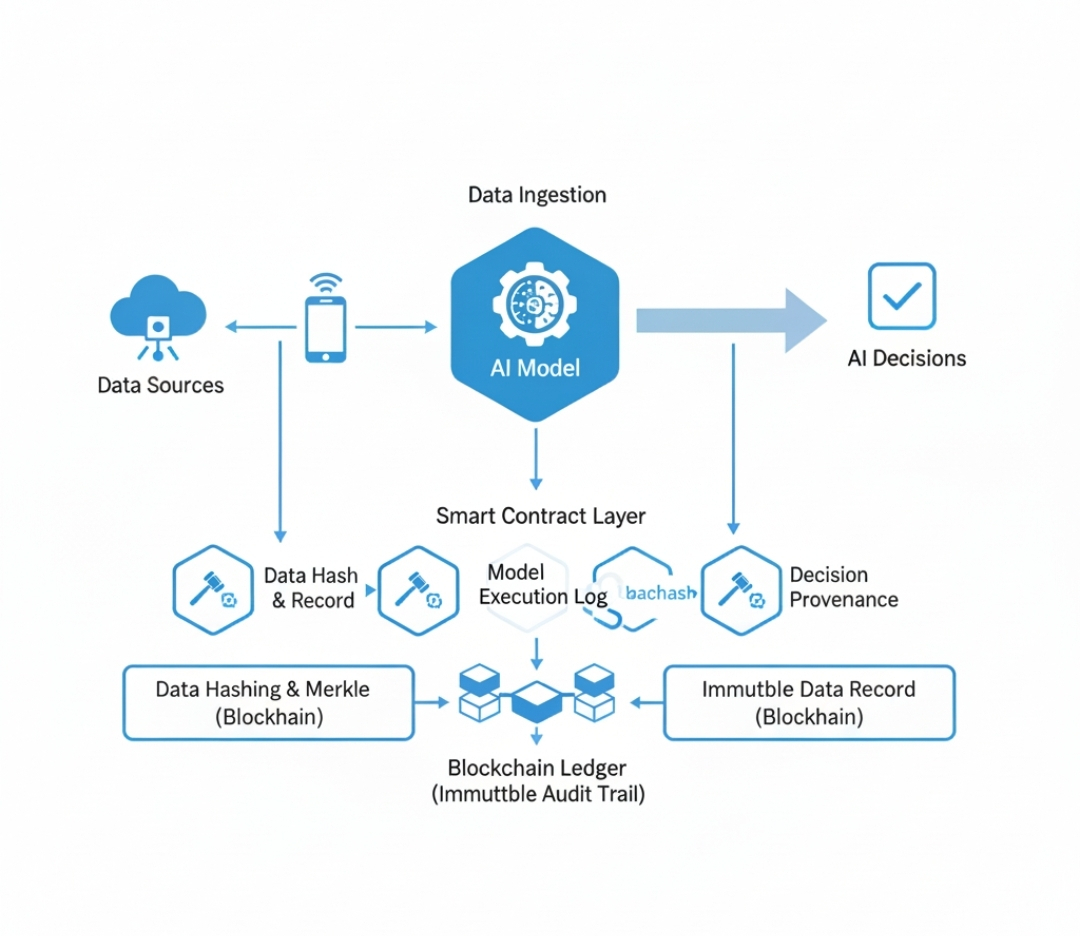

Instead of treating an AI response as one indivisible block, Mira decomposes it into smaller, verifiable claims. Those claims are distributed across independent validators within the network. Validators assess them separately, and consensus is reached using blockchain coordination combined with economic incentives.
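To make that flow concrete, here is a minimal Python sketch of the general pattern: split a response into claims, let several independent validators judge each claim, and accept a claim only when a supermajority approves it. Everything in it is a hypothetical illustration, not Mira's actual protocol or API. That includes the sentence-split `decompose`, the `Validator` type, the `verify` function, and the two-thirds threshold. The blockchain and staking layers are left out here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Validator:
    """Hypothetical validator: a name plus a function that judges one claim."""
    name: str
    judge: Callable[[str], bool]


def decompose(response: str) -> List[str]:
    # Naive stand-in for claim decomposition: treat each sentence as a claim.
    # Real decomposition has to be much more precise than this.
    return [s.strip() for s in response.split(".") if s.strip()]


def verify(response: str, validators: List[Validator],
           threshold: float = 2 / 3) -> Dict[str, bool]:
    # Every validator judges every claim independently; a claim is accepted
    # only if the share of approvals reaches the (assumed) threshold.
    results: Dict[str, bool] = {}
    for claim in decompose(response):
        approvals = sum(1 for v in validators if v.judge(claim))
        results[claim] = approvals / len(validators) >= threshold
    return results


# Toy setup: two validators check claims against a small fact set,
# and one overly lenient validator approves everything.
known_facts = {"Paris is the capital of France"}
validators = [
    Validator("a", lambda c: c in known_facts),
    Validator("b", lambda c: c in known_facts),
    Validator("lenient", lambda c: True),
]
print(verify("Paris is the capital of France. The Moon is made of cheese.", validators))
# {'Paris is the capital of France': True, 'The Moon is made of cheese': False}
```

The lenient validator approves the false claim, but on its own it cannot push that claim past the threshold. This is why the answer is judged claim by claim across several validators instead of accepted as one block.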

You’re no longer trusting a single model’s authority. You’re trusting distributed agreement under stake-backed conditions.

That shift matters more than it sounds.

The blockchain layer provides transparency. Validation outcomes are recorded immutably. Validators have economic exposure, which means approving false claims carries cost. The system doesn’t rely on reputation alone. It relies on incentive alignment.
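The incentive side can be sketched the same way. The Python below settles one claim by stake-weighted vote. It then rewards validators who sided with the outcome and slashes a fraction of stake from those who did not. The `StakedValidator` type, the `settle` function, the 10% slash rate, and the 1% reward rate are all hypothetical, and the sketch does not describe how Mira actually settles votes. Note one assumption baked in: the consensus outcome stands in for "truth". That is exactly why collusion among large stakeholders matters.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class StakedValidator:
    name: str
    stake: float  # hypothetical units of bonded stake


def settle(votes: Dict[str, bool], validators: Dict[str, StakedValidator],
           slash_rate: float = 0.10, reward_rate: float = 0.01) -> bool:
    """Settle one claim by stake-weighted vote, then apply rewards and slashing.

    `votes` maps validator name -> True (claim valid) or False (claim invalid).
    """
    stake_for = sum(validators[n].stake for n, v in votes.items() if v)
    stake_against = sum(validators[n].stake for n, v in votes.items() if not v)
    # A tie counts as "not verified": the claim needs strictly more stake behind it.
    outcome = stake_for > stake_against
    for name, vote in votes.items():
        validator = validators[name]
        if vote == outcome:
            validator.stake *= 1 + reward_rate  # small reward for matching consensus
        else:
            validator.stake *= 1 - slash_rate   # lose part of the stake for voting against it
    return outcome


validators = {
    "a": StakedValidator("a", 100.0),
    "b": StakedValidator("b", 50.0),
    "c": StakedValidator("c", 30.0),
}
# "c" approves a claim that the other two, holding more stake, reject.
print(settle({"a": False, "b": False, "c": True}, validators))  # False
print({n: round(v.stake, 2) for n, v in validators.items()})
# {'a': 101.0, 'b': 50.5, 'c': 27.0}
```

Multiplicative slashing makes repeated bad approvals compound: a validator that keeps backing claims the network rejects steadily loses both stake and voting weight.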

What makes this particularly relevant is where AI is heading.

We’re already seeing early forms of AI agents interacting with financial systems, executing trades, managing treasury operations, and influencing governance decisions. Once AI moves from generating drafts to executing actions, accuracy becomes infrastructure.

Mira assumes hallucinations are structural, not accidental. It doesn’t promise to eliminate them. It builds around them.

That’s a mature stance.

Of course, this introduces real engineering challenges. Claim decomposition must be precise. Validator diversity must be maintained to prevent shared bias. Verification adds overhead and latency. Collusion risks must be managed.
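The validator-diversity point can be made quantitative with a standard binomial argument. Suppose each of n validators independently misjudges a claim with probability p. A simple majority is then wrong only when more than half of them err, and that probability falls quickly as n grows. If the validators share the same bias, their errors are perfectly correlated, and adding more of them buys nothing. The sketch assumes simple majority voting, an odd n, and a uniform error rate. It illustrates why diversity matters and does not model Mira's validator set. Real validators, such as models trained on similar data, fall somewhere between these two extremes.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a simple majority of n validators is wrong,
    assuming each errs independently with probability p."""
    if n % 2 == 0:
        raise ValueError("use an odd number of validators to avoid ties")
    k_min = n // 2 + 1  # smallest number of wrong votes that flips the majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def correlated_error(n: int, p: float) -> float:
    # Fully shared bias: every validator errs on the same claims, so the
    # majority is wrong exactly as often as a single validator (n is irrelevant).
    return p


p = 0.1
for n in (1, 3, 5, 11):
    print(n, round(majority_error(n, p), 5), correlated_error(n, p))
```

With p = 0.1, three independent validators already cut the majority's error rate from 10% to 2.8%. The fully correlated case stays at 10% however many validators are added.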

But the thesis feels directionally correct.

Intelligence alone doesn’t create trust. Verification does.

As AI systems become more autonomous, the demand for cryptographically verifiable outputs will increase. Centralized moderation won’t scale. Brand reputation won’t scale. Manual review certainly won’t scale.

Mira positions itself as the trust infrastructure layer that sits beneath AI autonomy — turning probabilistic outputs into consensus-backed information.

It’s not loud. It’s not flashy.

But if AI keeps moving toward real economic and governance roles, this kind of layer won’t be optional.

It will be foundational.