After spending time reading through how Mira Network is structured, I’ve started to see it less as another blockchain project and more as an attempt to address something deeper: the reliability gap in AI systems.

Most AI models today, no matter how advanced, still produce hallucinations. They generate answers that sound correct but aren’t. In centralized systems, the responsibility for fixing that sits with the company running the model. Internal audits, fine-tuning, and safety layers all happen behind closed doors. Users have to trust the provider.

Mira Network takes a different route.

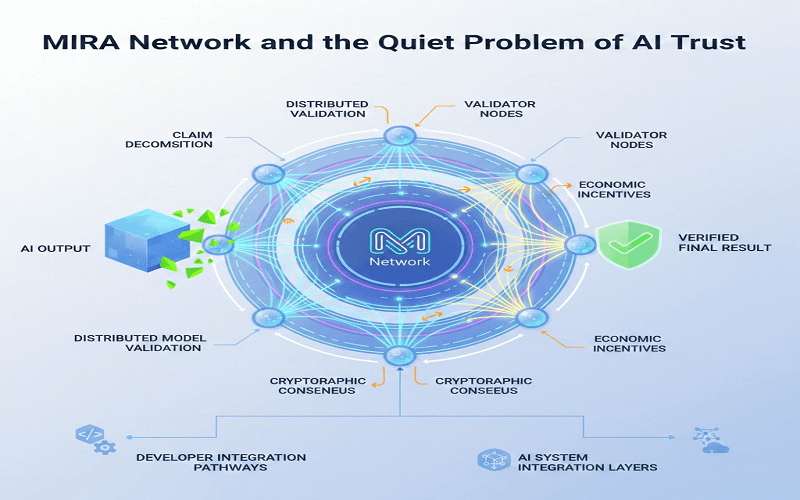

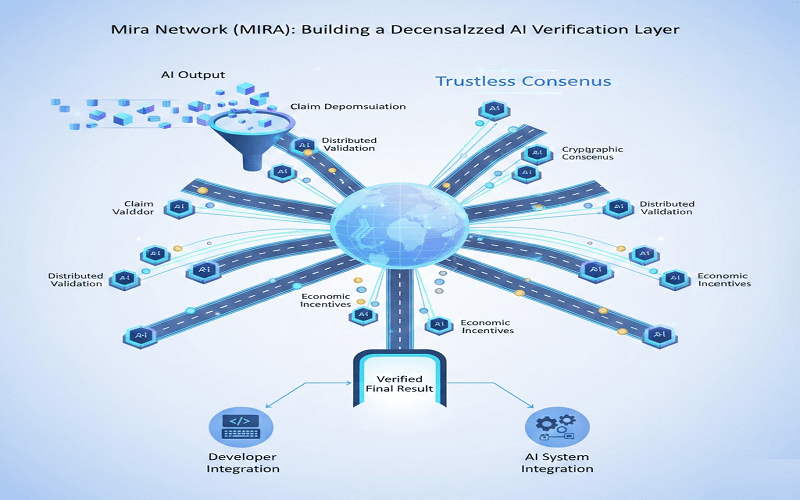

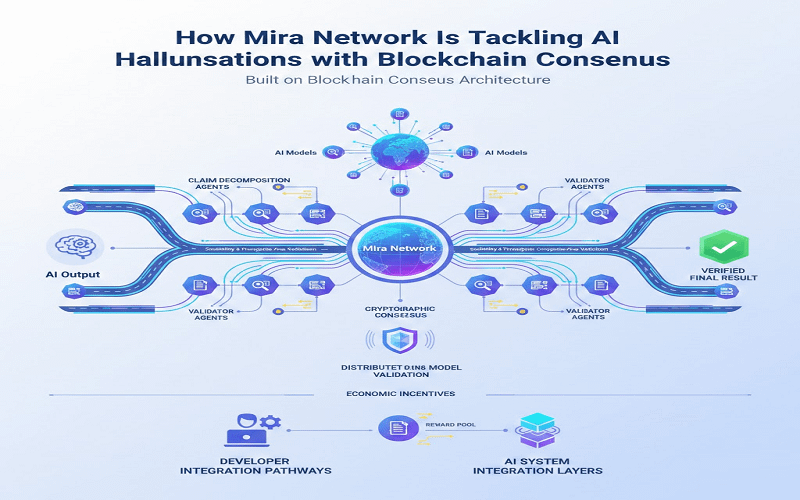

Instead of assuming one model can be made perfectly reliable, it treats AI outputs as claims that need verification. When an AI generates an answer, Mira’s protocol breaks that output into smaller factual statements. Those claims are then distributed across independent models in the network for cross-validation. If enough of them agree, the claim gains confidence. If they diverge, it’s flagged.
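To make that flow concrete for myself, I wrote a small toy version of it. This is only a sketch under my own assumptions, not Mira’s actual protocol: the sentence-based `split_into_claims`, the two-thirds `threshold`, and the stand-in validators (`strict`, `lenient`) are all hypothetical, and real validators would be independent models rather than lookup functions.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ClaimResult:
    claim: str
    verdict: str       # "verified" or "flagged"
    agreement: float   # share of validators backing the majority vote


def split_into_claims(output: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def cross_validate(claim: str, validators, threshold: float = 2 / 3) -> ClaimResult:
    """Ask every validator about the claim and apply a simple majority rule."""
    votes = [validate(claim) for validate in validators]
    top_vote, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    # A claim is verified only if enough validators agree that it is TRUE.
    verified = top_vote is True and agreement >= threshold
    return ClaimResult(claim, "verified" if verified else "flagged", agreement)


# Hypothetical validators: two check against a tiny fact set, one accepts anything.
known_facts = {"Paris is the capital of France"}


def strict(claim: str) -> bool:
    return claim in known_facts


def lenient(claim: str) -> bool:
    return True


validators = [strict, strict, lenient]
output = "Paris is the capital of France. The Moon is made of cheese."
results = [cross_validate(c, validators) for c in split_into_claims(output)]

for r in results:
    print(f"{r.verdict:9} {r.agreement:.2f}  {r.claim}")
```

The point of the toy is the shape, not the details: the output is never judged as a whole, only claim by claim, and one permissive validator can’t push a false claim through on its own.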

It reminds me of how fact-checking works in journalism. A single reporter might write a story, but editors and independent sources review it before publication. Mira tries to formalize that process into a protocol layer.

The important difference is that this validation doesn’t rely on a central authority. It uses blockchain-based consensus and cryptographic proofs to record the validation process. The result isn’t “trust us.” It’s verifiable evidence that multiple independent systems reached similar conclusions.

That’s where the blockchain element becomes practical rather than symbolic.

Instead of storing large AI outputs directly on-chain, Mira records verification results and proofs. Validators are economically incentivized through the $MIRA token. If they provide accurate validations, they’re rewarded. If they behave dishonestly, the system can penalize them. The goal isn’t perfection; it’s alignment of incentives toward honest verification.
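Here is how I picture those two ideas together, again as a rough sketch rather than anything taken from Mira’s code. The record format, the `settle` function, and the `reward` and `penalty` amounts are my own assumptions. Treating “disagreed with the majority” as “dishonest” is a deliberate simplification; the source material doesn’t spell out the real reward or penalty rules.

```python
import hashlib
import json


def verification_record(output: str, verdicts: dict[str, bool]) -> dict:
    """Build a compact record: a hash of the output plus each validator's verdict.

    Only the fixed-size digest stands in for the (possibly large) AI output.
    """
    digest = hashlib.sha256(output.encode("utf-8")).hexdigest()
    return {"output_sha256": digest, "verdicts": verdicts}


def settle(stakes: dict[str, float], verdicts: dict[str, bool],
           reward: float = 1.0, penalty: float = 5.0) -> dict[str, float]:
    """Reward validators who voted with the majority and penalize the rest.

    Assumption: majority agreement is used as a proxy for honest validation.
    """
    majority = sum(verdicts.values()) * 2 > len(verdicts)
    updated = dict(stakes)
    for validator, vote in verdicts.items():
        if vote == majority:
            updated[validator] += reward
        else:
            updated[validator] = max(0.0, updated[validator] - penalty)
    return updated


stakes = {"a": 100.0, "b": 100.0, "c": 100.0}
verdicts = {"a": True, "b": True, "c": False}

record = verification_record("Paris is the capital of France.", verdicts)
payload = json.dumps(record, sort_keys=True)  # what would be written to the ledger
updated = settle(stakes, verdicts)

print(payload)
print(updated)
```

Making the penalty larger than the reward is one way to express the incentive idea: a validator gains little from a single correct vote but loses noticeably from a bad one.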

When I looked through the updates and documentation from @Mira - Trust Layer of AI, what stood out wasn’t grand promises. It was the emphasis on structured validation. The protocol doesn’t try to replace large AI models. It sits beneath them, like a verification layer.

In traditional AI deployment, a company releases a model and maybe adds a monitoring tool. In #MiraNetwork, validation becomes decentralized infrastructure. Any application that relies on AI (financial analytics, research tools, content generation) could theoretically plug into this layer to check outputs before acting on them.

That separation is subtle but important.

Instead of asking, “Is this AI smart?” Mira asks, “Can we verify what it just said?”

Of course, the system isn’t without challenges. Breaking AI outputs into structured claims adds computational overhead. Running multiple models for cross-validation increases cost. Coordination between validators introduces complexity. And the broader decentralized AI space is becoming competitive, with other protocols exploring similar verification or compute-sharing models.
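A back-of-the-envelope calculation shows how quickly that overhead grows. The numbers below are purely illustrative and assume one extra call to extract the claims, plus one call per claim per validator, with every call costing the same. None of these figures come from Mira.

```python
def extra_model_calls(num_claims: int, num_validators: int,
                      extraction_calls: int = 1) -> int:
    """Extra model calls needed to verify one generated answer.

    Assumption: every claim is sent to every validator exactly once.
    """
    return extraction_calls + num_claims * num_validators


for claims in (3, 5, 10):
    calls = extra_model_calls(claims, num_validators=3)
    print(f"{claims} claims x 3 validators -> {calls} extra calls")
```

Under those assumptions, an answer with five claims checked by three validators needs 16 model calls on top of the one that produced it, which is why the cost side of the trade-off matters.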

There’s also the reality that the ecosystem is still early. Tooling, developer adoption, and standardization will take time. A verification layer only becomes meaningful when applications integrate it consistently.

Still, I find the framing compelling.

Rather than chasing bigger models, #Mira focuses on accountability between models. It accepts that hallucinations won’t disappear overnight. Instead, it builds a distributed system that reduces blind trust and replaces it with recorded consensus.

That shift from centralized assurance to trustless validation feels like the real experiment here.

And whether it scales or not, it reflects a growing recognition that intelligence alone isn’t enough; verification matters just as much. #GrowWithSAC