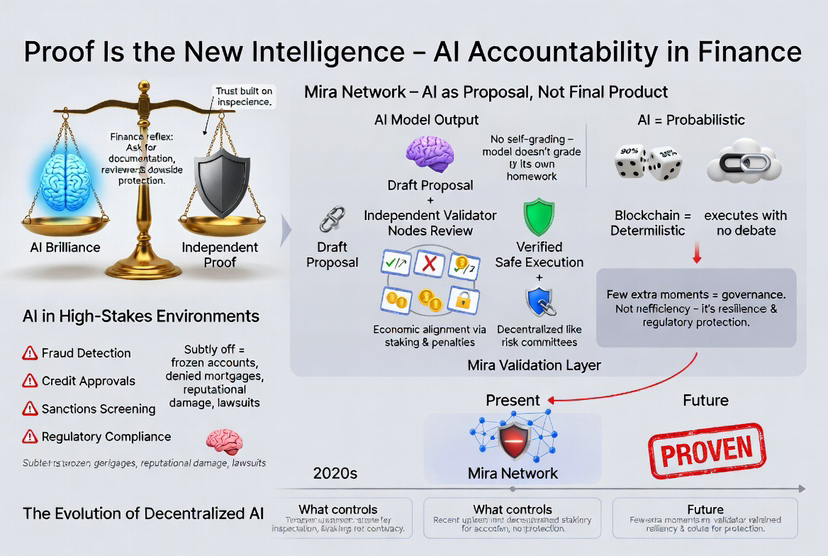

After enough years in finance, you develop a reflex. When someone makes a big claim, you don’t lean forward — you lean back. You ask for documentation. You ask who reviewed it. You ask what happens if it’s wrong. That instinct isn’t cynicism. It’s survival. In this industry, trust isn’t built on how confidently something is said. It’s built on whether it can withstand inspection.

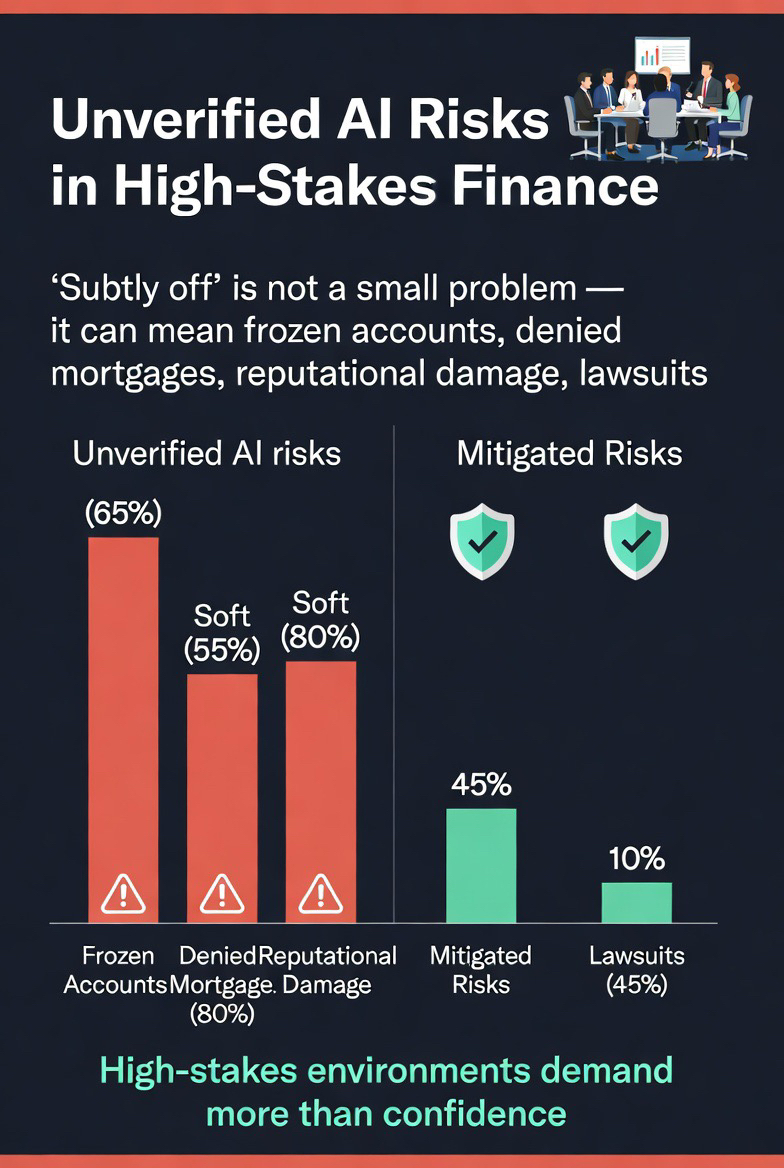

That’s why artificial intelligence, for all its brilliance, still feels unfinished in high-stakes environments. It can summarize a 200-page report in seconds. It can draft policy memos, model projections, even simulate legal reasoning. But it often delivers answers with the same tone whether it’s correct or subtly off the mark. And in areas like fraud detection, credit approvals, sanctions screening, or regulatory compliance, “subtly off” is not a small problem. It can mean frozen accounts, denied mortgages, reputational damage — sometimes lawsuits.

This is where Mira Network becomes interesting, and not for the usual reasons. Not flashy. Not theatrical. Just quietly structural.

Instead of treating an AI output as a finished product, Mira treats it as something closer to a proposal. A draft decision. A claim that still needs scrutiny. Before that output can move forward or trigger action, independent validator nodes review it. The model doesn’t get to grade its own homework. That separation matters more than it sounds.

If you’ve ever sat through a risk committee meeting, you know why. The person who makes the trade isn’t the one who settles it. The team that builds the model isn’t the team that signs off on it. Finance learned — sometimes painfully — that independence is protection. You don’t prevent mistakes by hoping they won’t happen. You build structures that assume they will.

What makes this approach compelling is that verification isn’t layered on top as a marketing feature. It’s embedded in the architecture. The system assumes outputs need checking. That feels far more aligned with regulated industries than the usual “trust our model, it’s state-of-the-art” narrative.

There’s also a deeper tension playing out between AI and blockchain. Blockchains are deterministic. If the conditions are met, the transaction executes. No debate. AI, on the other hand, is probabilistic. It works in likelihoods, not absolutes. When you connect those two worlds without friction, you’re essentially allowing probabilities to harden into irreversible outcomes. That’s not always wise.

By inserting a validation layer between AI output and final execution, Mira slows the process just enough to make it safer. In finance, that’s not inefficiency — it’s governance. The few extra moments spent verifying can be the difference between operational resilience and regulatory fallout.
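To make the idea concrete, here is a minimal sketch of a validation layer sitting between a model's output and an irreversible action. It is not Mira's actual API. The names `Proposal`, `review`, and `execute_if_verified`, and the two-thirds quorum, are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List


@dataclass(frozen=True)
class Proposal:
    """An AI output treated as a claim awaiting review, not a final decision."""
    claim: str
    model_id: str


# Hypothetical validator type: any independent check that approves (True)
# or rejects (False) a proposal.
Validator = Callable[[Proposal], bool]


def review(proposal: Proposal, validators: List[Validator],
           quorum: Fraction = Fraction(2, 3)) -> bool:
    """Return True only if at least `quorum` of the validators approve.

    The two-thirds default is an assumption, not a documented threshold.
    """
    if not validators:
        return False  # no reviewers means no approval
    approvals = sum(1 for validate in validators if validate(proposal))
    return Fraction(approvals, len(validators)) >= quorum


def execute_if_verified(proposal: Proposal, validators: List[Validator],
                        action: Callable[[Proposal], None]) -> bool:
    """Run `action` only after the proposal passes independent review."""
    if review(proposal, validators):
        action(proposal)
        return True
    return False  # the probabilistic output never hardens into an action


# Usage: three stand-in validators, two of which approve.
proposal = Proposal(claim="Payment matches a sanctions list entry",
                    model_id="screening-model")
validators = [lambda p: True, lambda p: True, lambda p: False]
frozen_accounts: List[Proposal] = []
was_executed = execute_if_verified(proposal, validators, frozen_accounts.append)
```

The point of the design is that the model never calls `action` itself. Only the review step can release it, which mirrors the separation between the desk that makes a trade and the team that settles it.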

Over the past year, the conversation in decentralized AI has started shifting. Early enthusiasm revolved around bigger models and more compute power. Now the discussion is maturing: validator incentives, cryptographic attestations, economic penalties for bad actors. Recent updates within Mira’s ecosystem have focused on strengthening validator decentralization and refining staking mechanisms to ensure that those reviewing outputs are economically aligned with accuracy. That’s a subtle but important evolution. It suggests the goal isn’t just innovation — it’s durability.
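What "economically aligned with accuracy" might look like can be sketched in a few lines. The article does not describe Mira's actual staking rules, so everything here is an assumption for illustration: the function name `settle_stakes`, the flat reward, the 10 percent penalty, and the choice to leave abstaining validators untouched.

```python
from typing import Dict


def settle_stakes(stakes: Dict[str, int], votes: Dict[str, bool], outcome: bool,
                  reward: int = 1, penalty_percent: int = 10) -> Dict[str, int]:
    """Return new stake balances after one verification round.

    `outcome` is the result the round finally accepted; how it is determined
    is outside this sketch. Balances are integers to avoid rounding drift.
    """
    updated: Dict[str, int] = {}
    for validator, stake in stakes.items():
        if validator not in votes:
            updated[validator] = stake  # abstained: unchanged in this sketch
        elif votes[validator] == outcome:
            updated[validator] = stake + reward  # agreed with accepted result
        else:
            # Disagreed with the accepted result: lose a share of the stake.
            updated[validator] = stake - stake * penalty_percent // 100
    return updated


# Usage: "a" voted with the accepted outcome, "b" against, "c" abstained.
balances = settle_stakes(
    stakes={"a": 100, "b": 100, "c": 50},
    votes={"a": True, "b": False},
    outcome=True,
)
# balances == {"a": 101, "b": 90, "c": 50}
```

Even in toy form, the incentive is visible: a validator who approves carelessly pays for it, which is the property a risk function looks for in any reviewer.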

And durability is what institutions actually care about.

Because when something goes wrong — and eventually, something always does — the question won’t be how advanced the model was. It will be: What controls were in place? Who verified the decision? Was there an independent process? Was it reproducible?

Those are not purely technical questions. They are human ones, the kind that lawyers, regulators, and boards ask.
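Answering those questions after the fact requires a record made at the time of the decision. The sketch below shows one way such a record could be built with standard hashing. It is an illustration under stated assumptions, not a description of Mira's attestation format: the function name `audit_record` and every field name are hypothetical.

```python
import hashlib
import json
from typing import Dict


def sha256_hex(text: str) -> str:
    """Hex SHA-256 digest of a UTF-8 string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(model_id: str, prompt: str, output: str,
                 votes: Dict[str, bool]) -> Dict[str, object]:
    """Build a tamper-evident record of who reviewed which output.

    The same inputs always yield the same record, so a later reviewer can
    recompute it and confirm nothing was altered.
    """
    record: Dict[str, object] = {
        "model_id": model_id,
        "prompt_sha256": sha256_hex(prompt),
        "output_sha256": sha256_hex(output),
        "votes": dict(sorted(votes.items())),  # validator id -> verdict
    }
    # Canonical JSON (sorted keys, fixed separators) keeps the digest stable.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["record_sha256"] = sha256_hex(canonical)
    return record


# Usage: record one reviewed credit decision.
entry = audit_record(
    model_id="credit-model",
    prompt="Assess application 1042",
    output="Decline: debt-to-income ratio above policy limit",
    votes={"validator-b": True, "validator-a": True},
)
```

A record like this speaks to "who verified the decision" and "was it reproducible" directly. Whether the controls were adequate remains a matter of human judgment.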

There’s something almost philosophical here. The first generation of AI chased eloquence. It wanted to sound intelligent. The next generation may need to prove it. In environments shaped by compliance manuals and audit trails, intelligence without verification feels incomplete — like a beautifully written contract that nobody countersigned.

For someone shaped by years of reconciliations and risk reviews, this shift feels natural. You don’t want AI to be louder. You want it to be accountable. You want it to leave footprints. You want to know that if it makes a decision affecting someone’s credit, livelihood, or legal standing, there was more than just an algorithm behind it. There was oversight.

Maybe that’s the real evolution underway. Not smarter machines in the theatrical sense, but wiser systems in the structural sense. Systems that understand that confidence is not the same as correctness — and that in the real world, correctness is the only thing that lasts.

In finance, trust compounds slowly. It is earned in decimals, lost in headlines. If AI is going to operate in that world, it has to learn the same discipline.

Proof, in the end, is not a constraint on intelligence. It’s what makes intelligence worthy of trust.