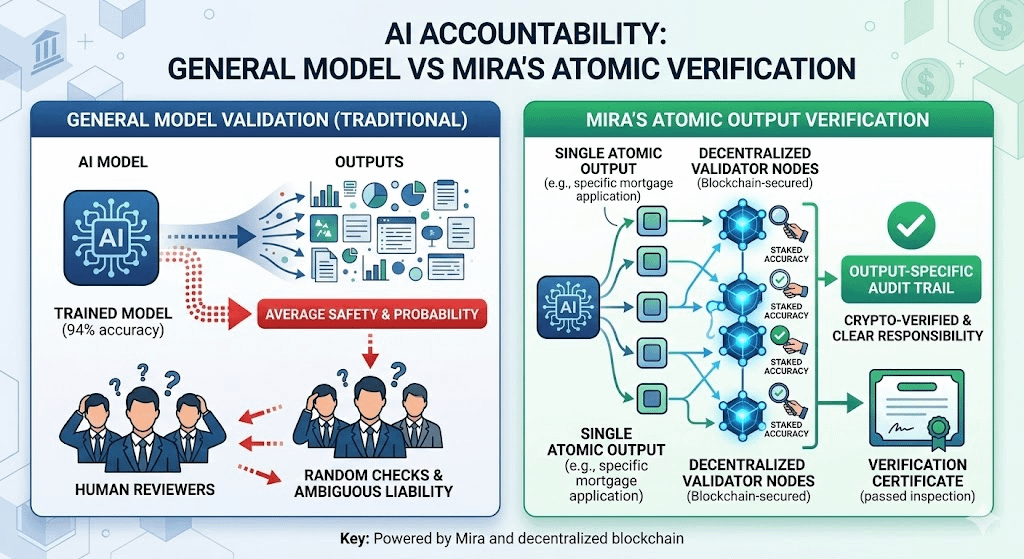

As artificial intelligence spreads through financial and governance systems, the industry faces a question it has long avoided: when an autonomous system makes a flawed decision with severe consequences, who bears ultimate responsibility? This ambiguity about accountability is not just a legal hurdle; it is arguably the largest bottleneck keeping AI out of institutional sectors, where an error is not merely a technical glitch but a serious financial and reputational risk. Most current solutions manage the model as a whole through bias dashboards or explainability cards; these can indicate that a problem exists, but they do not guarantee the reliability of any specific output. This is where Mira’s decentralized verification model changes the game by shifting the focus from "average model safety" to "individually audited results."

The core problem of modern AI is that outputs are often treated as suggestions rather than legally binding decisions. This creates a "gray zone" where organizations reap the benefits of machine speed while evading liability when things go wrong. A credit model may be correct ninety-four percent of the time, but the remaining six percent means roughly 600 wrong decisions in every 10,000 applications, and each one is a catastrophe for someone unfairly denied a loan. Mira addresses this by establishing a verification infrastructure where every AI output must undergo an auditing process before execution. This is akin to a manufacturer labeling each specific product as "passed inspection" rather than just claiming that its production line meets standards in general. The result is a transparent audit trail, the very thing regulators and courts demand in sensitive industries like insurance or banking.
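To make the "inspect every output, keep a trail" idea concrete, here is a minimal Python sketch. It is not Mira's actual protocol or API: the names `AuditLog` and `verify_before_execution`, the two-thirds quorum, and the hash-chained log are all assumptions chosen for illustration. Each output is checked by several independent validators, the votes are recorded in a tamper-evident log, and the caller is only told to execute when the quorum approves.

```python
import hashlib
import json
from dataclasses import dataclass, field
from fractions import Fraction
from typing import Callable, List

# Hypothetical validator: any function that inspects ONE output and
# returns True (approve) or False (reject).
Validator = Callable[[dict], bool]

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry commits to the one before it."""
    entries: List[dict] = field(default_factory=list)

    def append(self, output: dict, votes: List[bool], approved: bool) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"output": output, "votes": votes,
                "approved": approved, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def is_intact(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


def verify_before_execution(output: dict, validators: List[Validator],
                            log: AuditLog,
                            quorum: Fraction = Fraction(2, 3)) -> bool:
    """Record every validator's vote, then approve only if quorum is met."""
    if not validators:
        raise ValueError("at least one validator is required")
    votes = [bool(v(output)) for v in validators]
    approved = Fraction(sum(votes), len(votes)) >= quorum
    log.append(output, votes, approved)  # rejected outputs are logged too
    return approved


# Illustrative use: a loan denial that two of three checks find suspicious.
log = AuditLog()
checks = [
    lambda o: o["decision"] in {"approve", "deny"},               # well-formed
    lambda o: not (o["decision"] == "deny" and o["score"] >= 700),  # consistency
    lambda o: "reason" in o,                                       # has a stated reason
]
decision = {"applicant": "A-1", "score": 720, "decision": "deny"}
may_execute = verify_before_execution(decision, checks, log)
```

The point of the design is that the record is per output, not per model: a rejected decision leaves the same kind of entry as an approved one, so a later reviewer can see which checks failed on which specific result. A real deployment would replace the in-memory list with a shared ledger and the lambdas with independent validator nodes.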

The most innovative aspect of Mira lies in its blockchain-based economic incentive mechanism: validators are rewarded for accuracy and penalized financially for negligence or fraud. This creates a trust market where accountability is no longer an abstract concept but a clear financial commitment. Challenges remain around latency and the balance between security and performance, but an AI system that can show how each decision was verified will hold a very different status from one that offers only a probability of being right. In 2026, trust is no longer granted; it is built through every transaction and every transparent verification step, so that when an error occurs, we know where the responsibility lies. @Mira - Trust Layer of AI $MIRA #Mira