Artificial intelligence is no longer a futuristic concept; it’s part of our everyday reality. It writes reports, analyzes markets, assists doctors, supports researchers, and even helps governments interpret data. The speed is impressive. The scale is unmatched. But beneath all that capability lies a simple question that still doesn’t have a universal answer: Can we fully trust it?

AI systems are designed to predict patterns and generate responses based on probabilities. They are incredibly good at sounding confident. The challenge is that confidence does not always equal correctness. A response can look polished and convincing while quietly carrying subtle factual errors, reasoning flaws, or contextual misunderstandings. In low-risk environments, that might be manageable. In high-stakes sectors like finance, healthcare, or infrastructure, even a small mistake can trigger significant consequences.

The Hidden Gap in Modern AI Design

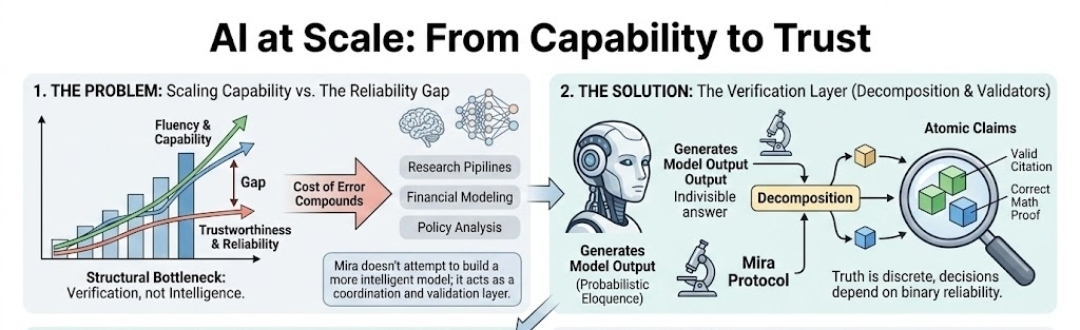

Most advanced AI models are optimized for efficiency: faster outputs, larger datasets, better pattern recognition. These goals make sense from a performance standpoint. However, the architecture often prioritizes generation over verification. Once the output is produced, it is typically accepted at face value unless manually reviewed.

That gap between generating intelligence and validating it is where uncertainty lives.

As organizations integrate AI deeper into decision-making pipelines, this structural limitation becomes harder to ignore. Automation increases productivity, but without a mechanism to confirm accuracy independently, it also increases risk exposure.

A Different Philosophy: Verification Before Trust

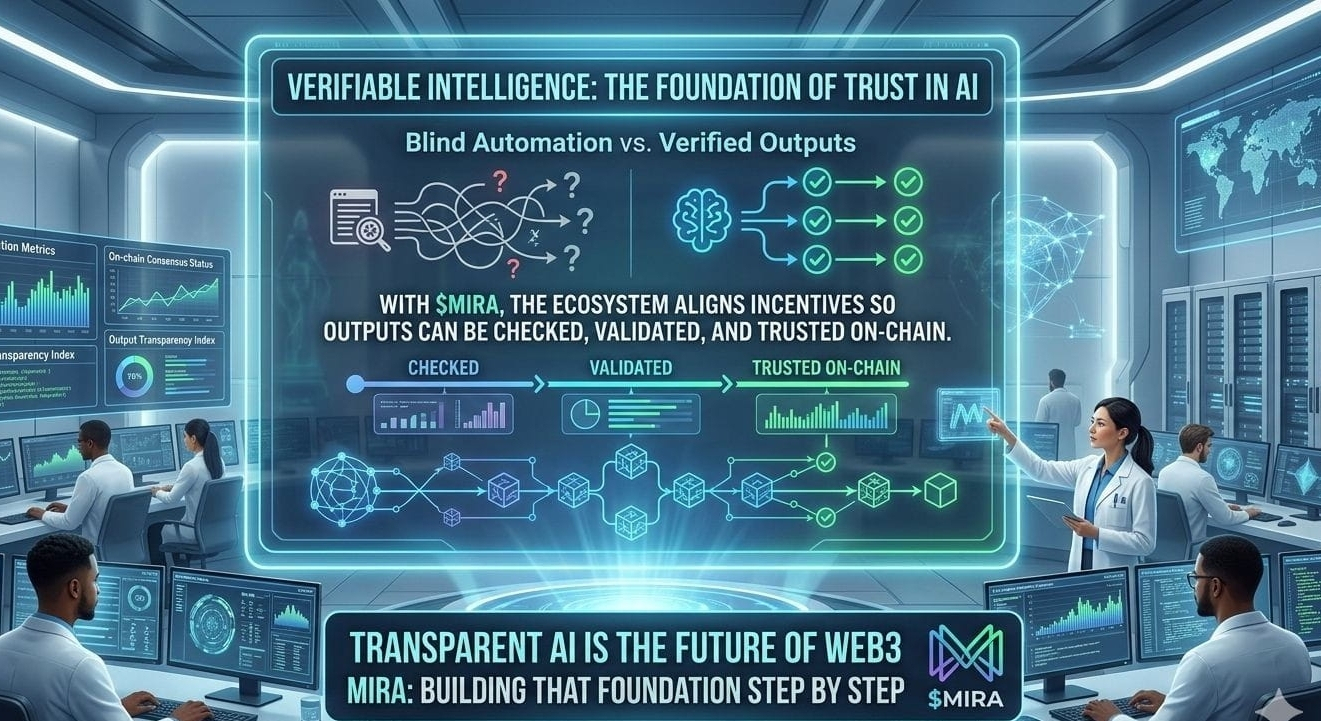

This is where $MIRA Network introduces a shift in perspective.

Instead of competing to build the biggest or fastest model, Mira focuses on something equally critical: validating what AI systems produce. The idea is simple yet powerful: separate creation from confirmation.

In this architecture, AI can generate insights, but those insights are not immediately treated as final truth. They pass through a decentralized verification layer that evaluates claims before they are relied upon or executed. This separation creates a clear boundary between output and approval.

Turning Complex Answers Into Checkable Claims

One of the most innovative aspects of the system is how it handles AI responses. Rather than reviewing a large block of generated content as a whole, it breaks the output into structured, testable assertions.

Each statement becomes a claim that can be individually examined.

This granular method reduces the risk of hidden inaccuracies slipping through. If one part of a response is flawed, it doesn’t invalidate or contaminate everything else. Precision improves because verification happens at the smallest meaningful unit of information.
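To make the idea of claim-level review concrete, here is a minimal sketch in Python. The article does not describe how Mira actually decomposes a response, so everything here is an assumption for illustration: the `Claim` type and `split_into_claims` function are hypothetical names, and splitting on sentence boundaries is a deliberately naive stand-in for whatever extraction method a real system would use.

```python
import re
from dataclasses import dataclass


@dataclass
class Claim:
    """One individually checkable statement (hypothetical type)."""
    claim_id: int
    text: str


def split_into_claims(response: str) -> list[Claim]:
    """Naively treat each sentence as one claim.

    Assumption: sentences end with '.', '!' or '?' followed by whitespace.
    A real system would need far more careful extraction.
    """
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


response = "Paris is the capital of France. The Seine flows through Berlin."
for claim in split_into_claims(response):
    print(claim.claim_id, claim.text)
```

With the response split this way, the second sentence can be rejected on its own while the first remains usable, which is the point of verifying at the smallest meaningful unit.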

Decentralized Review, Not Single-Point Authority

Traditional validation systems often rely on a central authority. While efficient, centralization can introduce bias, shared blind spots, or single points of failure.

Mira Network distributes the verification process across independent participants. Validators assess claims separately, applying diverse reasoning and perspectives. A conclusion is accepted only when consensus is reached.

This distributed model enhances resilience. It reduces dependence on any single reviewer and minimizes systemic blind spots that may arise within one analytical framework.
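The consensus step can be sketched in a few lines of Python. The article does not state which consensus rule Mira uses, so this example assumes a simple supermajority vote: the `reach_consensus` function, the two-thirds threshold, and the verdict labels are all hypothetical choices made for illustration.

```python
from collections import Counter
from typing import Optional


def reach_consensus(votes: dict, threshold: float = 2 / 3) -> Optional[str]:
    """Return the winning verdict if enough validators agree, else None.

    votes maps a validator name to its verdict for one claim.
    Assumption: a verdict is accepted once its share of the votes
    is at least `threshold`.
    """
    if not votes:
        return None
    verdict, count = Counter(votes.values()).most_common(1)[0]
    return verdict if count / len(votes) >= threshold else None


votes = {
    "validator_a": "supported",
    "validator_b": "supported",
    "validator_c": "refuted",
}
print(reach_consensus(votes))  # prints "supported"
```

When no verdict reaches the threshold, the function returns `None`, meaning the claim stays unapproved rather than defaulting to the largest minority view.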

Transparency Through Immutable Records

Trust is strengthened when processes are transparent. To achieve this, verification outcomes are recorded on blockchain infrastructure. Once documented, results cannot be altered retroactively.

This creates a permanent audit trail, an essential feature for enterprises operating in regulated industries. Whether for compliance, internal accountability, or external review, organizations can demonstrate that AI-driven conclusions were independently validated before deployment.
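The property that makes such a record trustworthy is tamper evidence, which a hash chain illustrates well. The Python sketch below is not a blockchain and is not Mira's implementation: it is a single-machine log in which each entry stores the hash of the previous one, so any later change to an earlier record is detectable. The function names and record fields are hypothetical; a real blockchain adds replication and consensus on top of this idea.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> None:
    """Append a verification outcome, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": record_hash(record, prev_hash),
    })


def verify_log(log: list) -> bool:
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_record(log, {"claim_id": 0, "verdict": "supported"})
append_record(log, {"claim_id": 1, "verdict": "refuted"})
print(verify_log(log))
```

An auditor who holds only the latest hash can detect whether any earlier verdict was rewritten, because changing one record changes every hash after it.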

Incentives That Reward Accuracy

A system is only as strong as the incentives behind it.

Mira integrates economic mechanisms that reward validators for precise and responsible assessments. Participants who consistently provide accurate evaluations build reputation and earn rewards, aligning financial motivation with system integrity.

Instead of assuming accuracy as a default, the network makes it measurable and incentivized.
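One way to picture such an incentive scheme is a settlement step that runs after each verification round. The article gives no details of Mira's reward or reputation rules, so this Python sketch is purely illustrative: `settle_round`, the reward and penalty values, and the choice to use agreement with the final consensus as a proxy for accuracy are all assumptions.

```python
def settle_round(votes: dict, consensus: str, reputation: dict,
                 reward: float = 1.0, penalty: float = 0.5) -> dict:
    """Pay validators who matched the consensus and update reputation.

    Assumptions: agreeing with the consensus verdict earns `reward`
    and raises reputation by the same amount; disagreeing earns nothing
    and lowers reputation by `penalty`, never below zero.
    """
    payouts = {}
    for validator, verdict in votes.items():
        agreed = verdict == consensus
        current = reputation.get(validator, 0.0)
        if agreed:
            reputation[validator] = current + reward
        else:
            reputation[validator] = max(0.0, current - penalty)
        payouts[validator] = reward if agreed else 0.0
    return payouts


reputation = {"validator_a": 1.0}
votes = {"validator_a": "supported", "validator_b": "refuted"}
print(settle_round(votes, "supported", reputation))
print(reputation)
```

Because reputation accumulates across rounds, a validator's track record becomes a measurable quantity rather than an assumption, though rewarding agreement with the majority is only as good as the majority itself.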

Preparing for Autonomous AI Systems

Artificial intelligence is steadily moving toward greater autonomy. From algorithmic trading and automated logistics to clinical decision support and scientific discovery, AI systems are beginning to act with minimal human intervention.

As autonomy increases, the margin for unchecked error shrinks.

Verification can no longer be optional; it must become foundational infrastructure. Mira positions itself as the reliability bridge between powerful AI engines and real-world accountability. It aims to ensure that as machines grow more capable, their outputs remain grounded in verifiable truth.

From Possibility to Proof

The future of AI will not be defined solely by how intelligent systems become. It will be defined by how trustworthy they are.

By introducing decentralized validation, structured review processes, and transparent consensus mechanisms, $MIRA Network aims to move artificial intelligence beyond probability-driven responses toward something stronger: verified digital certainty.

In an age where data drives decisions and algorithms influence outcomes, trust is not a luxury. It is infrastructure.