I tried to measure trust as a product, and Mira changed the equation. At first the idea sounded strange even in my own head. We are used to thinking that AI is the product. Faster answers are the product. Better predictions are the product. A cleaner interface is the product. Trust is just a side effect. But when I looked deeper, I realized something uncomfortable. Intelligence without trust has limited value. It can impress you, but it cannot anchor serious decisions.

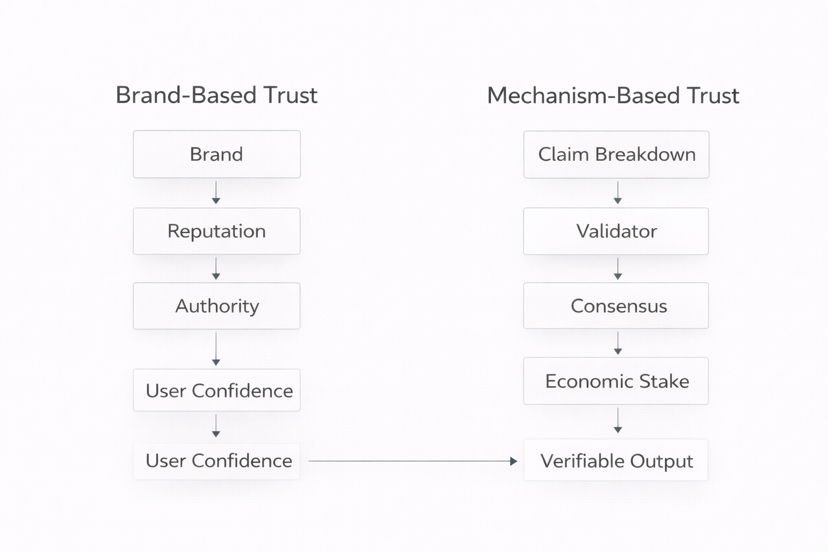

In the traditional world, trust normally comes from a brand. A famous company logo creates psychological comfort. Or it comes from a reputation built over many years. Or from a central authority that promises oversight and control. In each case, trust lives outside the system itself. You believe the output because you believe the institution behind it. Trust is social capital. It is slow to build and fragile under pressure.

Mira approaches it from a different direction. Here, trust is not a promise. It is a mechanism. Output is not just delivered. It is decomposed into claims. Each claim can be challenged. Validators review those claims with economic stake at risk. Consensus is formed before a proof record is finalized. The process itself becomes the source of credibility. Instead of trusting a logo, you trust a transparent validation path. That feels very different.
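To make that flow concrete, here is a minimal sketch of the idea in Python. It is not Mira's actual API or protocol. The names `Vote`, `claim_accepted`, and `finalize`, the stake-weighted voting rule, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    """One validator's verdict on one claim (hypothetical structure)."""
    validator: str
    stake: float      # economic stake the validator has at risk
    approves: bool


def claim_accepted(votes, threshold=2 / 3):
    """Return True if the approving share of total stake meets the threshold.

    Stake-weighted voting and the 2/3 default are illustrative assumptions.
    """
    total = sum(v.stake for v in votes)
    if total <= 0:
        return False  # no stake at risk means no basis for acceptance
    approving = sum(v.stake for v in votes if v.approves)
    return approving / total >= threshold


def finalize(claim_votes, threshold=2 / 3):
    """Build a proof record only if every claim reaches consensus.

    claim_votes maps each claim (a string) to its list of votes.
    Returns a dict describing the record, or None if any claim fails.
    """
    if not claim_votes:
        return None
    results = {c: claim_accepted(v, threshold) for c, v in claim_votes.items()}
    if not all(results.values()):
        return None
    return {"claims": sorted(results), "threshold": threshold}
```

In this sketch a single failed claim blocks the whole record. A real system could instead finalize claim by claim, which is a design choice the sketch does not try to settle.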

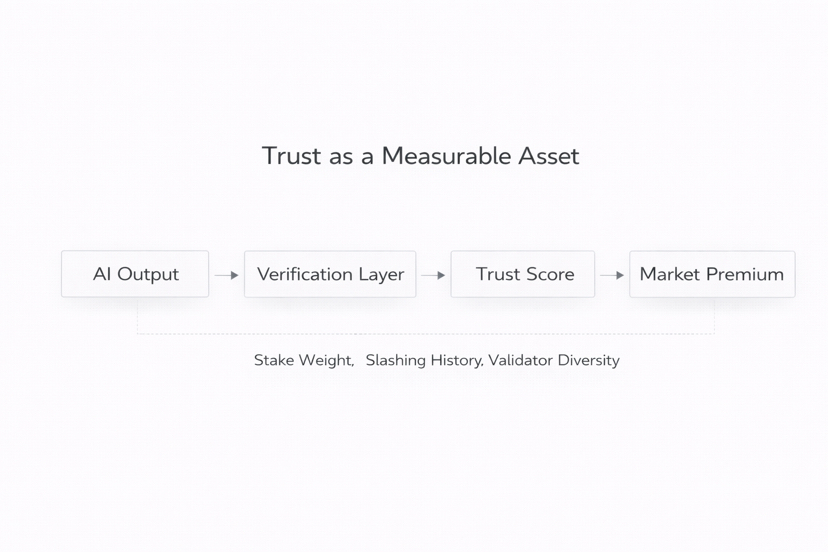

What surprised me most was this. In Mira, trust is measurable. You can count validators. You can measure stake. You can see consensus strength. You can analyze slashing events. Trust is no longer a vague feeling. It becomes a quantifiable property of the system. It has inputs. It has costs. It has visible enforcement.
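A rough sketch of what those measurements might look like for a single validation round is below. The function name `trust_summary`, the input shape, and the definition of consensus strength as the approving share of total stake are assumptions for illustration, not how Mira actually reports these figures.

```python
def trust_summary(votes, slashing_events):
    """Summarize one validation round as plain numbers.

    votes: list of (stake, approves) pairs, one per validator (hypothetical shape).
    slashing_events: count of validators penalized in this round.
    """
    total_stake = sum(stake for stake, _ in votes)
    approving_stake = sum(stake for stake, approves in votes if approves)
    return {
        "validator_count": len(votes),
        "total_stake": total_stake,
        # Assumed definition: share of staked value that backed the claim.
        "consensus_strength": approving_stake / total_stake if total_stake else 0.0,
        "slashing_events": slashing_events,
    }


summary = trust_summary([(60.0, True), (30.0, True), (10.0, False)], slashing_events=1)
print(summary)
```

Each field corresponds to one of the measurements listed above: the inputs (validators and stake), the outcome (consensus strength), and the enforcement (slashing).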

That raised a deeper question in my mind. If trust becomes measurable, can it become a standalone product? Today companies sell AI capability. They market model size. Speed. Benchmark scores. But what if, in the future, the real premium is not accuracy alone? What if companies begin to sell proof of AI? Structured validation. Economic backing. Transparent consensus history. In that world, AI intelligence becomes common infrastructure while verified intelligence becomes premium infrastructure.

Think about financial contracts. Legal automation. Insurance underwriting. Capital allocation decisions. These domains do not just require intelligence. They require defensibility. They require something that can be audited later. If a model makes a decision worth millions, no board will accept it without traceability. Proof becomes more valuable than polish. Accountability becomes more important than fluency.

If that shift happens, then we are no longer competing on who has the smartest model. We are competing on who has the strongest trust architecture. That is a different battlefield. It rewards economic design. It rewards distributed incentives. It rewards systems that can prove their own reliability under stress.

This leads to the final uncomfortable thought. If trust becomes measurable, could it become tradable? Imagine institutional buyers evaluating verification strength the way they evaluate credit ratings. Imagine pricing premiums for higher consensus thresholds. Imagine markets where stronger proof commands higher fees. Trust would not just be felt. It would be priced.

I started this exploration thinking AI was the hero. I ended it realizing trust might be the real product. If intelligence becomes abundant, then proof becomes scarce. And in markets, scarcity usually defines value. Maybe the future is not about selling smarter answers. Maybe it is about selling accountable answers. And if trust can be measured, then one day it might be traded like any other asset.