Mira Network starts from an idea most AI teams avoid saying out loud: the generator is the least trustworthy part of the system. Not because it’s “bad,” but because it’s doing what it was trained to do—produce plausible language fast. Mira’s bet is that the next wave of AI won’t be won by the model that talks the smoothest, but by the stack that can attach a receipt to what the model says.

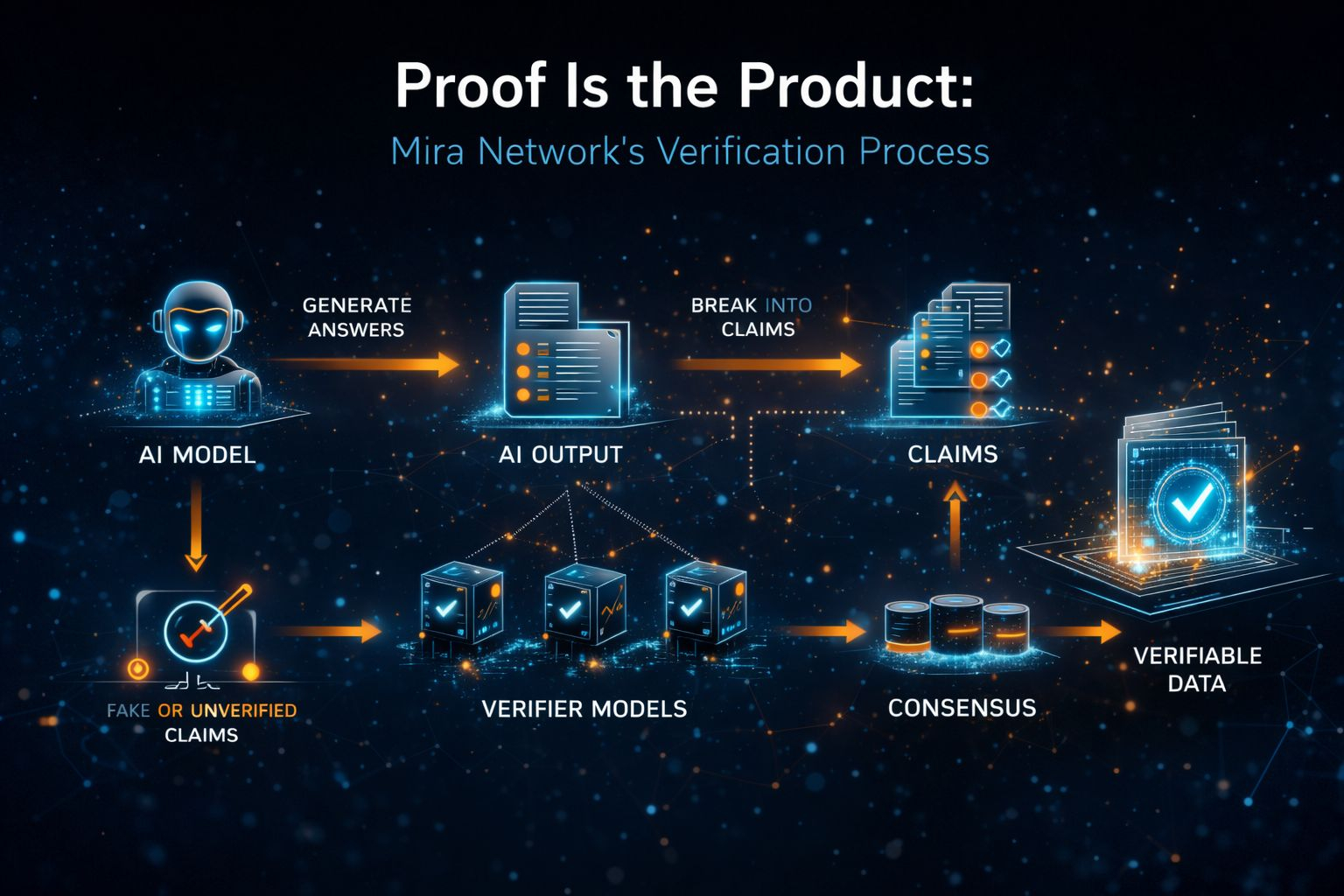

The way Mira frames it is blunt: turn output into claims, run those claims through independent verifiers, and produce a cryptographic artifact that records what was checked and what the network agreed on. That artifact isn’t “truth.” It’s something more practical: proof that a verification process happened, under specific rules, with an auditable trail. In a world where everyone is selling confidence, Mira is selling accountability.

If you’ve ever watched a model hallucinate in a setting that actually matters, you understand why this exists. The legal world found out the loud way when fake citations showed up in court filings, and the lesson wasn’t “AI is evil”; it was “people will trust a fluent paragraph the way they trust a professional.” The scariest part wasn’t the mistake. The scariest part was how normal it looked until someone bothered to verify it.

That’s the real shape of the risk. Models don’t fail like servers. They don’t crash and throw errors and wake you up at 3 a.m. They fail like a coworker who sounds competent while getting the details wrong. Quiet failure. Professional failure. The kind that makes you ship something you shouldn’t have shipped because it felt finished.

In normal software, we already learned how to live with untrusted components. We don’t “trust” a deployment because the engineer is confident; we trust it because there’s a pipeline, tests, change logs, rollbacks, and monitoring. If the change breaks production, there’s a paper trail and a path back. AI output today mostly skips that entire discipline. It goes straight from generation to decision, sometimes with a “sources” box stapled on as decoration.

Mira is basically trying to turn AI output into something that can pass through the same kind of operational rigor. The core move is boring in the way real infrastructure is boring: take a paragraph and split it into discrete claims that can be checked. Not “does this feel correct,” but “is this claim supported,” “does this conflict with policy,” “is this fact consistent with known references.” When that decomposition is clean, verification becomes mechanical. When it’s messy, verification becomes a debate, and debates don’t scale.
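To make the decomposition step concrete, here is a deliberately naive Python sketch. It assumes one declarative sentence equals one checkable claim, splits on sentence boundaries, drops questions (they assert nothing), and gives each claim a hypothetical id. It is not Mira’s decomposition method, which would need far more than a regex.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: str  # hypothetical id scheme: "c0", "c1", ...
    text: str      # one standalone statement a verifier can judge


def decompose(paragraph: str) -> list[Claim]:
    """Naively split a paragraph into sentence-level claims.

    Assumption: each declarative sentence is one checkable claim.
    Real decomposition also has to split compound sentences and resolve
    references like "it" or "this"; this sketch does neither.
    """
    # Split wherever ., !, or ? is followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    # Drop empty fragments and questions, which make no assertion.
    statements = [s for s in sentences if s and not s.endswith("?")]
    return [Claim(f"c{i}", s) for i, s in enumerate(statements)]


answer = (
    "The Eiffel Tower is in Paris. It was completed in 1889. "
    "Is it the tallest structure in France?"
)
for claim in decompose(answer):
    print(claim.claim_id, claim.text)
```

On the sample answer it yields two claims, and the second one, “It was completed in 1889.”, shows exactly where naive splitting breaks: handed to a verifier on its own, “it” refers to nothing.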

That claim-formatting step is where most “trust” projects die, because language is slippery. People compress ideas, imply things, hedge, blend facts with framing. If you break an answer into claims poorly, verifiers will disagree not because reality is unclear, but because the question you asked them was unclear. The difference between a verification layer and a chaos generator is often just the quality of that transformation.

The part that feels most honest in Mira’s design is that it assumes adversaries. If you create rewards for verification, you will attract people who optimize for rewards. Not “might.” Will. That’s why Mira leans on incentive design that makes lazy guessing expensive and collusion harder: staking mechanics, repeated checking, and flagging suspicious patterns such as participants converging too neatly. It’s not a romantic “wisdom of the crowd” story. It’s a “we expect gaming, so we engineer against it” story.
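The incentive argument is really an expected-value calculation. The sketch below assumes a hypothetical stake-and-slash rule, not Mira’s published parameters: a verifier earns `reward` when its vote matches the final consensus and loses `slash` from its stake when it doesn’t. A verifier guessing on a yes/no claim matches consensus about half the time, so its expected payoff per claim, `p * reward - (1 - p) * slash`, goes negative once the slash outweighs the reward.

```python
def expected_payoff(p_match: float, reward: float, slash: float) -> float:
    """Expected per-claim payoff under a hypothetical stake-and-slash rule:
    earn `reward` when the vote matches consensus, lose `slash` otherwise."""
    return p_match * reward - (1 - p_match) * slash


REWARD, SLASH = 1.0, 3.0  # illustrative values only

# A verifier that actually checks (assumed to match consensus 95% of the time).
honest = expected_payoff(0.95, REWARD, SLASH)
# A verifier guessing on a yes/no claim matches consensus about half the time.
guesser = expected_payoff(0.50, REWARD, SLASH)

print(f"honest:  {honest:+.2f}")
print(f"guesser: {guesser:+.2f}")
```

With these numbers, careful checking nets about +0.80 per claim and guessing loses 1.00. The sketch ignores the compute cost of actually checking, which is what tempts verifiers to guess in the first place, so a real design has to keep the gap wide enough to cover that cost.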

There’s also a reason Mira talks about decentralization without making it a religion. If one company controls verification, then verification becomes just another vendor promise. Vendor promises don’t survive pressure. The day verification becomes economically inconvenient—too slow, too costly, too politically awkward—someone will quietly lower thresholds, hide uncertainty, or “simplify” the process until it’s basically a rubber stamp. A network of independent verifiers isn’t magic, but it makes capture harder. It distributes the power to decide what gets labeled as verified.

But it’s worth being precise here: consensus is not truth. A network can agree and still be wrong. A network can reflect cultural bias. A network can be skewed by whoever shows up. Mira’s own framing gets close to the uncomfortable point—some things are contextual, some “truths” are perspective-bound, and verification has to deal with that rather than pretending everything is a multiple-choice quiz. The best verification systems won’t just output “verified” or “not verified.” They’ll output uncertainty in a way humans and systems can act on.
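One way to output uncertainty instead of a binary label is to require a supermajority and report “uncertain” when neither side reaches it. The sketch below assumes each verifier casts a `True` (supported) or `False` (not supported) vote on a single claim and uses a hypothetical two-thirds threshold. It also returns the winning side’s share so downstream code can see how close the call was.

```python
from collections import Counter
from enum import Enum


class Verdict(Enum):
    VERIFIED = "verified"
    REJECTED = "rejected"
    UNCERTAIN = "uncertain"  # neither side reached the threshold


def aggregate(votes: list[bool], threshold: float = 2 / 3) -> tuple[Verdict, float]:
    """Combine independent verifier votes on one claim.

    Returns the verdict plus the larger side's share of votes, so callers
    can tell a narrow 5-2 pass from a unanimous 7-0 pass.
    """
    if not votes:
        return Verdict.UNCERTAIN, 0.0
    counts = Counter(votes)
    support = counts[True] / len(votes)
    reject = counts[False] / len(votes)
    if support >= threshold:
        return Verdict.VERIFIED, support
    if reject >= threshold:
        return Verdict.REJECTED, reject
    return Verdict.UNCERTAIN, max(support, reject)


print(aggregate([True] * 7))                # 7-0 split
print(aggregate([True] * 5 + [False] * 2))  # 5-2 split
print(aggregate([True] * 4 + [False] * 3))  # 4-3 split
```

Note what this measures: agreement, not correctness. The threshold is also a policy dial rather than a constant, and raising it makes the system say “uncertain” more often, which is exactly the behavior that gets quietly traded away when verification becomes inconvenient.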

That’s where the “proof artifact” becomes the important product, not a vanity badge. A badge tells you “trust me.” An artifact tells you “here’s what happened.” It turns questions from philosophical to operational. Who verified this? Under what threshold? How many verifiers agreed? What did they disagree on? Can I audit it later? If a regulator asks why your system made a decision, “the model was confident” is not an answer. A verifiable trail is.
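Here is a rough sketch of what such an artifact could record, with the audit questions above turned into fields. The field names and layout are hypothetical, not Mira’s format. Hashing a canonical JSON encoding with SHA-256 gives a fingerprint that changes if any field is edited after the fact; a real system would also have verifiers sign their votes and anchor the hash somewhere tamper-resistant, which this sketch omits.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class VerificationRecord:
    # Hypothetical fields mirroring the audit questions.
    claim_text: str
    verifier_ids: tuple[str, ...]  # who verified this
    votes: tuple[bool, ...]        # one vote per verifier, same order as verifier_ids
    threshold: float               # agreement required to count as verified
    verdict: str                   # "verified", "rejected", or "uncertain"

    def digest(self) -> str:
        """SHA-256 over a canonical (sorted-key, compact) JSON encoding."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def dissenters(self) -> list[str]:
        """Verifiers whose vote went against the recorded verdict."""
        pairs = zip(self.verifier_ids, self.votes)
        if self.verdict == "verified":
            return [v for v, vote in pairs if not vote]
        if self.verdict == "rejected":
            return [v for v, vote in pairs if vote]
        return []  # an "uncertain" verdict has no side to dissent from


record = VerificationRecord(
    claim_text="The Eiffel Tower is in Paris.",
    verifier_ids=("v1", "v2", "v3"),
    votes=(True, True, False),
    threshold=2 / 3,
    verdict="verified",
)
print(record.digest())
print(record.dissenters())

# Quietly lowering the threshold after the fact changes the fingerprint.
tampered = VerificationRecord(**{**asdict(record), "threshold": 0.5})
print(tampered.digest() == record.digest())
```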

You can feel why this matters most when you stop thinking about chatbots and start thinking about agents. A chatbot that hallucinates is annoying. An agent that can act on your behalf is a liability if it’s unverified. If an agent can approve refunds, submit paperwork, trigger a trade, change infrastructure, or move funds, then a single hallucinated claim can become a real-world action that’s difficult to unwind. At that point, verification is no longer about “accuracy.” It’s about permissioning. What is the minimum proof required before this system is allowed to execute?

That’s the future Mira is implicitly building toward: verified output as a gate for action. Not every action needs the same threshold. Some things can be “draft,” some things can be “human review,” some things can be “automatable.” But you need a ladder of trust that isn’t based on how persuasive the language sounds. You need a ladder based on evidence, agreement, and auditable process.
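The ladder can be sketched as a policy table that maps each action’s risk level to the minimum evidence it needs before it runs unattended. The three tier names come from the paragraph above; the risk levels, agreement cutoffs, and verifier counts are hypothetical placeholders that a real deployment would have to tune.

```python
from enum import Enum


class Tier(Enum):
    DRAFT = "draft"                # shown to a person, never executed
    HUMAN_REVIEW = "human review"  # executed only after a person signs off
    AUTOMATABLE = "automatable"    # allowed to execute on its own


# Hypothetical policy: minimum (agreement share, verifier count) per risk level
# for an action to run without a human. Higher stakes demand more evidence.
AUTO_POLICY = {
    "low": (0.67, 3),     # e.g. tagging a support ticket
    "medium": (0.80, 5),  # e.g. approving a small refund
    "high": (0.95, 9),    # e.g. moving funds
}


def gate(risk: str, agreement: float, n_verifiers: int) -> Tier:
    """Decide how far an action may proceed given its verification evidence.

    `agreement` is the share of verifiers that supported the underlying claim.
    """
    min_agreement, min_verifiers = AUTO_POLICY[risk]
    if agreement >= min_agreement and n_verifiers >= min_verifiers:
        return Tier.AUTOMATABLE
    # A majority supported it, but not strongly enough for this risk level.
    if agreement > 0.5:
        return Tier.HUMAN_REVIEW
    return Tier.DRAFT


for risk in ("low", "medium", "high"):
    print(risk, gate(risk, agreement=0.85, n_verifiers=5).value)
print("high", gate("high", agreement=0.40, n_verifiers=9).value)
```

The numbers matter less than the shape: identical evidence can be enough to tag a ticket and not enough to move funds, and nothing in the decision depends on how fluent the model’s output sounded.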

There’s a version of this future that’s ugly, though, and it’s important to say it plainly. A verification layer can become a lie machine with extra steps if it’s engineered to always return “verified” because the business wants throughput. If Mira—or anyone—turns verification into a feel-good label instead of a principled system that sometimes says “we don’t know,” then it’s not infrastructure. It’s marketing with cryptography.

So the real test for Mira won’t be how elegant the concept sounds. It’ll be measurable and, honestly, a little unsexy. How often does it refuse to verify? How does it represent uncertainty? What does disagreement look like? How does it detect coordinated manipulation? How expensive is verification per claim, in practice, at scale? What happens when verifiers drift or degrade? How does it handle edge cases where claims are entangled and can’t be cleanly separated without losing meaning?

If Mira gets those hard parts right, the impact isn’t “AI becomes safe.” That’s not how the world works. The impact is that AI becomes governable. It becomes something you can integrate into high-stakes workflows without betting the company on a model’s tone of voice. It becomes less like a magician and more like a component you can measure, constrain, and audit.

And that’s the shift I think we’re heading toward whether people like it or not. The last couple of years trained everyone to worship generation. The next few years will punish anyone who can’t prove what they’re generating. The winners won’t be the teams that can produce the most output. They’ll be the teams that can answer the one question that actually matters once AI starts touching money, law, health, and infrastructure: how do you know?

Mira is one attempt to make “how do you know” something a system can answer, not a human’s gut feeling. If that idea lands, the hype cycle doesn’t disappear—it just gets forced to grow up. Proof stops being a luxury. It becomes the price of admission.

@Mira - Trust Layer of AI $MIRA #Mira