When people discuss the future of AI, they tend to focus on how intelligent models are becoming. But intelligence is no longer the biggest issue. The bigger issue now is trust. Can we trust what AI systems tell us? Can they be used safely in high-stakes settings? This is where the idea behind Mira becomes interesting.

The core idea behind Mira Network is straightforward: do not trust AI results blindly, check them. Instead of accepting a response at face value, the network breaks it down into smaller claims and has independent validators check each one. These validators may be different AI models, and a claim is considered verified only when they reach a set level of consensus. The results are then recorded in a transparent, cryptographic manner, which adds another layer of trust.
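To make the flow concrete, here is a minimal Python sketch of claim-level verification. It is not Mira's actual implementation: the sentence-based claim splitting, the `Validator` type, the two-thirds threshold, and the SHA-256 digest are all assumptions chosen for illustration.

```python
import hashlib
import json
from typing import Callable, List

# Hypothetical validator type: takes a claim, returns True if it accepts it.
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    # Naive stand-in for claim decomposition: one sentence = one claim.
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator],
                 threshold: float = 2 / 3) -> dict:
    # Each validator votes independently on the same claim.
    votes = [v(claim) for v in validators]
    agreement = sum(votes) / len(votes)
    record = {"claim": claim, "votes": votes,
              "verified": agreement >= threshold}
    # Hash the record so that later tampering would be detectable.
    # (Assumed scheme; the source only says results are recorded
    # cryptographically.)
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_response(response: str, validators: List[Validator],
                    threshold: float = 2 / 3) -> List[dict]:
    return [verify_claim(c, validators, threshold)
            for c in split_into_claims(response)]


# Toy validators: two check against a small fact set, one approves everything.
FACTS = {"Paris is the capital of France"}
validators = [
    lambda c: c in FACTS,
    lambda c: c in FACTS,
    lambda c: True,  # a careless validator
]

for r in verify_response(
        "Paris is the capital of France. The moon is made of cheese.",
        validators):
    print(r["claim"], "->", "verified" if r["verified"] else "rejected")
```

In this toy run the careless validator approves the false claim, but it is outvoted, so the claim is rejected. That is the point of requiring consensus across independent validators.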

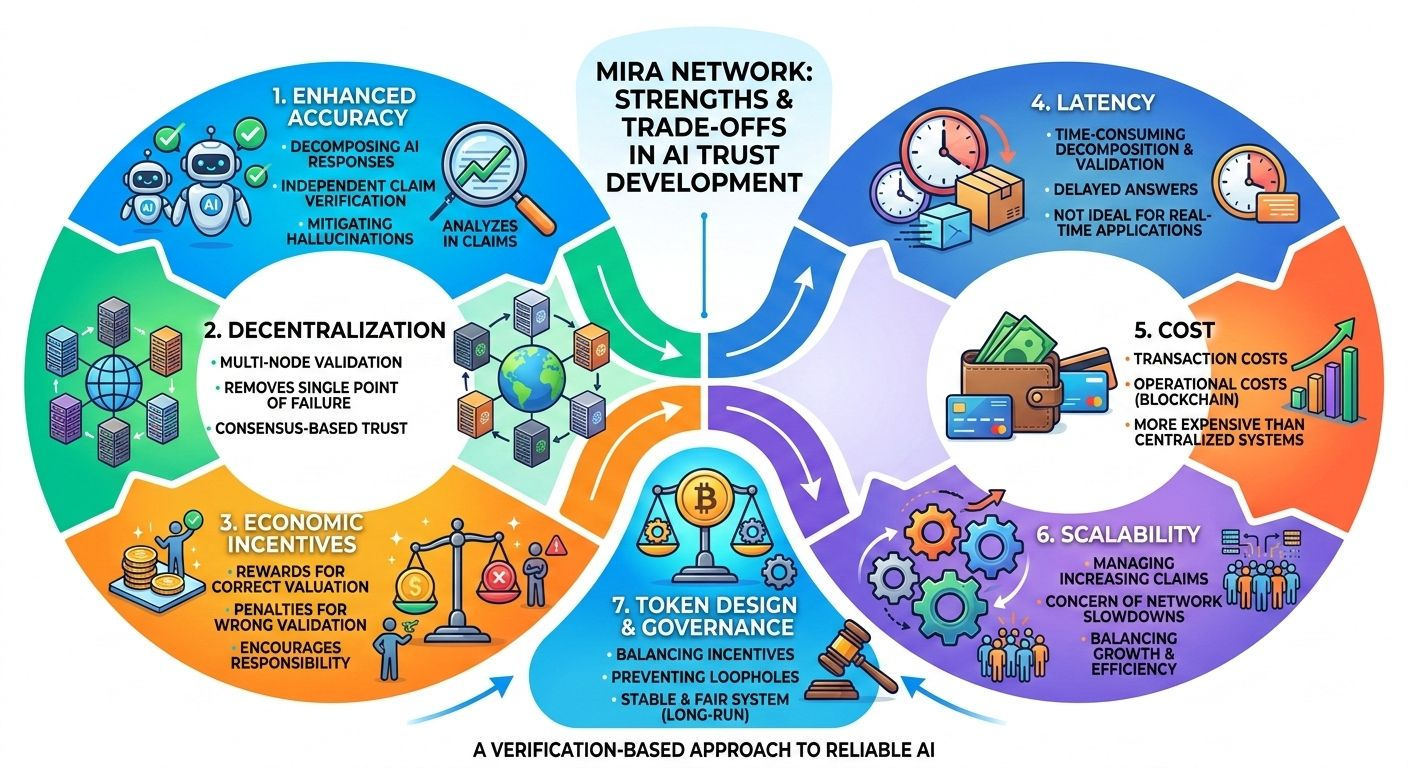

One of the greatest benefits of this system is improved accuracy. AI models are prone to hallucinations: answers that sound correct but are not factual. By breaking outputs down into smaller statements and verifying each one individually, Mira reduces the risk of false information slipping through undetected. It is a pragmatic way of turning AI responses into something more reliable.

The other significant advantage is decentralization. In most AI systems today, trust rests on a single company, a single model, or a single authority. That creates a single point of failure. If that system is wrong, biased, or manipulated, there is no mechanism to challenge it. Mira's design spreads validation across many independent nodes. That makes it harder for errors or manipulation to win out. Trust is shared rather than concentrated.

Economic incentives are another major strength. Validators are rewarded for fair and correct evaluation and can be penalized for incorrect validation. This produces a system in which participants are encouraged to act responsibly. When real value is at stake, verification becomes serious rather than symbolic. This matters most in critical use cases such as finance, healthcare, or autonomous AI agents, where reliability is not optional.
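The reward-and-penalty idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Mira's token mechanics: the function name `settle_round`, the flat reward, the 10% slash, and the use of the majority vote as a stand-in for the "correct" answer are all hypothetical.

```python
from typing import Dict


def settle_round(stakes: Dict[str, float], votes: Dict[str, bool],
                 reward: float = 1.0,
                 slash_fraction: float = 0.1) -> Dict[str, float]:
    # Assumption: the strict-majority vote is treated as the correct outcome.
    # A tie counts as "not verified" (False).
    yes_votes = sum(votes.values())
    outcome = yes_votes * 2 > len(votes)

    settled = {}
    for name, stake in stakes.items():
        if votes[name] == outcome:
            # Agreed with the consensus: earn a flat reward.
            settled[name] = stake + reward
        else:
            # Disagreed with the consensus: lose a fraction of the stake.
            settled[name] = stake * (1 - slash_fraction)
    return settled


stakes = {"alice": 100.0, "bob": 100.0, "carol": 100.0}
votes = {"alice": True, "bob": True, "carol": False}
print(settle_round(stakes, votes))
```

Because a wrong vote costs more than a right vote earns in this sketch, a validator that guesses carelessly loses stake over time. That is the kind of pressure toward responsible behavior described above.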

However, no system is perfect, and Mira Network comes with trade-offs. Latency is one obvious drawback. Decomposing content into claims and running them through multiple validators will inherently take more time than producing a quick answer with one model. This additional delay may be a problem in applications where speed matters more than precision.

Cost is another factor. Because the network runs on blockchain infrastructure, it carries transaction and operational costs. Running decentralized validation nodes also consumes resources. This setup may cost more than conventional AI systems that run on a company's internal servers.

Scalability is also a concern. As demand grows, the network has to handle more claims, more validators, and more consensus rounds. If it cannot keep up, heavy use could slow the system down or make it even more expensive. Decentralized systems always face the challenge of managing growth without sacrificing efficiency.

Much also depends on token design and governance. Because the network relies on economic incentives, the balance has to be carefully maintained. If the incentives are poorly designed, participants may try to exploit loopholes. Governance decisions will play a significant role in keeping the system stable and fair in the long run.

Despite these limitations, Mira Network represents a significant change in how we think about AI reliability. It does not promise perfection. Rather, it focuses on verifying AI outputs and holding them accountable. That difference matters. As AI takes a larger part in real decision making, verification will be as important as capability.

Ultimately, Mira's strength is that it is honest about the shortcomings of AI. It accepts that models can be wrong and, rather than ignoring this, builds a system to deal with it. The trade-offs are real, and so is the improvement. If the goal is to turn impressive AI into reliable AI, networks like Mira can help get there.
