I’ll be honest. When I first heard about Mira Network, I didn’t react with excitement. AI and blockchain together is not a new idea. Most of the time, it sounds impressive on the surface but falls apart when you look at the mechanics. So instead of focusing on the narrative, I tried to understand the problem it is solving.

The real issue is not that AI exists. The issue is that AI sounds confident even when it is wrong. If you use AI casually, small mistakes don’t matter. But if AI is used in trading systems, research automation, compliance checks, or autonomous decisions, a wrong answer becomes expensive. And the scary part is that the model doesn’t know it is wrong.

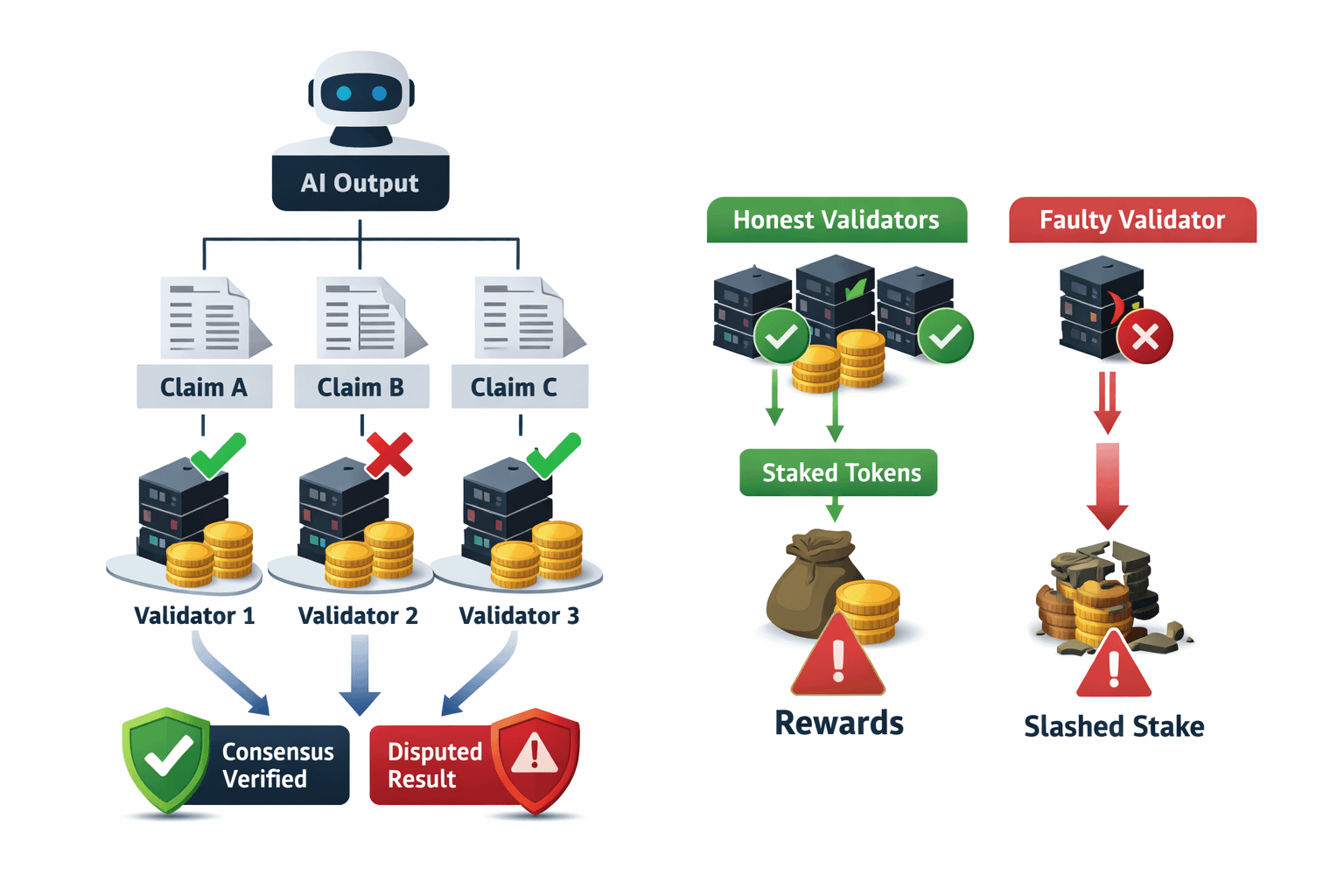

Mira approaches this from a practical angle. It does not try to “fix” the models themselves. Instead, it wraps a verification layer around their outputs. When an AI produces an answer, that answer is broken into smaller claims, and those claims are distributed across independent participants in the network for validation.
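To make that flow concrete, here is a minimal Python sketch of the two steps. Everything in it is hypothetical and is not Mira’s actual implementation. `split_into_claims` naively treats each sentence as one claim (a real system would likely need a model to extract atomic, checkable claims), and `assign_validators` uses rendezvous-style hashing so each claim lands on `k` different validators.

```python
import hashlib
import re


def split_into_claims(answer: str) -> list[str]:
    """Hypothetical decomposition: treat each sentence as one claim.
    A production system would likely use a model to extract atomic claims."""
    sentences = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [s for s in sentences if s]


def assign_validators(claim: str, validators: list[str], k: int) -> list[str]:
    """Hypothetical assignment: rank validators by a hash of (claim, validator)
    and take the first k, so the choice is deterministic but varies per claim."""
    if k > len(validators):
        raise ValueError("need at least k validators")

    def rank(validator: str) -> str:
        return hashlib.sha256(f"{claim}|{validator}".encode()).hexdigest()

    return sorted(validators, key=rank)[:k]


if __name__ == "__main__":
    answer = "Paris is the capital of France. The Seine flows through Paris."
    validators = ["v1", "v2", "v3", "v4", "v5"]
    for claim in split_into_claims(answer):
        print(claim, "->", assign_validators(claim, validators, k=3))
```

Hashing on the claim text means no single validator sees every claim, which is the property that makes the checks independent in the first place.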

That detail matters.

Instead of trusting one model, Mira turns validation into a distributed process. Multiple validators check each claim and stake tokens behind their answers. If they validate honestly and their answers match the consensus, they earn rewards. If they validate incorrectly, they risk losing part of their stake.
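A stripped-down version of that settlement logic might look like the sketch below. The stake-weighted majority rule, the `reward_rate` and `slash_rate` values, and the tie-breaking behavior are all assumptions for illustration, not Mira’s published parameters. Note that the sketch rewards agreement with the consensus, which only equals correctness as long as most of the stake is honest.

```python
from dataclasses import dataclass


@dataclass
class Vote:
    validator: str
    stake: float
    verdict: bool  # True means "this claim is valid"


def settle(votes: list[Vote], reward_rate: float = 0.01,
           slash_rate: float = 0.10) -> tuple[bool, dict[str, float]]:
    """Stake-weighted majority decides the verdict. Validators who agree earn
    reward_rate * stake; those who disagree lose slash_rate * stake.
    Both rates are hypothetical."""
    yes = sum(v.stake for v in votes if v.verdict)
    no = sum(v.stake for v in votes if not v.verdict)
    consensus = yes > no  # in this sketch a tie resolves to "invalid"
    payouts: dict[str, float] = {}
    for v in votes:
        if v.verdict == consensus:
            payouts[v.validator] = v.stake * reward_rate
        else:
            payouts[v.validator] = -v.stake * slash_rate
    return consensus, payouts


votes = [Vote("a", 100, True), Vote("b", 50, True), Vote("c", 80, False)]
print(settle(votes))
```

The asymmetry between a small reward and a larger slash is deliberate in this sketch: it makes being wrong cost more than being right pays.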

This changes the nature of trust. It is no longer about believing a single model. It becomes an economic game where correctness has financial consequences.

But I don’t look at this from a philosophical angle. I look at the operational pressure points.

For example, what happens when validators disagree? The network may require retries or additional verification rounds. That adds time and cost. Under light demand, this may not feel like a problem. Under heavy demand, latency increases. Fees increase. Execution friction becomes real.
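Here is a rough model of how disagreement turns into cost and latency. The escalation rule (double the committee on every retry), the 2/3 supermajority, the per-vote fee, and the per-round latency are hypothetical numbers chosen only to show the shape of the problem.

```python
from fractions import Fraction
from typing import Callable, Optional


def verify_with_retries(
    get_votes: Callable[[int], list[bool]],
    validators_per_round: int = 4,
    threshold: Fraction = Fraction(2, 3),
    max_rounds: int = 3,
    fee_per_vote: float = 1.0,
    seconds_per_round: float = 2.0,
) -> tuple[Optional[bool], int, float, float]:
    """Repeat verification until one side reaches `threshold` of the votes.

    Hypothetical escalation rule: every retry doubles the validator count.
    Returns (verdict or None if still unresolved, rounds used,
    total fees paid, total latency in seconds).
    """
    n = validators_per_round
    fees = 0.0
    for round_no in range(1, max_rounds + 1):
        votes = get_votes(n)  # n boolean verdicts for the same claim
        fees += n * fee_per_vote
        yes = Fraction(sum(votes), len(votes))  # exact share, no float rounding
        if yes >= threshold:
            return True, round_no, fees, round_no * seconds_per_round
        if 1 - yes >= threshold:
            return False, round_no, fees, round_no * seconds_per_round
        n *= 2  # disagreement: escalate to a larger committee
    return None, max_rounds, fees, max_rounds * seconds_per_round


# Clean agreement settles in one round; a persistent 50/50 split burns every round.
print(verify_with_retries(lambda n: [True] * n))
print(verify_with_retries(lambda n: [True] * (n // 2) + [False] * (n - n // 2)))
```

In this toy setup a contested claim costs seven times the fees and three times the latency of a clean one, which is why demand spikes combined with frequent disagreement hurt.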

If verification becomes too expensive, users may avoid using it. If rewards are too low, validators may not find it profitable to stay honest. So the balance between fees, staking rewards, and slashing risk becomes critical.
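One way to see that balance is to compare a validator who does the work with one who just guesses. In the sketch below, the accuracy figures, the coin-flip guesser, and the reward, slash, and cost numbers are all hypothetical. Lazier strategies, such as copying other validators, are not modeled.

```python
def expected_profit(p_match: float, reward: float, slash: float, cost: float) -> float:
    """Expected profit per task: earn `reward` when the verdict matches consensus,
    lose `slash` when it does not, and always pay `cost` (e.g. inference compute)."""
    return p_match * reward - (1 - p_match) * slash - cost


def honesty_is_rational(
    reward: float,
    slash: float,
    compute_cost: float,
    p_honest: float = 0.95,  # hypothetical accuracy of a validator that really checks
    p_guess: float = 0.5,    # a coin flip on a true/false claim, at zero compute cost
) -> bool:
    """Honest work must be profitable on its own AND beat lazy guessing."""
    honest = expected_profit(p_honest, reward, slash, compute_cost)
    guessing = expected_profit(p_guess, reward, slash, 0.0)
    return honest > 0 and honest > guessing


# Healthy setup, rewards too low, and no slashing at all.
for reward, slash, cost in [(1.0, 2.0, 0.5), (0.2, 2.0, 0.5), (1.0, 0.0, 0.6)]:
    print(reward, slash, cost, honesty_is_rational(reward, slash, cost))
```

The three cases fail in different ways. When rewards are too low, honest validators lose money and leave. When there is no slashing, guessing simply pays better than doing the work.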

People often say the system is open and permissionless. In theory, yes. In practice, there are natural barriers. You need capital to stake. You need reliable infrastructure. As the network grows, validators with larger bonded positions and better uptime will tend to win a larger share of tasks.

Over time, that can lead to concentration. Not because the protocol blocks anyone, but because economics favors scale. That is something I always watch. True decentralization is not a slogan. It is measured by stake distribution and validator diversity.
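Two standard ways to put a number on stake distribution are the Nakamoto coefficient (how few validators it takes to control a given share of stake) and the Herfindahl-Hirschman index (sum of squared shares). The sketch below uses a 50% threshold as an assumption; the right threshold depends on the consensus rule, and the stake figures are made up.

```python
def nakamoto_coefficient(stakes: list[float], threshold: float = 0.5) -> int:
    """Smallest number of validators whose combined stake exceeds `threshold`
    of the total. Lower means more concentrated."""
    total = sum(stakes)
    running = 0.0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)  # only reached if threshold >= 1


def herfindahl_index(stakes: list[float]) -> float:
    """Sum of squared stake shares: 1/n for an even split, 1.0 for a single validator."""
    total = sum(stakes)
    return sum((s / total) ** 2 for s in stakes)


even = [10.0] * 10
concentrated = [400.0, 300.0, 100.0, 100.0, 100.0]
for name, stakes in [("even", even), ("concentrated", concentrated)]:
    print(name, nakamoto_coefficient(stakes), round(herfindahl_index(stakes), 3))
```

Tracking both over time says more than a raw validator count: a network can add validators while the top two still hold most of the stake.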

From a trading perspective, the token is not just something to speculate on. It functions as bonded collateral. When staking demand rises, more tokens get locked. That can reduce circulating supply. But if rewards shrink or risks increase, tokens can unlock and hit the market. That creates cycles.

While participating in CreatorPad, I noticed how incentives can temporarily shift this balance. Campaign rewards can boost engagement and staking in the short term. But long-term sustainability depends on real usage. If verification demand is strong and fees are meaningful, the system holds. If it depends too heavily on incentives, pressure builds once rewards decrease.

Another important trade-off is speed versus certainty. Multi-layer verification increases reliability. But it also adds delay. In some use cases, that delay is acceptable. In others, especially real-time systems, it becomes a constraint. Mira has to position itself carefully between being fast enough and secure enough.
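The reliability side of that trade-off can be estimated with a simple binomial model (the Condorcet jury theorem). The sketch assumes each validator is independently right with probability `p`. That is a strong assumption: validators running similar models tend to make correlated mistakes, which erodes the gain. Latency is not modeled here, but every extra validator is one more response to wait for.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """Probability that a strict majority of n independent validators is right,
    when each is right with probability p. Ties (even n) count as failures."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for n in (1, 3, 5, 7):
    print(n, round(majority_correct(n, 0.8), 4))
```

With `p = 0.8`, going from one to three validators adds almost ten points of reliability, while going from five to seven adds under three. Returns diminish while the latency cost keeps growing.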

Liquidity is another layer people ignore. If fees are paid in the native token and liquidity is thin, volatility increases friction. Validators and users both feel that. Smooth liquidity routing and stable fee design are not small details. They shape adoption.

What I appreciate about Mira is that it accepts a simple truth: AI will always be probabilistic. Instead of pretending to eliminate uncertainty, it prices uncertainty and distributes the cost of resolving it.

As someone who studies market structure and protocol mechanics, I do not base my view on promises. I watch staking ratios, validator growth, fee stability, and real transaction flow. If those metrics remain strong under stress, that is when confidence becomes justified.

In my view, Mira Network is not trying to make AI perfect. It is trying to make AI accountable. And accountability only works if incentives, penalties, and infrastructure hold up when demand increases. That is the real test.

@Mira - Trust Layer of AI #Mira #mira $MIRA