I have learned to watch incentives before I listen to narratives. Networks rarely fail because of weak branding; they fail because participants quietly adjust behavior when the reward logic no longer justifies the risk. In AI infrastructure especially, output can look impressive while accountability remains structurally thin. That gap becomes visible only when incentives are tested.

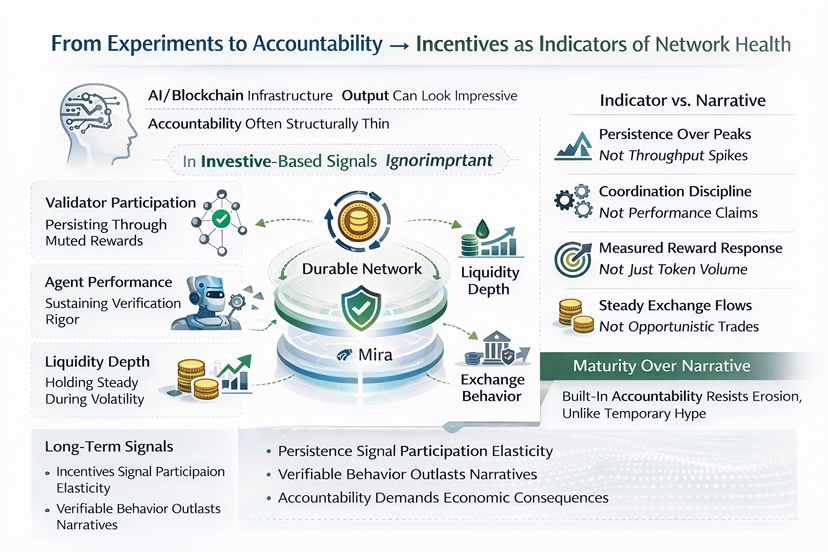

In my experience, incentive design reveals more about network quality than roadmap announcements ever will. If validators remain active through periods of muted rewards, if agents continue to perform under tighter verification standards, and if liquidity does not immediately flee when volatility rises, that suggests durability. Participation elasticity is the real signal. Not throughput. Not model benchmarks.
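To make "participation elasticity" concrete, here is a minimal sketch of how I would measure it: the percent change in active validators divided by the percent change in rewards over the same window. The function name, its parameters, and the numbers are all hypothetical illustrations, not data or a published metric from any network.

```python
def participation_elasticity(validators_before, validators_after,
                             reward_before, reward_after):
    """Percent change in active validators per percent change in rewards.

    Assumed definition for illustration only. A value near 0 means
    participation barely reacts to reward changes (durable); a value
    near or above 1 means participants leave about as fast as rewards fall.
    """
    if validators_before <= 0 or reward_before <= 0:
        raise ValueError("baseline values must be positive")
    pct_validators = (validators_after - validators_before) / validators_before
    pct_reward = (reward_after - reward_before) / reward_before
    if pct_reward == 0:
        raise ValueError("reward did not change; elasticity is undefined")
    return pct_validators / pct_reward


# Hypothetical window: rewards drop 40% while active validators drop 5%.
elasticity = participation_elasticity(1000, 950, 10.0, 6.0)
print(f"participation elasticity: {elasticity:.3f}")
```

With these made-up inputs the ratio comes out at roughly 0.125, meaning a large reward cut produced only a small drop in participation. A single reading proves little; the signal is whether the ratio stays low across several reward adjustments.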

With #Mira, what I observe is less about performance claims and more about coordination discipline. Validator participation appears tied to measurable verification standards rather than discretionary trust. Reward adjustments seem responsive to latency and accuracy thresholds, not just volume. Liquidity patterns show steadier depth relative to issuance, and exchange flows do not dominate token movement during governance shifts. Retention timing matters here. Nodes that remain through recalibration phases signal structural commitment rather than opportunistic yield farming.
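As a rough illustration of what "responsive to latency and accuracy thresholds, not just volume" could look like, consider the sketch below. It is purely hypothetical: the `EpochStats` fields, the `epoch_reward` function, and every threshold value are my own assumptions, not a description of how #Mira actually computes rewards.

```python
from dataclasses import dataclass


@dataclass
class EpochStats:
    verified_tasks: int        # volume of verification work in the epoch
    accuracy: float            # fraction of results judged correct, 0.0 to 1.0
    median_latency_ms: float   # median response time in milliseconds


def epoch_reward(stats, base_rate=1.0, min_accuracy=0.95, max_latency_ms=500.0):
    """Hypothetical reward rule: volume only pays if quality gates are met."""
    # Below the accuracy threshold, volume earns nothing.
    if stats.accuracy < min_accuracy:
        return 0.0
    reward = base_rate * stats.verified_tasks
    # Above the latency threshold, scale the reward down proportionally.
    if stats.median_latency_ms > max_latency_ms:
        reward *= max_latency_ms / stats.median_latency_ms
    return reward


fast_and_accurate = EpochStats(verified_tasks=100, accuracy=0.99, median_latency_ms=250.0)
high_volume_sloppy = EpochStats(verified_tasks=1000, accuracy=0.90, median_latency_ms=100.0)
print(epoch_reward(fast_and_accurate), epoch_reward(high_volume_sloppy))
```

Under a rule shaped like this, the node with ten times the volume but sub-threshold accuracy earns nothing, which is the behavioral point: throughput alone cannot buy rewards.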

From a long-term capital perspective, this is what separates experimental AI agents from trustable machines. Accountability requires economic consequences. If underperformance triggers predictable correction mechanisms, and if rewards align with verifiable contribution, the token functions as a coordination constraint, not a speculative instrument. The question is not whether output improves. It is whether behavior stabilizes under stress.

I do not see this as a feature set. I see it as infrastructure attempting to encode responsibility. That does not eliminate risk. Incentive systems can still be gamed, and governance can drift. But durability begins where participation persists without constant narrative reinforcement.

In the end, mature systems are not defined by how loudly they promise intelligence, but by how consistently they enforce discipline. The distinction between AI agents and trustable machines may simply be this: are incentives shaping behavior in ways that endure when attention fades?