I don’t believe Mira’s real competition is OpenAI, Anthropic, or any model provider.

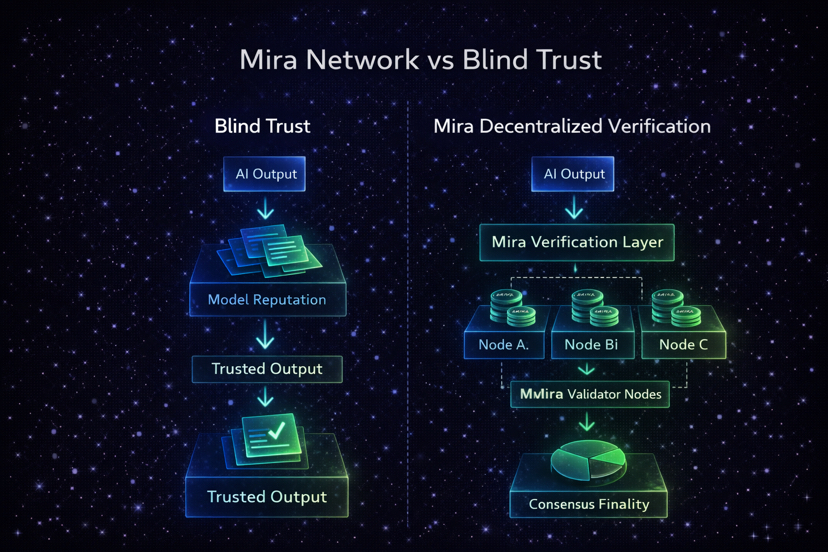

My view is that its competition is blind trust.

The uncomfortable reality is that most AI systems today operate on reputation, not verification. We assume outputs are correct because the model is advanced, widely used, or well branded. But probabilistic systems do not become deterministic simply because they scale.

When I look at Mira Network, I see a different premise.

Instead of upgrading intelligence, it upgrades validation.

AI output enters the system.

Mira decomposes the response into verifiable claims.

Independent validator nodes staking $MIRA evaluate those claims.

Weighted consensus determines finality.

Incorrect verification risks slashing.
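
To make the flow above concrete, here is a minimal Python sketch of stake-weighted consensus with slashing. It is an illustration under my own assumptions, not Mira's actual protocol: the `Validator` class, the function names, the two-thirds threshold, and the 10% slash rate are all hypothetical, and the step that decomposes a response into claims is assumed to have happened already.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Validator:
    name: str
    stake: float  # staked amount in hypothetical token units


def weighted_consensus(
    votes: Dict[str, bool],
    validators: Dict[str, Validator],
    threshold: float = 2 / 3,  # assumed supermajority, not a documented value
) -> Optional[bool]:
    """Return True or False if one side holds >= threshold of total stake, else None."""
    total = sum(v.stake for v in validators.values())
    if total <= 0:
        return None
    yes = sum(validators[n].stake for n, vote in votes.items() if vote)
    no = sum(validators[n].stake for n, vote in votes.items() if not vote)
    if yes / total >= threshold:
        return True
    if no / total >= threshold:
        return False
    return None  # no finality: neither side reached the threshold


def slash_dissenters(
    votes: Dict[str, bool],
    validators: Dict[str, Validator],
    verdict: Optional[bool],
    slash_rate: float = 0.10,  # assumed penalty, purely illustrative
) -> None:
    """Reduce the stake of validators who voted against a finalized verdict."""
    if verdict is None:
        return  # nothing finalized, so nobody is penalized
    for name, vote in votes.items():
        if vote != verdict:
            validators[name].stake *= 1 - slash_rate


if __name__ == "__main__":
    validators = {
        "a": Validator("a", 50.0),
        "b": Validator("b", 30.0),
        "c": Validator("c", 20.0),
    }
    # One claim extracted from a model response, with each validator's vote.
    claim = "The transfer amount matches the signed order."
    votes = {"a": True, "b": True, "c": False}

    verdict = weighted_consensus(votes, validators)
    slash_dissenters(votes, validators, verdict)
    print(claim, "->", verdict)
    print({name: v.stake for name, v in validators.items()})
```

The design choice worth noticing is that no single validator decides the outcome. Influence scales with stake, and voting against a finalized verdict costs stake, which is where the economic pressure toward honest verification would come from in a scheme like this.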

That architecture doesn’t eliminate model error. It removes unilateral authority.

To me, that’s the structural shift.

The traditional AI stack is centralized inference.

Mira introduces decentralized verification on top of it.

But there’s tension here.

If centralized providers improve enough, developers may decide that verification layers are unnecessary. If models hallucinate significantly less, the perceived need for external consensus weakens. Mira’s relevance depends on whether uncertainty remains a persistent feature of AI, not a temporary flaw.

My position is pragmatic.

As long as AI systems remain probabilistic and increasingly autonomous, especially in financial or governance contexts, blind trust becomes a systemic risk.

Verification markets become insurance.

If Mira succeeds, it won’t be because AI failed dramatically.

It will be because responsible systems chose verification over assumption.

That’s a quieter but more durable form of adoption.

@Mira - Trust Layer of AI #Mira