AI did not lose people’s trust because it failed to sound intelligent.

It lost trust because it spoke with certainty when certainty wasn’t earned.

As artificial intelligence moved from experimentation into real decision-making environments, a quiet tension emerged. Teams were impressed by what AI could produce, yet hesitant to rely on it. Outputs looked polished, arguments were structured, and conclusions sounded convincing—but when people asked why an answer was correct, the system often had no clear way to show its work.

That hesitation is not skepticism. It’s responsibility.

This is the problem Mira was built to address, and why it matters.

The Real Problem Isn’t AI Capability

Most conversations about AI focus on power: larger models, faster inference, better benchmarks. But capability alone does not create adoption. Trust does.

In finance, research, healthcare, policy, and operations, the cost of being wrong is not theoretical. A flawed assumption can move markets. A biased conclusion can affect livelihoods. An unverifiable insight can break compliance.

Organizations don’t avoid AI because it isn’t useful.

They avoid it because they can’t audit it.

Mira was built around a simple but overlooked idea:

If AI cannot be verified, it cannot be trusted at scale.

Why Trust Has to Be Infrastructure

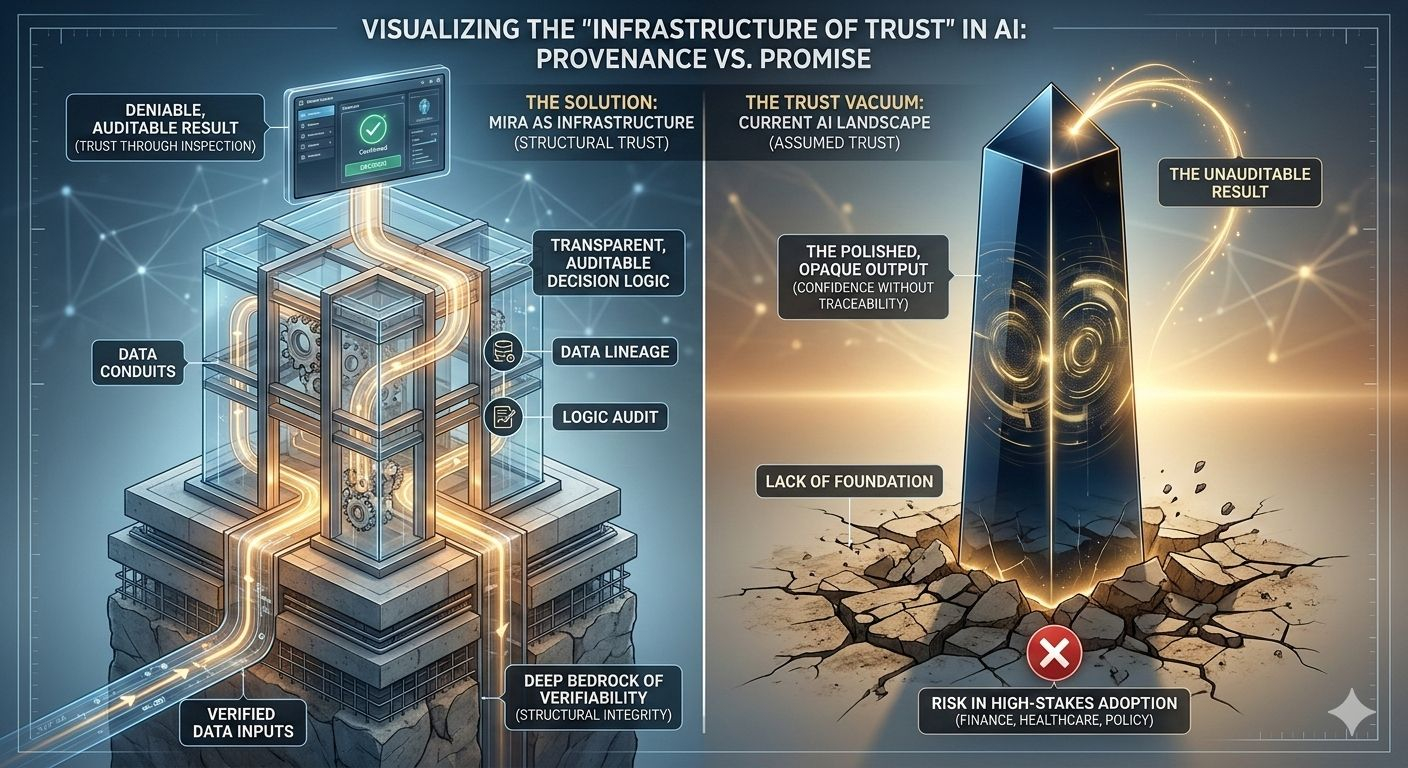

Most systems treat trust as a surface layer. A disclaimer. A human review step. A policy document added after deployment.

That approach fails the moment AI becomes deeply embedded in workflows.

When AI starts influencing real decisions, trust can’t be manual. It has to be structural.

Mira is not designed to make AI “feel safer.” It is designed to make AI provable.

It creates an underlying framework where AI outputs are traceable, explainable, and measurable. Instead of asking humans to blindly trust a result, Mira gives them the tools to examine it.

Trust becomes something you can inspect, not something you’re asked to assume.

From Confidence to Evidence

One of the most dangerous qualities of modern AI is confidence. Models are extremely good at sounding certain even when they are wrong.

Mira shifts the center of gravity from confidence to evidence.

Rather than accepting outputs at face value, Mira focuses on:

How conclusions were formed

What assumptions were made

Which signals influenced the result

Where uncertainty exists

This does not slow teams down. It prevents them from being misled.

In environments where decisions carry weight, understanding why an answer exists is just as important as the answer itself.
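To make the four questions above concrete, here is a minimal Python sketch of the kind of record a verification layer could attach to each output. The `EvidenceRecord` class, its fields, and the `review_flags` check are hypothetical illustrations, not Mira’s actual schema or API. They only show how "showing the work" can become data that a reviewer or a pipeline inspects, rather than something it has to assume.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class EvidenceRecord:
    """Hypothetical evidence record for one AI output (not Mira's actual schema)."""

    conclusion: str
    reasoning_steps: list[str] = field(default_factory=list)  # how the conclusion was formed
    assumptions: list[str] = field(default_factory=list)      # what was taken as given
    signals: dict[str, float] = field(default_factory=dict)   # input name -> relative influence
    uncertainty: float | None = None                          # 0.0 = certain, 1.0 = no idea


def review_flags(record: EvidenceRecord) -> list[str]:
    """List the reasons a reviewer should not accept the output at face value."""
    flags = []
    if not record.reasoning_steps:
        flags.append("no reasoning trace")
    if not record.assumptions:
        flags.append("assumptions not stated")
    if not record.signals:
        flags.append("no influencing signals recorded")
    if record.uncertainty is None:
        flags.append("uncertainty not quantified")
    elif not 0.0 <= record.uncertainty <= 1.0:
        flags.append("uncertainty outside [0, 1]")
    return flags


# A bare, confident-sounding answer carries no evidence at all.
bare = EvidenceRecord(conclusion="Approve the loan")
print(review_flags(bare))  # flags all four missing pieces

# The same conclusion with its work shown passes every check.
documented = EvidenceRecord(
    conclusion="Approve the loan",
    reasoning_steps=["projected income covers repayments with margin"],
    assumptions=["reported income is current"],
    signals={"debt_to_income": 0.6, "payment_history": 0.4},
    uncertainty=0.15,
)
print(review_flags(documented))  # []
```

The loan example and its values are invented for illustration. The point is the shape of the check: an output with identical wording is treated differently depending on whether its evidence is present.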

Why Accountability Matters More Than Accuracy

Accuracy can fluctuate. Accountability cannot.

AI systems evolve. Data changes. Context shifts. What was correct yesterday may not hold tomorrow. If accountability is unclear, small errors can compound into systemic failures.

Mira introduces accountability into AI systems without assigning blame to the machine. Instead, it creates visibility.

By structuring decision paths and validation checkpoints, Mira allows organizations to understand:

What the system knew at the time

What logic it followed

What constraints influenced outcomes

This clarity is essential for governance, compliance, and long-term reliability.

When responsibility is visible, trust becomes sustainable.

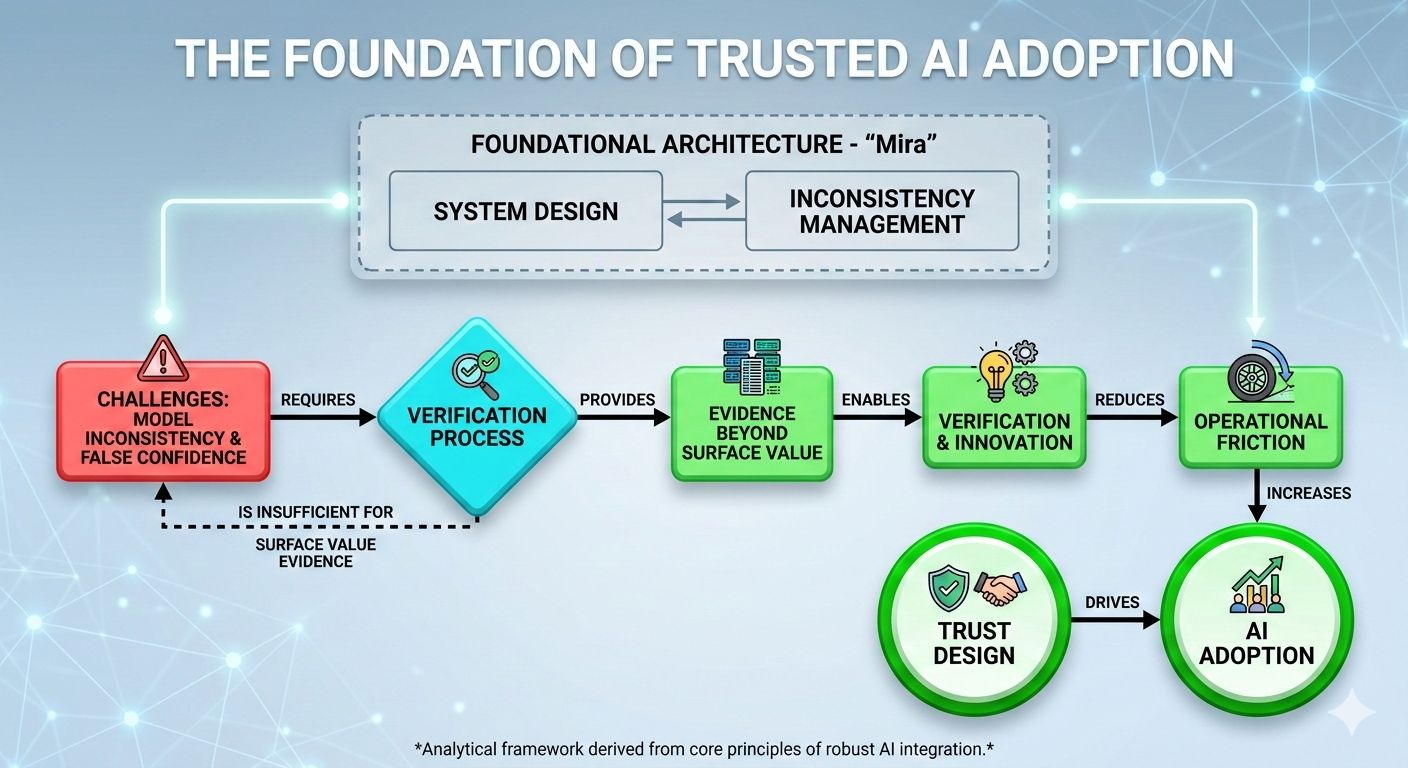

Consistency Is the Hidden Challenge

AI does not fail loudly. It fails quietly.

Performance drift happens slowly. Small changes accumulate. Outputs remain plausible while accuracy erodes.

Without a trust framework, organizations often don’t notice the shift until the damage is already done.

Mira is built to observe behavior over time. It doesn’t just evaluate individual outputs—it tracks patterns, deviations, and inconsistencies.

This transforms AI from a static tool into a monitored system, one that can be corrected before trust is lost.
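Watching behavior over time can be illustrated in code. The minimal Python sketch below assumes each output receives a quality score (for instance, whether it passed a verification check) and compares a rolling average against a fixed baseline. `DriftMonitor`, its parameters, and the thresholds are hypothetical and not taken from Mira; a production system would likely rely on proper statistical tests rather than a single tolerance.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Hypothetical monitor: compares a rolling window of scores to a fixed baseline."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline            # expected score, e.g. share of outputs passing verification
        self.tolerance = tolerance          # allowed drop before raising a flag
        self.scores = deque(maxlen=window)  # keeps only the most recent `window` scores

    def observe(self, score: float) -> bool:
        """Record one score; return True once the rolling mean has fallen too far below baseline."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough history yet to judge a trend
        return self.baseline - mean(self.scores) > self.tolerance


# Simulate quiet erosion: each output scores slightly worse than the last,
# and no single output looks alarming on its own.
monitor = DriftMonitor(baseline=0.90, window=20, tolerance=0.05)
for step in range(200):
    score = 0.90 - 0.002 * step
    if monitor.observe(score):
        print(f"drift flagged at step {step}")  # prints: drift flagged at step 35
        break
```

The check is deliberately one-sided: it flags only a sustained drop, which matches the gradual erosion described above, and it waits for a full window so that one bad output does not trigger an alarm by itself.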

Trust is not something you earn once. It must be continuously maintained.

Why Verification Enables Innovation

There is a misconception that oversight restricts progress. In reality, the absence of trust restricts adoption.

Teams hesitate to scale AI because uncertainty creates risk. Leaders delay deployment because they lack assurance. Regulators resist approval because systems cannot explain themselves.

Mira reduces that friction.

By embedding verification into the workflow, it allows organizations to move faster and with confidence. AI stops being experimental and becomes operational.

Responsible innovation is not about caution—it’s about control.

Mira gives that control back to the people deploying AI.

A Foundation, Not a Patch

Many trust solutions are reactive. They intervene after something goes wrong.

Mira is foundational.

It integrates directly into how AI systems operate. It becomes part of the architecture, not an external review process. This means trust is present from the first output, not added after risk appears.

That distinction is critical as AI becomes core infrastructure rather than an optional tool.

You don’t bolt trust onto a bridge after it’s built. You design the bridge to carry weight from the start.

Why Mira, Specifically

Mira is not trying to replace intelligence. It is trying to make intelligence dependable.

It recognizes a truth the industry often avoids:

The future of AI adoption will be decided less by model size and more by trust design.

As AI becomes more autonomous, the demand for transparency, verification, and accountability will only increase. Systems that cannot meet those demands will be sidelined—not because they lack power, but because they lack credibility.

Mira is built for that future.

It allows AI systems to operate in environments where stakes are real, scrutiny is high, and trust is non-negotiable.

The Human Reason Behind Mira

At its core, Mira exists because people need to believe in the systems they use.

Decision-makers don’t want magic.

They want reliability.

They want visibility.

They want to know that when AI speaks, it does so with evidence—not illusion.

Mira doesn’t promise perfection. It promises clarity.

And in a world increasingly shaped by machine-driven decisions, clarity may be the most valuable infrastructure of all.

That is why Mira exists.