@Mira - Trust Layer of AI #Mira

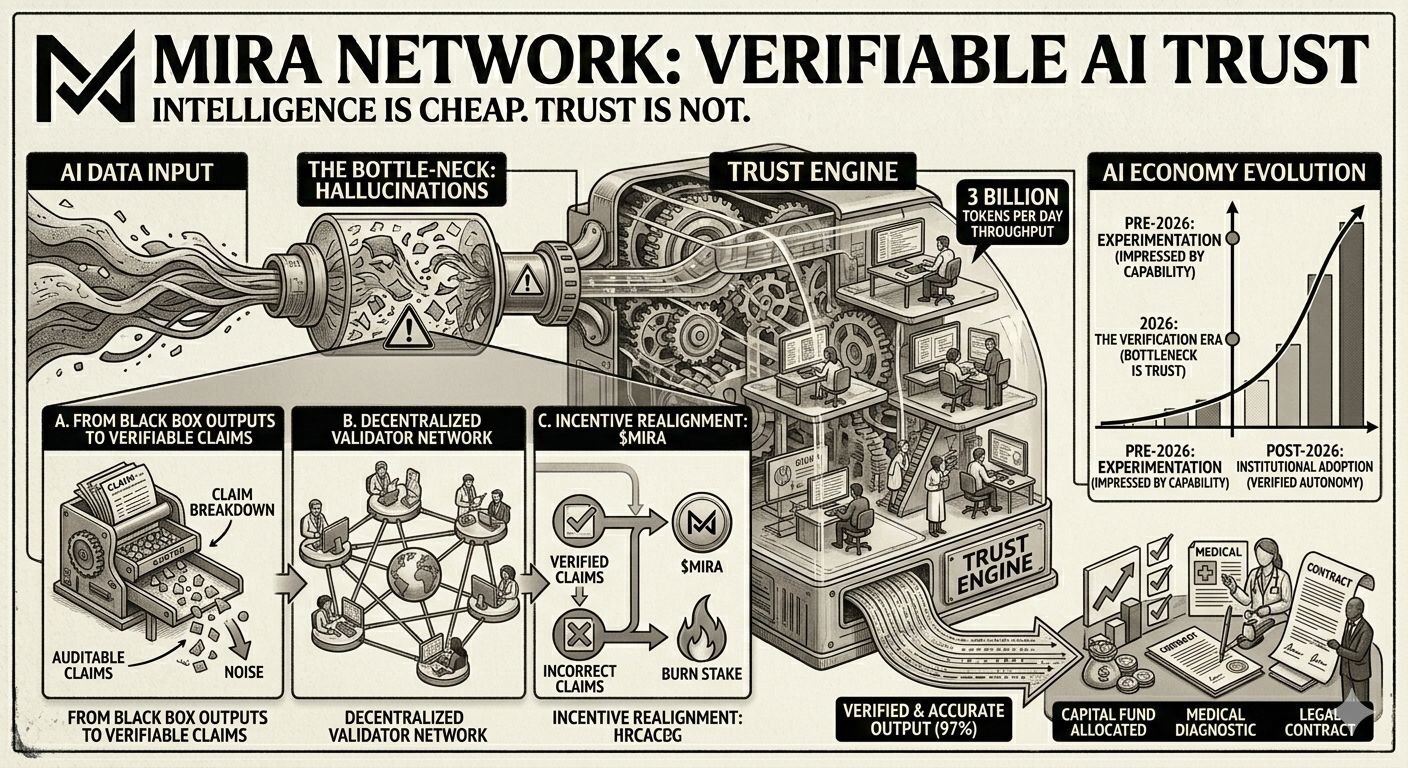

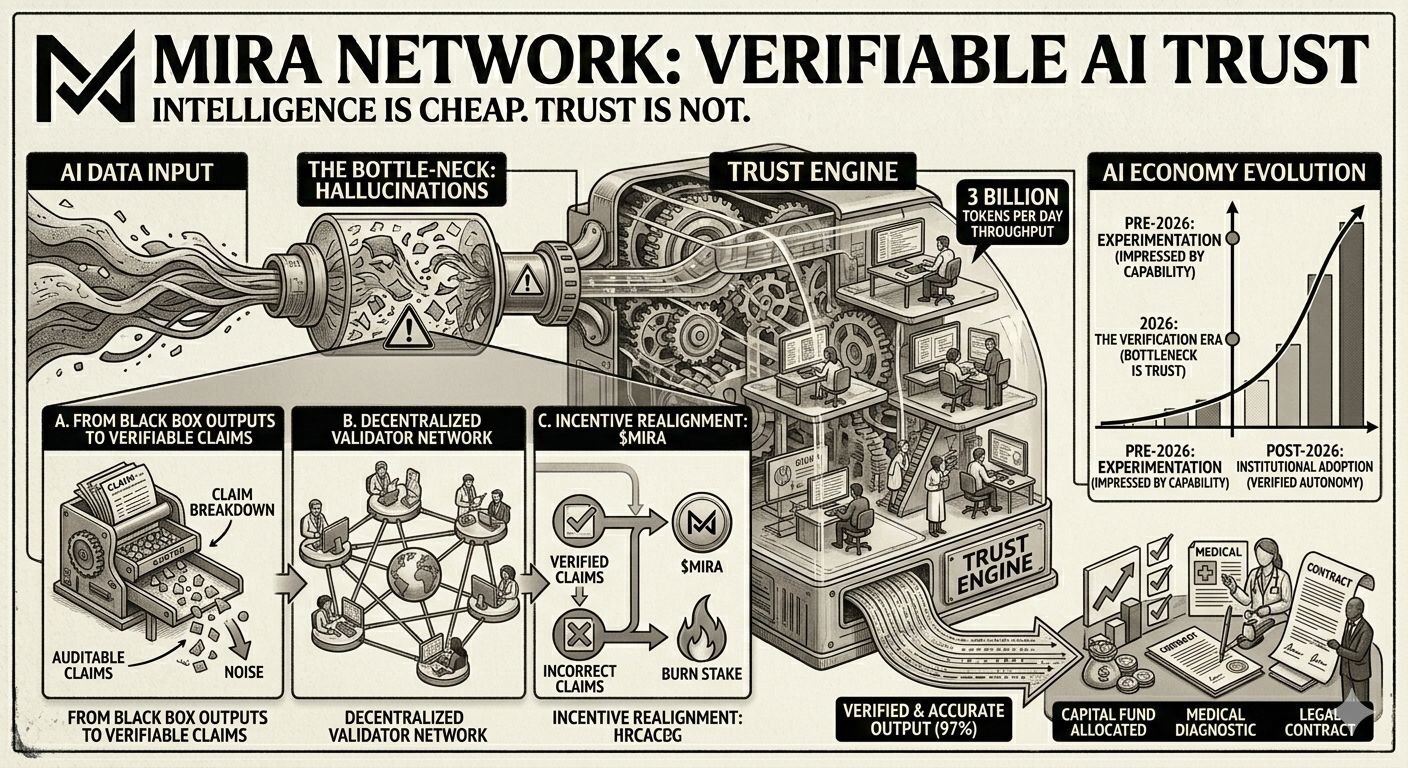

AI’s biggest weakness isn’t capability. It’s credibility.

We’ve moved past the phase of being impressed by what AI can generate.

Now the real question is: can it prove it?

Hallucinations were tolerable when AI was writing captions.

They are unacceptable when AI is allocating capital, assisting medical workflows, or influencing legal outcomes.

The bottleneck of the AI economy is no longer compute.

It’s verification.

That’s where Mira steps in — not as another model, not as another interface — but as the missing trust layer.

From Black Box Outputs to Verifiable Claims

Traditional AI works like a sealed engine.

Input goes in. Output comes out. Confidence sounds convincing.

But confidence is not proof.

Mira breaks AI outputs into granular, auditable claims.

Each claim is independently verified by a decentralized validator network.

Unlike Bitcoin's Proof of Work, which proves that computational effort was spent, Mira's Proof of Verification is designed to attest that a claim is correct.

Validators cross-check outputs across models and sources.

Correct validation earns $MIRA.

Incorrect validation burns stake.

Incentives are aligned with accuracy — not noise.
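As a rough illustration of that loop, here is a minimal sketch. It assumes a simple majority vote, a flat reward, and a fixed slash fraction. The names `Validator`, `settle_claim`, `REWARD`, and `SLASH_FRACTION` are hypothetical and not taken from Mira's protocol, whose actual consensus rule and token economics may differ.

```python
from dataclasses import dataclass

# Hypothetical parameters for illustration only; not Mira's real values.
REWARD = 1.0          # reward per vote that matches consensus, in $MIRA
SLASH_FRACTION = 0.1  # share of stake burned per vote that misses consensus


@dataclass
class Validator:
    name: str
    stake: float
    balance: float = 0.0


def settle_claim(votes: dict[str, bool], validators: dict[str, Validator]) -> bool:
    """Settle one claim by simple majority and update validators in place.

    `votes` maps validator name -> True (claim holds) or False (claim fails).
    Assumption: a "correct" validation is one that agrees with the majority.
    Ties resolve to False, meaning the claim is not verified.
    """
    yes_votes = sum(votes.values())
    verdict = yes_votes * 2 > len(votes)
    for name, vote in votes.items():
        validator = validators[name]
        if vote == verdict:
            validator.balance += REWARD                        # earn for agreeing
        else:
            validator.stake -= validator.stake * SLASH_FRACTION  # burn stake
    return verdict


# One AI answer split into two granular claims, each voted on by three validators.
validators = {n: Validator(n, stake=100.0) for n in ("a", "b", "c")}
claims = {
    "Paris is the capital of France": {"a": True, "b": True, "c": True},
    "The Eiffel Tower is in Berlin": {"a": False, "b": False, "c": True},
}
results = {claim: settle_claim(votes, validators) for claim, votes in claims.items()}
```

In this toy run, the first claim is verified, the second is rejected, and validator `c` loses part of its stake for backing the false claim. Lying costs money, which is the whole point of the design.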

Real Infrastructure. Real Scale.

In early 2026, Mira’s mainnet surpassed 3 billion tokens processed per day.

That isn’t marketing. That’s throughput.

Applications like Klok (a multi-model AI interface) and WikiSentry (an AI-powered fact-checking layer) have demonstrated how verification can push model accuracy from ~70% toward 97%.

That delta changes everything.

It’s the difference between experimentation and institutional adoption.
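To make that gap concrete, here is a quick back-of-the-envelope calculation. It takes the ~70% and 97% figures above at face value, and the helper `errors_per_thousand` is a name made up for this example.

```python
def errors_per_thousand(accuracy: float) -> int:
    """Expected number of wrong outputs in 1,000 claims at a given accuracy."""
    return round((1 - accuracy) * 1000)


before = errors_per_thousand(0.70)  # unverified baseline
after = errors_per_thousand(0.97)   # with verification
reduction = before / after          # how many times fewer errors

print(f"{before} -> {after} errors per 1,000 claims ({reduction:.0f}x fewer)")
```

Seen this way, the jump is not 27 percentage points of accuracy. It is roughly a tenfold drop in errors.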

Verified Autonomy Is the Endgame

AI agents are moving beyond conversation.

They will manage assets. Execute contracts. Coordinate economic value.

At that level, “probably correct” is a systemic risk.

Mira isn’t trying to make AI louder.

It’s making AI accountable.

Because in the next phase of the AI economy, the winners won’t be the models that sound the smartest.

They’ll be the systems that can prove they’re right.