When I first started looking into Mira, I thought it was just another AI project trying to reduce hallucinations. That’s what most people focus on. But the more I dug in, the more I realized the team is attempting something deeper. They’re not just fixing AI errors; they’re trying to build an economy around verification itself.

The idea is simple but powerful. AI is fast, creative, and helpful, but it’s often unreliable. One model gives an answer, and we hope it’s correct. That might be fine for small tasks, but when AI starts influencing healthcare, finance, research, or governance, hope isn’t enough. We need a way to measure accuracy and reward it.

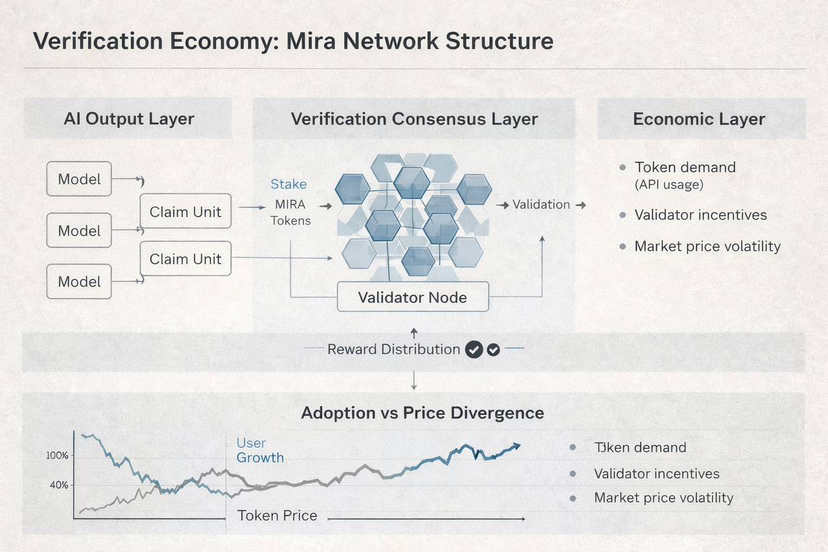

Mira’s solution is a decentralized consensus system for AI outputs. Instead of trusting a single model, every claim is routed through a network of independent verifier nodes. Multiple AI systems evaluate the same output, and a consensus is formed. If enough verifiers agree, the claim is marked as verified. If not, it’s flagged or challenged. I’m no longer just asking a model a question; I’m triggering a verification process.
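To make that flow concrete, here is a minimal sketch of threshold-based consensus. It is my own illustration, not Mira’s actual protocol: the function name `verify_claim`, the two-thirds threshold, and the simple true/false verdicts are all assumptions.

```python
from collections import Counter
from fractions import Fraction

def verify_claim(votes, threshold=Fraction(2, 3)):
    """Return "verified" if enough verifier nodes judged the claim correct.

    votes: dict mapping a node id to its verdict (True = claim judged correct).
    threshold: share of agreeing nodes required (hypothetical value).
    """
    if not votes:
        # With no verdicts there is nothing to form a consensus from.
        return "flagged"
    tally = Counter(votes.values())
    # Fraction keeps the comparison exact, so 2 of 3 votes meets a 2/3 threshold.
    agree_share = Fraction(tally[True], len(votes))
    return "verified" if agree_share >= threshold else "flagged"

# Example: three independent verifiers evaluate the same claim.
result = verify_claim({"node-a": True, "node-b": True, "node-c": False})
```

In this toy version, a claim that falls short of the threshold is simply flagged; a real network would presumably need a challenge or re-evaluation step on top of that.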

The economic layer is what makes this sustainable. The MIRA token powers the network. Validators stake tokens to participate. If they act honestly, they earn rewards. If they behave dishonestly, part of their stake is lost. Truth becomes financially incentivized.
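The incentive loop can be sketched in a few lines. This is a toy model under loud assumptions: the names `Validator` and `settle`, the flat reward, and the 10% slash rate are hypothetical, and treating disagreement with the consensus as a stand-in for dishonesty is my simplification, not something Mira documents.

```python
from dataclasses import dataclass

@dataclass
class Validator:
    name: str
    stake: float  # tokens currently locked by this validator

def settle(validators, verdicts, consensus, reward=1.0, slash_rate=0.1):
    """Reward validators whose verdict matched the consensus; slash the rest.

    verdicts: dict mapping validator name to its verdict for one claim.
    reward and slash_rate are illustrative values, not real network parameters.
    """
    for v in validators:
        if verdicts[v.name] == consensus:
            v.stake += reward                 # matching verdict earns a reward
        else:
            v.stake -= v.stake * slash_rate   # part of the stake is lost
    return validators

# Example: one validator matches the consensus, one does not.
alice = Validator("alice", 100.0)
bob = Validator("bob", 100.0)
settle([alice, bob], {"alice": True, "bob": False}, consensus=True)
```

The point of the sketch is the asymmetry: a wrong or dishonest verdict costs a share of everything staked, while an honest one earns a small steady reward.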

The network is already in use. Millions of users interact with applications built on Mira, and the verification layer coordinates over a hundred AI models across distributed nodes. Developer tools make it easy to integrate verification into apps. It’s not just theory; it’s real infrastructure.

There are challenges. Token price volatility can weaken or distort incentives. If most validators rely on similar AI models, they may share the same blind spots, and the consensus could inherit that bias. Verification costs money, so access could become uneven. Regulation could complicate operations. But the system is designed to balance these factors while keeping trust and accuracy at its core.

What excites me is that Mira is not just making AI outputs more reliable. It’s creating a new way to think about value in the AI era. Verified actions, not just raw outputs, become scarce and valuable. If this succeeds, we’re not just getting smarter answers; we’re getting verified intelligence, and that could quietly become some of the most important infrastructure in the AI world.