I’ve spent enough time integrating autonomous systems to know that truth is not a philosophical problem—it’s an operational one. When agents disagree or drift, the cost isn’t abstract. It shows up as deadlocks, corrupted state, and teams waking up at 6 a.m. to patch assumptions that were never enforced in the first place. That’s why when I looked at Mira Network, I didn’t care about how fast the ecosystem was growing. I cared about whether a claim could survive adversarial conditions.

Most crypto narratives still revolve around surface metrics: user counts, transactions per second, announced partnerships. None of those tell you whether a system can resolve disagreement between autonomous AI agents. Growth doesn't equal reliability. A network can be busy and still be wrong.

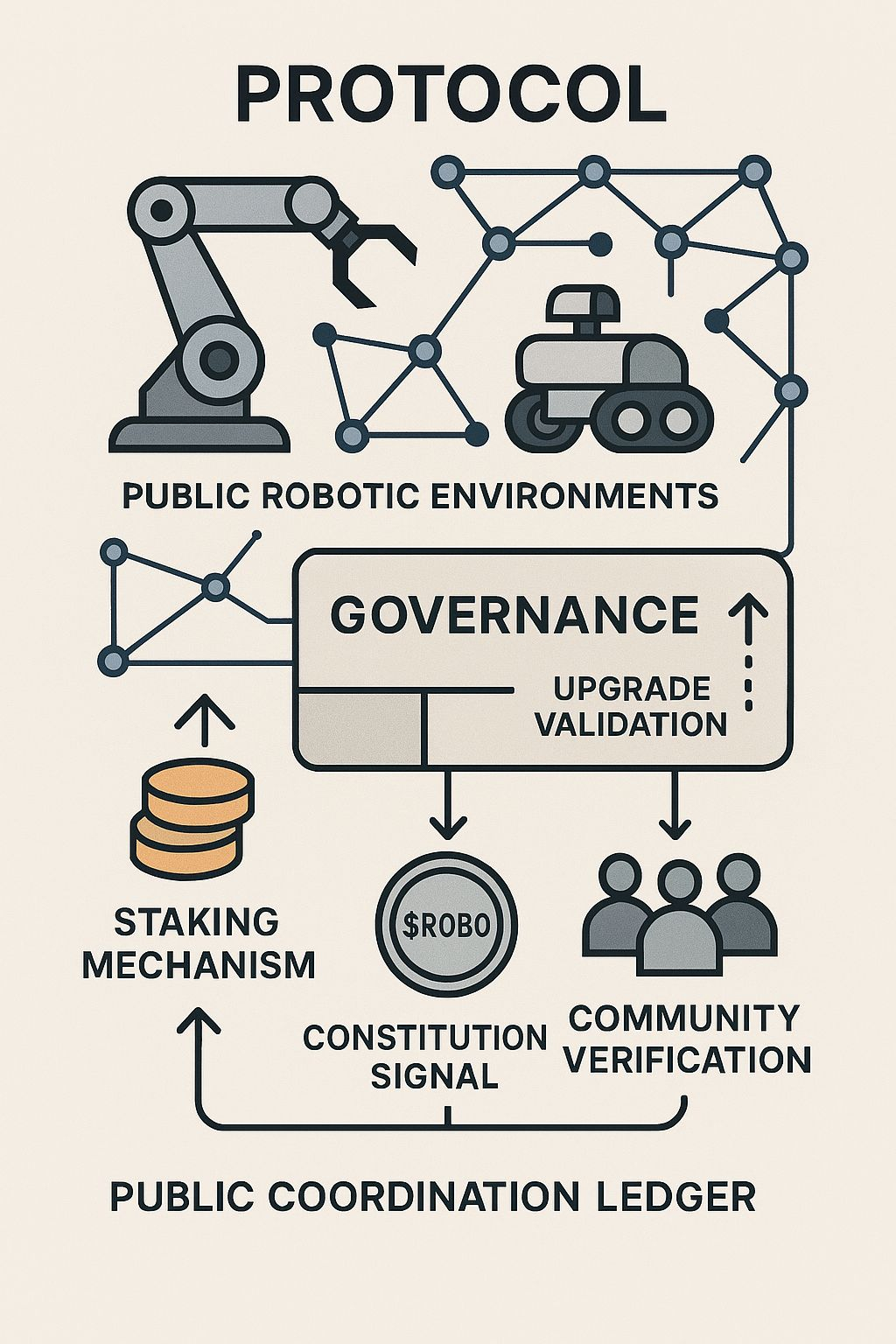

What matters is infrastructure. Mira treats truth as something that must be produced through a game-theoretic mechanism: consensus over claims, not blocks. Instead of assuming that an output is valid because it exists on a ledger, the protocol forces each statement through a distributed AI judgment process, where independent verifiers must agree before it is accepted. Accuracy becomes something the mechanism produces, not something users hope for. And because each verification outcome is backed by cryptographic proof, an AI's language becomes something you can audit after the fact.
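To make "consensus over claims" concrete, here is a minimal Python sketch of stake-weighted agreement on a single claim. It is not Mira's protocol or API: the `Vote` and `resolve_claim` names, the two-thirds supermajority threshold, and the use of a plain SHA-256 digest in place of a real cryptographic certificate (which would involve signatures) are all assumptions made for illustration.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    node_id: str
    stake: float   # capital bonded by this verifier (hypothetical units)
    verdict: bool  # True = claim judged valid, False = judged invalid


def resolve_claim(claim: str, votes: list[Vote], threshold: float = 2 / 3) -> dict:
    """Stake-weighted consensus on one claim (illustrative sketch only)."""
    total = sum(v.stake for v in votes)
    if total <= 0:
        raise ValueError("no stake behind any vote")
    yes = sum(v.stake for v in votes if v.verdict)
    no = total - yes
    if yes / total >= threshold:
        outcome = "valid"
    elif no / total >= threshold:
        outcome = "invalid"
    else:
        outcome = "no_consensus"  # neither side reached the supermajority
    record = {
        "claim": claim,
        "outcome": outcome,
        # Sorted so the record does not depend on the order votes arrived in.
        "votes": sorted((v.node_id, v.stake, v.verdict) for v in votes),
    }
    # Assumption: a SHA-256 digest of the canonical record stands in for a
    # certificate. Anyone holding the same record can recompute and compare it,
    # but a real system would also need signatures to prove who voted.
    payload = json.dumps(record, sort_keys=True).encode()
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record
```

With stakes of 40 and 30 voting valid and 30 voting invalid, 70% of the stake agrees and the claim resolves as `valid`; if only the 40 votes valid, neither side reaches two-thirds and the result is `no_consensus`. Sorting the votes before hashing means the digest is the same no matter which verdict arrives first.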

This is where participation mechanics reveal their depth. Stake-weighted entry and work bonds matter more than simple fees because they change behavior. Paying a fee only proves access. Posting a bond proves responsibility. If a node submits a false or low-quality claim, it risks capital and exclusion. The protocol doesn’t just record decisions; it enforces consequences.

Fee-based access creates cheap participation and expensive cleanup. Bonded participation creates expensive dishonesty and cheap alignment. That’s the psychological shift most systems miss. You don’t motivate honesty with promises—you design it so that dishonesty is irrational.
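The fee-versus-bond argument is really an expected-value comparison, and a toy model makes it explicit. The sketch below is an illustration under stated assumptions, not Mira's reward or slashing formula: the parameters (`reward`, `work_cost`, `detect_prob`) are hypothetical, honest work is assumed to always be paid, and a detected dishonest node is assumed to lose its reward plus, if one was posted, its entire bond.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    reward: float       # R: payment for an accepted verification (hypothetical)
    work_cost: float    # c: cost of actually doing the work honestly
    detect_prob: float  # p: chance a lazy or false answer is caught


def honest_payoff(t: Task, fee: float = 0.0) -> float:
    # Honest nodes do the work, are always paid, and get any bond back.
    return t.reward - t.work_cost - fee


def dishonest_payoff(t: Task, fee: float = 0.0, bond: float = 0.0) -> float:
    # Dishonest nodes skip the work. If caught (probability p) they lose
    # the reward and, when a bond was posted, the bond is slashed.
    p = t.detect_prob
    return (1 - p) * t.reward - p * bond - fee


def min_deterrent_bond(t: Task) -> float:
    # Smallest bond B at which honesty is at least as good as cheating:
    #   R - c >= (1 - p) * R - p * B   <=>   B >= c / p - R
    # The fee does not appear: it is paid in both branches and cancels.
    return max(0.0, t.work_cost / t.detect_prob - t.reward)
```

With `reward=1.0`, `work_cost=0.6`, `detect_prob=0.5` and a fee of 0.1, skipping the work yields 0.4 against 0.3 for doing it honestly. Because the fee is paid either way, it cancels out of the comparison and can never close that gap. A bond can: anything above `work_cost / detect_prob - reward` (0.2 here) makes cheating the worse bet, while in this model an honest node gets its bond back and pays nothing for posting it.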

Sybil resistance here is not cosmetic. It's structural. Agents must demonstrate stake, perform verifiable work, and converge on shared meaning. Because influence tracks bonded capital rather than the number of identities, spinning up a thousand nodes buys an attacker no more influence than the same capital in one node would. This turns the network into a decentralized epistemology layer, where knowledge is not declared but negotiated and proven. The result is a reliability layer for autonomous AI, one that doesn't depend on trusting any single model.
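A small sketch shows why stake, rather than identity count, is what blunts Sybil attacks. It compares an attacker's share of voting weight under one-identity-one-vote against stake weighting, before and after the attacker splits its stake across many identities. The function names and example numbers are hypothetical; this illustrates the general principle of stake-weighted voting, not Mira's specific admission rules.

```python
def node_weight(stakes: dict[str, float], node: str, stake_weighted: bool) -> float:
    # Under stake weighting a node counts by its stake; otherwise every
    # identity counts once, regardless of capital.
    return stakes[node] if stake_weighted else 1.0


def voting_share(stakes: dict[str, float], attacker: set[str], stake_weighted: bool) -> float:
    # Fraction of total voting weight controlled by the attacker's identities.
    total = sum(node_weight(stakes, n, stake_weighted) for n in stakes)
    controlled = sum(node_weight(stakes, n, stake_weighted) for n in attacker)
    return controlled / total


def split_identity(stakes: dict[str, float], node: str, k: int) -> tuple[dict[str, float], set[str]]:
    # Replace one node with k Sybil identities that divide its stake equally.
    share = stakes[node] / k
    out = {n: s for n, s in stakes.items() if n != node}
    sybils = {f"{node}-{i}" for i in range(k)}
    for s in sybils:
        out[s] = share
    return out, sybils
```

Starting from three honest nodes and one attacker, each with 100 units of stake, the attacker holds 25% of the weight under either rule. After splitting into ten identities of 10 units each, its share under one-identity-one-vote jumps to 10/13 (about 77%), while under stake weighting it stays at exactly 25%.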

After years of watching systems fail in subtle ways, I’ve learned something unromantic: marketing attracts attention, but enforcement keeps networks alive. Mira feels less like a story and more like scaffolding. It doesn’t sell confidence; it manufactures it through cryptography and incentives.

And in environments where AI decisions touch real systems, that difference is not philosophical. It’s survival.