AI is getting insanely good at all sorts of things, but let’s be real: it’s still not something you can just blindly trust. Large language models and generative AI make mistakes. They hallucinate facts, get details wrong, show bias, and sometimes just miss the point. That’s maybe fine if you’re using them to draft an email or come up with ideas. But in places where mistakes really matter, like robots making decisions on their own, automated trading, healthcare, or defense, a simple slip-up can lead to serious problems.

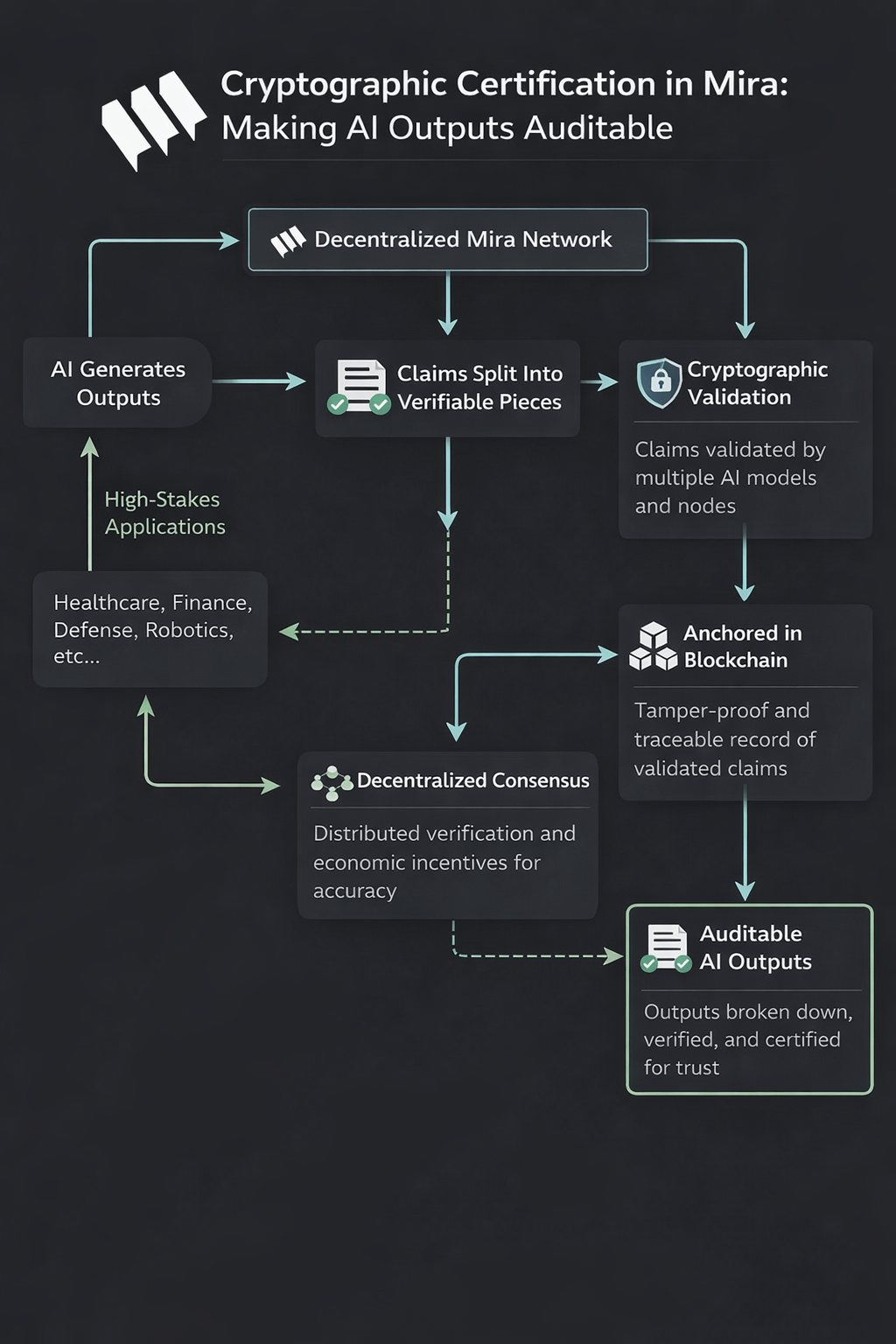

This is where the Mira Network steps in. Instead of chasing some mythical perfect AI, Mira flips the script. It uses a decentralized system to turn AI outputs into something you can actually audit: a set of claims that are cryptographically certified.

The Trust Problem with AI

Usually, AI works like a black box. It spits out an answer, and you can either trust it or go through the hassle of checking every detail yourself. There’s no built-in way to systematically verify what it says. That’s a huge problem if you want to use AI in anything that needs rock-solid accuracy, like self-driving cars, factory robots, or compliance systems.

How Mira Changes the Game

Mira doesn’t just take the AI’s answer as a big chunk of text. Instead, it breaks things down. Every complex output gets split into smaller, bite-sized claims. Say an AI writes a technical report: Mira turns each statement or fact into its own mini claim, something you can test and verify by itself.

Think of it like checking every line in a financial spreadsheet instead of just assuming the total at the bottom is right. By isolating each claim, Mira makes it possible to check each one individually.
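To make the splitting idea concrete, here’s a rough Python sketch. It is not Mira’s actual decomposition logic, which this post doesn’t spell out. It simply assumes one sentence equals one claim, and the `Claim` type and `split_into_claims` function are made-up names for illustration.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    # Hypothetical ID: first 12 hex characters of the SHA-256 of the claim text.
    claim_id: str
    text: str


def split_into_claims(output: str) -> list[Claim]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    claims = []
    for sentence in sentences:
        sentence = sentence.strip()
        if sentence:
            digest = hashlib.sha256(sentence.encode("utf-8")).hexdigest()[:12]
            claims.append(Claim(digest, sentence))
    return claims


report = "The pump runs at 40 psi. The valve was replaced in 2021. Flow is stable."
claims = split_into_claims(report)
for claim in claims:
    print(claim.claim_id, claim.text)
```

A real system would need something smarter than sentence boundaries, since one sentence can hide several facts, but the shape is the same: one output in, a list of independently checkable claims out.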

Cryptographic Proof, Not Just Promises

Every claim goes through a decentralized validation process and gets anchored to a blockchain. The results are locked in using cryptography, so you get a tamper-proof record showing which claims got checked, who checked them, and how the group agreed. This isn’t just “feeling confident” about the AI’s answer; this is hard evidence. You can trace every claim back to the process and people (or models) that verified it.
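Here’s a minimal sketch of why a hash-linked record is tamper-evident. It uses a plain in-memory hash chain as a stand-in for a blockchain, and the record fields (`claim`, `validators`, `verdict`, `prev`) are assumptions for illustration, not Mira’s real on-chain format.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log: list, claim: str, validators: list, verdict: bool) -> dict:
    """Append a verification record that commits to the previous record's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"claim": claim, "validators": validators, "verdict": verdict, "prev": prev}
    entry = dict(body, hash=record_hash(body))
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every hash and check each link back to the previous record."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or record_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
append_record(log, "The pump runs at 40 psi.", ["node-a", "node-b", "node-c"], True)
append_record(log, "The valve was replaced in 2021.", ["node-a", "node-b", "node-c"], False)
print(verify_log(log))     # True: the untouched log checks out

log[0]["verdict"] = False  # someone quietly edits an old verdict
print(verify_log(log))     # False: the stored hash no longer matches
```

The point is that changing any past record breaks the hashes, so edits can’t go unnoticed. A real deployment would add digital signatures and an actual distributed ledger on top of this.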

Real Consensus, Not Centralized Control

Mira spreads validation across lots of different AI models and nodes, not just one authority. Several models look at the same claim, compare notes, and have to agree before it’s accepted. If there’s a disagreement, the protocol demands a closer look or even penalizes the models that mess up.

This setup reduces the risk of a single point of failure. If one model gets it wrong, others can catch the mistake and call it out. It also helps keep bias in check, since no one model gets the final word.
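As a rough illustration of multi-model agreement, here’s a supermajority vote in Python. The two-thirds threshold and the `reach_consensus` function are assumptions for this sketch; the post doesn’t state Mira’s actual consensus rule.

```python
from collections import Counter
from fractions import Fraction


def reach_consensus(votes: dict, threshold: Fraction = Fraction(2, 3)):
    """Return (verdict, dissenters).

    verdict is None when no answer reaches the threshold, which would be
    the cue for a closer look at the claim.
    """
    if not votes:
        return None, []
    tally = Counter(votes.values())
    top_verdict, top_count = tally.most_common(1)[0]
    if Fraction(top_count, len(votes)) >= threshold:
        dissenters = sorted(node for node, v in votes.items() if v != top_verdict)
        return top_verdict, dissenters
    return None, []


votes = {"model-a": True, "model-b": True, "model-c": False}
print(reach_consensus(votes))  # (True, ['model-c'])

split = {"model-a": True, "model-b": False}
print(reach_consensus(split))  # (None, [])
```

When the vote passes, the dissenters list shows which models disagreed with the group, which is the information a penalty step would need.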

Incentives That Actually Work

To keep everyone honest, Mira uses economic incentives. Validators have to put up value as a stake. If they verify claims accurately, they get rewarded. If they’re sloppy or dishonest, they lose money. This lines up everyone’s motives with the goal of making sure the information is accurate, just like how blockchain validators keep the ledger clean.
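The reward-and-penalty loop can be sketched in a few lines. The numbers here (a flat reward of 5 tokens, a 10% slash) and the `settle` function are invented for illustration; the post gives no actual parameters for Mira’s staking.

```python
def settle(stakes: dict, votes: dict, verdict: bool,
           reward: int = 5, slash_bps: int = 1000) -> dict:
    """Return updated stakes after one claim is settled.

    Validators whose vote matches the agreed verdict earn a flat reward.
    Everyone else, including validators who did not vote, loses
    slash_bps basis points of their stake (1000 bps = 10%).
    Integer token units are used to avoid floating-point rounding.
    """
    updated = {}
    for node, stake in stakes.items():
        if node in votes and votes[node] == verdict:
            updated[node] = stake + reward
        else:
            updated[node] = stake - (stake * slash_bps) // 10_000
    return updated


stakes = {"node-a": 100, "node-b": 100, "node-c": 100}
votes = {"node-a": True, "node-b": True, "node-c": False}
print(settle(stakes, votes, verdict=True))
# {'node-a': 105, 'node-b': 105, 'node-c': 90}
```

Over many rounds, accurate validators grow their stake and careless ones shrink theirs, which is the whole idea behind putting money on the line.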

Building Trust You Don’t Have to Guess At

With cryptographic validation, decentralized review, and consensus-based certification, Mira creates AI outputs that are verifiable from the ground up. There’s no need to trust a single company or authority, because everything runs on transparent, open protocol rules.

The big idea? Autonomous trust: AI that can work safely in high-stakes, real-world situations because every output isn’t just generated, it’s provably checked and certified. That’s how you move from hoping your AI is right to actually knowing it.
