I have noticed my tolerance for close-enough information has dropped, and I do not think it is because I suddenly expect perfection. I keep coming back to how quickly a minor error can grow into something bigger when AI-generated writing is everywhere. It ends up in internal notes, client communications, and public copy, and the wrong detail often travels faster than the correction. When people talk about fabricated facts, they are pointing to that exact risk. The text can feel settled and authoritative while the underlying claim is simply untrue. Sometimes the model makes something up. Sometimes it repeats something misleading in a smoother form. What makes it dangerous is that confidence reads like credibility, even when it should not. Either way, the output arrives as a finished story while the evidence underneath it is missing or too hard to inspect, and that gap is where real damage starts.

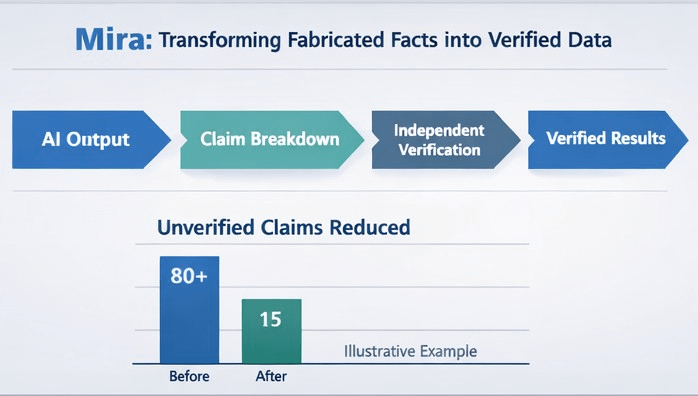

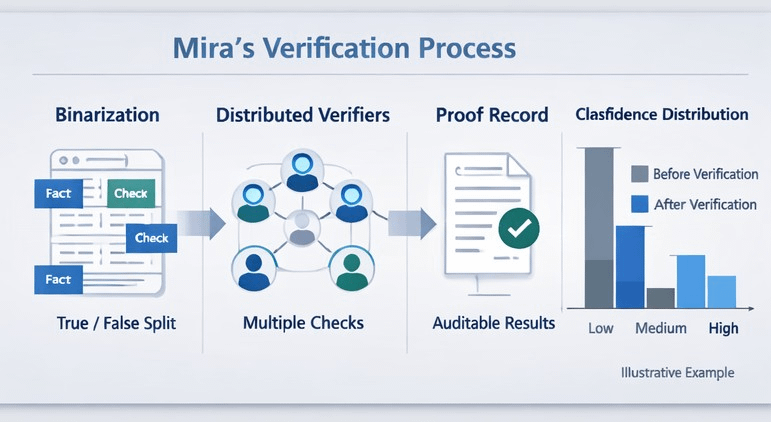

This is where Mira feels relevant in a practical way rather than as a slogan. I find it helpful to treat truth as a workflow rather than a personality trait of a model, and Mira is built around that same assumption. Instead of asking a generator to be humble, Mira treats generation and checking as different jobs, and it makes the checking visible. The idea is to take an AI output, split it into small checkable claims, and then run those claims through independent verifiers instead of trusting one system to correct itself. Mira describes an early step as "binarization": turning a response into clear statements that can each be judged true or false, so that those statements can be distributed across verifier nodes. The combined result can come back with a verification record that shows which claims passed and which did not. That record is meant to be auditable and resistant to tampering, which matters when the stakes are high and the incentives to cut corners are real.
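To make that workflow concrete for myself, I find it useful to sketch it in a few lines of Python. This is only a toy illustration under loud assumptions: it is not Mira's API or protocol, and every name in it (`split_into_claims`, `verify_response`, `record_digest`, the three verifiers, the two-thirds threshold) is hypothetical. The sentence splitter stands in for binarization, plain functions stand in for verifier nodes, and a SHA-256 digest stands in for whatever actually makes a real record tamper-resistant.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, List

# A verifier is any function that judges one claim as true or false.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: List[bool]   # one vote per verifier, in order
    passed: bool        # True if enough verifiers agreed


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for binarization: treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: float = 2 / 3) -> List[ClaimResult]:
    """Check every claim with every verifier and record the outcome."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(response):
        votes = [verify(claim) for verify in verifiers]
        passed = sum(votes) / len(votes) >= threshold
        results.append(ClaimResult(claim, votes, passed))
    return results


def record_digest(results: List[ClaimResult]) -> str:
    """Hash the record so any later edit to it changes the digest."""
    payload = json.dumps([[r.claim, r.votes, r.passed] for r in results])
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical verifiers: two consult a tiny fact list, one agrees with anything.
KNOWN_FACTS = {"Water boils at 100 C at sea level"}


def verifier_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def verifier_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def verifier_c(claim: str) -> bool:
    return True  # a credulous verifier, to show why one checker is not enough


if __name__ == "__main__":
    text = "Water boils at 100 C at sea level. The moon is made of cheese."
    record = verify_response(text, [verifier_a, verifier_b, verifier_c])
    for item in record:
        status = "PASSED" if item.passed else "FAILED"
        print(f"{status}: {item.claim} (votes: {item.votes})")
    print("record digest:", record_digest(record))
```

The detail I care about is the credulous third verifier. On its own it would approve everything, but with a two-thirds threshold across independent checkers its vote cannot carry the false claim through, and the per-claim record shows exactly who voted which way.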

What surprises me is how often the problem is not that people do not care about accuracy but that they do not have time to prove it. In a normal workday you might need to reuse a number for a report, pull a detail into an email, or summarize a meeting decision, and you want to move on. Mira is relevant because it is built for that exact moment, when you want an answer but also need a way to trust it without doing a full research project. If the system can show which parts of the response were actually supported, it becomes easier to keep moving while staying honest about what you know and what you do not. It also changes accountability in a useful way, because a later reader can see how the answer was checked rather than guessing what was assumed.

This is landing now because the surrounding world is demanding receipts, and regulation is pushing traceability and transparency into the foreground. Rules and expectations are shifting toward documentation, logging, and oversight. Even when you are not in a regulated setting, the cultural norm is changing as people grow more skeptical of polished language without sources. The technical ecosystem is moving in the same direction: more teams connect models to external documents before they write, and then ask for support at the level of individual statements. Mira fits into that shift because it does not only attach a source list at the end; it tries to make verification part of the output itself, in a way that can be inspected and repeated.

Health settings make the tradeoff feel especially concrete, because the downside of a smooth lie is obvious and small errors can stack into harmful decisions. In those contexts Mira matters because it can force the answer to reveal its weakest links, and it can do so consistently even when the text sounds persuasive. None of this eliminates uncertainty, and I do not think it should pretend to: some claims are disputed, some depend on context, and some are simply not knowable at the moment. The value of Mira is that it can turn "sounds right" into "here is what we could actually confirm" while keeping the unknowns visible, so we stop building decisions on top of prose that only looks like evidence.
