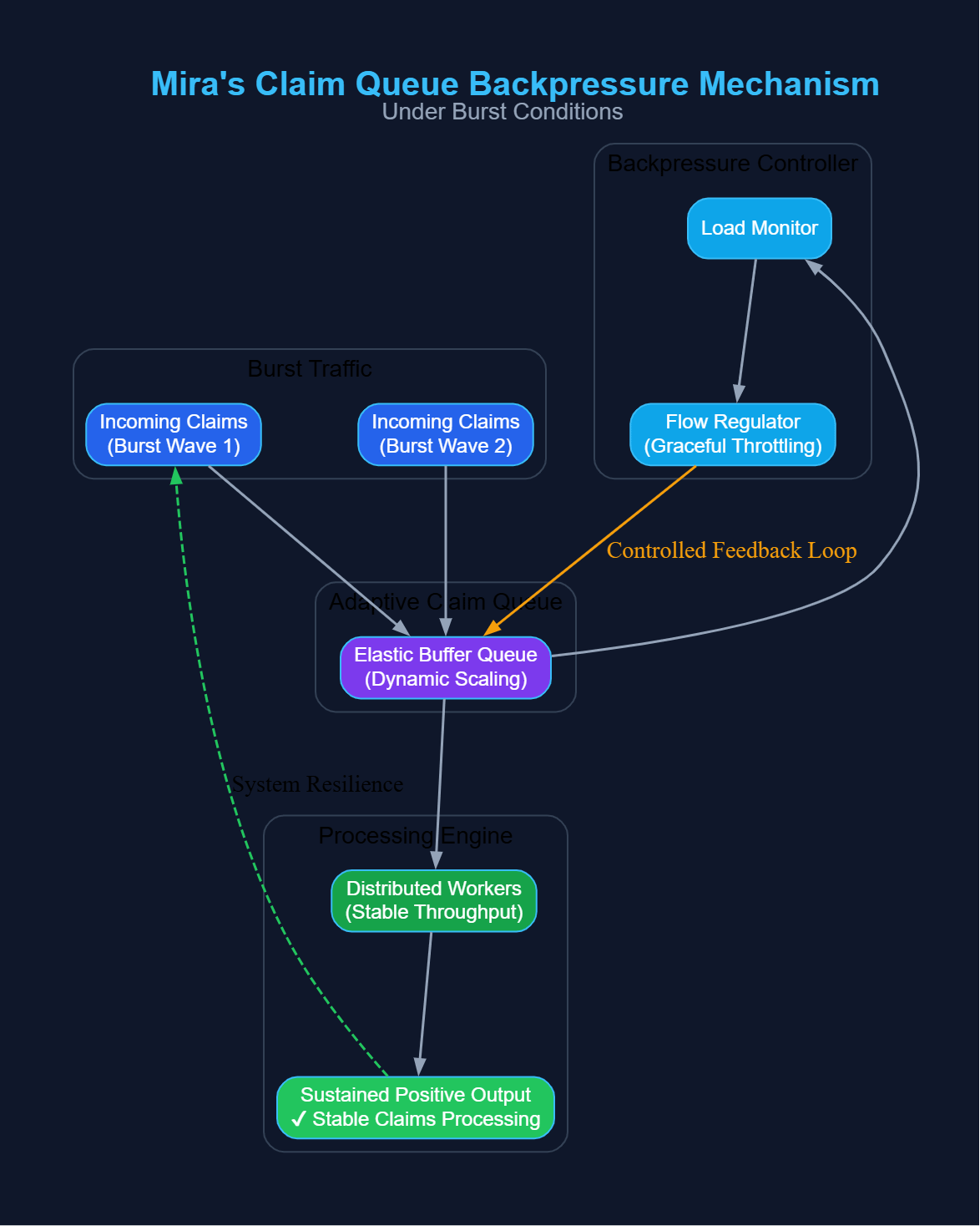

When AI output volume spikes, verification networks face a structural constraint: claims enter faster than validators can process them. Under burst conditions, the limiting factor is not model generation speed but how the verification layer absorbs inflow without destabilizing consensus timing. On Mira, this is handled through an implicit backpressure mechanism inside the claim queue.

Every AI output decomposed into atomic claims enters a distributed verification queue. Validators pull tasks continuously, but processing capacity is finite and variable. If inflow exceeds aggregate validator throughput, the queue expands. The risk is not just delay; it is temporal fragmentation. Claims verified too far apart in time can distort confirmation assumptions and create uneven finality pacing.
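To make the queue dynamics concrete, here is a minimal sketch of how depth evolves when a burst exceeds validator throughput. It is a toy model, not Mira's implementation: the function name `simulate_queue`, the fixed per-tick `capacity`, and the sample numbers are all assumptions chosen for illustration.

```python
def simulate_queue(inflow, capacity):
    """Return the queue depth left over after each tick.

    inflow   -- claims arriving per tick (hypothetical numbers)
    capacity -- claims all validators can verify per tick (assumed constant)
    """
    depth = 0
    history = []
    for arriving in inflow:
        depth += arriving                 # new claims join the queue
        processed = min(depth, capacity)  # validators drain what they can
        depth -= processed
        history.append(depth)
    return history


# A burst of 20 claims per tick against a capacity of 10 per tick.
burst = [5, 5, 20, 20, 5, 5, 5]
print(simulate_queue(burst, capacity=10))  # [0, 0, 10, 20, 15, 10, 5]
```

In this toy run the backlog peaks at 20 and then contracts once inflow drops back below capacity, which is the recoverable case the article describes.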

Mira's architecture avoids this through controlled intake progression. Instead of allowing unlimited real-time claim propagation, queue growth interacts with checkpoint and settlement boundaries. As queue depth increases, confirmation timing naturally stretches, which slows effective intake without halting the network. This acts as a stabilizer rather than a throttle.
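One way to picture how stretched confirmation timing slows intake is a closed-loop model in which each client waits for a confirmation before submitting its next claim. That closed-loop behavior, the linear latency formula, and the names `confirmation_latency` and `effective_intake` are assumptions made for this sketch; the article does not specify how Mira computes either quantity.

```python
def confirmation_latency(depth, throughput, base_latency=1.0):
    """Assumed model: base latency plus the time needed to drain the backlog."""
    return base_latency + depth / throughput


def effective_intake(clients, depth, throughput):
    """Claims per time unit if each client submits only after confirmation."""
    return clients / confirmation_latency(depth, throughput)


# With an empty queue, 100 hypothetical clients submit 100 claims per unit time.
print(effective_intake(clients=100, depth=0, throughput=10))   # 100.0
# With 90 claims queued, latency stretches tenfold and intake falls to match.
print(effective_intake(clients=100, depth=90, throughput=10))  # 10.0
```

Under these assumptions intake falls smoothly as depth rises and never reaches zero, which matches the idea of a stabilizer rather than a hard throttle.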

The trade-off is straightforward: aggressive intake gives users near-instant responses but risks validator overload, while a more controlled flow keeps the system stable at the cost of slightly longer confirmation times. Mira balances this by allowing backlog expansion while anchoring finalization to structured timing windows. Verification continues in parallel, but economic settlement occurs within defined cadence cycles.
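The split between continuous verification and cadence-based settlement can be sketched by rounding each verification time up to the next window boundary. The window length, the timestamps, and the helper `settlement_time` are hypothetical; the article does not state Mira's actual cadence or settlement rules.

```python
import math


def settlement_time(verified_at, window):
    """Return the first window boundary at or after the verification time."""
    return math.ceil(verified_at / window) * window


# Claims finish verification at arbitrary times (illustrative values).
verified = [0.4, 1.7, 2.0, 4.9, 5.1]

# Group them by the boundary at which they would settle.
batches = {}
for t in verified:
    batches.setdefault(settlement_time(t, window=2.0), []).append(t)

print(batches)  # {2.0: [0.4, 1.7, 2.0], 6.0: [4.9, 5.1]}
```

Verification times are irregular, but settlements land only on boundaries, so downstream finality follows a fixed rhythm regardless of how bursty the verification work was.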

Economic incentives reinforce this dynamic. Validators staking $MIRA capture rewards only upon verified claim settlement. If backlog grows, reward realization shifts accordingly. This discourages passive delay while preventing reckless acceleration. Validators are economically aligned with steady queue contraction, not chaotic intake.

Under stress scenarios, this produces a dampening effect. Instead of oscillating between overload and idle states, the queue absorbs bursts and releases confirmations in measured intervals. Latency may widen temporarily, but consensus progression remains orderly. That distinction matters. A temporarily deeper queue is recoverable; fragmented confirmation logic is not.

From an architectural perspective, Mira's claim queue functions as a pressure regulator. It does not eliminate burst conditions, nor does it prioritize raw speed above all else. It converts unpredictable inflow into paced finality cycles governed by validator capacity and $MIRA-aligned incentives.

Backpressure here is not a bottleneck. It is a coordination mechanism that stops throughput spikes from harming verification integrity.