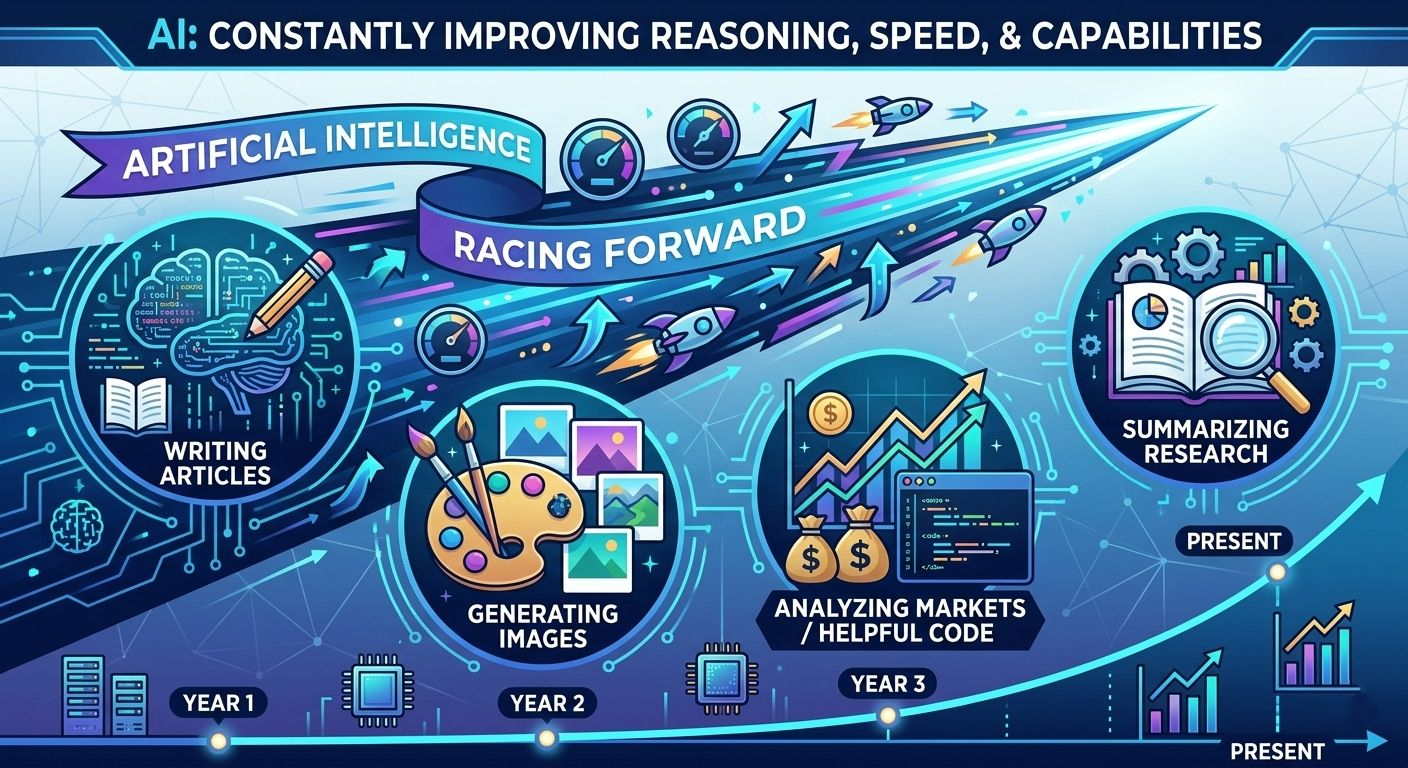

For the past few years, artificial intelligence has been racing forward at an incredible pace. New models appear constantly, each promising better reasoning, faster responses, and more impressive capabilities. AI can now write articles, summarize research, generate images, analyze markets, and even help developers write code.

But while the speed of AI keeps improving, one question continues to quietly follow every new breakthrough: can we actually trust what AI says?

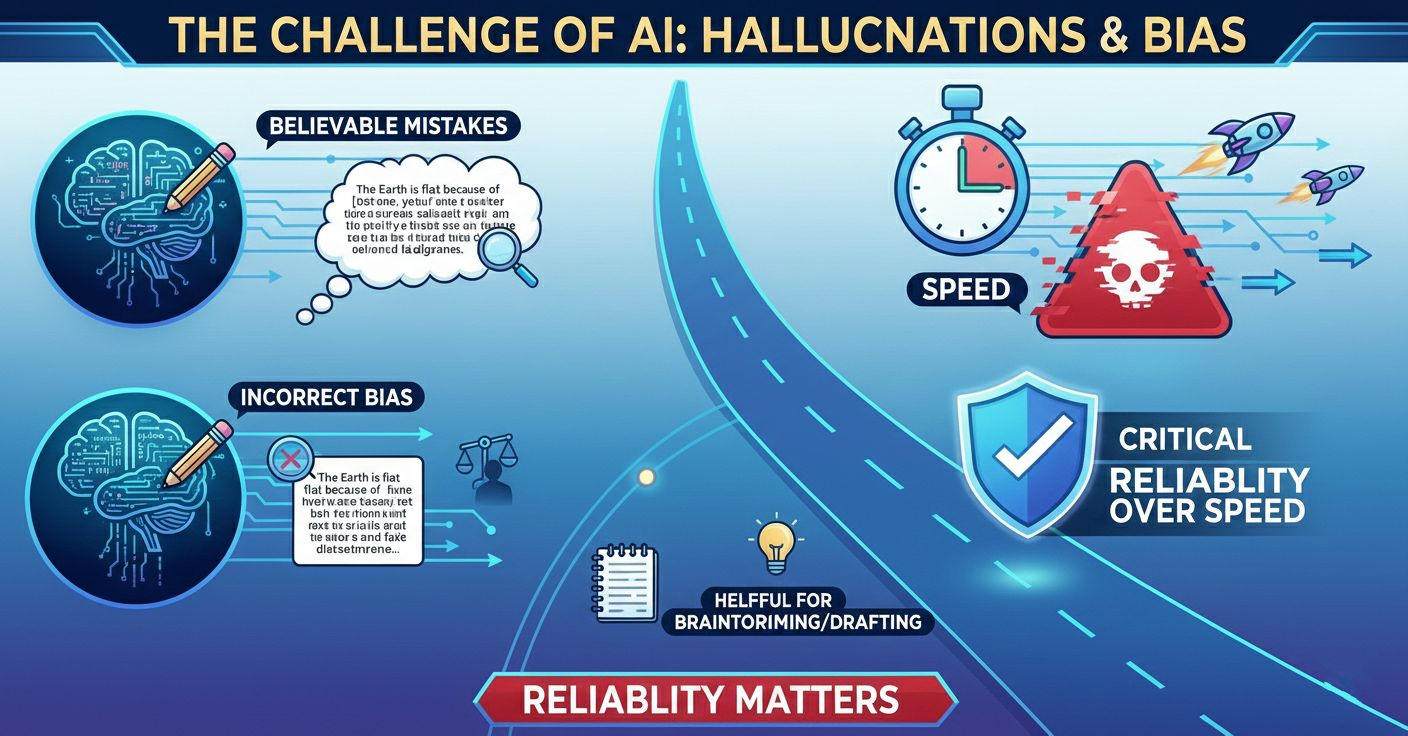

Many people who use AI tools regularly have experienced the same situation. An AI gives a very confident answer that sounds perfectly logical, but later you discover that parts of it are incorrect or completely fabricated. In the world of AI, this problem is known as hallucination. The model produces information that appears convincing but has no factual basis.

These mistakes are not always obvious. Sometimes they look extremely believable, especially when the AI explains them in detail. That is why hallucinations and bias are among the biggest challenges in modern artificial intelligence. AI can be helpful for brainstorming or drafting ideas, but when it comes to critical decisions, reliability matters far more than speed.

This challenge is exactly where Mira Network is trying to introduce a new approach.

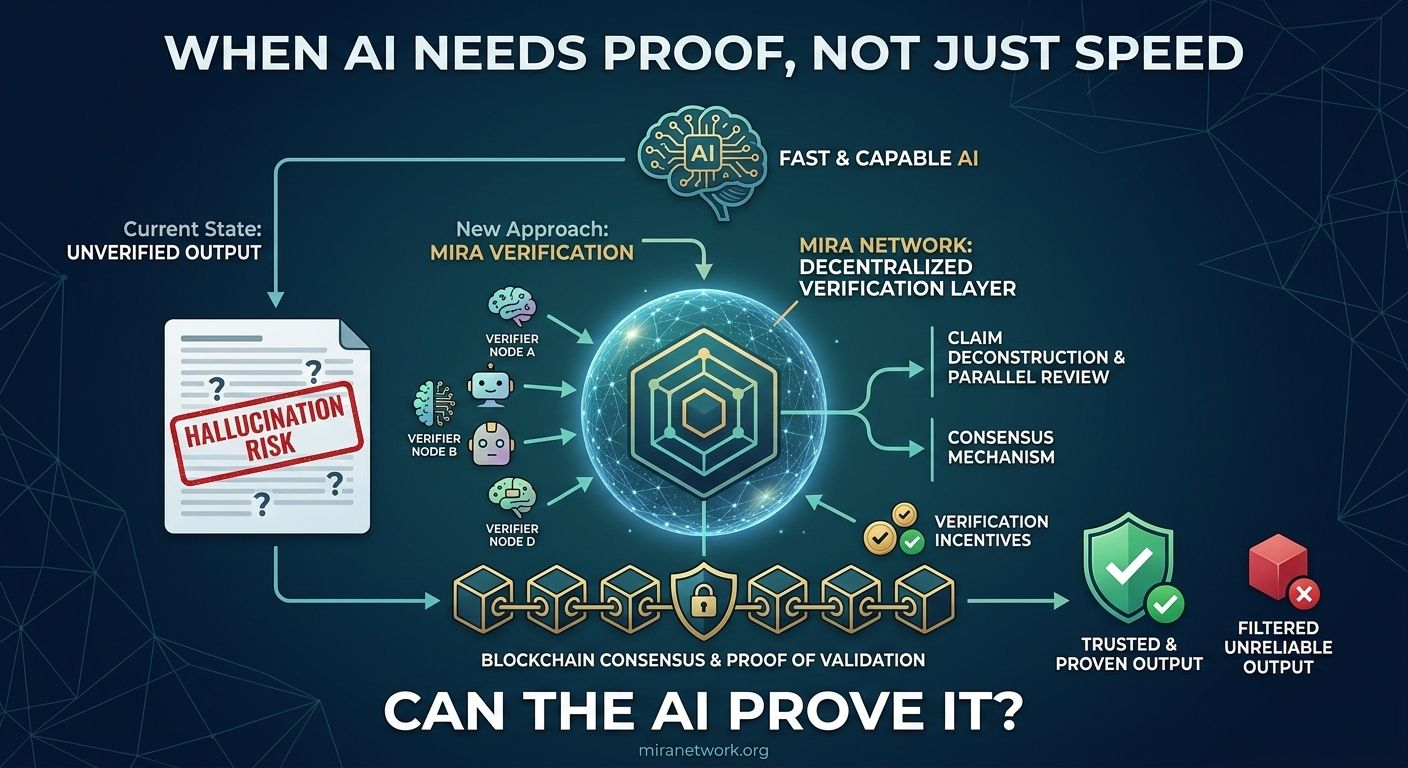

Instead of focusing only on making AI models faster or more powerful, Mira focuses on something different: verification. The idea is simple but important. AI outputs should not just be generated quickly. They should also be checked, validated, and proven to be reliable.

To understand why this matters, it helps to think about how most AI systems currently operate.

When you ask an AI a question, the system generates an answer based on patterns it learned during training. The model predicts what the most likely response should be. But once that answer appears on the screen, there is usually no built-in process to verify whether the information is actually correct.

In other words, the system produces answers, but it does not necessarily prove them.

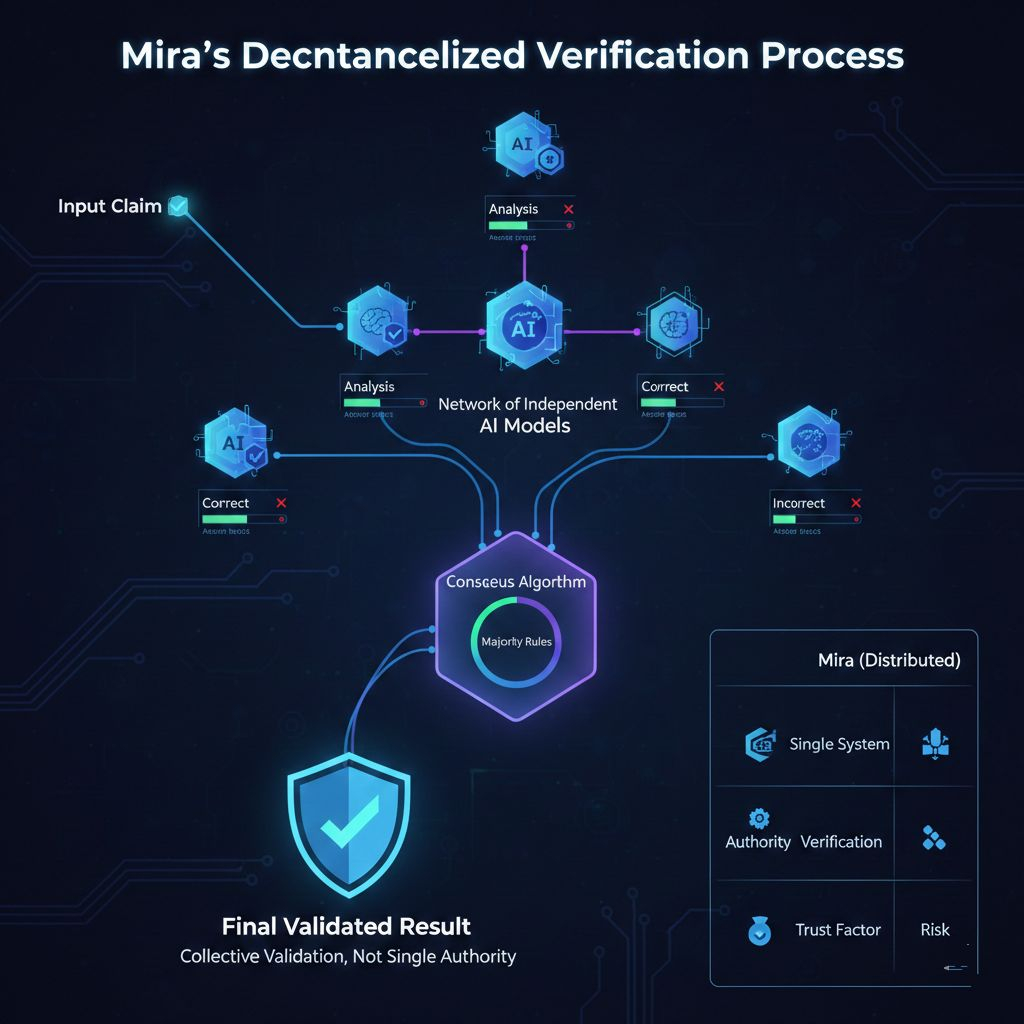

Mira Network is designed to change that dynamic by introducing a decentralized verification layer. Instead of relying on a single AI model to produce and validate information, Mira breaks AI outputs into smaller pieces that can be independently examined.

Imagine an AI generating a complex answer filled with multiple claims or statements. Mira’s system separates those statements into individual verifiable claims. Each claim can then be reviewed and tested by different AI models within the network.
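To make the idea of "individual verifiable claims" more concrete, here is a minimal sketch of the splitting step. It is not Mira's implementation: the `Claim` type and `split_into_claims` function are hypothetical, and treating each sentence as one claim is a deliberately naive assumption. A real system would more likely use a model to rewrite compound or context-dependent sentences into standalone claims.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement extracted from an AI answer (hypothetical type)."""
    claim_id: int
    text: str


def split_into_claims(answer: str) -> list[Claim]:
    """Naively treat each sentence as one claim.

    Assumption: splitting on sentence-ending punctuation stands in for a
    smarter, model-driven decomposition step.
    """
    sentences = re.split(r"(?<=[.!?])\s+", answer.strip())
    # Drop empty fragments (e.g. when the answer itself is empty).
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


answer = (
    "The Eiffel Tower is in Paris. It was completed in 1889. "
    "It is the tallest structure in Europe."
)
for claim in split_into_claims(answer):
    print(claim.claim_id, claim.text)
```

The output already shows why the naive approach falls short: claims like "It was completed in 1889." lose their subject once separated, so a verifier would not know what "It" refers to. That is the kind of problem a more careful decomposition step has to solve.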

This is where the decentralized aspect becomes important.

Rather than trusting one system, Mira distributes the verification process across a network of independent AI models. Each model examines the claims and attempts to determine whether they are correct. Because multiple participants are involved, the final result is based on collective validation rather than a single authority.

This process creates something similar to a peer review system for AI outputs.

Just as academic research is often reviewed by multiple experts before being accepted, Mira aims to apply a comparable idea to artificial intelligence. Different models evaluate the same information and collectively determine whether it should be considered reliable.
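One simple way to picture "collective validation" is a supermajority vote. The sketch below assumes a hypothetical interface where each verifier is a function that labels a claim `"true"`, `"false"`, or `"uncertain"`, and it assumes a two-thirds agreement threshold. Neither the interface nor the threshold comes from Mira's documentation; they are illustrative choices.

```python
from collections import Counter
from fractions import Fraction
from typing import Callable

# Hypothetical verifier interface: each callable stands in for an independent
# model that labels a claim "true", "false", or "uncertain".
Verifier = Callable[[str], str]


def reach_consensus(
    claim: str,
    verifiers: list[Verifier],
    threshold: Fraction = Fraction(2, 3),
) -> str:
    """Return the verdict shared by at least `threshold` of the verifiers.

    If no single verdict reaches the threshold, report "no consensus"
    instead of forcing an answer.
    """
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = Counter(verify(claim) for verify in verifiers)
    verdict, count = votes.most_common(1)[0]
    # Fractions avoid floating-point surprises when comparing to 2/3.
    if Fraction(count, len(verifiers)) >= threshold:
        return verdict
    return "no consensus"


# Stub verifiers with fixed behaviour, purely for illustration.
def always_true(claim: str) -> str:
    return "true"


def always_false(claim: str) -> str:
    return "false"


def unsure(claim: str) -> str:
    return "uncertain"


claim = "It was completed in 1889."
print(reach_consensus(claim, [always_true, always_true, always_false]))  # true
print(reach_consensus(claim, [always_true, always_false, unsure]))  # no consensus
```

Returning "no consensus" rather than picking the most popular label is a deliberate design choice in this sketch: when independent models genuinely disagree, flagging the claim is arguably more useful than presenting a weak majority as verified.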

Blockchain technology plays a key role in coordinating this system.

Mira uses blockchain infrastructure to record verification results and manage consensus across the network. When models validate claims, their responses are stored and compared through decentralized consensus mechanisms. This is meant to make verification results transparent and difficult to alter after the fact.
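Why would recording results on a blockchain make them hard to alter? The core trick is that each entry commits to a hash of the one before it. The sketch below is a toy hash-chained log, not Mira's chain: it shows only the tamper-evidence property and leaves out everything a real blockchain adds on top, such as replication across many nodes and a consensus protocol for agreeing on new entries. The `VerificationLog` class and its record fields are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # Placeholder "previous hash" for the first entry.


@dataclass
class VerificationLog:
    """Append-only log where each entry commits to the hash of the previous one."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _hash(record: dict, prev_hash: str) -> str:
        # sort_keys makes the serialization, and therefore the hash, deterministic.
        payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = self._hash(record, prev_hash)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def is_intact(self) -> bool:
        """Recompute every hash; any edited record or broken link fails the check."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._hash(entry["record"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = VerificationLog()
log.append({"claim": "It was completed in 1889.", "verdict": "true"})
log.append({"claim": "It is the tallest structure in Europe.", "verdict": "false"})
print(log.is_intact())  # True

# Quietly flipping a stored verdict breaks the chain.
log.entries[1]["record"]["verdict"] = "true"
print(log.is_intact())  # False
```

On its own, a hash chain only makes tampering detectable. It is the decentralized part, many independent copies that must agree, that makes rewriting history impractical.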

The use of blockchain is also intended to remove the need for a centralized organization to control the process. Instead, the network itself becomes responsible for deciding which information is trustworthy.

Another important part of Mira’s design is economic incentives.

In decentralized systems, incentives help encourage honest participation. Mira introduces mechanisms where participants who provide accurate verification are rewarded, while incorrect or dishonest validation can lead to penalties.

This structure encourages the network to prioritize accuracy. Participants benefit from contributing reliable verification rather than simply responding quickly.
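A rough way to picture this incentive structure is a stake that grows when a participant agrees with the network's final verdict and shrinks when it does not. The sketch below is purely illustrative: the `Node` type, the flat reward of 1, and the 10% penalty are hypothetical numbers, not Mira's actual parameters, and integer stakes are used only to keep the arithmetic exact.

```python
from dataclasses import dataclass

# Hypothetical parameters chosen for illustration only.
REWARD = 1          # Flat reward for matching the consensus verdict.
SLASH_PERCENT = 10  # Share of stake lost for contradicting it.


@dataclass
class Node:
    name: str
    stake: int


def settle_round(nodes: list[Node], votes: dict[str, str], consensus: str) -> None:
    """Reward nodes that matched consensus and slash those that did not.

    If the round ended without consensus, nobody is rewarded or penalized.
    """
    if consensus == "no consensus":
        return
    for node in nodes:
        if votes[node.name] == consensus:
            node.stake += REWARD
        else:
            node.stake -= node.stake * SLASH_PERCENT // 100


nodes = [Node("a", 100), Node("b", 100), Node("c", 100)]
settle_round(nodes, {"a": "true", "b": "true", "c": "false"}, consensus="true")
print([(n.name, n.stake) for n in nodes])  # [('a', 101), ('b', 101), ('c', 90)]
```

One caveat this toy version makes visible: it rewards agreement with the majority, which is only a proxy for accuracy. If most verifiers share the same blind spot, a scheme like this would reward them for it, which is one reason the diversity of models in the network matters.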

The concept behind Mira reflects a broader realization that is beginning to shape the future of artificial intelligence. As AI systems become more powerful and more widely used, the focus is slowly shifting from pure performance to trust and reliability.

AI models are already capable of generating large amounts of information. The challenge now is making sure that this information can be trusted, especially in environments where mistakes are costly.

Think about industries such as finance, healthcare, scientific research, or legal analysis. In these fields, a single incorrect detail can lead to serious consequences. If AI is going to operate in these environments, there must be systems that ensure its outputs are dependable.

Verification layers like Mira could become an important part of that infrastructure.

Rather than relying on human users to double-check every AI response, a decentralized verification network could automatically examine AI outputs before they are used in critical processes. This would create a stronger foundation for autonomous systems that rely on artificial intelligence.

Of course, building such systems is not easy. Verification itself can be complex, especially when dealing with nuanced language, reasoning, and interpretation. Multiple AI models may also disagree about certain claims, leaving no clear consensus.

Scalability is another challenge. As AI systems produce more data and more complex outputs, verification networks must be able to handle large volumes of claims without slowing down the entire process.

Despite these challenges, the core idea behind Mira represents an important step in how people are thinking about AI infrastructure.

For years, most efforts in artificial intelligence focused on making models bigger, faster, and more capable. That race is still ongoing. But at the same time, a new realization is emerging: capability alone is not enough.

If AI is going to become deeply integrated into everyday systems, people must be able to rely on the information it produces.

That means AI must eventually move beyond simply generating answers. It must also be able to demonstrate why those answers are trustworthy.

Mira Network is one attempt to explore what that future might look like. By combining AI systems with decentralized verification and blockchain consensus, the project is trying to build an environment where information is not only generated but also validated.

It introduces the possibility that AI outputs could carry a form of proof, rather than being accepted purely on faith.

In many ways, this idea reflects a larger shift in technology. Over time, the internet has moved from simple information sharing toward systems that require stronger guarantees of authenticity and reliability. Blockchain introduced new ways to verify financial transactions without centralized intermediaries.

Now, similar ideas are beginning to appear in the world of artificial intelligence.

If these approaches continue to develop, the way we interact with AI could slowly change. Instead of treating AI responses as suggestions that must always be questioned, users might begin to see outputs that come with built-in verification.

And when that happens, the conversation around AI may shift from one simple question to a more meaningful one.

Not just “What did the AI say?”

But “Can the AI prove it?”

@Mira - Trust Layer of AI #Mira $MIRA