There’s a specific kind of mistake that feels worse than being wrong: being wrong with confidence. That’s the unsettling superpower modern AI has—when it hallucinates, it doesn’t sound confused, it sounds polished. And when bias slips in, it rarely announces itself; it just quietly bends the frame. If AI stayed in the “help me write a caption” zone, this would mostly be annoying. But the moment AI starts guiding decisions that matter—money movement, risk scoring, approvals, compliance summaries, automated actions—confidence becomes dangerous unless there’s a way to demand evidence.

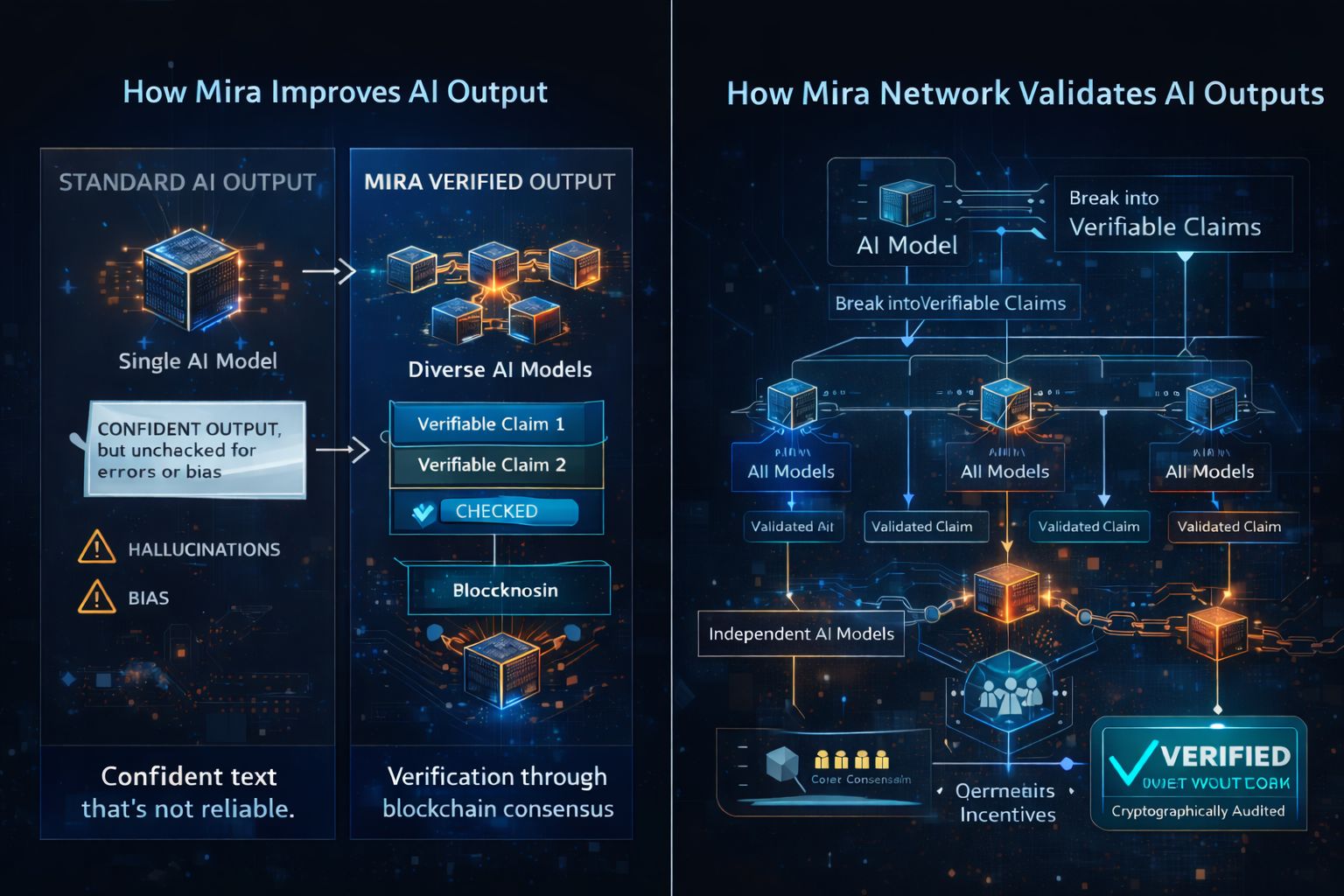

Mira Network is built around a simple instinct that feels human: don’t trust the voice, trust the process. Mira’s core claim is that reliability shouldn’t depend on a single model’s authority, a single company’s promise, or a single interface’s tone. Instead, Mira aims to transform AI output into something closer to a verified record: break a response into smaller, checkable claims, then verify those claims through a decentralized network and finalize them through blockchain consensus and incentives. The Mira whitepaper describes this as converting complex content into “independently verifiable claims” and having those claims verified via distributed consensus among diverse AI models, with node operators economically incentivized to verify honestly.

The easiest way to feel what that means is to imagine the difference between a story and a receipt. A story can be convincing, emotional, even perfectly written—and still be false. A receipt isn’t magical, but it’s anchored: it shows what happened, what was checked, and what can be audited later. Mira’s design direction tries to move AI from “story mode” toward “receipt mode.” Not because every sentence must be treated like a court document, but because the specific sentences that might trigger action should have a stronger spine than vibes.

That “claim breakdown” step matters because most AI failures are mixed. A long answer can be 80% fine, 15% fuzzy, and 5% confidently wrong—and that 5% is often the part people copy, share, or act on. When you force the answer to become separate claims, you create friction in the right places. Each claim can be checked, challenged, and either accepted, rejected, or flagged as uncertain. This also makes accountability clearer. If something goes wrong, you’re not staring at a paragraph wondering which part broke reality; you can point to the exact claim that failed verification and trace how it was handled.
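To make the idea concrete, here is a minimal sketch of what "an answer as separate claims" could look like as data. It is an illustration only, not Mira's actual pipeline: the `Claim` and `Status` types and the sentence-based `split_into_claims` helper are hypothetical names, and splitting on sentence boundaries is a naive stand-in for whatever claim extraction a real system would use.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    ACCEPTED = "accepted"
    REJECTED = "rejected"
    UNCERTAIN = "uncertain"


@dataclass
class Claim:
    claim_id: int
    text: str
    # Every claim starts unverified, so "uncertain" is the default.
    status: Status = Status.UNCERTAIN


def split_into_claims(answer: str) -> list[Claim]:
    # Assumption: one sentence equals one claim. Real claim extraction
    # would be far more careful than a regex split.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    return [Claim(i, s) for i, s in enumerate(sentences)]


def failed_claims(claims: list[Claim]) -> list[Claim]:
    # The accountability step: point at the exact claims that broke.
    return [c for c in claims if c.status is Status.REJECTED]


answer = (
    "The invoice total is 1,200 USD. "
    "Payment is due in 30 days. "
    "The vendor is based in Oslo."
)
claims = split_into_claims(answer)

# Pretend a verification step has already reported back on two claims.
claims[0].status = Status.ACCEPTED
claims[1].status = Status.REJECTED

for c in failed_claims(claims):
    print(f"claim {c.claim_id} failed verification: {c.text}")
```

The point of the structure is the last loop: instead of a paragraph that is "mostly right," you get an ID you can trace.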

Then comes the harder question: who is doing the checking, and why should we trust them? Mira’s answer is to make “trust” less personal and more economic and cryptographic. Instead of relying on one trusted verifier, the network distributes verification among independent participants and aligns behavior with incentives, aiming to make manipulation costly and honest verification rewarding. The whitepaper’s framing leans on a combination of economic incentives, technical safeguards, and game-theoretic principles to reduce hallucinations and bias through a distributed verification mechanism.

If you’ve spent any time in crypto, you’ll recognize the deeper philosophy here: don’t assume good behavior, assume incentives. The point isn’t that every verifier is angelic; it’s that the system tries to make “doing the work” the rational choice, and “lying successfully” the difficult one. That’s also why the emphasis on diversity is not cosmetic. If verification is done by a monoculture of models or operators that all share the same blind spots, you can get coordinated wrongness. Mira’s stated direction tries to avoid that by distributing verification and aiming for resistance to any single actor controlling outcomes.
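A toy version of distributed verification might look like the sketch below. Everything here is an assumption made for illustration: the `verify_claim` function, the two-thirds threshold, and the three stand-in verifiers are hypothetical, and the real network's consensus and incentive rules are not specified in this post.

```python
from collections import Counter
from typing import Callable, Optional

# A verifier returns True (supported), False (refuted), or None (abstains).
Verifier = Callable[[str], Optional[bool]]


def verify_claim(claim: str, verifiers: list[Verifier], threshold: float = 2 / 3) -> str:
    votes = [v(claim) for v in verifiers]
    cast = [vote for vote in votes if vote is not None]
    if not cast:
        return "uncertain"
    tally = Counter(cast)
    # Require a supermajority of cast votes in either direction;
    # anything in between is flagged rather than forced to a verdict.
    if tally[True] / len(cast) >= threshold:
        return "accepted"
    if tally[False] / len(cast) >= threshold:
        return "rejected"
    return "uncertain"


# Hypothetical verifiers with deliberately different behavior.
KNOWN_FACTS = {"Water boils at 100 C at sea level."}


def strict(claim: str) -> Optional[bool]:
    return claim in KNOWN_FACTS


def lenient(claim: str) -> Optional[bool]:
    return True  # rubber-stamps everything


def cautious(claim: str) -> Optional[bool]:
    return True if claim in KNOWN_FACTS else None


panel = [strict, lenient, cautious]
print(verify_claim("Water boils at 100 C at sea level.", panel))
print(verify_claim("The moon is made of cheese.", panel))
```

Notice what happens if you replace the panel with three copies of `lenient`: every claim is accepted unanimously. That is the monoculture problem in miniature, and it is why agreement only means something when the verifiers can actually disagree.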

Of course, no verification system gets to escape tradeoffs. Verification can add time, cost, and complexity. If you demand higher certainty, you often pay for it in latency or compute. And decentralized systems have their own failure modes: lazy checking, bribery attempts, collusion, or over-centralization of verifier power. Mira’s approach, at least as described publicly, is to use incentives and protocol design to keep verification honest and resilient, but the real proof will be in how the network behaves under stress—when it’s expensive to be careful and tempting to rubber-stamp.
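The certainty-versus-cost tradeoff can be put in rough numbers. The sketch below assumes something generous: that verifiers make mistakes independently and all share the same error rate `p`. Under that assumption, the chance that a strict majority of `n` verifiers is wrong follows a binomial tail. The `majority_error` function and the 10% error rate are illustrative choices, not figures from Mira.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that more than half of n independent verifiers err,
    when each one errs with probability p."""
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


# Each extra verifier costs another model call, so n is a proxy for cost.
for n in (1, 3, 5, 9):
    print(f"{n} verifiers: majority wrong with probability {majority_error(n, 0.1):.5f}")
```

With `p = 0.1`, going from one verifier to three cuts the error from 0.1 to 0.028 while tripling the work, and each further step buys less. The independence assumption is also exactly what collusion and shared blind spots break, so these numbers are a best case, not a guarantee.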

This is where Mira’s product direction becomes important, because the most meaningful test of “verified intelligence” is whether people actually use it where it hurts. Recent public roadmap-style updates highlight that Mira is pushing verification deeper into a chat experience through Klok. CoinMarketCap’s update feed specifically lists “Full Verification Rollout on Klok (Q1 2026)” and describes it as gradual activation of Mira’s consensus mechanism for verified, trustless AI outputs in the chat app. The phrasing matters: gradual activation suggests a staged approach, which is what you’d expect if the team is balancing user experience with verification rigor rather than flipping a switch and hoping for the best.

On the community and distribution side, there’s also clear evidence of current ecosystem activity around Mira. Binance Square published a CreatorPad campaign announcement with an activity period from February 26, 2026, 09:00 UTC to March 11, 2026, 09:00 UTC, with a 250,000 MIRA token voucher reward pool for participants. Whether you love campaign mechanics or roll your eyes at them, it shows the project is actively trying to widen participation and visibility while the roadmap items are being pushed forward in parallel.

One thing I appreciate about this whole concept—when it’s stated plainly—is that it doesn’t pretend AI will stop making mistakes. It starts from the more honest premise: AI will always be capable of fluent wrongness, so the winning move is not to demand perfection from a single model, but to build systems where wrongness is caught before it becomes action. In other words, Mira is trying to treat AI output the way we treat anything else that can cause real harm if it’s wrong: we verify it, we log it, and we don’t let charisma substitute for proof. CoinMarketCap’s explainer similarly frames Mira as a decentralized verification layer aimed at making AI outputs reliable and auditable through consensus rather than centralized trust.

If Mira succeeds, the shift won’t feel like “AI got smarter” in the way people usually mean it; it will feel like AI got more accountable, because you’ll be able to separate “this sounds right” from “this was checked.” Reliability isn’t a tone of voice; it’s a trail of proof you can audit.
