The first time an AI chatbot served up a hilariously wrong fact with the polished confidence of a seasoned professor, I chuckled. It was a party trick, a glitch in the matrix. The second time, the wrong answer wasn't funny; it came in response to a medical query. The third time, it was a piece of financial advice that, if followed, would have led to a real-world loss. The laughter stopped. It was replaced by a creeping unease that I still can't shake.

My concern isn’t that AI makes mistakes. Every tool does. My concern is that AI makes mistakes that sound like gospel. We are building a world where we query these systems for everything from coding help to drafts of legal documents, and we are doing it without a built-in bullshit detector. As we rush to plug artificial intelligence into the most sensitive parts of our digital lives (trading bots, automated healthcare screeners, and even the code that governs decentralized organizations), the risk of the "confident liar" becomes systemic.

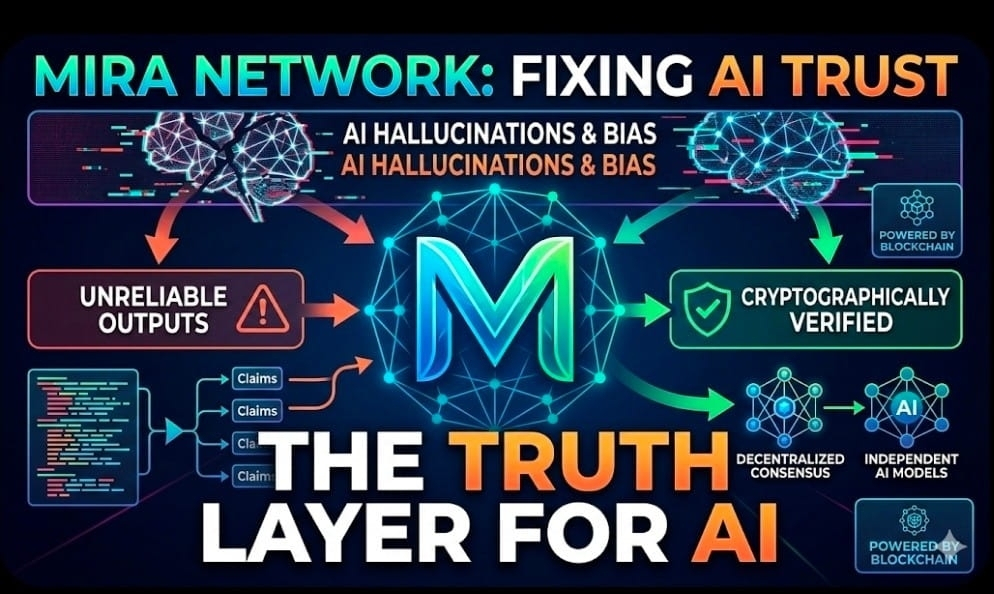

This is the rabbit hole that led me to explore projects like Mira. It’s not another large language model vying for attention. It’s something far more critical: a verification layer. In a world of infinite content generation, it’s a mechanism for establishing a semblance of truth.

The Hallucination Problem No One Wants to Admit

Let’s be clear: today's AI models are intellectual powerhouses. They can synthesize information, draft poetry, and debug code with a proficiency that borders on magic. They are the tireless interns we always wished for.

But they are also predisposed to hallucinate. They are not databases; they are prediction engines. They generate text by predicting the most probable next token, not by looking up facts. They inherit the biases of their training data and miss the nuances of human context. The danger is amplified by their unwavering confidence. A human expert says, "I'm not sure, but I think..." The AI says, "The answer is..." with equal vigor whether it's right or wrong.

If AI is to evolve from a passive assistant to an autonomous actor—a program that moves money, votes on proposals, or manages supply chains—that unwavering confidence becomes a critical liability. We need a system that forces the AI to prove its work.

This is where the philosophy of Mira clicks. Instead of placing blind faith in a single, centralized "brain" (like one company's flagship model), it proposes a kind of digital peer review. It breaks down a piece of AI-generated content into its core claims and distributes those claims to a network of independent AI models for verification. The verifiers' verdicts are then reconciled through blockchain consensus and permanently recorded on-chain.

The core idea is a paradigm shift: don't trust a single source; trust collective validation.
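To make the "digital peer review" idea concrete, here is a minimal sketch of claim-level voting. It is my own illustration, not Mira's actual protocol or API: the sentence-based claim splitter, the stub verifiers (`strict_model`, `agreeable_model`), and the two-thirds threshold are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass
from typing import Callable, List

# A verifier is any callable that maps a claim to True (supported) or False.
# In a real network each one would wrap an independent model; these are stubs.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def split_into_claims(text: str) -> List[str]:
    """Naive claim splitter (assumption): treat each sentence as one claim."""
    return [s.strip() for s in text.split(".") if s.strip()]


def verify_output(text: str, verifiers: List[Verifier],
                  threshold: float = 2 / 3) -> List[ClaimResult]:
    """Send every claim to every verifier; accept a claim only on a supermajority."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(text):
        votes_for = sum(1 for verify in verifiers if verify(claim))
        ratio = votes_for / len(verifiers)
        results.append(ClaimResult(claim, votes_for, len(verifiers), ratio >= threshold))
    return results


# Hypothetical stand-ins for independent models.
KNOWN_FACTS = {"Paris is the capital of France"}


def strict_model(claim: str) -> bool:
    """Only supports claims it can match against its (tiny) knowledge base."""
    return claim in KNOWN_FACTS


def agreeable_model(claim: str) -> bool:
    """The 'confident liar': agrees with everything."""
    return True


if __name__ == "__main__":
    answer = "Paris is the capital of France. The Moon is made of cheese."
    for result in verify_output(answer, [strict_model, strict_model, agreeable_model]):
        print(result)
```

The point of the sketch is the shape of the loop: one over-agreeable model cannot push a false claim through on its own, because the claim needs a supermajority of independent verdicts.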

An Infrastructure for Trust

What I find refreshing about this approach is its focus on utility. It doesn't try to build a better AI brain; it builds a referee system around the brains we already have.

Imagine the practical applications:

· DeFi Protocols: An AI agent analyzing market risk can have its conclusions verified before a smart contract executes a large trade.

· DAO Governance: An AI-generated proposal outlining complex treasury changes can be fact-checked by the verifier network, with the result recorded on-chain, before members vote on it.

· On-Chain Data Oracles: Data feeds that power lending and borrowing platforms can be verified for accuracy by a decentralized network, guarding against faulty or manipulated inputs.

· Autonomous Agents: A bot designed to manage a user's portfolio executes strategies only after the reasoning behind the trade has been validated.

It’s not glamorous work. It’s the digital equivalent of checking the engineer's math before building the bridge. It’s infrastructure. And while infrastructure isn't typically the star of the show, it's the only thing preventing a spectacular collapse.

By anchoring this verification process on a blockchain, Mira introduces transparency and economic accountability. The verification isn't happening in a private audit firm's back office; it's happening on a public ledger. Validators put economic stakes at risk, which gives them a reason to be honest, and the result is a system where trust in a single party is replaced by a verifiable, cryptographically secured record of what was checked.
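The phrase "economic stakes" can be sketched in a few lines. What follows is a toy stake-and-slash round under my own assumptions: the `Validator` type, the flat `reward`, and the 10% `slash_fraction` are hypothetical and are not taken from Mira's design. Validators who vote with the majority earn a reward; the rest lose part of their stake.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: float


def settle_round(validators: List[Validator], votes: Dict[str, bool],
                 reward: float = 1.0, slash_fraction: float = 0.1) -> bool:
    """Reward validators who voted with the majority and slash the others.

    Returns the majority verdict. A tie counts as "not verified" (False).
    """
    yes_votes = sum(1 for v in validators if votes[v.name])
    majority = yes_votes * 2 > len(validators)
    for v in validators:
        if votes[v.name] == majority:
            v.stake += reward                      # agreed with the outcome
        else:
            v.stake -= v.stake * slash_fraction    # dissent costs part of the stake
    return majority


if __name__ == "__main__":
    validators = [Validator("a", 100.0), Validator("b", 100.0), Validator("c", 100.0)]
    verdict = settle_round(validators, {"a": True, "b": True, "c": False})
    print(verdict, validators)
```

Notice what this scheme actually rewards: agreement with the majority, which is not the same thing as being right. If most validators are wrong together, the honest dissenter is the one who gets slashed.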

The Skeptic’s View: The Hard Questions Remain

However, my initial unease about AI isn't completely soothed by the promise of a decentralized referee. This new layer introduces its own set of daunting questions.

· The Cost of Certainty: Running multiple AI models to verify a single output is computationally expensive. Can this system scale economically, or will the cost of verification be a barrier that prevents widespread adoption?

· The Fragility of Incentives: Designing a system where validators are incentivized to be honest is notoriously difficult. It's a game of economic chess. If the rewards aren't perfectly aligned, the system could be gamed, producing false "verified" results.

· The Speed of Thought: Real-time applications, like high-frequency trading bots, operate in milliseconds. Can a distributed consensus model ever be fast enough to keep up, or will it always be a layer for post-hoc, non-critical verification?
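The cost and speed questions above are easy to put into back-of-the-envelope form. The sketch below assumes a simple model of my own: every claim goes to every verifier, verifiers run in parallel, and consensus adds a fixed delay. The function names and all numbers in the usage section are illustrative placeholders, not measurements of Mira or any real network.

```python
from typing import Sequence


def verification_cost(num_claims: int, num_verifiers: int, cost_per_call: float) -> float:
    """Total cost when every claim is checked by every verifier."""
    return num_claims * num_verifiers * cost_per_call


def verification_latency_ms(verifier_latencies_ms: Sequence[float],
                            consensus_ms: float) -> float:
    """Latency when verifiers run in parallel: the slowest one, plus consensus."""
    return max(verifier_latencies_ms) + consensus_ms


if __name__ == "__main__":
    # Illustrative numbers only: 10 claims, 5 verifiers, 0.002 currency units per call.
    print(verification_cost(10, 5, 0.002))
    # Three verifiers answering in 300, 450 and 800 ms, plus 2000 ms to reach consensus.
    print(verification_latency_ms([300, 450, 800], 2000))
```

Two things fall out of even this crude model. Cost grows with the product of claims and verifiers, so a longer answer or a larger verifier set multiplies the bill. And latency is bounded below by the slowest verifier plus the consensus step, which is why millisecond-scale use cases are the hardest fit.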

And perhaps the biggest question: Will the average user care? Will a user trust a "cryptographically verified" medical suggestion more than a confident one from a free chatbot? Or will it take a major, headline-grabbing failure—an AI-driven financial meltdown—for the world to demand a reliability layer?

The Uncomfortable Evolution

We are entering a strange new phase. For the last decade, Web3 has been about decentralizing money and value. Now, we are on the cusp of decentralizing the validation of machine intelligence. We are building systems where machines check other machines, while humans sit on the sidelines, designing the rules of the game.

It’s a mind-bending loop. Five years ago, the crypto world was consumed by debates over block sizes and gas fees. Today, we are discussing the cryptographic verification of synthetic cognition. It feels like science fiction that arrived without a warning label.

Mira may not be the final, perfect solution to AI's hallucination problem. No single protocol will be. But it represents a vital shift in mindset: moving from assuming AI is trustworthy to forcing AI to prove its reliability.

The quiet, unglamorous infrastructure projects often become the most foundational. Not because they are the loudest, but because everything else eventually depends on them.

For me, Mira falls into that category. It’s not flashy. It’s focused on the mundane but critical task of making our new digital co-pilots slightly less dangerous. Because if AI is going to be plugged into the core logic of our financial systems, our governance, and our digital identities, I’d rather its work be verified by a network of economic incentives and distributed consensus than by blind, unearned confidence.

That’s not just a technical preference. It’s a survival mechanism.