Artificial intelligence is no longer an experimental technology operating at the edges of innovation. It now powers financial systems, healthcare diagnostics, logistics planning, research automation, and enterprise decision-making. As AI becomes deeply integrated into critical infrastructure, one question grows louder: Can we truly trust its outputs?

While modern AI models generate fast, confident, and highly sophisticated responses, confidence does not equal correctness. Beneath the surface of impressive language generation and predictive analytics lies a structural limitation — most AI systems are probabilistic engines. They predict likely answers based on patterns, not verified facts. In low-risk scenarios, minor inaccuracies may go unnoticed. But in high-stakes environments, even small reasoning gaps can lead to serious consequences.

The Hidden Reliability Gap in Advanced AI

Today’s AI architectures are optimized for scale, performance, and speed. They are trained on massive datasets to maximize predictive accuracy. However, most deployed systems lack an independent verification layer that validates outputs before those outputs influence decisions.

This means:

Errors can pass through undetected

Contextual distortions may remain hidden

Confident language can mask factual inconsistencies

As organizations move toward automation and AI-assisted governance, this structural weakness becomes increasingly risky.

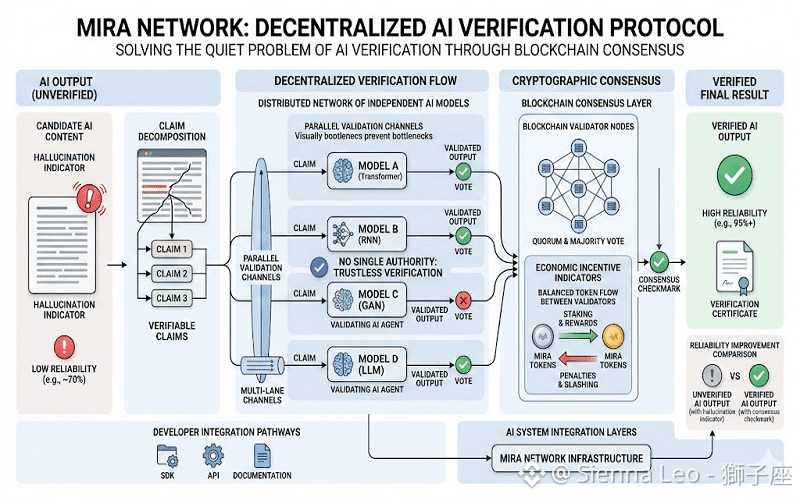

Mira Network: A Verification-First Architecture

Rather than competing to build larger models, Mira Network focuses on a different priority — post-generation validation.

Its architecture separates intelligence from confirmation. AI systems generate outputs, but those outputs are not immediately trusted. Instead, they enter a decentralized verification framework where information is examined before it is accepted or deployed.

This separation introduces accountability into an ecosystem traditionally driven only by performance metrics.

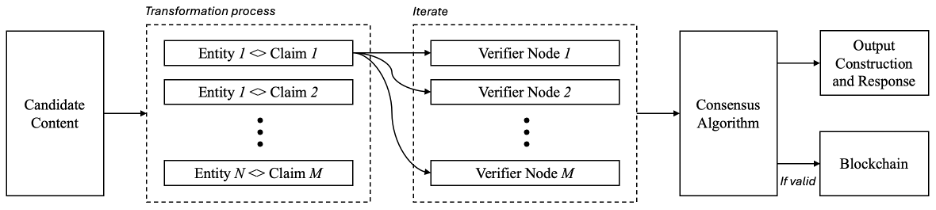

Turning AI Outputs into Testable Claims

When AI generates content, Mira restructures that output into clearly defined, testable assertions. Each assertion represents a specific claim that can be independently evaluated.

By breaking complex responses into smaller components:

Hidden inaccuracies are isolated

Logical gaps become visible

Verification occurs at a granular level

This structured decomposition prevents a single flawed assumption from compromising an entire conclusion.
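To make the idea concrete, here is a minimal sketch of claim decomposition. It is not Mira's actual method: the `Claim` type and `decompose` function are hypothetical names, and splitting on sentence boundaries is a deliberately naive stand-in for whatever extraction the network really performs.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable assertion (hypothetical type)."""
    claim_id: int
    text: str


def decompose(output: str) -> list[Claim]:
    """Split an AI response into one claim per sentence.

    Assumption: a sentence is a good enough proxy for a claim.
    A real system would need something far more careful.
    """
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


claims = decompose(
    "Water boils at 100 C at sea level. The Moon is made of cheese."
)
for claim in claims:
    print(claim.claim_id, claim.text)
```

Each claim can now be accepted or rejected on its own, so the false second sentence does not drag the true first sentence down with it.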

Decentralized Consensus Over Centralized Authority

Instead of relying on one authority, claims are distributed across a network of independent validators. Each participant reviews assertions separately, applying diverse reasoning methods.

Approval is achieved only after sufficient agreement across the network.

This decentralized consensus model:

Reduces shared blind spots

Limits systemic bias

Strengthens collective reliability

Trust becomes a product of distributed agreement rather than centralized control.
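As a rough illustration of approval by agreement, the sketch below accepts a claim only when a supermajority of validators approves it. The two-thirds threshold, the `reaches_consensus` function, and the toy validators are all assumptions made for this example; the article does not specify how much agreement Mira requires.

```python
from fractions import Fraction
from typing import Callable, Sequence

# A validator is modeled as any function that maps a claim to approve/reject.
Validator = Callable[[str], bool]


def reaches_consensus(
    claim: str,
    validators: Sequence[Validator],
    threshold: Fraction = Fraction(2, 3),  # assumed value, for illustration
) -> bool:
    """Return True if the share of approving validators meets the threshold."""
    if not validators:
        return False  # no validators means nothing can be approved
    approvals = sum(1 for validate in validators if validate(claim))
    # Fractions avoid floating-point surprises at the exact threshold.
    return Fraction(approvals, len(validators)) >= threshold


# Toy validators standing in for independent reviewers.
validators = [
    lambda c: "cheese" not in c,
    lambda c: "cheese" not in c.lower(),
    lambda c: True,  # an overly permissive validator
]

accepted = reaches_consensus("Water boils at 100 C at sea level.", validators)
rejected = reaches_consensus("The Moon is made of cheese.", validators)
```

The permissive validator approves both claims, but it cannot push the false one through on its own. That is the point of requiring agreement across several independent reviewers.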

Blockchain-Backed Transparency

Verification results are recorded on-chain, creating a permanent and immutable audit trail. This ensures:

Transparent validation history

Clear accountability

Compliance-ready documentation

For industries operating under regulatory pressure, this auditability becomes a strategic advantage.
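The audit-trail property can be sketched without a real blockchain. The example below uses a simple in-memory hash chain as a stand-in: each record stores the hash of the record before it, so editing any earlier entry breaks verification. The record layout and the `append_record` and `verify_log` names are hypothetical, and nothing here reflects Mira's actual on-chain format.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(claim: str, verdict: bool, prev_hash: str) -> str:
    """Hash a record's contents together with the previous record's hash."""
    payload = json.dumps(
        {"claim": claim, "verdict": verdict, "prev": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list[dict], claim: str, verdict: bool) -> None:
    """Append a verification result, linking it to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "claim": claim,
        "verdict": verdict,
        "prev": prev_hash,
        "hash": _digest(claim, verdict, prev_hash),
    })


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash and check that each link matches."""
    prev_hash = GENESIS
    for record in log:
        expected = _digest(record["claim"], record["verdict"], record["prev"])
        if record["prev"] != prev_hash or record["hash"] != expected:
            return False
        prev_hash = record["hash"]
    return True


log: list[dict] = []
append_record(log, "Water boils at 100 C at sea level.", True)
append_record(log, "The Moon is made of cheese.", False)
intact = verify_log(log)

log[1]["verdict"] = True  # someone quietly flips a past verdict
tampered_detected = not verify_log(log)
```

A public chain adds replication and consensus on top of this, but the core idea an auditor relies on is the same: past results cannot be changed without the change being detectable.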

Incentives That Reward Accuracy

Mira Network aligns economic incentives with accuracy. Validators are rewarded for accurate assessments, which encourages responsible participation.

Over time:

Reputation reflects performance

Accuracy becomes measurable

Integrity becomes economically valuable

Instead of assuming correctness, the system financially reinforces it.
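One simple way to picture this is a settlement step that runs after each claim is resolved. In the sketch below, validators whose vote matches the final outcome gain a point and the rest lose one. The `settle` function, the point values, the penalty, and the use of the consensus outcome as the measure of accuracy are all assumptions for illustration; the article only states that accurate assessments are rewarded.

```python
def settle(
    votes: dict[str, bool],
    outcome: bool,
    balances: dict[str, int],
    reward: int = 1,   # assumed reward for matching the outcome
    penalty: int = 1,  # assumed penalty for disagreeing with it
) -> dict[str, int]:
    """Return updated balances after one claim has been resolved."""
    updated = dict(balances)  # leave the caller's balances untouched
    for validator, vote in votes.items():
        delta = reward if vote == outcome else -penalty
        updated[validator] = updated.get(validator, 0) + delta
    return updated


balances = {"alice": 0, "bob": 5}
votes = {"alice": True, "bob": True, "carol": False}
balances_after = settle(votes, outcome=True, balances=balances)
```

Repeated over many claims, a running balance like this doubles as a crude reputation score: validators who are consistently right accumulate value, and those who are consistently wrong lose it.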

Preparing AI for High-Stakes Autonomy

As AI evolves toward autonomous execution in finance, healthcare, logistics, and scientific research, unchecked outputs become increasingly dangerous. Verification can no longer remain optional.

It must become foundational infrastructure.

Mira Network positions itself as a reliability layer — bridging powerful AI generation with structured accountability.

From Probabilistic Outputs to Verified Digital Truth

The future of artificial intelligence will not be defined solely by model size or processing speed. It will be defined by trust.

By introducing decentralized validation, structured claim analysis, and transparent consensus mechanisms, Mira Network aims to transform AI from a probabilistic prediction engine into a system of verifiable digital truth.

In the age of intelligent systems, reliability is no longer a feature. It is the foundation.