Most discussions about artificial intelligence assume human supervision remains in the loop. A model suggests; a person confirms. A system predicts; an operator reviews.

That assumption is quietly dissolving.

AI is increasingly embedded in workflows that allocate capital, flag compliance violations, optimize logistics, and assist medical decisions. In some cases, automation is already moving faster than oversight. The trajectory is clear: less human friction, more autonomous execution.

Autonomy, however, magnifies error.

A hallucinated sentence in a chatbot session may cost nothing. A hallucinated compliance flag inside a financial institution can freeze accounts. A biased inference inside a credit model can distort lending decisions at scale. As autonomy expands, so does the blast radius of probabilistic error.

This is where the framing from @Mira - Trust Layer of AI becomes structurally relevant.

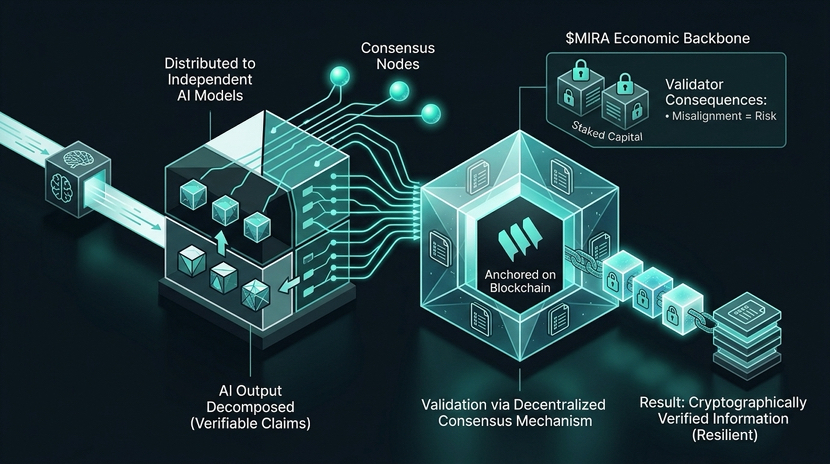

Instead of treating AI outputs as trusted conclusions, Mira decomposes them into verifiable claims. These claims are distributed across independent AI models and validated through decentralized consensus mechanisms anchored on blockchain infrastructure. The output is transformed into cryptographically verified information rather than unilateral assertion.
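To make the flow concrete, here is a minimal sketch of claim-level verification. It is an illustration under stated assumptions, not Mira's actual implementation: the sentence-based `split_into_claims`, the `Verifier` callables standing in for independent models, and the two-thirds quorum are all hypothetical, and the on-chain anchoring step is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical stand-in for an independent model: takes a claim and
# returns True if that verifier judges the claim to be valid.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    verified: bool


def split_into_claims(output: str) -> List[str]:
    # Naive assumption: each sentence is one independently checkable claim.
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str,
    verifiers: List[Verifier],
    quorum_num: int = 2,  # assumed quorum of 2/3, not taken from the source
    quorum_den: int = 3,
) -> List[ClaimResult]:
    if not verifiers:
        raise ValueError("at least one verifier is required")
    total = len(verifiers)
    results = []
    for claim in split_into_claims(output):
        approvals = sum(1 for verify in verifiers if verify(claim))
        # Integer comparison avoids floating-point edge cases at the threshold.
        verified = approvals * quorum_den >= total * quorum_num
        results.append(ClaimResult(claim, approvals, total, verified))
    return results
```

The point of the sketch is the shape of the result: instead of one accepted or rejected answer, each claim carries its own tally, so a single unsupported sentence can be rejected without discarding the rest of the output.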

The subtlety is important. Verification introduces friction. Friction introduces resilience.

Within this architecture, $MIRA operates as the economic backbone of coordination. Validators stake capital to participate in consensus, and incorrect validation carries an economic consequence. Incentives are structured so that agreement reflects aligned economic exposure, not centralized authority.
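The incentive logic can be sketched in a few lines. This is a toy model only: the stake-weighted majority rule, the 10% penalty expressed as `slash_bps`, and the `Validator` and `settle_round` names are assumptions made for illustration, since the post does not specify how penalties are actually computed.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Validator:
    name: str
    stake: int  # staked amount in the smallest token unit (hypothetical)


def settle_round(votes: List[Tuple[Validator, bool]], slash_bps: int = 1000) -> bool:
    """Settle one validation round and penalize dissenting validators in place.

    Assumptions (not from the source): the outcome is the stake-weighted
    majority, ties resolve to False, and each validator who voted against
    the outcome loses slash_bps basis points of its stake.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    yes_stake = sum(v.stake for v, vote in votes if vote)
    no_stake = sum(v.stake for v, vote in votes if not vote)
    outcome = yes_stake > no_stake
    for validator, vote in votes:
        if vote != outcome:
            validator.stake -= validator.stake * slash_bps // 10_000
    return outcome
```

Note the limitation this toy version shares with any consensus scheme: it penalizes disagreement with the majority, which only tracks correctness if validators are independent and mostly honest.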

Skeptics may argue that internal auditing suffices. Yet as systems grow more autonomous, the cost of single-point validation increases. Historically, when risk becomes systemic, neutral verification layers tend to separate from execution layers, much as external auditors and clearinghouses came to sit apart from the firms whose activity they check.

AI may be approaching that inflection.

If models begin allocating capital, influencing regulatory decisions, or operating within critical infrastructure, reliability must scale alongside autonomy. Performance alone will not secure adoption; defensibility will.

@Mira - Trust Layer of AI is building at that boundary between intelligence and accountability. In that role, $MIRA represents more than utility: it represents structured alignment around the cost of being wrong.

Autonomy without verification accelerates risk.

Autonomy with verification reshapes infrastructure.