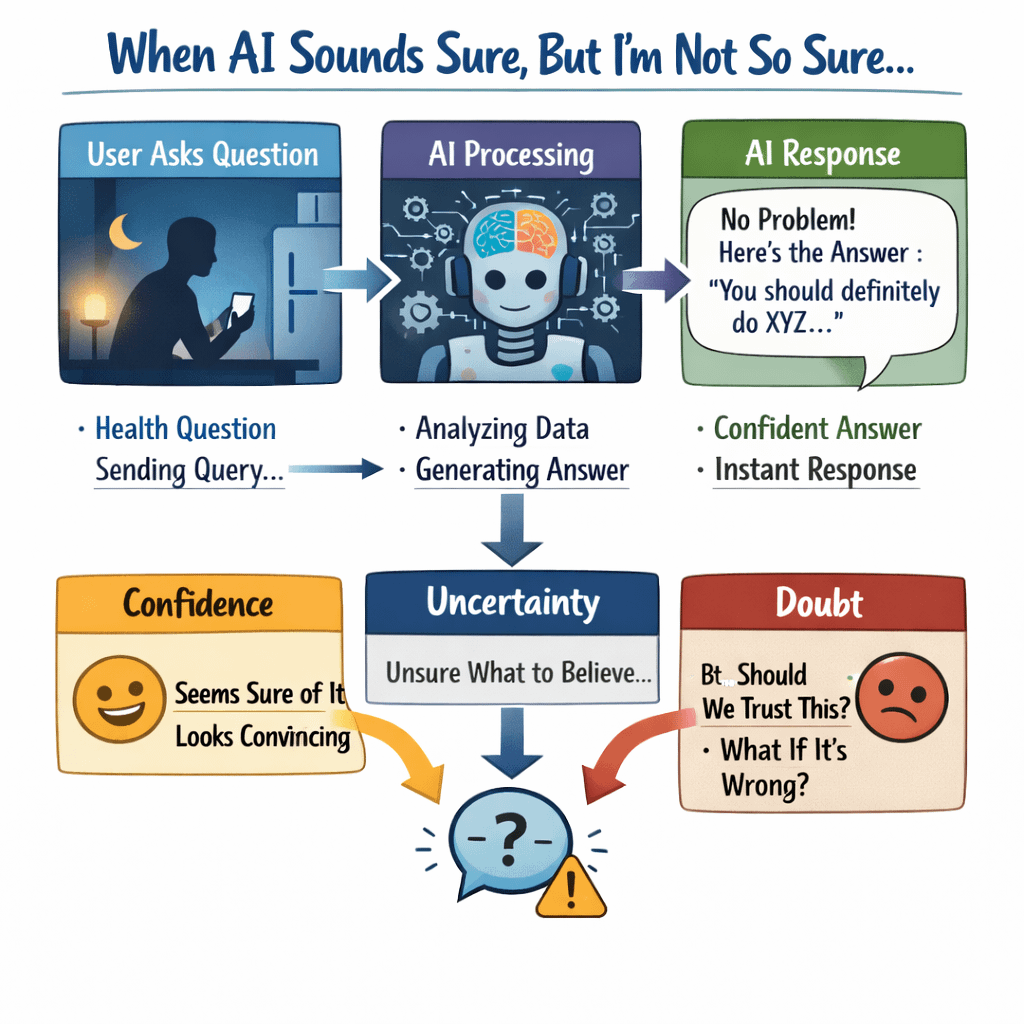

I remember sitting at my kitchen table one evening, phone in my hand, asking an AI tool a question about a health issue someone in my family had just been diagnosed with. It was late. The house was quiet except for the hum of the refrigerator and the occasional car passing outside. I typed the question, hit send, and within seconds the answer appeared.

It was long. Detailed. Calm. It even sounded a bit reassuring.

And yet something about it bothered me.

Not because it looked wrong. Honestly, it looked too right. Every sentence sounded confident, polished, like it had been written by someone who had studied medicine for twenty years. But the more I read it, the more a small voice in my head kept asking, *How does this thing actually know any of this?*

I’m not entirely sure when that feeling started creeping in, but lately I’ve been thinking about it more and more.

AI has quietly slipped into everyday life. We use it to write emails, fix code, answer random questions, summarize long articles, even help with homework. Most of the time it works well enough that we stop thinking about what’s happening behind the screen.

But every now and then you catch it making something up. A statistic that doesn’t exist. A research paper that no one ever wrote. A historical detail that sounds believable but falls apart if you check it.

And the strange part is that it never hesitates.

It just says the thing.

That confidence is what makes it tricky.

If a person sat across from you at the dinner table and started inventing facts, you’d probably notice pretty quickly. Their voice might waver. They might pause. They might say something like, “I think I read this somewhere, but I’m not totally sure.”

AI doesn’t do that.

It just keeps talking.

The more I’ve used these tools, the more I’ve realized something a little uncomfortable. AI isn’t really designed to know things the way we imagine. Most of these systems are language models, trained to predict which words should come next based on patterns in enormous piles of text. That’s why they’re so good at writing paragraphs that sound natural.

But sounding right and actually being right are not the same thing.
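To make that gap concrete for myself, I wrote a toy version of "predict the next word." This is only a sketch: real systems use large neural networks, not word counts, and the tiny corpus below is something I made up. The point is that the prediction follows whatever pattern shows up most often, whether or not it's true.

```python
from collections import Counter, defaultdict

# Made-up corpus for illustration; note that it contains a false sentence.
corpus = (
    "the capital of france is paris . "
    "the capital of france is paris . "
    "the capital of france is lyon . "
    "the capital of mars is paris ."
)

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

model = train_bigrams(corpus)
print(predict_next(model, "is"))  # paris
```

The model answers "paris" after "is" no matter which place the sentence was about. It has no idea what a capital is. It only knows what usually comes next.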

It’s a bit like that one friend everyone has—the one who answers every trivia question at the table with absolute certainty. Sometimes they’re impressive. Sometimes they’re completely wrong. But they never say “I don’t know.”

AI feels like that friend, except the friend now lives inside your phone and can talk about literally any topic on earth.

I’m not saying AI is useless. Far from it. I use it almost every day. It’s great for brainstorming ideas or helping me think through problems when my brain feels stuck. But I’ve started to notice how easy it is to slowly trust it more than we probably should.

Especially when it speaks with that smooth, confident voice.

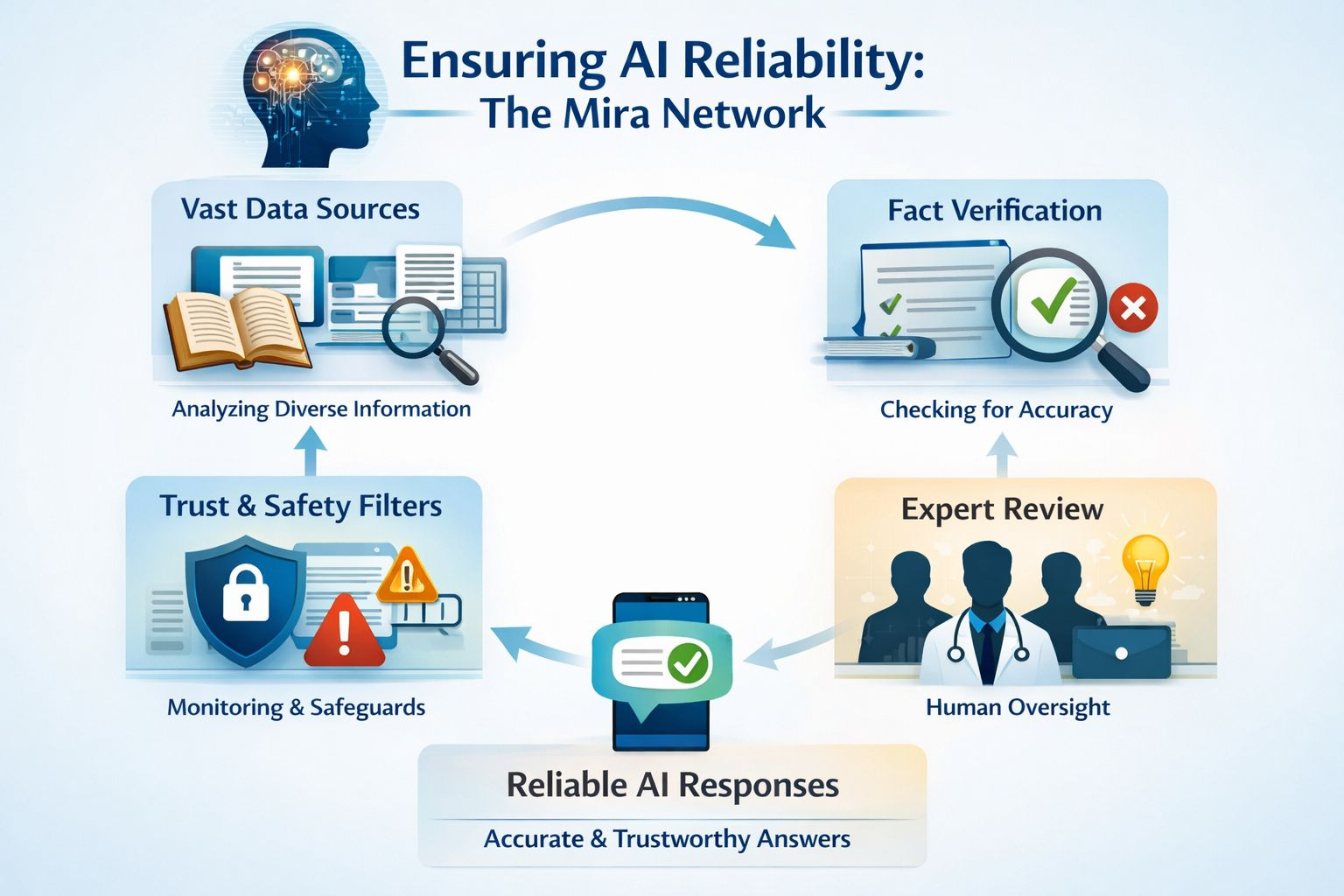

The other day I was reading about a project called Mira Network. I’ll be honest, when I first heard the description, it sounded a bit strange. Maybe even a little overcomplicated. I’m still not sure I fully understand every technical detail, and that’s okay.

But the basic idea stuck with me.

Instead of just letting AI spit out answers and hoping they’re correct, the system tries to check those answers piece by piece. Almost like pulling apart a sentence to see if every little part holds up.

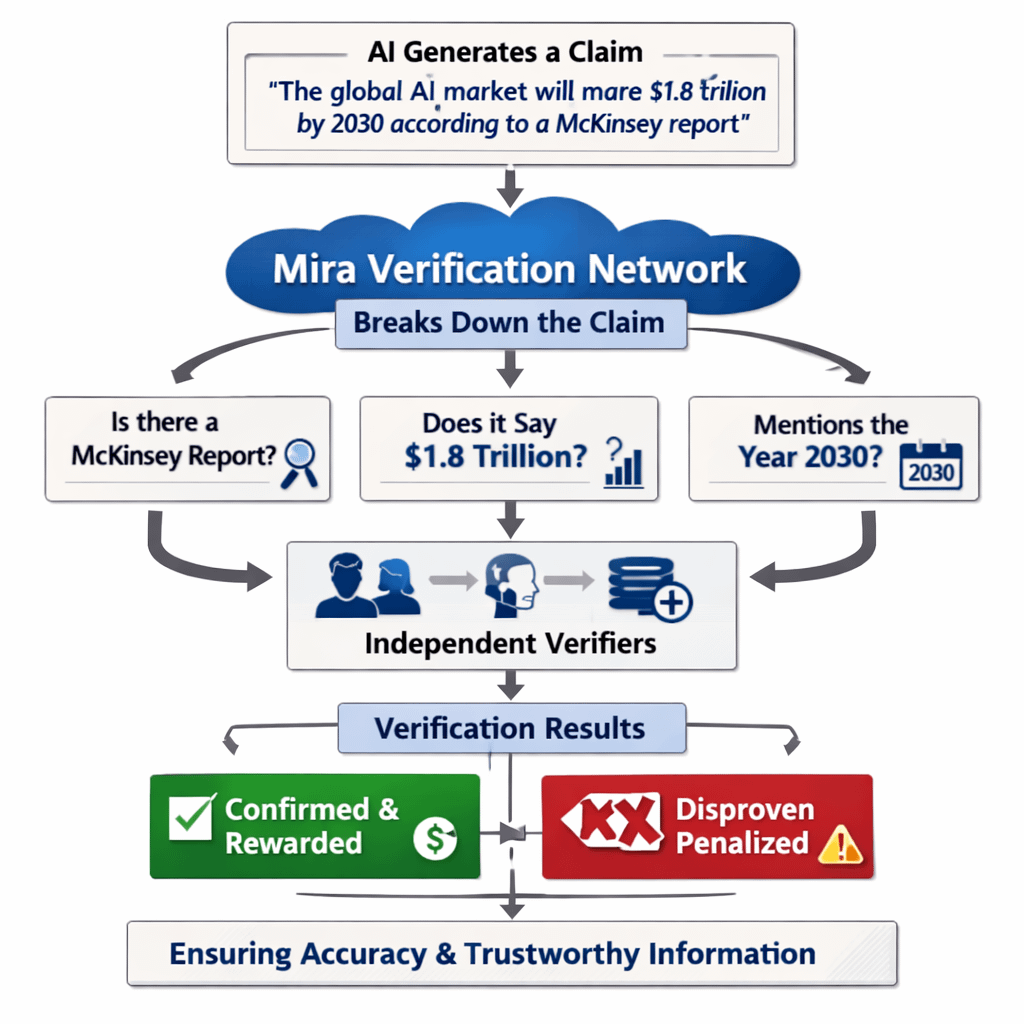

Imagine an AI writes something like this:

“The global AI market will reach $1.8 trillion by 2030 according to a McKinsey report.”

To most of us, that looks like a single statement. We read it and move on.

But if you slow down for a second, you can see it’s actually several different claims hiding inside one sentence.

Is there really a McKinsey report about this?

Does it actually say that number?

Does it tie that number to the year 2030?

Instead of accepting the whole sentence, Mira tries to check those smaller pieces individually.
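Here is how I picture that in code. To be clear, this is my own minimal sketch, not Mira's actual implementation: the `Claim` class and `overall_verdict` function are names I invented, and I split the sentence by hand rather than automatically.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Claim:
    text: str
    verified: Optional[bool] = None  # None means nobody has checked it yet

def overall_verdict(claims):
    """A statement is only as good as its weakest piece."""
    if any(c.verified is False for c in claims):
        return "rejected"
    if any(c.verified is None for c in claims):
        return "unverified"
    return "supported"

# The example sentence, split by hand into its smaller claims.
claims = [
    Claim("A McKinsey report on the global AI market exists."),
    Claim("That report gives a figure of $1.8 trillion."),
    Claim("That figure refers to the year 2030."),
]

print(overall_verdict(claims))  # unverified
```

Nothing has been checked yet, so the sentence as a whole stays "unverified." It only becomes "supported" once every piece has been confirmed, and a single failed piece is enough to reject it.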

It’s a bit like when you hear a rumor and start asking questions.

“Who told you that?”

“Where did they hear it?”

“Did anyone else confirm it?”

Except in this case the checking is done by a network of systems rather than just one source.

I might be oversimplifying it, but the idea reminds me of how people used to verify stories in small towns. Someone would hear something at the grocery store, then someone else would check with a neighbor, and eventually you’d figure out whether the story was real or just gossip.

Except now the gossip comes from machines.

And the checking happens through a network.
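A rough way to picture "checking through a network" is a vote with a threshold. The sketch below is an assumption on my part: the verifier names, the two-thirds threshold, and the `consensus` function are all hypothetical, and the real system may work quite differently.

```python
from collections import Counter
from fractions import Fraction

def consensus(votes, threshold=Fraction(2, 3)):
    """Return the verdict backed by at least `threshold` of the votes, else None."""
    if not votes:
        return None
    verdict, count = Counter(votes.values()).most_common(1)[0]
    if Fraction(count, len(votes)) >= threshold:
        return verdict
    return None

# Hypothetical verifiers voting on a single claim.
votes = {"verifier_a": True, "verifier_b": True, "verifier_c": False}
print(consensus(votes))  # True
```

If the verifiers split evenly, the function returns `None`, which is the code's way of saying "we don't know." That is exactly the answer a single AI almost never gives.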

Another part that surprised me is the incentive structure. People or systems that verify information correctly get rewarded. If they confirm something that turns out to be wrong, there’s a cost attached.

In other words, accuracy isn’t just encouraged. It’s financially rewarded, and getting it wrong costs money.
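The reward-and-cost idea is simple enough to sketch. The numbers here are invented, and so is the `settle` function. I don't know the real reward or penalty sizes, so treat this as an illustration of the principle only.

```python
def settle(balances, votes, outcome, reward=1.0, penalty=2.0):
    """Pay verifiers who matched the final outcome and charge those who did not."""
    updated = dict(balances)  # leave the caller's balances untouched
    for name, vote in votes.items():
        if vote == outcome:
            updated[name] = updated.get(name, 0.0) + reward
        else:
            updated[name] = updated.get(name, 0.0) - penalty
    return updated

# Hypothetical starting balances and votes on one claim.
balances = {"verifier_a": 10.0, "verifier_b": 10.0, "verifier_c": 10.0}
votes = {"verifier_a": True, "verifier_b": True, "verifier_c": False}

print(settle(balances, votes, outcome=True))
# {'verifier_a': 11.0, 'verifier_b': 11.0, 'verifier_c': 8.0}
```

I made the penalty larger than the reward on purpose. If guessing wrong costs more than guessing right pays, careless confirmation stops being worth it.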

That might sound strange, but when you think about it, incentives shape almost everything humans do. Journalists build credibility by being accurate. Scientists protect their reputations by checking their work carefully. Even your friend who recommends restaurants wants to be right, because if they send you somewhere terrible you’ll never trust their suggestions again.

The difference with AI is that it doesn’t have that built-in sense of reputation.

At least not yet.

Which makes me wonder what happens when these tools start making decisions in places where mistakes actually matter.

Right now, most of us use AI for harmless things. Writing social media posts. Generating images. Asking random questions while lying on the couch.

But companies are already using AI in more serious ways. Sorting job applications. Analyzing financial data. Helping doctors review medical scans. Deciding which customer complaints get priority.

If those systems are relying on information that might be partly invented, the consequences could be messy.

And I’m not trying to be dramatic here. Humans mess up all the time too. The difference is that when a human expert makes a claim, you can usually trace their reasoning, check their sources, or question their judgment.

With AI, the reasoning often disappears inside a giant model that no one fully understands.

It’s a bit like asking someone how they arrived at an answer and they just shrug and say, “Trust me.”

That’s where this idea of verification starts to feel important.

Not perfect certainty. That probably doesn’t exist. But at least some way of checking whether the pieces of an AI-generated statement hold together.

The more I think about it, the more it feels like we’re entering a strange new phase of the internet.

For years the challenge online was finding information. Search engines mostly solved that problem. Suddenly almost everything was accessible.

Now the problem is the opposite.

There’s too much information, and a lot of it isn’t reliable.

Add AI into the mix and the flood gets even bigger. Machines can now generate articles, research summaries, reports, and explanations faster than any human could possibly read them.

So the real question might not be how fast we can create information.

The real question might be how we decide what deserves our trust.

I still use AI all the time. But I’ve started doing something small that helps keep me grounded.

Whenever it gives me a statistic, I pause and ask where it came from.

Sometimes it points to a real source. Sometimes the answer gets a little fuzzy. Occasionally it quietly changes the number when I ask again.

That alone tells you something.
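You can even turn that habit into a small script. This is a sketch under assumptions: `ask` stands in for whatever AI tool you use (I am not calling any real API here), and the canned answers are made up so the example runs on its own.

```python
import re

def extract_numbers(answer):
    """Pull every integer or decimal number out of an answer."""
    return re.findall(r"\d+(?:\.\d+)?", answer)

def is_consistent(ask, question, attempts=3):
    """Ask the same question several times and compare the numbers that come back."""
    seen = {tuple(extract_numbers(ask(question))) for _ in range(attempts)}
    return len(seen) == 1

# Made-up answers standing in for a real AI tool.
canned = iter(["About 40 percent.", "Roughly 40 percent.", "Close to 55 percent."])

def fake_ask(question):
    return next(canned)

print(is_consistent(fake_ask, "What share of people do this?"))  # False
```

A consistent answer isn't proof of anything, since a model can repeat the same wrong number every time. But an inconsistent one is a pretty clear sign that the number deserves a second look.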

I don’t know if networks like Mira will end up solving this problem. Maybe they will. Maybe a completely different approach will appear in a few years.

Technology has a funny way of surprising us.

But I keep thinking about that moment at the kitchen table, staring at a perfectly written answer on my phone while a small part of my brain whispered, *Are you sure about this?*

And maybe that quiet question is going to matter more and more as machines keep getting better at sounding like they know what they’re talking about.