One thing I’ve noticed after using AI tools for a while is that the problem isn’t really intelligence anymore.

Most modern systems are already capable enough to be useful. They can summarize research, write code, analyze data, and explain complicated ideas in seconds. That part of the technology is improving quickly.

The part that still feels unresolved is reliability.

AI systems have a strange habit of sounding certain even when they are slightly wrong. An answer can look polished, structured, and convincing, yet when you check the details, you sometimes find small errors hiding beneath the surface.

That experience becomes more noticeable the more you rely on these tools.

You start double-checking important outputs.

You compare results across different models.

You verify numbers before using them.

That means the responsibility for trust still sits with the human.

That is the gap where Mira Network begins to make sense.

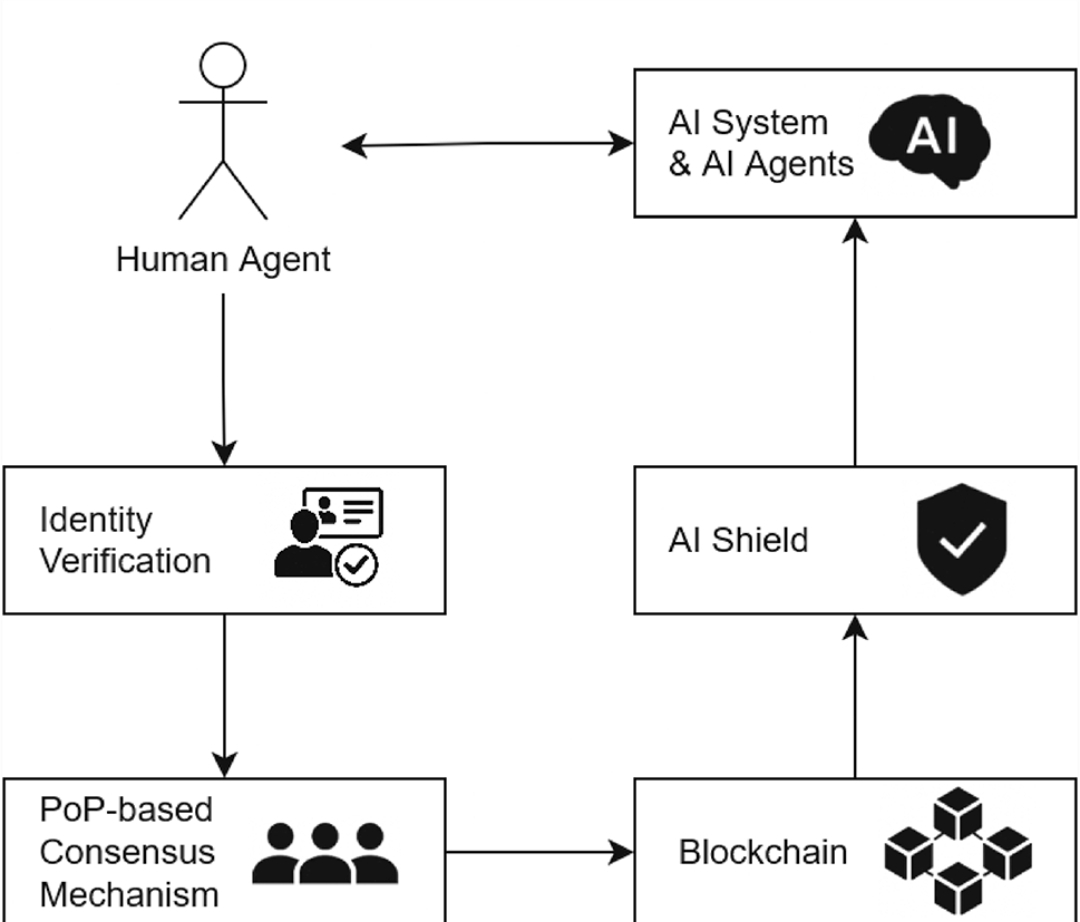

Mira isn’t trying to compete with the companies building larger models. It is approaching the problem from a different direction. Instead of making AI answers more persuasive, it focuses on verifying whether those answers are actually reliable.

The idea behind the protocol is fairly simple once you step back.

When an AI system produces a response, Mira doesn’t treat that output as a single piece of information. Instead, it breaks the response into smaller claims that can be evaluated individually.
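
To make the decomposition step concrete, here is a minimal Python sketch. It assumes the simplest possible strategy: split a response into sentences and treat each sentence as one claim. The `Claim` type and `decompose` function are hypothetical names for illustration, not Mira's actual implementation.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement extracted from a response (hypothetical type)."""
    claim_id: int
    text: str


def decompose(response: str) -> list[Claim]:
    """Naive decomposition: treat each sentence as a separate claim.

    Splits after '.', '!' or '?' when followed by whitespace. This is an
    illustrative assumption, not how Mira actually extracts claims.
    """
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


answer = (
    "The Eiffel Tower was completed in 1889. "
    "It is located in Paris. "
    "Water boils at 100 degrees Celsius at sea level."
)
for claim in decompose(answer):
    print(claim.claim_id, claim.text)
```

Notice that the second claim, "It is located in Paris.", can't really be checked on its own, because "It" only makes sense alongside the previous sentence. Naive splitting produces claims like this easily, which is why the quality of the decomposition step matters so much.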

Those claims are then distributed across a network of independent validators.

Each validator — which can itself be an AI system — checks the claim and submits an evaluation. The network reaches agreement through blockchain coordination and economic incentives.

So instead of trusting the confidence of one model, the system relies on distributed consensus.
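
A toy version of the consensus step might look like the following Python sketch. It assumes each validator returns a simple true/false verdict and that a claim is settled once a two-thirds supermajority agrees. The threshold, the `reach_consensus` function, and the knowledge-set validators are all illustrative assumptions; the real protocol coordinates agreement on-chain rather than inside a single function.

```python
from collections import Counter
from fractions import Fraction
from typing import Callable, Optional

# A validator takes a claim and returns True (supported) or False (not supported).
Validator = Callable[[str], bool]


def make_validator(known_true: set[str]) -> Validator:
    """Toy validator that accepts only claims in its own knowledge set.

    Giving each validator a different set stands in for independent models
    with different training data (a hypothetical simplification).
    """
    return lambda claim: claim in known_true


def reach_consensus(
    claim: str,
    validators: list[Validator],
    threshold: Fraction = Fraction(2, 3),
) -> tuple[Optional[bool], dict[bool, int]]:
    """Collect one verdict per validator and return the supermajority verdict, if any."""
    if not validators:
        raise ValueError("need at least one validator")
    if threshold <= Fraction(1, 2):
        # Above one half, at most one verdict can clear the bar.
        raise ValueError("threshold must exceed 1/2")
    votes = Counter(validate(claim) for validate in validators)
    for verdict, count in votes.items():
        if Fraction(count, len(validators)) >= threshold:
            return verdict, dict(votes)
    return None, dict(votes)  # no supermajority: the claim stays unresolved


validators = [
    make_validator({"claim-a", "claim-b"}),
    make_validator({"claim-a"}),
    make_validator({"claim-a", "claim-b", "claim-c"}),
]

for claim in ["claim-a", "claim-b", "claim-c"]:
    print(claim, reach_consensus(claim, validators))
```

Here claim-a passes unanimously, claim-b passes two votes to one, and claim-c is rejected two votes to one. Keeping "no consensus" (`None`) separate from "rejected" (`False`) is a deliberate choice: with a stricter threshold such as `Fraction(3, 4)`, claim-b would stay unresolved instead of being forced into true or false.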

That approach shifts the trust model significantly.

Right now, most AI systems operate under centralized verification: if something goes wrong, the responsibility sits with the company that built the model. Mira replaces that structure with a network where validation happens collectively and transparently.

Economic incentives also play a role.

Validators are rewarded for accurate verification and penalized for incorrect validation. This creates a system where honesty is economically aligned with network health.
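
Here is a minimal sketch of how rewards and penalties could be settled after a vote. It assumes "accurate" means agreeing with the final consensus, and the stake amounts, the flat reward, and the 10% slash are made-up parameters for illustration, not Mira's actual economics. `ValidatorAccount` and `settle` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # tokens locked as collateral (hypothetical units)


def settle(
    accounts: list[ValidatorAccount],
    votes: dict[str, bool],
    consensus: Optional[bool],
    reward: int = 10,
    slash_bps: int = 1_000,  # 1,000 basis points = 10% of stake
) -> None:
    """Reward validators that matched consensus and slash those that did not.

    Assumption: agreement with consensus is used as the proxy for accuracy.
    If no consensus was reached, nobody is paid or penalized.
    """
    if consensus is None:
        return
    for account in accounts:
        if votes[account.name] == consensus:
            account.stake += reward
        else:
            # Integer arithmetic avoids floating-point rounding in balances.
            account.stake -= account.stake * slash_bps // 10_000


accounts = [
    ValidatorAccount("alice", 1_000),
    ValidatorAccount("bob", 1_000),
    ValidatorAccount("carol", 1_000),
]
settle(accounts, votes={"alice": True, "bob": True, "carol": False}, consensus=True)
for account in accounts:
    print(account.name, account.stake)
```

The obvious weakness of using consensus as the measure of accuracy is that if most validators share the same bias, the majority can be wrong and an honest minority gets slashed. That is one reason validator diversity matters as much as the incentive design itself.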

The design becomes particularly interesting when you think about where AI is heading.

Today AI mostly assists humans. It generates drafts, ideas, and suggestions that people review before taking action. But that relationship is already beginning to change.

AI agents are starting to interact with financial systems.

They execute automated tasks across platforms.

They process large volumes of information without constant human supervision.

In those environments, “probably correct” isn’t enough.

If an autonomous system is making decisions or triggering actions, the information it relies on needs a higher standard of verification. That’s where a protocol like Mira begins to look less like an experiment and more like infrastructure.

The system assumes hallucinations and bias will continue to exist in AI models. Instead of pretending those problems disappear with scale, it builds a framework that can verify outputs after they are generated.

In other words, Mira treats intelligence and verification as two separate layers.

Models generate ideas.

The network verifies them.
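
That separation can be pictured as a simple gate in front of an autonomous agent, for example one about to pay an invoice. In this hypothetical Python sketch (not Mira's interface), the model's output is only acted on if every extracted claim has been positively verified; anything rejected or unresolved blocks the action. The `decompose` and `verify` callables are placeholders for whatever decomposition and consensus steps the network actually uses.

```python
from typing import Callable, Optional


def act_if_verified(
    output: str,
    decompose: Callable[[str], list[str]],
    verify: Callable[[str], Optional[bool]],
    action: Callable[[str], None],
) -> bool:
    """Run `action` only if every claim in `output` is verified as True.

    A verdict of False (rejected) or None (no consensus) blocks the action.
    Returns True if the action ran.
    """
    claims = decompose(output)
    if claims and all(verify(claim) is True for claim in claims):
        action(output)
        return True
    return False


# Hypothetical stand-ins for the verification layer's verdicts.
verdicts = {"invoice total is 120": True, "payee is on the allowlist": None}
executed: list[str] = []


def split_claims(text: str) -> list[str]:
    """Toy decomposition: claims are separated by '; '."""
    return text.split("; ")


ran_first = act_if_verified("invoice total is 120", split_claims, verdicts.get, executed.append)
ran_second = act_if_verified(
    "invoice total is 120; payee is on the allowlist", split_claims, verdicts.get, executed.append
)
print(ran_first, ran_second, executed)
```

The first output goes through because its only claim is verified. The second is blocked because one claim never reached consensus, even though the other one passed: for an autonomous system, "mostly verified" is treated the same as "not verified".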

There are still challenges to solve. Claim decomposition needs to be accurate. Validator diversity must prevent shared bias. Verification must remain efficient enough to be practical.

But the direction feels logical.

As AI systems move closer to autonomous operation, the most important question may no longer be how intelligent they are.

It may be whether their outputs can actually be trusted.

And that’s exactly the problem Mira Network is trying to solve.