I stopped trusting the word "verified" the day a perfectly correct answer failed a second check.

It happened during an internal review of an automated decision pipeline. The model output looked clean. The verification system approved it. Logs matched. Nothing appeared careless. But when another team attempted to replay the same verification process in a slightly different environment, the outcome shifted. Not dramatically, just enough to expose something uncomfortable.

Both systems were technically correct. They were simply operating under slightly different assumptions.

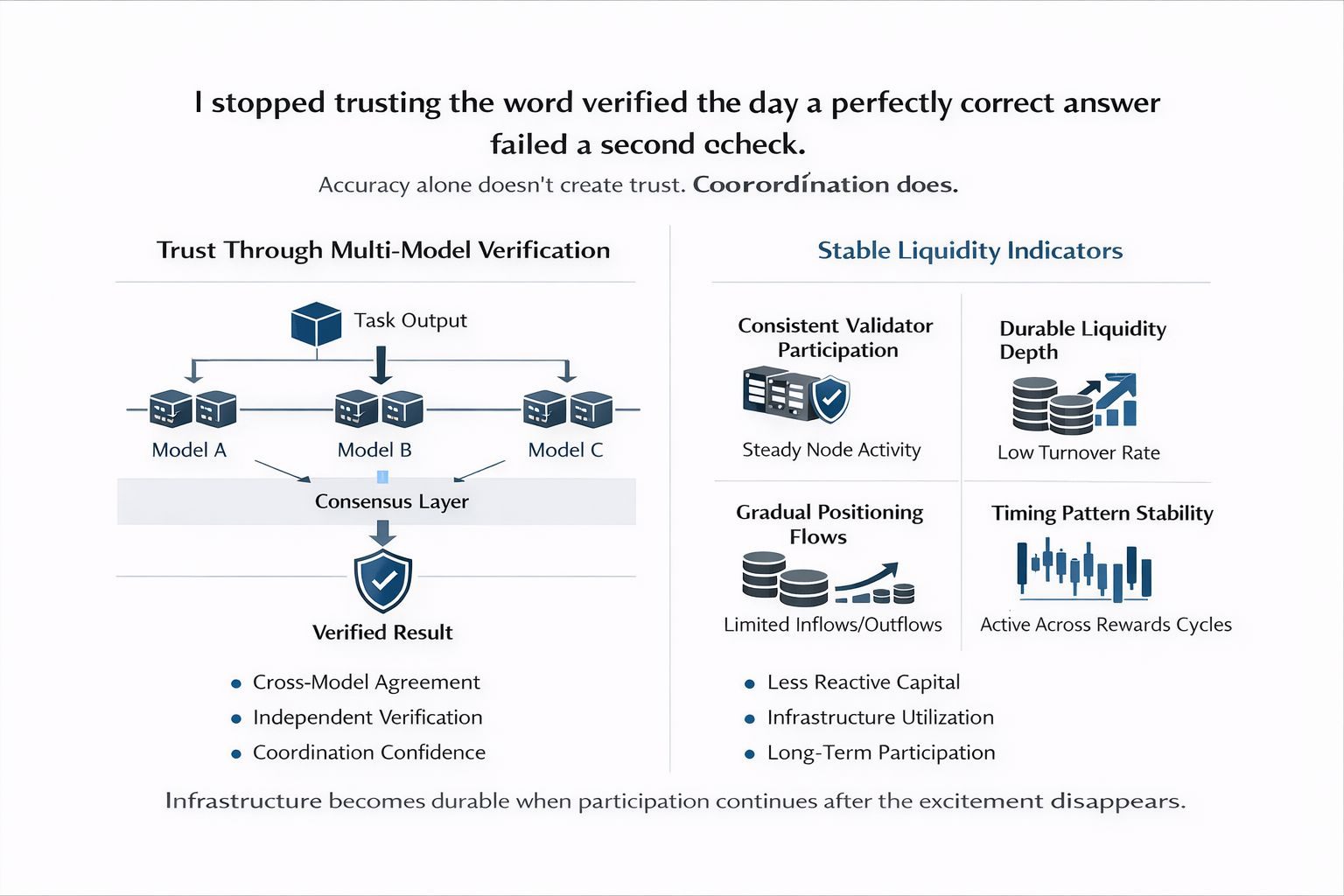

That moment changed how I evaluate AI infrastructure. Accuracy alone doesn’t create trust. Coordination does.

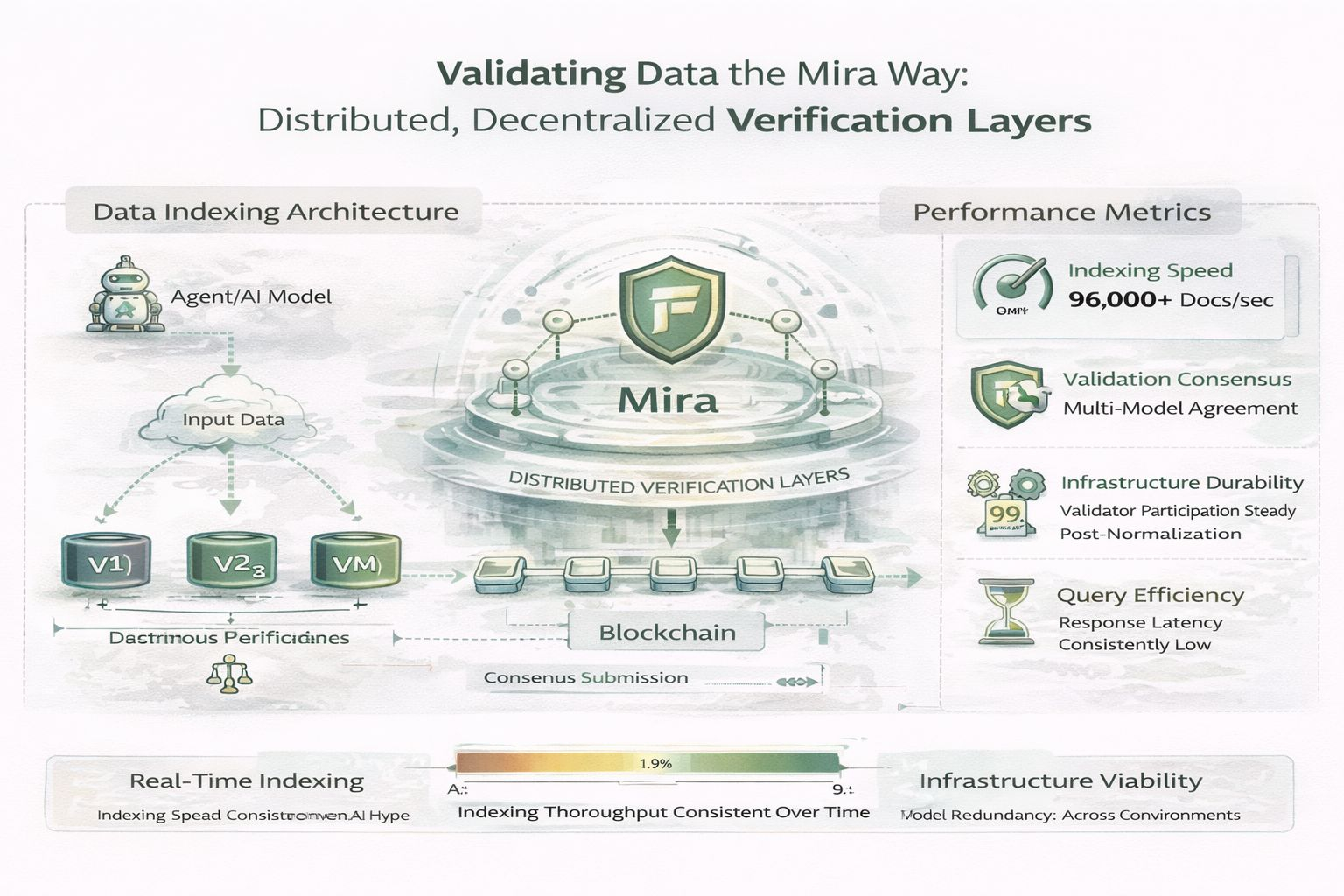

This is why #Mira's design around multi-model verification layers stands out to me. The improvement is subtle but structurally meaningful. Instead of asking one model to both produce and verify outputs, Mira distributes verification across multiple models that independently evaluate the same result. Trust becomes a process of cross-model agreement rather than a property of any single system.
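
To make the idea of cross-model agreement concrete, here is a minimal sketch in Python. It is not Mira's actual protocol or API: the `Verifier` type, the `cross_model_verdict` function, the verdict strings, and the 0.6 agreement threshold are all assumptions chosen for illustration. Each verifier evaluates the same claim independently, and a verdict is accepted only when enough of them agree.

```python
from collections import Counter
from typing import Callable, Sequence, Tuple

# Hypothetical type: a verifier is any callable that maps a claim to a verdict.
# In practice each one would wrap a different model; none of this is a Mira API.
Verifier = Callable[[str], str]


def cross_model_verdict(
    claim: str,
    verifiers: Sequence[Verifier],
    threshold: float = 0.6,  # assumed agreement threshold, purely illustrative
) -> Tuple[str, float]:
    """Ask every verifier independently; accept the leading verdict
    only if its share of the votes meets the threshold."""
    if not verifiers:
        raise ValueError("at least one verifier is required")

    # Each verifier sees only the claim, never another verifier's answer.
    votes = Counter(verify(claim) for verify in verifiers)
    verdict, count = votes.most_common(1)[0]
    agreement = count / len(verifiers)

    if agreement < threshold:
        return "no_consensus", agreement
    return verdict, agreement


# Stub verifiers standing in for three independent models.
stubs = [lambda c: "valid", lambda c: "valid", lambda c: "invalid"]
print(cross_model_verdict("2 + 2 = 4", stubs))
```

The point of the sketch is the shape of the decision: no single verifier's output is trusted on its own, and disagreement below the threshold surfaces as an explicit "no consensus" result instead of a silent approval.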

It doesn’t sound dramatic. Infrastructure improvements rarely do.

What matters more is how people behave around it.

Over the past few months, the signals around Mira have been less about announcements and more about workflow behavior. Developer discussions increasingly reference verification layers as part of agent pipelines rather than as experimental tooling. Multi-model checks are appearing inside automated task frameworks where reliability matters more than speed.

When infrastructure participation remains stable even as reward intensity fluctuates, it usually suggests operators are running tasks because the network is operationally useful, not simply profitable in the short term.

Liquidity behavior offers another clue.

Speculative ecosystems tend to show liquidity that surges with narrative cycles and disappears just as quickly. Infrastructure tends to show the opposite: liquidity that stays in place between cycles. For verification networks in particular, that stability can matter more than growth.

Incentives tend to reveal the real health of coordination systems.

If validators only appear when rewards spike, verification reliability collapses under normal conditions. But if participation remains steady even when incentives compress, the network begins to resemble infrastructure rather than a yield program.

The behavioral signals worth watching are not dramatic ones. Liquidity depth relative to turnover rather than headline volume. Exchange flows that show gradual positioning rather than sudden inflows.
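
As a rough illustration of the first signal, depth relative to turnover can be expressed as a simple ratio. The function name, the definition of depth (here assumed to be resting order-book liquidity near the mid price, in USD), and the sample figures are all hypothetical; they are not measurements of any real market.

```python
def depth_to_turnover(depth_usd: float, daily_volume_usd: float) -> float:
    """Ratio of resting liquidity to daily traded volume.

    A higher value means more liquidity is sitting in the book relative
    to how much is changing hands, which is one reading of 'structural'
    rather than 'reactive' liquidity. Both inputs are assumed to be in USD.
    """
    if daily_volume_usd <= 0:
        raise ValueError("daily volume must be positive")
    return depth_usd / daily_volume_usd


# Hypothetical figures, for illustration only.
quiet_market = depth_to_turnover(depth_usd=2_000_000, daily_volume_usd=10_000_000)
hyped_market = depth_to_turnover(depth_usd=2_000_000, daily_volume_usd=80_000_000)
print(quiet_market, hyped_market)
```

In this toy comparison the headline volume of the second market is eight times larger, yet its depth-to-turnover ratio is much lower, which is why the ratio can say more than volume alone.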

Even timing patterns can matter. When participants remain active through multiple reward adjustments, it often indicates that operational familiarity is forming. They are no longer testing the system. They are relying on it.

Capital stops behaving like speculation and starts behaving like provisioning. Liquidity becomes less reactive and more structural, providing access rather than chasing narratives.

Verification layers may follow the same trajectory that other forms of infrastructure have followed in crypto. At first they attract attention because the concept feels novel. Eventually they fade into the background as they become routine components of larger systems.

Developers stop talking about them. They simply use them.

If the multi-model verification approach of @Mira - Trust Layer of AI works as intended, the protocol’s most important achievement may not be visibility. It may be quiet reliability: multiple systems checking each other until trust becomes procedural rather than assumed.

The real test will not come from announcements or token cycles. It will come from whether the behavioral signals remain consistent as the novelty fades.

Do developers continue integrating verification layers once the narrative attention moves elsewhere?

Infrastructure becomes durable when participation continues after the excitement disappears.

And the strongest systems in technology history share a common trait: eventually, they stop being discussed altogether.