@Mira - Trust Layer of AI $MIRA #Mira

The feeling that pushed this idea into existence

I’m watching the AI wave the same way a lot of people are, with a mix of excitement and a quiet tension in the chest. The tools can feel almost magical one minute, and the next minute they can confidently hand you something that looks perfect but is subtly wrong. That specific problem, the gap between how sure the output sounds and how sure it actually is, is what makes people hesitate to let AI touch anything critical like money, health, law, research, or autonomous agents. If a system can hallucinate or carry bias while speaking in a calm, authoritative voice, then the risk is not only that it makes a mistake, it’s that it makes a mistake that slips past human attention. We’re seeing this over and over in real life, where humans become the exhausted editors of machine confidence. So the deeper question becomes less about “can models get smarter” and more about “can we build a way to verify what they say without trusting a single company’s private rules.” That is the emotional origin of Mira Network as a decentralized verification layer: not another model that promises perfection, but a system that tries to make truth feel less like a vibe and more like a process you can audit.

What Mira is in simple language

Mira Network is easiest to understand if you imagine it as a neutral checkpoint that sits between an AI output and a real decision. Instead of letting one model’s answer move forward on pure confidence, the system tries to break that answer into smaller claims, then asks a network of independent verifiers to check those claims and agree on what holds up. If it becomes normal to attach verification to sensitive outputs, the whole culture around AI changes, because the output stops being “a pretty paragraph” and becomes “a set of statements that survived scrutiny.” The reason decentralization matters here is simple. When one organization controls the verification pipeline, they’re the ones deciding what counts as evidence, which models are allowed to judge, what gets hidden, what gets ignored, and how disputes are handled. When verification is a network outcome, the goal is to make the process harder to capture and easier to audit, so trust comes from the structure and incentives rather than from a brand name.

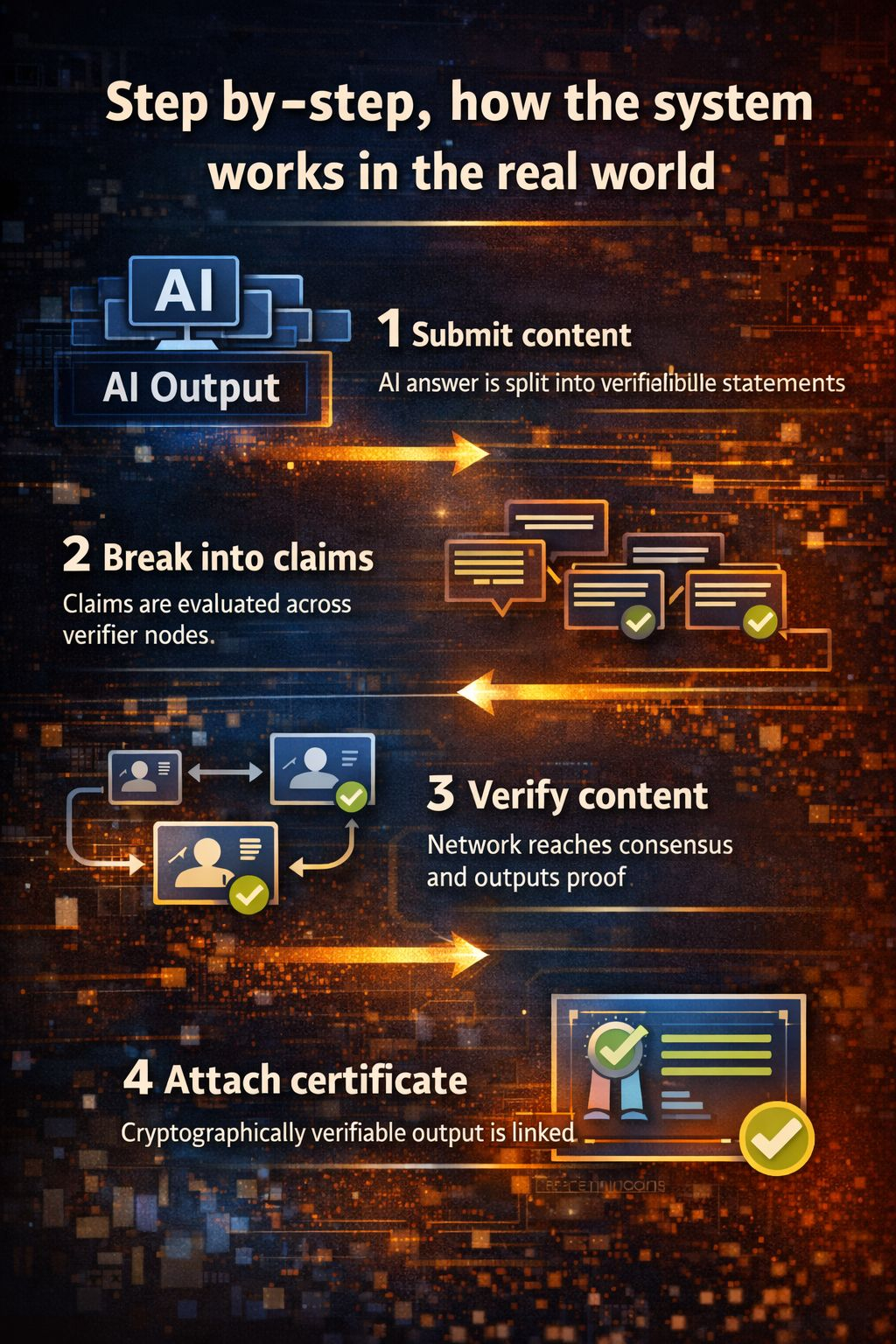

Step by step, how the system works in the real world

The flow starts when an application, agent, or user submits an output that needs to be trusted. Instead of treating that output as one giant blob, Mira’s approach is to transform it into smaller verifiable claims. When you try to verify a long paragraph as a single unit, verifiers can drift into different interpretations: some might focus on one sentence, others might judge tone rather than truth. Breaking the output down is the first discipline that makes verification possible at scale. Those claims are then distributed across verifier participants who evaluate them. What matters here is that verification is not supposed to be a private conversation inside one company’s server, it’s a repeatable routine where many independent nodes produce judgments. The network aggregates those judgments into a consensus outcome, with thresholds that can be tuned depending on how strict the application wants to be. Finally, the system produces a result that can be attached back to the original output as a certificate or proof trail, meaning a downstream app can say “this part was verified, this part was disputed, this part needs human review.” That is where the practical value shows up, because we’re seeing that people don’t necessarily need AI to be perfect. They need it to be honest about confidence, and they need the risky parts flagged before harm happens.
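
To make that flow concrete, here is a minimal Python sketch of the four steps: split, distribute, aggregate, report. Everything in it is my own illustration and not Mira’s actual API. The function names, the three verdict labels, the sentence-based claim splitter, and the rule that maps vote shares to “verified,” “disputed,” or “needs human review” are all assumptions.

```python
from collections import Counter
from typing import Callable, Dict, List

# All names here are hypothetical; this is an illustration, not Mira's API.
Verifier = Callable[[str], str]  # returns "supported", "refuted", or "uncertain"


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [part.strip() for part in output.split(".") if part.strip()]


def aggregate(verdicts: List[str], threshold: float) -> str:
    """Turn independent verdicts into one status using a share threshold."""
    if not verdicts:
        return "needs_human_review"
    counts = Counter(verdicts)
    if counts["supported"] / len(verdicts) >= threshold:
        return "verified"
    if counts["refuted"] / len(verdicts) >= threshold:
        return "disputed"
    return "needs_human_review"


def verify_output(
    output: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict[str, str]:
    """Check every claim with every verifier and return a per-claim report."""
    return {
        claim: aggregate([verify(claim) for verify in verifiers], threshold)
        for claim in split_into_claims(output)
    }


def lookup_verifier(known: Dict[str, str]) -> Verifier:
    """Toy verifier that only knows a fixed table of claims."""
    return lambda claim: known.get(claim, "uncertain")


facts = {
    "Paris is the capital of France": "supported",
    "The Moon is made of cheese": "refuted",
}
verifiers = [lookup_verifier(facts), lookup_verifier(facts), lookup_verifier({})]
report = verify_output(
    "Paris is the capital of France. The Moon is made of cheese. "
    "It will rain tomorrow.",
    verifiers,
)
for claim, status in report.items():
    print(f"{status:>18}  {claim}")
```

Raising `threshold` makes the app stricter: fewer claims pass as verified and more fall into human review, which is the tuning knob the paragraph above describes.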

The technical choices that actually decide whether it scales

A lot of projects can describe verification in a nice sentence, but the hard part is the engineering and the incentives that keep it stable when usage rises and attackers show up. One key choice is claim design itself. The smaller and clearer a claim is, the more consistent verification becomes, but if claims are too tiny or too many, the cost explodes, so the system has to balance granularity with efficiency. Another choice is verifier diversity. Consensus is only meaningful when the verifiers are not all behaving like copies of each other, otherwise you get a false sense of safety where everyone agrees and everyone is wrong the same way. Then there’s the question of latency and throughput. If verification becomes slow, developers will skip it in production workflows, so the system has to be optimized for parallel checking, fast aggregation, and predictable costs. Privacy is always sitting in the background too. The more sensitive the content, the more important it becomes to minimize exposure, reduce what any single verifier can see, and make sure the system isn’t quietly turning into a data vacuum. So the “quiet” technical decisions are really about shaping a verification pipeline that can be fast, affordable, difficult to game, and respectful of data boundaries.
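
The granularity and privacy trade-offs can be put into rough numbers. The sketch below is a toy model under my own assumptions, not a description of how Mira assigns work: each claim goes to `k` randomly chosen verifiers, cost is counted as the number of individual checks, and “exposure” is the largest fraction of claims that any single verifier gets to see. The names `assign_claims`, `total_checks`, and `max_exposure` are hypothetical.

```python
import random
from typing import Dict, List


def assign_claims(
    claims: List[str], verifier_ids: List[str], k: int, seed: int = 0
) -> Dict[str, List[str]]:
    """Give each claim to k distinct verifiers chosen at random."""
    if not 1 <= k <= len(verifier_ids):
        raise ValueError("k must be between 1 and the number of verifiers")
    rng = random.Random(seed)
    return {claim: rng.sample(verifier_ids, k) for claim in claims}


def total_checks(num_claims: int, k: int) -> int:
    """Cost driver: every claim is checked k times."""
    return num_claims * k


def max_exposure(assignment: Dict[str, List[str]], verifier_ids: List[str]) -> float:
    """Largest fraction of all claims that any single verifier sees."""
    if not assignment:
        return 0.0
    seen = {verifier: 0 for verifier in verifier_ids}
    for assigned in assignment.values():
        for verifier in assigned:
            seen[verifier] += 1
    return max(seen.values()) / len(assignment)


claims = [f"claim-{i}" for i in range(12)]
verifier_ids = [f"node-{i}" for i in range(6)]
assignment = assign_claims(claims, verifier_ids, k=3)

print("checks to pay for:", total_checks(len(claims), 3))
print("max share of claims seen by one node:", max_exposure(assignment, verifier_ids))
```

Splitting the same output into twice as many claims doubles `total_checks`, which is the cost pressure described above. Adding more verifiers while holding `k` fixed tends to lower `max_exposure`, since each node sees a smaller slice of the content.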

Why incentives matter, and what the token mechanism is really doing

Mira’s economic design is trying to answer a very human problem: if verification is valuable, people will try to fake it, so the system needs a way to reward careful work and punish lazy or dishonest participation. This is where staking and slashing come in. A verifier who has something at risk is less likely to spam random answers, and a verifier who consistently behaves badly should lose money or reputation, so the network learns and cleans itself over time. Many people talk about a token like it’s only a price chart, but in a protocol like this the token is supposed to function as a security deposit and a coordination tool, meaning the network uses economic pressure to keep the verification market honest. If the incentives are tuned well, they’re not forcing people to “be good,” they’re simply making it unprofitable to be sloppy. That is the point where trust becomes mechanical rather than emotional, because you’re no longer trusting someone’s promise, you’re trusting a system where bad behavior has a clear cost.
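
Here is a small sketch of what “making it unprofitable to be sloppy” can look like in code. The numbers and rules are assumptions I picked for illustration, not Mira’s published parameters: verifiers who match the consensus earn a flat reward, verifiers who don’t lose a fixed share of their stake (expressed in basis points), and `expected_payoff` shows the per-claim arithmetic for someone who just guesses.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class VerifierAccount:
    stake: int  # hypothetical smallest token units, kept as integers


def settle_round(
    accounts: Dict[str, VerifierAccount],
    verdicts: Dict[str, str],
    consensus: str,
    reward: int,
    slash_bps: int,
) -> None:
    """Reward verifiers who matched consensus, slash those who did not."""
    for name, verdict in verdicts.items():
        account = accounts[name]
        if verdict == consensus:
            account.stake += reward
        else:
            # slash_bps is in basis points: 1000 means 10% of current stake
            account.stake -= account.stake * slash_bps // 10_000


def expected_payoff(p_correct: float, reward: float, penalty: float) -> float:
    """Average gain per claim for a verifier who is right with p_correct."""
    return p_correct * reward - (1 - p_correct) * penalty


accounts = {name: VerifierAccount(stake=1000) for name in ("alice", "bob", "carol")}
settle_round(
    accounts,
    verdicts={"alice": "supported", "bob": "supported", "carol": "refuted"},
    consensus="supported",
    reward=5,
    slash_bps=1000,
)
print({name: account.stake for name, account in accounts.items()})
print("coin-flip verifier, per claim:", expected_payoff(0.5, reward=5, penalty=100))
```

With these made-up numbers, a verifier who flips a coin loses on average, while a careful one keeps collecting rewards. One caveat the sketch makes visible: slashing against consensus also punishes an honest verifier who happens to be right when the majority is wrong, which is part of why the tuning matters.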

The metrics that matter if you want to judge it like infrastructure

If I’m evaluating Mira as a serious trust layer, I’m not looking first at hype. I’m looking at whether verification actually improves outcomes in measurable ways and whether it stays usable as demand grows. The first thing is accuracy uplift compared to raw model outputs, meaning how often verified results avoid hallucinations that would otherwise slip through. Dispute rate matters too, because a healthy verification system should be willing to say “uncertain” instead of forcing a fake certainty. Then there’s latency, the time from submission to verified outcome, because real products need predictable speed. Cost per claim matters because if verification becomes too expensive, only a few high-margin applications will use it and it won’t become default behavior. Throughput matters because serious adoption means high-volume checking. Decentralization metrics matter because a verification network controlled by a small cluster is easier to capture, so you want to watch verifier distribution, stake concentration, and whether participation grows beyond a narrow circle. Adoption signals are the biggest proof of all, meaning whether real apps integrate verification in the flow where decisions happen. And if it ever becomes relevant to mention Binance, it should be only as a visibility point, because the real story is not where something trades, it’s whether the verification layer becomes a habit inside products.
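
A few of these metrics are easy to compute once you have the raw logs. The sketch below shows one way to do it, with hypothetical function names and made-up sample data. The definitions are my own simplifications: dispute rate as the share of claims that did not come back “verified,” latency as a nearest-rank percentile, and stake concentration as the smallest number of verifiers that together hold more than half the stake.

```python
import math
from typing import List


def dispute_rate(statuses: List[str]) -> float:
    """Share of claims that did not come back as 'verified'."""
    return sum(status != "verified" for status in statuses) / len(statuses)


def error_reduction(raw_errors: int, verified_errors: int, total: int) -> float:
    """Drop in error rate after verification, as a fraction of all outputs."""
    return (raw_errors - verified_errors) / total


def latency_percentile(latencies_ms: List[float], pct: float) -> float:
    """Nearest-rank percentile of submission-to-result latency."""
    ordered = sorted(latencies_ms)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def min_nodes_for_majority(stakes: List[int], share: float = 0.5) -> int:
    """Smallest number of verifiers whose combined stake exceeds `share`."""
    total = sum(stakes)
    running = 0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > share * total:
            return count
    return len(stakes)


statuses = ["verified", "verified", "disputed", "needs_human_review"]
latencies_ms = [120, 80, 200, 95, 150]
stakes = [50, 30, 10, 10]

print("dispute rate:", dispute_rate(statuses))
print("error reduction:", error_reduction(raw_errors=20, verified_errors=5, total=100))
print("p95 latency (ms):", latency_percentile(latencies_ms, 95))
print("nodes needed for majority stake:", min_nodes_for_majority(stakes))
```

A low value from `min_nodes_for_majority` is the warning sign: if two or three verifiers can outvote everyone else, the network is decentralized in name only.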

The risks and open questions that still have teeth

No matter how clean the idea is, the world has sharp edges, and Mira’s biggest risks are the kind that arrive when the network becomes valuable. Collusion is always possible if enough verifiers coordinate, and even without explicit collusion, correlated models can create consensus that looks strong but is actually brittle. Ambiguity is a real challenge too, because some claims are time-sensitive or context-dependent, and a system that pretends everything is binary can end up certifying the wrong kind of certainty. Privacy pressure will increase as people try to verify more sensitive content, so data minimization has to stay a core principle rather than an afterthought. Then there is the social risk of what “verified” means. Users may treat a verified label like a guarantee, but verification is still a process of agreement under constraints, not a divine stamp, so the network has to communicate uncertainty clearly, handle disputes honestly, and evolve fast when edge cases appear. If it becomes slow, expensive, or confusing, the world will route around it, because people only adopt safety when it fits into real workflows.
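
The point about correlated models can be shown with a little arithmetic. The sketch below is a simplified model and not a measurement of any real network: it assumes every verifier gives a yes/no verdict, has the same error rate `e`, and that the number of verifiers is odd so there are no ties. If errors are fully independent, a majority vote is wrong only when more than half the verifiers err at once. If the verifiers are effectively copies of one model, the majority is wrong exactly as often as that one model.

```python
from math import comb


def majority_error_independent(n: int, e: float) -> float:
    """Probability that a majority of n independent verifiers is wrong."""
    needed = n // 2 + 1  # votes required for a wrong majority (n odd)
    return sum(
        comb(n, k) * e**k * (1 - e) ** (n - k) for k in range(needed, n + 1)
    )


def majority_error_fully_correlated(e: float) -> float:
    """If all verifiers fail together, the vote is no better than one model."""
    return e


for n in (3, 5, 9):
    independent = majority_error_independent(n, 0.1)
    correlated = majority_error_fully_correlated(0.1)
    print(f"n={n}: independent={independent:.5f}  correlated={correlated:.5f}")
```

Under these assumptions, adding independent verifiers drives the error down quickly, while adding correlated ones changes nothing. Real networks sit somewhere between the two extremes, which is why verifier diversity is worth measuring rather than assuming.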

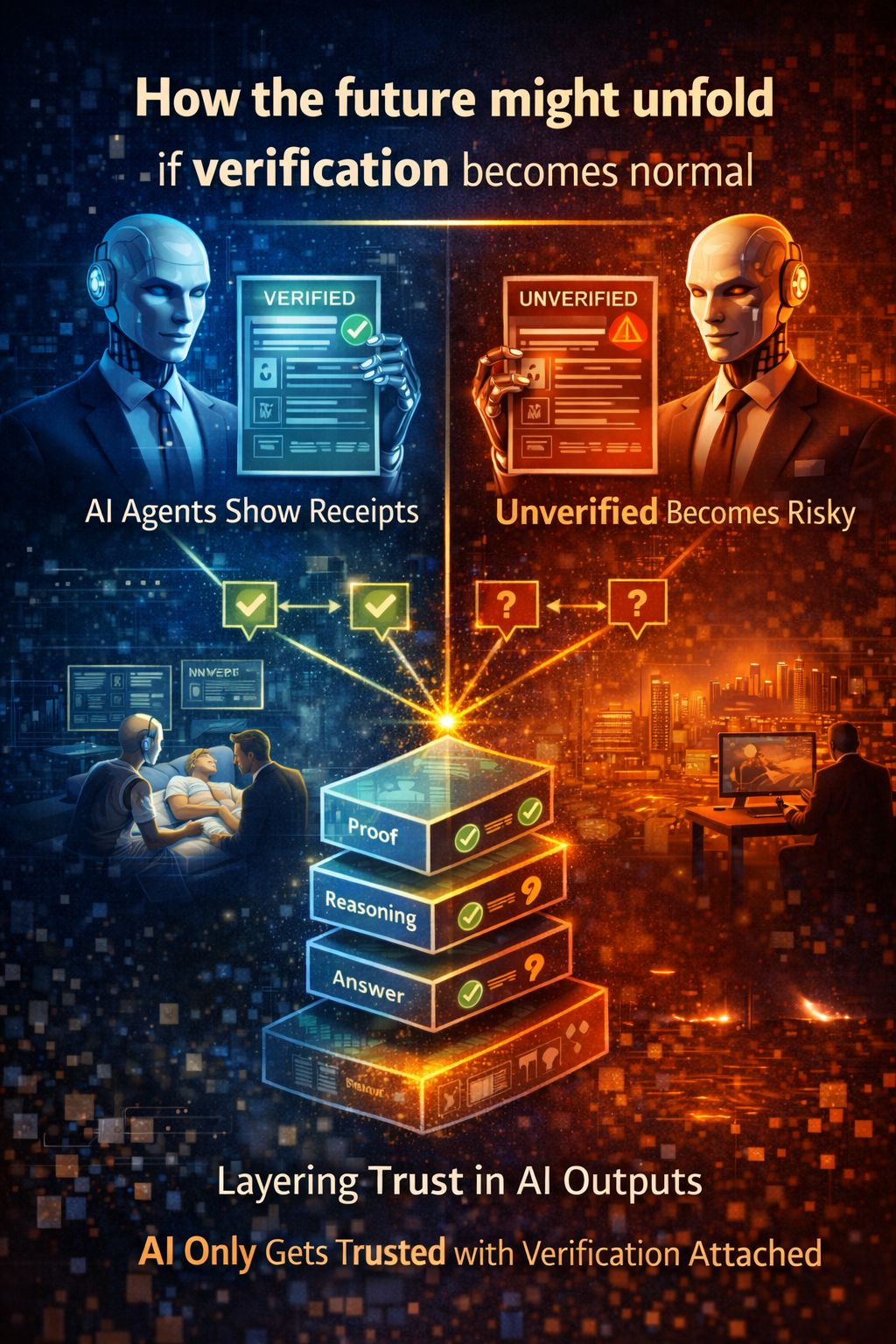

How the future might unfold if verification becomes normal

The future I can imagine is not one where AI suddenly stops making mistakes, it’s one where mistakes stop slipping through unnoticed. We’re seeing the early shape of a world where AI outputs get treated like drafts until they pass a verification step. Once that mindset spreads, unverified answers start to feel like driving without a seatbelt: not illegal, but careless for anything that matters. If Mira and systems like it scale, verification can move from simple factual checks into deeper checks of reasoning chains and action plans for agents, where the question becomes not only “is this claim true” but “is this decision path defensible.” That would be a cultural shift as much as a technical one, because it changes what people expect from AI. It becomes possible for autonomous systems to take on more responsibility without forcing humans into endless manual review, because the network acts as a neutral sanity layer that catches the confident nonsense before it turns into real damage.

In the end, what I like about the Mira direction is that it respects the reality of AI, that models can be powerful and still unreliable. It tries to build a bridge from raw intelligence to earned trust, not through marketing, but through repeatable verification and incentives that make honesty the easiest path. If that bridge holds, the future won’t feel like humans constantly arguing with machines. It will feel like humans finally have a system that helps them trust the parts that deserve trust, question the parts that don’t, and move forward with a little more calm and a little less fear.